How to turn service catalog mapping into operational calm: a practical playbook for shift-based mobility

Dispatch teams live this reality: GPS glitches, driver shortages, and weather or traffic disruptions are part of the shift. When things go wrong, the operations command center must stay steady without finger-pointing or piling on new tools. This framework groups the most consequential questions into actionable lenses that translate SLA noise into repeatable, on-ground SOPs. It’s designed to give you clear escalation paths, audit-ready evidence, and frontline procedures you can actually execute in a 5-minute window during peak shifts.

Is your operation showing these patterns?

- Escalations spike at odd hours with no clear owner

- Vendors blame each other over blended SLAs

- No-shows and last-minute roster changes overwhelm dispatch

- Audits uncover patchy evidence trails and inconsistent SLA proof

- Control-room spends time reconciling dashboards instead of solving issues

- Drivers report fatigue or morale dips even when OTP appears compliant

Operational Framework & FAQ

Definition, scope and KPI design by service line

Clarifies what to map, why KPIs differ by line, and how to avoid one-size-fits-all SLAs; sets the foundation for auditable outcomes across EMS, CRD, ECS, and LTR.

What exactly is “service catalog mapping” in corporate mobility, and how is it different from a simple list of cab types and prices?

B0192 Define service catalog mapping — In India corporate ground transportation, what does “service catalog mapping” mean for employee mobility services (EMS) and corporate car rental (CRD), and how is it different from just listing vehicle types and rate cards?

Service catalog mapping in Indian corporate ground transportation means defining exactly which mobility services, entitlements, and SLAs each employee or use‑case is allowed to use, and then wiring those rules into operations, technology, and commercials. A simple list of vehicle types and rate cards is only a price sheet, while a mapped service catalog ties those vehicles and prices to policies, personas, timebands, and governance.

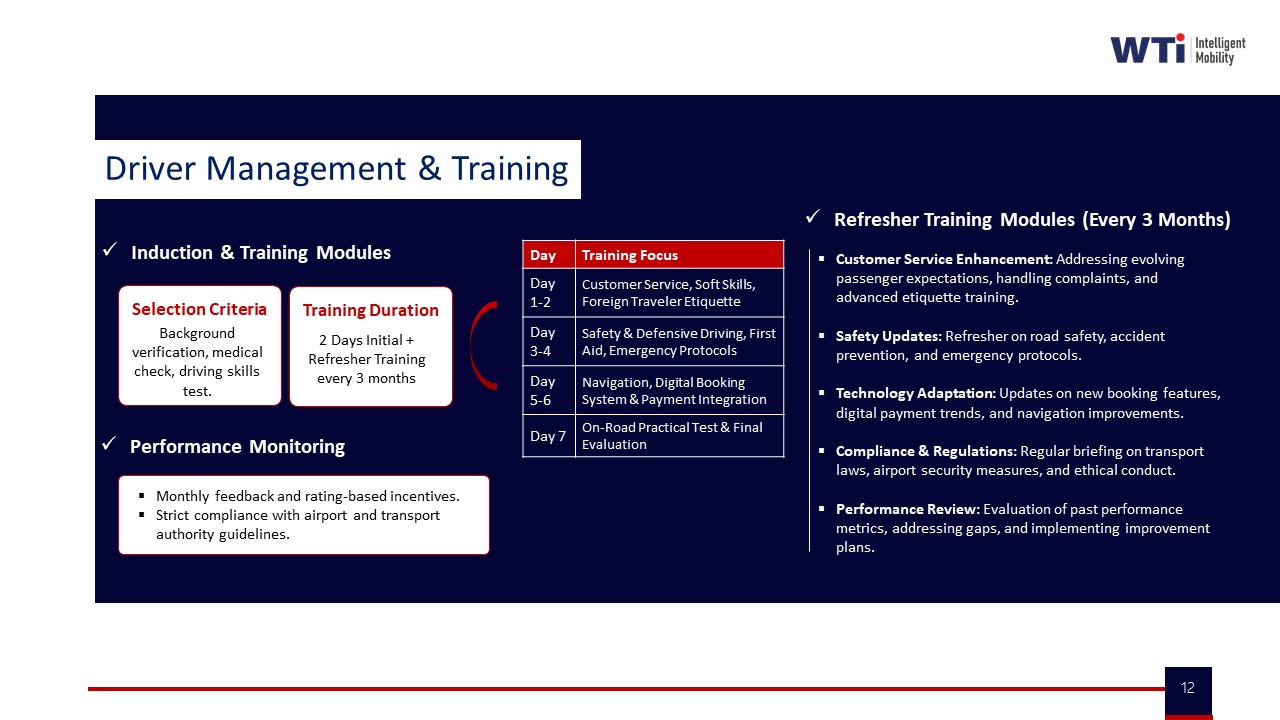

In employee mobility services, service catalog mapping links shift windows, routing rules, women-safety protocols, and escort policies to specific service lines such as home–office shuttles, pooled cabs, or night-shift drops. Each service line carries defined SLAs for on-time performance, safety controls like SOS and geo-fencing, and compliance expectations for driver KYC and vehicle fitness, which are then enforced through the routing engine, HRMS integration, and the command center.

In corporate car rental, service catalog mapping separates use-cases such as airport transfers, intra-city trips, intercity movements, and long-term rentals, and assigns who can request which category, what class of vehicle is permitted, and which approval and billing path applies. This structure enables outcome-linked procurement, centralized booking and spend control, and consistent audit trails, instead of ad-hoc usage driven only by which car is available at which tariff.

A pure vehicle-type and rate-card list ignores employee personas, policy tiers, safety requirements, hybrid-work patterns, and outcome KPIs such as cost per employee trip, seat-fill, and trip adherence rate. Service catalog mapping connects all of these dimensions so that EMS and CRD run as governed programs with predictable reliability, cost, safety, and ESG performance, rather than as a loose collection of cars and prices.

For shift commute, why can’t we use one common SLA for all trips—why do we need different outcomes and KPIs by service line?

B0193 Why KPIs differ by line — In India employee mobility services (shift-based EMS), why do buyers map outcomes and KPIs separately for each service line (day shift, night shift, women-safety rides, pooled routes) instead of using one common SLA for all employee trips?

In shift-based employee mobility services in India, buyers separate outcomes and KPIs by service line because risk, cost, and operational complexity are fundamentally different for day shift, night shift, women-safety rides, and pooled routes. A single, averaged SLA hides these differences, makes audits weak, and leaves HR, Transport, Security, and Finance exposed when an incident or cost overrun occurs.

Day and night operations behave like different products. Night shifts face higher safety risk, escort and route-approval requirements, and tighter scrutiny from HR, Security, and regulators. Buyers therefore track separate on-time performance, incident rates, and escort compliance for night trips. Day shifts are usually higher volume and cost-sensitive, so KPIs focus more on seat-fill, dead mileage, and cost per employee trip to drive utilization and TCO.

Women-safety rides carry disproportionate reputational and legal exposure. Organizations need distinct KPIs for female-first policies, route audits, SOS performance, driver KYC/PSV freshness, and escort adherence. If these are blended into a generic SLA, a single lapse can still look “within threshold” on paper, while HR and Security have no defensible evidence for boards or investigators.

Pooled and non-pooled routes also have different economics and reliability patterns. Pooled routes are measured on trip fill ratio, routing efficiency, and dead-mile caps. Point-to-point or ad-hoc trips are measured on response time and OTP. If buyers do not isolate KPIs, they cannot see whether optimization engines and pooling policies are genuinely reducing CPK and CET or just shifting pain into cancellations and late logins.

Separate KPI maps allow outcome-linked procurement. Contracts can index payouts and penalties to service-specific metrics such as night-shift OTP, women-safety incident rate, pooled-seat utilization, and exception-closure time instead of a blunt, system-level SLA. This reduces disputes with vendors and gives Finance and Procurement traceable logic between invoices, SLAs, and actual trip logs.

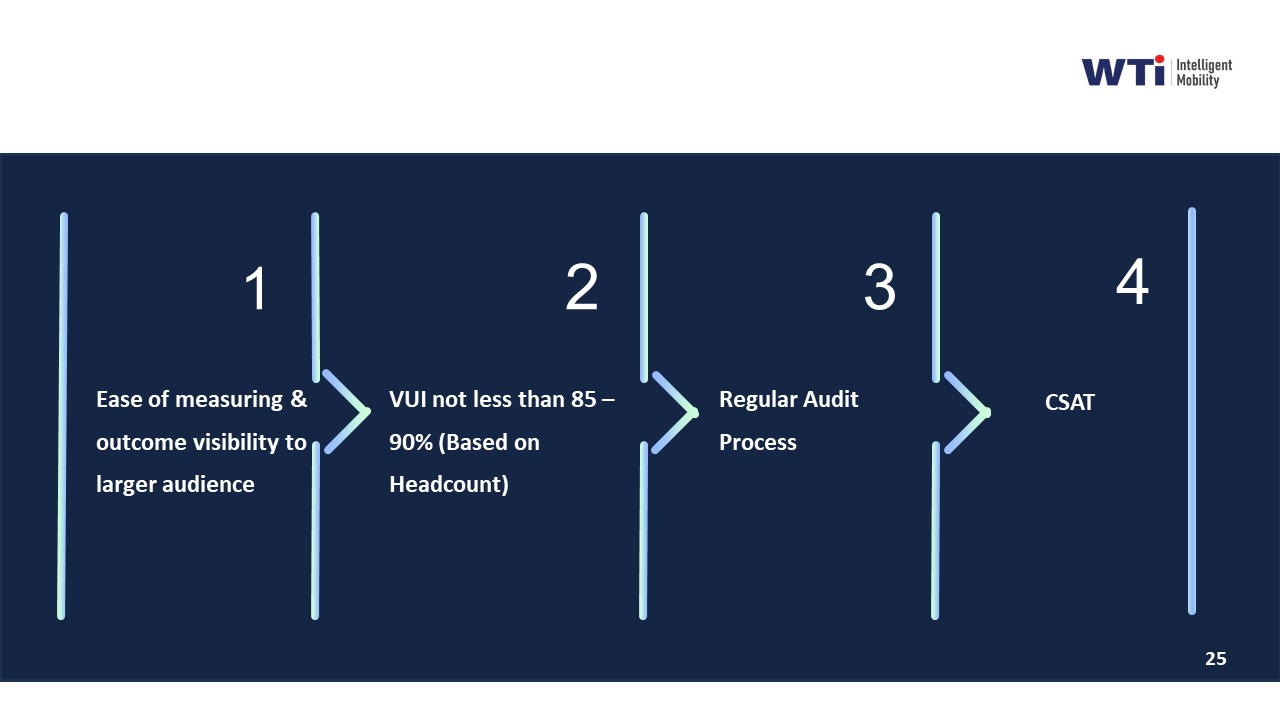

For Transport Heads and Command Centers, service-line-specific KPIs translate into clearer playbooks. Dispatch rules, buffer fleets, routing strategies, and escalation matrices differ by timeband, gender mix, and pooling model. Without separate KPIs, command centers cannot tune routing engines, capacity buffers, or EV/ICE fleet mix by shift window or risk profile.

A common SLA is therefore treated as a governance summary metric, while service-line KPIs are the real control levers. Buyers rely on granular, service-specific KPIs to meet regulatory expectations, protect against greenwashing in ESG disclosures, and maintain continuous assurance on safety, compliance, and cost at the same time.

For airport and intercity corporate trips, what do we usually map in service catalog mapping—trip types, SLAs, exceptions, billing—what’s in scope?

B0194 What gets mapped in CRD — In India corporate car rental (airport and intercity CRD), how does service catalog mapping typically work at a high level—what is being mapped (trip types, approval flows, SLAs, exception categories, billing rules) to define measurable outcomes?

In India corporate car rental for airport and intercity (CRD), service catalog mapping typically links each trip type to defined approval flows, SLA metrics, exception categories, and billing rules so outcomes like on‑time performance, cost per trip, and incident rates can be measured and governed. The catalog behaves like a controlled menu of “what is allowed, under which conditions, at what service standard, and at what commercial logic.”

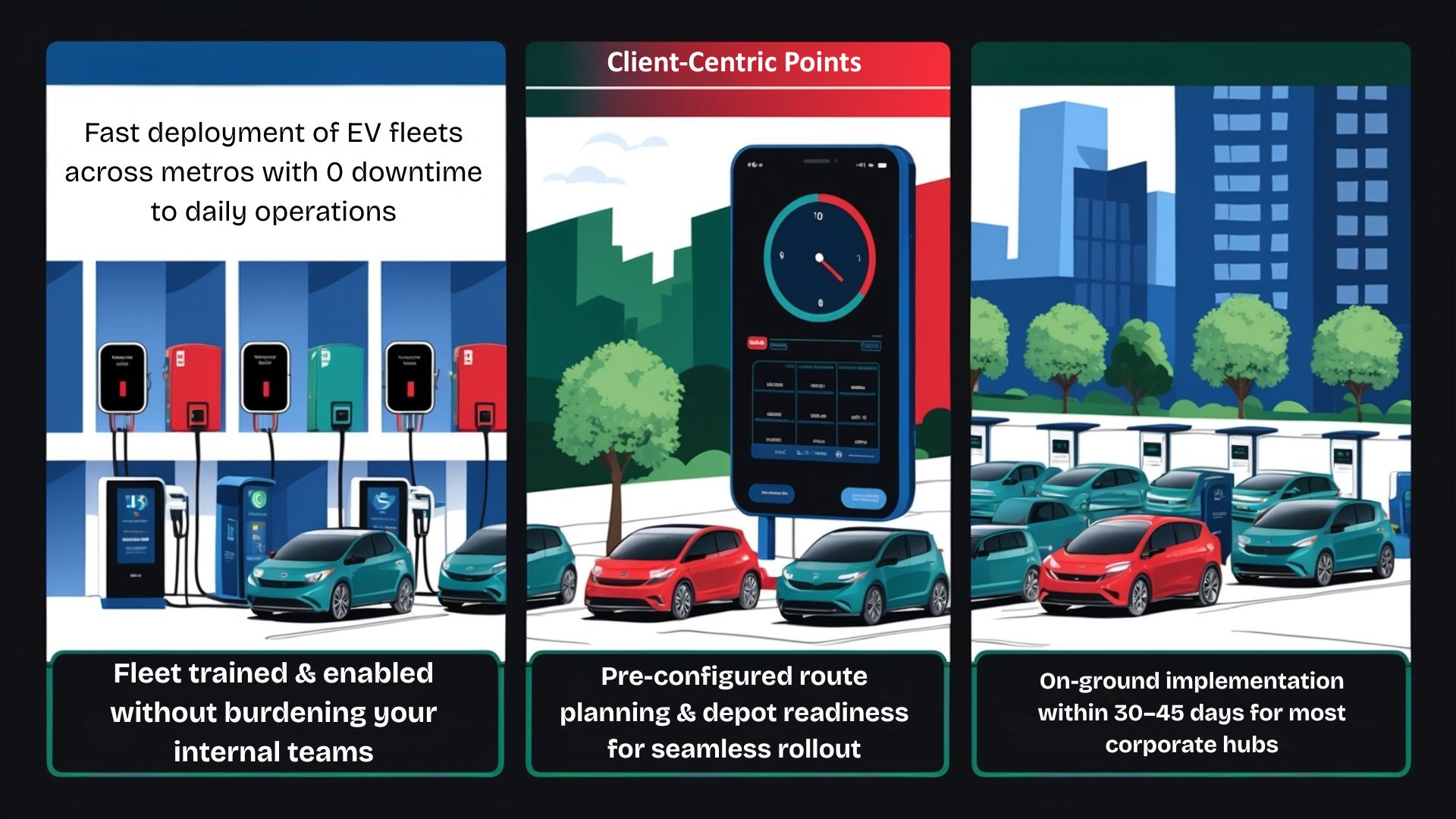

At a high level, organizations first segment CRD use cases into clear trip types. These typically include airport transfers, intra-city point‑to‑point, hourly packages, intercity one‑way and round‑trip, event or VIP movements, and project or long‑duration disposals, as shown in the collateral on corporate car rental and related service overviews. Each trip type is then mapped to allowed vehicle classes, operating models such as point‑to‑point or garage‑to‑garage, and whether bookings are ad‑hoc or pre‑scheduled.

For each mapped trip type, a standard approval flow is defined. This usually specifies who can initiate the request, which manager or travel desk approves, and any policy constraints such as advance‑booking cut‑offs or entitlement tiers. Admin and transportation apps, partner portals, and centralized booking tools reflect this by enforcing manager approvals, cutoff configurations, and role‑based access for employees, admins, and travel agents.

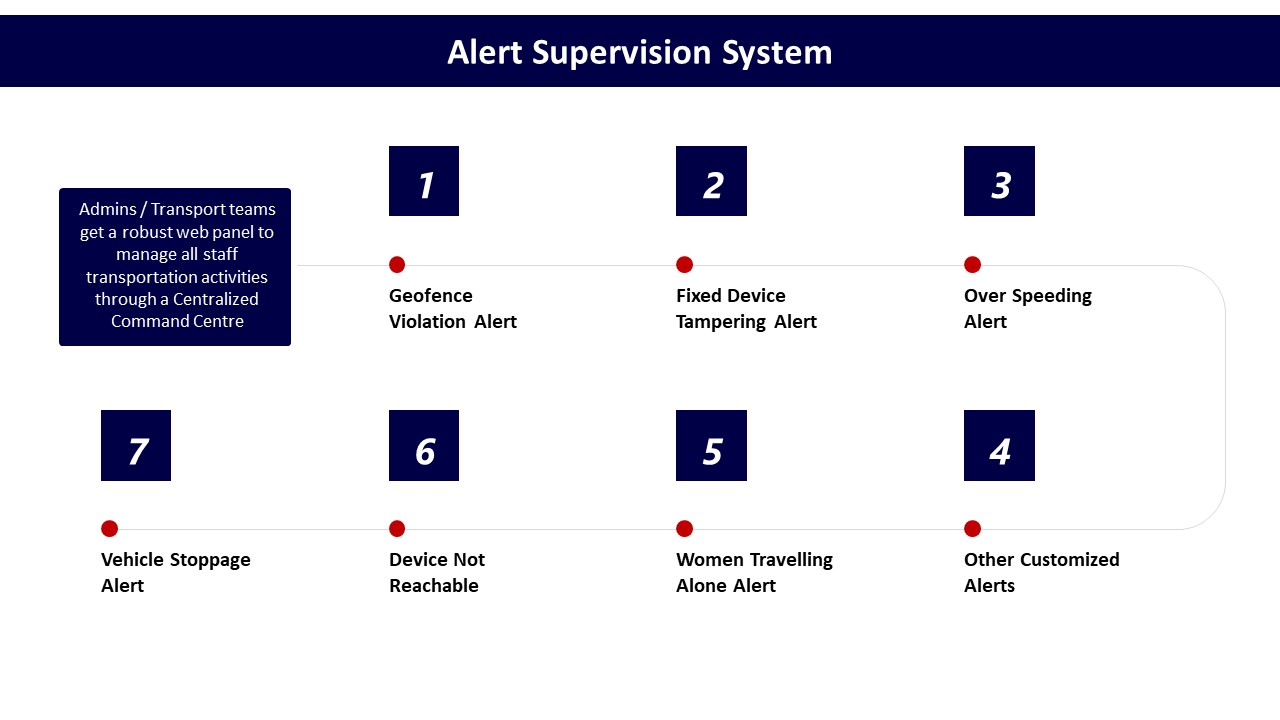

SLA mapping focuses on response times, punctuality, and service quality. For airport CRD, SLAs are often tied to flight tracking, vehicle reporting before pickup time, and waiting time thresholds. For intercity, SLAs cover vehicle age and fitness, chauffeur compliance, route adherence, rest‑break norms, and real‑time tracking availability. These commitments are captured into dashboards, alert supervision systems, and command‑center views so OTP, trip adherence, geofence violations, over‑speeding, and safety events can be monitored in real time.

Exceptions are categorized and mapped to both operational and commercial handling rules. Common exception categories include no‑shows, last‑minute cancellations, schedule changes, app or GPS failures, vehicle breakdowns, and safety incidents. For each category, there is a defined SOP for escalation, communication, and whether charges apply. Collateral on business continuity plans, escalation matrices, and on‑time delivery management shows how these exception categories are operationalized through buffer vehicles, backup systems, and pre‑agreed mitigation steps.

Billing rules are tightly bound to the catalog. For every trip type and operating model, the catalog specifies the billing model (per km, hourly package, trip‑based, FTE, pay‑per‑usage, or monthly rental), inclusions (fuel, toll, parking, driver charges), minimum billing slabs, and out‑of‑scope charges such as overnight halt, extra hours, or extra kilometers. Centralized billing systems then map trip logs and GPS data to these rules via tariff mapping, automated tax calculations, and reconciliation workflows, as illustrated in the billing process and features collateral.

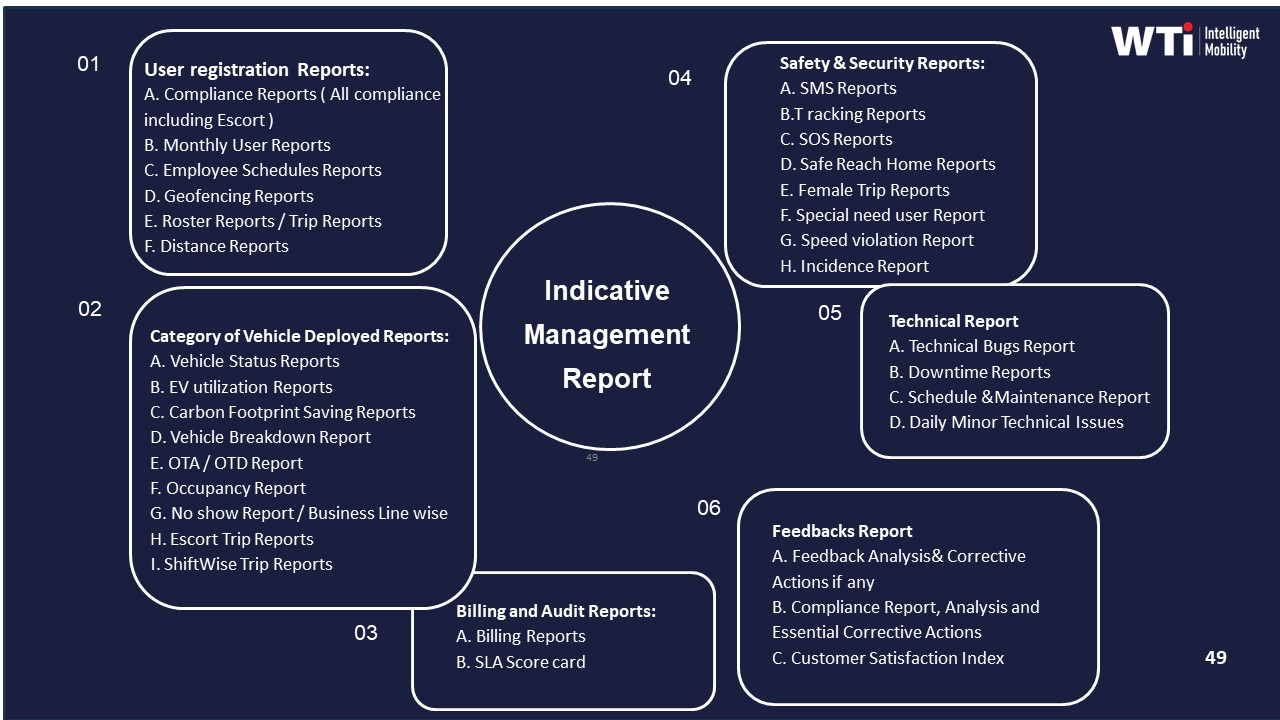

Measurable outcomes emerge because each catalog entry carries its own KPI stack. For an airport transfer SKU, for example, the organization can track OTP%, complaint rates, and average cost per trip against a known SLA and commercial pattern. For intercity disposals, managers can monitor vehicle utilization, dead mileage, and incident rates relative to the contracted package model. Data‑driven insights dashboards and indicative management reports then aggregate this, enabling procurement, finance, and operations to benchmark vendors, enforce penalties, and optimize fleet mix or package selection.

In mature setups, the same catalog mapping is also linked to compliance and safety frameworks. Vehicle and driver compliance status, women‑safety protocols, and HSSE responsibilities by role are attached to service types and timebands. This allows safety and EHS teams to test whether night airport runs or late‑evening intercity arrivals are always serviced by credentialed drivers, compliant vehicles, and appropriate escort or SOS coverage, with trip and incident evidence centrally logged.

Overall, high‑level service catalog mapping in CRD creates a structured grid where trip archetypes, approval flows, SLAs, exceptions, and billing logic are pre‑defined and technology‑enforced. This reduces ad‑hoc decisions by control‑room teams, simplifies billing and audit, and enables consistent measurement of reliability, safety, and total cost across airport and intercity corporate car rental.

How can HR tell if our commute issues are because outcomes/KPIs aren’t defined well versus the vendor just executing badly?

B0195 Diagnose KPI vs execution gap — In India enterprise-managed employee transport (EMS), how can an HR head diagnose whether the current problem is “unclear outcome definition” versus “vendor execution,” using symptoms like repeated escalations, SLA disputes, and inconsistent on-time performance reporting?

In enterprise-managed employee transport, repeated escalations and SLA disputes usually have two different roots. Vague, shifting expectations point to unclear outcome definition. Clear written expectations with consistent gaps in field execution point to vendor execution failure. HR can separate the two by mapping what is written, what is measured, and what actually happens in daily operations.

If outcome definition is weak, HR teams usually find that there is no single, signed document that defines on-time performance, safety duties, women’s night-shift rules, and escalation SLAs in measurable terms. Different stakeholders often quote different OTP baselines, grace periods, or escort rules. Reports from the command center, the vendor, and internal MIS rarely align on the same KPI definitions, and Procurement cannot easily link SLAs to billing, penalties, or incentive logic.

If vendor execution is weak, the contract and SOPs are usually quite clear, but route adherence, driver compliance, or fleet uptime are inconsistent across sites or time-bands. Transport or facility heads see patterns like good OTP on day shifts but chronic slippage on night or monsoon shifts, despite stable definitions. The command center logs show repeated geofence violations, driver fatigue breaches, or unclosed incidents, even though the safety and compliance rules are well defined.

HR can run a basic diagnostic by checking three things in a short review:

- Documents: Are OTP, safety, women’s safety, and escalation targets written in specific numbers and time-bands?

- Data: Do all parties use one canonical report for OTP, incidents, and SLA breaches, or are there multiple, conflicting versions?

- Behavior: When challenged, does the vendor say “this was never agreed” or “we could not execute as agreed”?

If definitions and data are fragmented, HR’s first move is to clarify outcomes and standardize KPI definitions with Procurement, Transport, and the vendor. If definitions and data are clear, HR’s focus should shift to enforcing vendor governance, strengthening command-center monitoring, and using audit trails, route adherence checks, and incident logs to hold execution accountable.

How do we set different outcomes/KPIs for executives, big shift cohorts, and pooled shuttles—without ending up with a messy SLA matrix?

B0196 Avoid an unmanageable SLA matrix — In India shift-based employee mobility services (EMS), what are the practical steps to map outcomes and KPIs differently for executives, large shift populations, and pooled shuttles serving campuses or business parks without creating an unmanageable SLA matrix?

In India shift-based employee mobility, organizations can map outcomes and KPIs differently by defining 2–3 outcome “profiles” (executive, large-shift EMS, pooled shuttle) and then mapping most sites and routes to one of these profiles instead of writing bespoke SLAs for every lane. Each profile uses a small, stable KPI set tuned to the service intent, while sharing a common data and reporting spine from the centralized command center to avoid matrix explosion.

For executives using corporate car rental–style EMS, the KPI profile prioritizes punctuality and service consistency. Transport teams typically anchor on on-time performance for pick-up and airport/intercity drops, vehicle standard and chauffeur compliance, and incident-free trips. Trip-level analytics and CRD-style dashboards support Finance and Admin with response-time SLAs, clean invoicing, and audit-ready duty slips without adding separate tools.

For large shift populations in classic EMS, organizations move to a volume and reliability profile. The command center tracks on-time performance at shift start, trip adherence rate against planned routes, seat-fill or trip fill ratio to control cost per employee trip, and exception detection-to-closure time for safety or GPS incidents. Safety and compliance indicators such as escort compliance, women-first night routing, and credential currency sit in the same SLA bundle, surfaced through centralized compliance management and real-time alerts rather than separate SLAs per route. Data-driven insights and single-window dashboards help transport heads see OTP, dead mileage, and no-shows without manual collation.

For pooled shuttles serving campuses or business parks, the profile shifts to throughput and experience. KPIs focus on headway adherence, capacity utilization across timebands, dwell times at key stops, and emission intensity per trip where EV fleets are in use. In EV-enabled campuses, organizations may add EV utilization ratio and CO₂ reduction per kilometer using measurable sustainability dashboards, aligning with ESG reporting without rewriting operational SLAs. Pooled services still use the same routing and tracking stack, but apply community metrics at route or corridor level rather than employee level.

To keep the SLA matrix manageable, transport heads usually standardize three elements. First, they define a core KPI core (OTP, safety incidents, incident closure time, and cost per trip) that applies across all profiles. Second, they add only one or two profile-specific KPIs per archetype, such as executive experience scores for leadership cars or seat-fill and emission indices for pooled shuttles. Third, they implement a single observability and reporting layer, using dashboards and indicative management reports that slice the same data by persona, route type, or site instead of multiplying distinct SLA templates. This lets command centers run unified SOPs for alerts, escalation, and business continuity plans while still giving HR, Finance, and ESG leads the differentiated metrics they need.

In an RFP, how do we make vendors commit to service-line outcomes instead of giving generic ‘we meet SLAs’ promises?

B0197 RFP structure for mapped outcomes — In India corporate ground transportation sourcing, how should Procurement structure an RFP so that service catalog mapping forces vendors to commit to service-line outcomes (EMS shift routes vs airport trips vs event transport) rather than hiding behind generic “we meet SLAs” language?

Procurement should treat the service catalog as the backbone of the RFP and require vendors to price and commit at the service-line level for each mobility vertical, not at a generic “transport services” level.

The RFP should first define the four core service verticals explicitly as separate lots or sections. These are Employee Mobility Services for shift-aligned routes, Corporate Car Rental Services for intra-city, intercity and airport movements, Project/Event Commute Services for temporary high-volume needs, and Long-Term Rental for 6–36 month dedicated vehicles. Each vertical should have its own scope, KPIs, and commercial model so that vendors must respond with service-specific capabilities, playbooks, and SLAs.

A common failure mode is an RFP that bundles EMS, CRD, ECS, and LTR under a single SLA and rate card. Vendors then default to vague “95% SLA” claims without proving how they handle shift windowing, night safety, airport delay handling, or rapid event fleet ramp-up. Procurement can avoid this by demanding outcome-linked KPIs per vertical, such as OTP% and Trip Adherence Rate for EMS, response-time SLAs for CRD, scale-up timelines for ECS, and uptime targets for LTR.

To make vendors commit, the RFP should ask for a structured response table for each service line. The table should map use cases to operational methods, outcome KPIs, and penalties or earnbacks. The RFP should also require vertical-specific case evidence, such as zero-incident programs for women’s night shifts, EV uptime parity for fixed fleets, or high on-time arrival rates during adverse weather, to separate generic claims from proven execution.

What are the usual HR vs Finance fights when setting EMS KPIs, and how can service catalog mapping reduce the back-and-forth?

B0198 Resolve HR–Finance KPI conflict — In India employee mobility services (EMS), what are the most common conflicts between HR (employee experience and duty of care) and Finance (cost per trip and audit defensibility) when defining service-line KPIs, and how can service catalog mapping reduce the political back-and-forth?

In India employee mobility services, the most common HR–Finance conflict arises when HR optimizes for safety, reliability, and employee experience, while Finance optimizes for cost per trip, cost per km, and audit defensibility. HR pushes for high on-time performance, women-safety overlays, and flexible capacity to avoid escalations, but Finance resists when these choices inflate unit costs, add “exceptions,” or create opaque billing logic.

HR typically wants KPIs centered on commute experience and duty of care. HR focuses on OTP%, safety incidents, women-safety compliance, complaint closure SLAs, and impact on attendance and attrition. Finance typically wants KPIs centered on TCO and traceability. Finance focuses on cost per employee trip, cost per km, dead mileage, utilization, and SLA-to-invoice reconciliation. A frequent failure mode is defining KPIs at a generic “transport” level, which forces every service line to be judged on both maximum EX and minimum cost simultaneously, creating recurring political debates during renewals and audits.

Service catalog mapping reduces this back-and-forth by explicitly segmenting EMS into distinct service lines with different KPI stacks and commercial expectations. A structured catalog separates, for example, standard pooled shifts, women-only or night-shift routes, and premium executive or critical-ops transport, and assigns different reliability, safety, and cost baselines to each. This lets HR justify higher-cost, higher-protection SKUs as policy-driven choices, while Finance benchmarks each SKU on the appropriate economics and audit metrics.

A practical pattern is to define for each catalog item: the target personas and policy entitlements, primary KPIs (EX/safety-heavy vs cost/utilization-heavy), accepted cost envelope and commercial model, and evidence requirements for audit and ESG reporting. Once these mappings are codified and approved, routine decisions move from ad-hoc negotiation to policy execution, and quarterly reviews shift from “who is right, HR or Finance?” to “is each service line hitting its agreed KPI band?”.

For exec car rental, how do we define outcomes like punctuality and vehicle standards so it feels premium but is still enforceable?

B0199 Executive CRD outcomes that enforce — In India corporate car rental (CRD) for executives, how should an Admin or Travel Desk define persona-specific outcomes (punctuality, vehicle standardization, driver behavior, airport delay handling) so the service feels “consistent” without making the SLA impossible to enforce?

In corporate car rental for executives, Admin or Travel Desk should convert “consistent service” into a small set of persona-specific, observable outcomes with clear measurement rules and realistic buffers. These outcomes should be framed as objective behaviors on each trip rather than subjective satisfaction, and they should be tied to data that the operator and client can both see in the booking and trip logs.

Admin or Travel Desk leaders usually care most about predictable arrival times, standard vehicle category, and executive comfort. They can define punctuality as a specific window around scheduled pickup, such as “on-time” being arrival 10–15 minutes before pickup for airports and 5–10 minutes for city trips. They should measure this on GPS and trip timestamps, and they should separate controllable delays from force‑majeure events like sudden road closures.

Executives expect a familiar experience regardless of city or vendor. Admin or Travel Desk can set vehicle standardization as a defined list of acceptable models per segment, with visible checks on age, fitness, and amenities captured through centralized fleet compliance records. They can also require that any substitution stays within the same or higher segment and is pre‑communicated to the executive.

Driver behavior strongly shapes perceived consistency. Admin or Travel Desk should define it through simple, auditable rules like no smoking, appropriate dress code, courteous conduct, and adherence to safe driving norms, with behavior issues recorded via post‑trip feedback in the app and driver compliance logs. A minimum driver rating threshold and a documented training and induction process support these expectations without turning them into vague obligations.

Airport delay handling can be defined as a specific SLA around flight tracking and wait-time logic. For example, the operator must track flights and adjust pickup times for delays, with a defined free waiting period after actual landing. These rules should be reflected in the booking platform and billing so they are enforceable yet fair to both sides.

To avoid making SLAs impossible to enforce, Admin or Travel Desk should focus on a small core set of KPIs that can be directly tied to data: on-time performance, vehicle compliance rate, driver rating, and SLA adherence for airport and intercity trips. They should also include clear exception categories, such as extreme weather or political disruptions, linked to business continuity plans and command center escalation procedures. Balanced penalty and incentive structures based on these KPIs keep the operator focused on consistency without driving gaming behavior or unrealistic promises.

For event/project transport, what KPI mistakes lead to nonstop firefighting, and how do we write ECS outcomes that reduce 3 a.m. escalations?

B0200 ECS KPIs that reduce firefighting — In India project/event commute services (ECS), what outcome definition mistakes cause “time-bound delivery pressure” to turn into constant on-ground firefighting, and how should ECS-specific KPIs be written to protect Operations from 3 a.m. escalations?

In India project and event commute services, “time-bound delivery pressure” turns into constant firefighting when outcomes are defined only as “buses on time” in generic SLAs and not as a combination of OTP, routing, capacity buffers, and control-desk behavior that reflects real ECS conditions. ECS-specific KPIs need to explicitly encode peak-load realities, rapid scale-up/scale-down behavior, exception-closure speeds, and on-ground supervision so that Operations can point to clear thresholds and not absorb endless 3 a.m. blame.

A common failure mode in ECS is copying Employee Mobility Services or Corporate Car Rental KPIs. ECS has rapid fleet mobilization, temporary routing, and zero-tolerance for project delays. If contracts only mention high-level OTP or cost per km, then last-minute headcount swings, weather, or political disruptions create exceptions with no agreed buffer, so every deviation becomes a “failure” for the transport head. Another frequent mistake is ignoring the role of dedicated project/event control desks, which the context identifies as essential for on-ground control and live coordination.

ECS KPIs should separate “normal-conditions performance” from “disruption-handling performance.” They should define what is guaranteed under standard assumptions about demand, route lock-in, and access conditions, and what triggers Business Continuity Plan playbooks and different expectations. Operations protection comes from three KPI groups:

- Reliability KPIs for time-bound movement. These include on-time performance windows for first pickups and last drops, trip adherence rate for temporary routes, and exception-detection-to-closure times during project critical windows.

- Capacity and flexibility KPIs for scale-up/scale-down. These define response time for additional vehicles, maximum allowed variance between forecast and actual volumes before SLAs are recalibrated, and utilization thresholds for temporary routing and crowd movement planning.

- Governance and visibility KPIs for control desks. These cover live coordination metrics like control-desk uptime, escalation matrix adherence, and reporting cadence on deviations during events.

When KPIs for ECS explicitly articulate these reliability, flexibility, and governance dimensions, Operations can manage rapid, high-volume, time-bound programs with clear rules of engagement. This reduces uncontrolled obligation creep and protects on-ground teams from constant, undefined 3 a.m. escalations driven by unrealistic or generic outcome definitions.

For pooled shuttles on campus, what outcomes should we set—seat-fill, wait time, loop adherence—so employees don’t feel it’s a downgrade?

B0201 Fair outcomes for pooled shuttles — In India enterprise employee mobility (EMS), how should a Facility/Transport Head define outcome KPIs for pooled shuttles in campuses or business parks (seat-fill, wait time, adherence to fixed loops) so employees don’t feel “second class” versus point-to-point cabs?

A Facility or Transport Head should define pooled shuttle KPIs so that reliability, access time, and comfort are clearly comparable to point-to-point cabs, then track them with the same command-center rigor used for cabs. Outcome KPIs must focus on on-time performance, wait time at stops, seat availability, and audit-ready safety, because these are the dimensions employees use to judge whether shuttles feel “second class.”

For campus or business-park loops, a central command center and real-time dashboards are essential to monitor shuttle adherence, crowding, and exceptions. Pooled services work best when routing and capacity are optimized up-front, then continuously corrected using live data on boarding patterns, delays, and missed trips. In practice, this means linking scheduling and route design to HRMS rosters and shift windows, and using data-driven insights to rebalance fleet size or frequency before peak periods become a problem.

Outcome KPIs for pooled shuttles should focus on a small set of control-room metrics that translate directly into fewer complaints and fewer 2 a.m. calls:

- Seat-Fill & Capacity. Define a target Trip Fill Ratio for shuttles that balances efficiency and comfort. For example, a range that avoids both under-filled runs and standing loads. Use this to drive dynamic routing, frequency adjustments, and fleet mix decisions.

- Wait Time & Access. Set a maximum scheduled wait time at each stop within defined shift windows. Monitor actual average and 95th percentile wait times, not just timetables. Treat “left behind at stop” incidents as critical exceptions with closure SLAs.

- Loop Adherence & Journey Time. Track Trip Adherence Rate for each loop, including deviation from planned sequence and total journey-time variance versus baseline. Use centralized dashboards similar to “Command Centre” views from the collateral to trigger alerts when loops start slipping.

- On-Time Performance (OTP%). Measure OTP for arrival at campus and for drop back home against shift-reporting times, exactly as for cabs. Link OTP to SLA and penalties so pooled users are not deprioritized.

- Safety & Compliance. Apply the same women-safety, driver-compliance, and SOS controls that exist for cabs. Use geo-fencing, IVMS, and centralized compliance management to ensure pooled routes pass safety audits, especially for early-morning or late-night loops.

- Experience & Escalations. Track a simple Commute Experience Index or user satisfaction score specifically for pooled shuttles. Combine complaint volume, closure time, and “no-show bus” incidents into a regular management report, so issues are visible and fixed, not normalized.

When shuttles share the same NOC, safety tooling, and reporting discipline as cabs, employees experience them as a first-class option rather than a downgrade.

For airport trips, what KPIs should Admin and Finance agree on—arrival buffer, delay handling, no-shows—to reduce disputes and escalations?

B0206 Airport trip KPIs to cut disputes — In India corporate car rental (CRD), what persona-specific KPIs should Finance and Admin agree on for airport trips (driver arrival buffer, flight-delay handling, no-show rules) to reduce billing disputes and traveler escalations?

For airport CRD in India, Finance and Admin reduce disputes fastest when they jointly fix a small, explicit KPI set around three areas: driver arrival buffer, flight‑delay handling, and no‑show rules. Each KPI must be defined in time or money terms and tied to billing logic and escalation SOPs.

For driver arrival, most organizations standardize an on‑time performance KPI linked to a buffer window. Admin typically defines a required reporting buffer before scheduled pickup or scheduled landing, and the vendor commits to an OTP% target against that buffer. Finance links this directly to penalties or non‑billable trips when the vendor misses the agreed buffer, which reduces arguments later. The same OTP% can sit on the mobility dashboard and the CRD billing reports so Admin sees reliability and Finance sees contract enforcement.

For flight delays, Finance and Admin usually agree on a free‑wait band and a paid‑wait band. Admin defines what counts as a “flight‑linked” trip and how long the driver must wait at no additional charge when flight status shows delays. Finance then hard‑codes what happens after that band, such as moving to per‑hour or per‑km waiting charges and whether an additional trip can be billed if the delay crosses a threshold. This alignment turns real‑time delay handling into a predictable commercial rule instead of a case‑by‑case negotiation.

For no‑shows, the two functions benefit from defining clear evidence requirements and a standard charge formula. Admin specifies how long the driver must wait at the pickup point, what proof is needed (GPS tag, timestamped photo, app log), and when transport or travel desk must attempt contact. Finance then agrees what percentage of the base fare can be billed as a no‑show fee when those conditions are met, and when the trip must be fully written off if protocols are not followed. This reduces traveler escalations because employees see consistent treatment and a visible SOP rather than arbitrary charges.

Key persona‑specific KPIs that Finance and Admin can align on for airport CRD include:

- On‑Time Performance for airport pickups and airport drops, measured against a defined reporting buffer.

- Average and maximum free waiting time by trip type, with explicit post‑buffer charge rules.

- No‑show rate and percentage of no‑show charges reversed because SOPs were not followed.

- Dispute rate per 100 airport trips, with a target reduction over time tied to cleaner rules.

- Invoice–trip log match rate, ensuring that billed wait time and no‑shows match system records.

When these KPIs are written into CRD contracts and mirrored in the billing engine and dashboards, Finance gains clean, auditable numbers and Admin gains predictable service behavior and fewer late‑night escalations.

How do we lock down KPI definitions (OTP/OTD, cancellations, no-shows, exceptions) across EMS and CRD so we stop arguing about numbers monthly?

B0211 Standardize KPI definitions across lines — In India corporate mobility, how should a vendor and buyer agree on definitions for core KPIs (on-time pickup, on-time drop, cancellation, “no-show,” exception) across EMS and CRD so different departments don’t argue over numbers every month?

In India corporate mobility, vendors and buyers avoid monthly KPI disputes by defining each KPI in a joint “measurement charter” that is service-specific (EMS vs CRD), operationally realistic, and contractually frozen before go-live. Each KPI needs a precise event definition, a clear time window, explicit inclusions and exclusions, and one agreed data source logged by the platform or command center.

A common failure mode is when HR, Transport, and Finance each use different clocks, buffers, and exception rules for the same term. Another failure mode is when “on-time” and “no-show” definitions live only in emails or presentations, not in the contract and SOPs. Most organizations get stability only when KPI semantics are documented as part of the SLA schedule and mirrored in routing, apps, and billing logic.

For EMS, on-time pickup is usually linked to a shift window at the first boarding point, while on-time drop is tied to reaching the workplace before a defined buffer ahead of shift start. For CRD, on-time pickup is typically measured against a confirmed booking time at the employee’s chosen location, and on-time drop is anchored to airport check-in or meeting start requirements.

Practical design steps include: - Define KPI timestamps at trip-lifecycle events such as “driver reached,” “employee boarded,” “trip start,” and “trip end”. - Fix pickup and drop buffers distinctly for EMS and CRD, and record them in the SLA annexure. - Specify what counts as cancellation, what counts as “no-show,” and which cases are tagged as “exceptions” that are excluded from OTP and penalty calculations. - Make the command center or platform the single source of truth, and align HRMS integration and billing reports to those same definitions.

What signs show we’re optimizing exec KPIs while shift populations suffer, and how do we make that trade-off visible in the mapping?

B0213 Detect exec bias in KPIs — In India enterprise-managed employee transport (EMS), what are the warning signs that persona-specific KPIs for executives are being optimized at the expense of large shift populations, and how should the service catalog mapping make that trade-off visible?

In India enterprise-managed employee transport, a system is over-optimizing for executives at the expense of large shift populations when executive KPIs improve while core EMS reliability, safety, and cost KPIs for shift workers stagnate or deteriorate. The service catalog and KPI map should make this visible by clearly separating EMS and CRD services, attaching distinct KPI sets and budget allocations, and showing when improvements in executive-trip metrics correlate with degradation in shift mobility outcomes.

One warning sign is when executive corporate car rental (CRD) metrics like response time, vehicle class availability, and airport SLA adherence trend up, while employee mobility services (EMS) KPIs like on-time performance (OTP%), trip adherence rate, and no-show handling for shift commutes flatline or worsen. Another sign is dead mileage and fleet utilization skewing toward daytime CRD use while night-shift EMS routes suffer low seat-fill, under-provisioned backup capacity, or frequent last-minute vendor substitutions.

A second warning sign is safety and compliance attention drifting toward VIP or senior-travel use cases while women-first night-shift routing, escort compliance, and SOS readiness for bulk shift routes are treated as static “policy” items rather than continuously audited metrics. This gap often appears when incident response SLAs and geofencing controls are tightly enforced on executive trips but random route audits and HSSE compliance for pooled EMS routes lack audit frequency or clear ownership.

A third warning sign is cost and commercial models privileging CRD optics over EMS robustness. This happens when cost per kilometer for executives is continuously optimized and reported to Finance, but cost per employee trip and dead-mile caps for large shifts are not governed with the same discipline. It also appears when buffer vehicles and business continuity capacity are cut from EMS to fund incremental executive fleet comfort, leading to rising exception-closure times in shift hours.

Service catalog mapping should therefore present EMS, CRD, ECS, and LTR as distinct service lines with explicitly different buyer personas and KPIs. EMS entries should be tied to OTP%, seat-fill, dead mileage, incident rate, women-safety compliance scores, and command center observability during shift windows. CRD entries should instead highlight executive response times, airport linkage, and vehicle-standard KPIs, making it obvious if investment is being directed disproportionately toward CRD compared to EMS.

The catalog should also encode which spending lines and contracts serve which populations. It should show, for example, that a given fleet segment or routing engine capacity is reserved for shift-based EMS, not implicitly shared with CRD during peak hours. This mapping prevents silent cannibalization of EMS capacity by executive on-demand use and surfaces when on-time performance degradation for shifts coincides with new executive entitlements.

Finally, the mapping should roll up KPIs by persona: CHRO-facing dashboards focused on commute experience index, safety, and attendance for large workforce segments, CFO-facing views combining CET/CPK across EMS and CRD, and Transport-head views that plot fleet utilization index by service vertical. When these persona views are aligned but clearly segmented, any trade-off that benefits one persona’s KPIs while eroding another’s becomes immediately visible and can be governed rather than hidden inside a blended mobility score.

How can we use service catalog mapping to clarify ownership between vendor, NOC, site admin, and HR so incidents don’t turn into blame games?

B0215 Clarify ownership to prevent blame — In India corporate mobility operations, how can an Operations head use service catalog mapping to create a clear “who owns what” model between vendor, NOC, site admin, and HR—so incidents don’t devolve into blame-shifting?

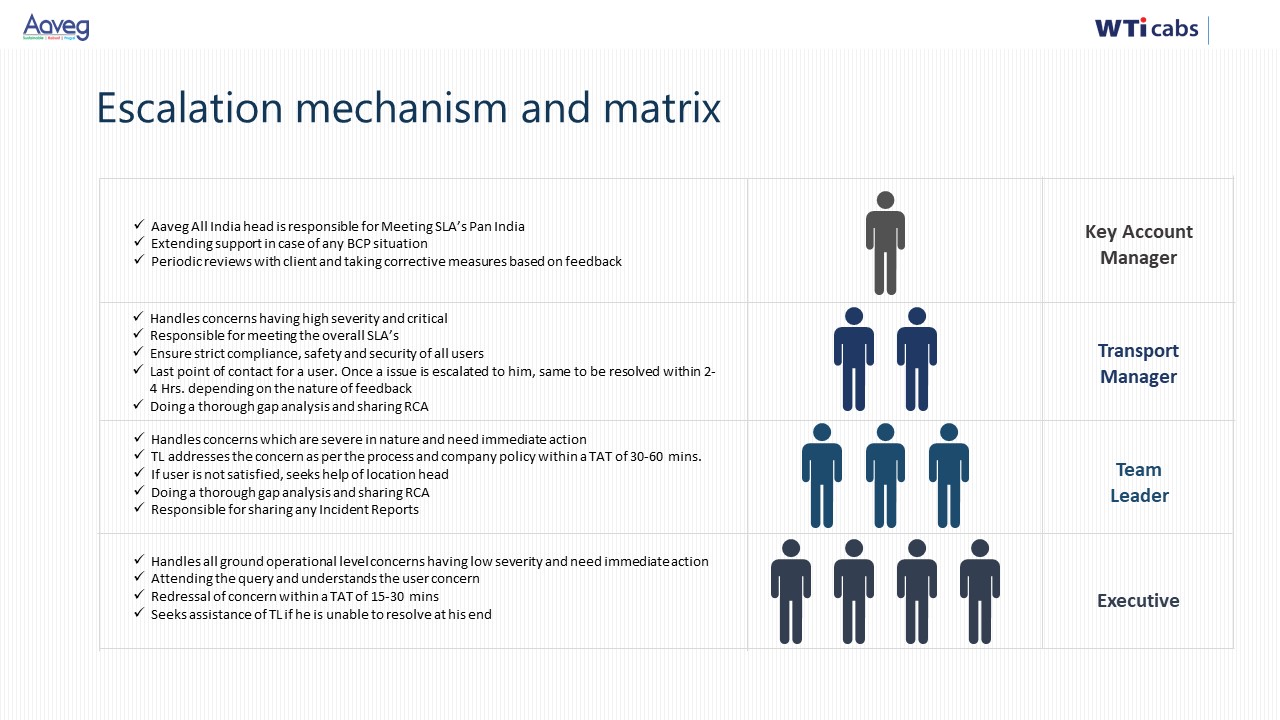

In Indian corporate mobility operations, an Operations head can use a service catalog to pre-define “who owns what” for each transport service, mapping every activity and incident type to a single accountable owner across vendor, NOC, site admin, and HR. A clear, signed-off catalog prevents 2 a.m. blame-shifting because it ties each step in the ETS/CRD/ECS/LTR operation cycle to roles, SLAs, and evidence, not to ad-hoc judgments.

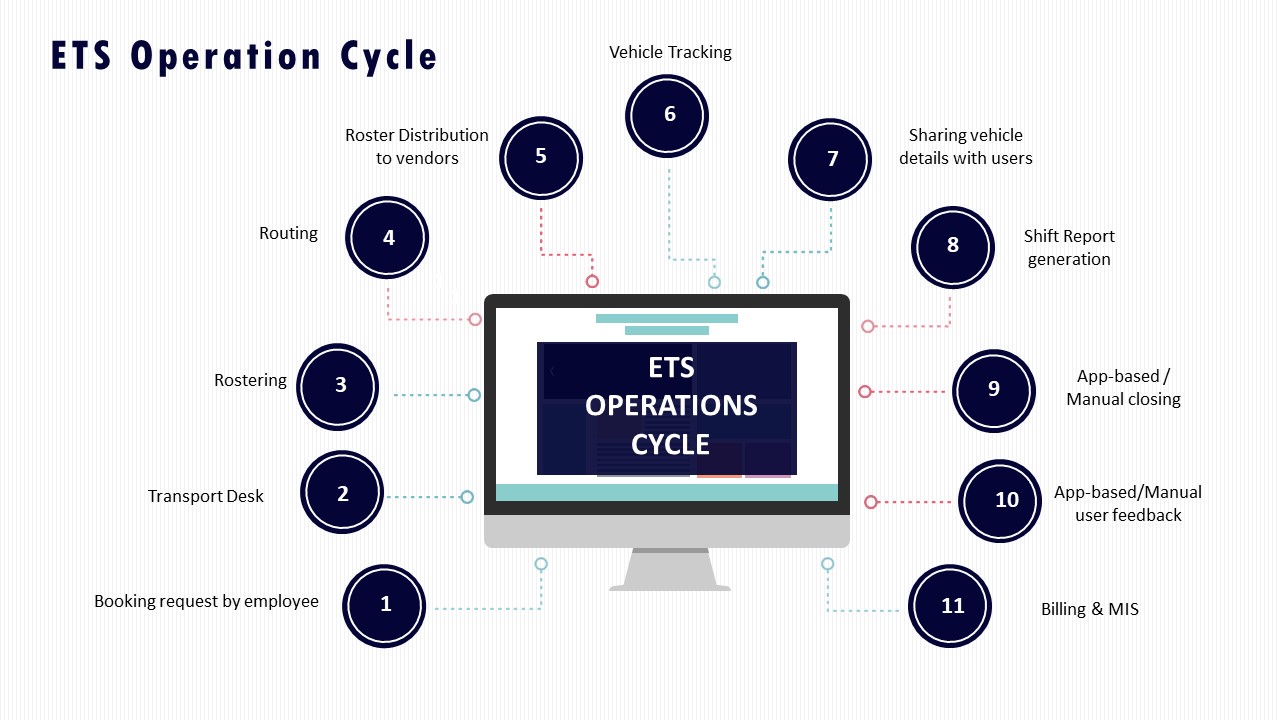

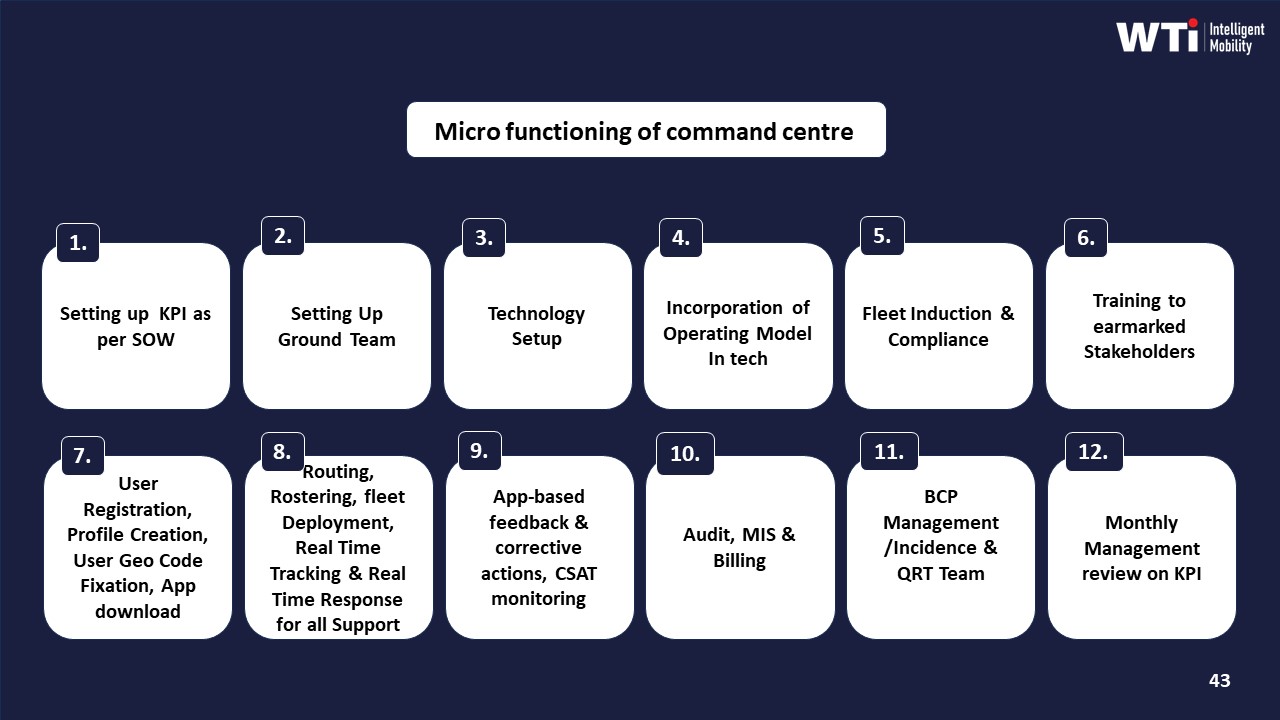

The starting point is to list the concrete services actually run on the ground. Examples include shift-based Employee Mobility Services, on-demand Corporate Car Rental, project/event commute, and long-term rental vehicles. For each service, the Operations head defines a process view similar to the ETS Operation Cycle or Vehicle Deployment & Quality Assurance flows, then breaks it into discrete steps such as roster creation, routing, trip dispatch, driver and vehicle compliance checks, live tracking, SOS handling, incident reporting, billing, and MIS.

Each step then gets a single RACI-style ownership line with explicit role tags. The vendor may own fleet availability, driver induction and compliance, and first-level trip execution. The centralized NOC or Transport Command Centre may own real-time monitoring, alert supervision, SLA tracking, and escalation handling. The site admin may own local coordination such as access gate issues and floor communication. HR may own policy, employee eligibility, grievance closure, and women-safety governance. Safety and compliance dashboards, command-center workflows, and alert systems like SOS or geofence violations become the evidence layer that supports these ownership lines.

The Operations head then links incident types directly to this catalog. For example, “driver no-show,” “vehicle non-compliance,” “late pickup beyond SLA,” “escort missing on night route,” “app/GPS failure,” or “safety SOS triggered” each get a primary owner, a backup owner, a response SLA, and the system of record that proves closure. Business continuity and contingency playbooks, escalation matrices, and TCC roles and responsibilities are attached as annexures to the same catalog so everyone knows who leads when there is a cab shortage, political disruption, or tech outage.

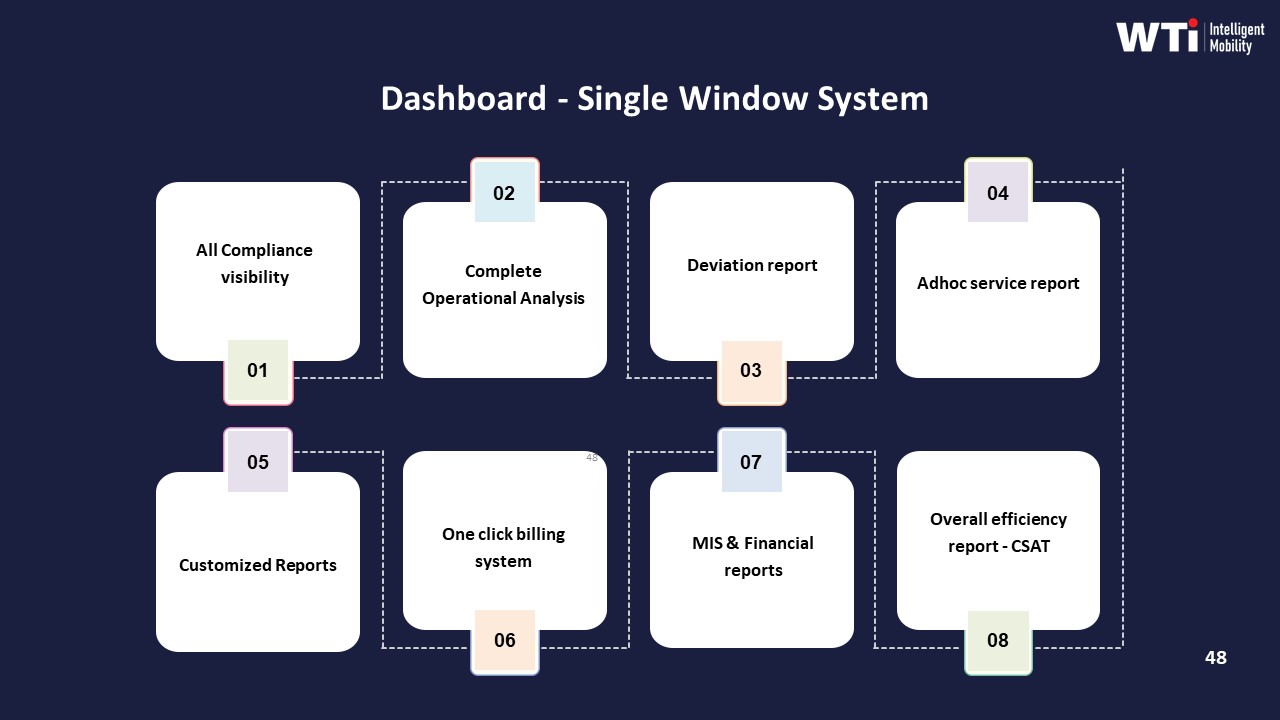

To keep this from becoming a paper exercise, the catalog is mirrored in tools. The NOC dashboard, alert supervision system, and command-centre workflows should route tickets and alerts to the owner defined in the catalog. Centralized compliance management and data-driven insights dashboards provide shared visibility so HR and site admins see the same OTP, incident, and safety numbers that vendors and Operations see. Quarterly governance reviews then use these catalog-linked KPIs and incident logs, rather than emails, to resolve disputes and refine responsibilities.

- Define services and process steps clearly and map each step to a single accountable role.

- Attach incident types, escalation paths, and SLAs to those roles with supporting tools and dashboards.

- Use governance reviews and BCP scenarios to adjust the catalog, keeping it aligned with real on-ground behavior.

How do we decide which outcomes should go into the service catalog and which should stay internal metrics, so vendors don’t game it and teams don’t get overloaded?

B0217 Choose KPIs to expose vs internal — In India corporate car rental (CRD) and employee mobility (EMS), how should a buyer decide which outcomes belong in the service catalog mapping versus which should remain as internal operational metrics, to avoid vendor gaming and cognitive overload?

In corporate car rental (CRD) and employee mobility (EMS) in India, outcomes that are employee‑visible, cross‑functional, and easy to audit should sit in the service catalog, while granular efficiency and tuning metrics should stay as internal operational KPIs. Service catalog items must describe what the business and employees can reliably expect from vendors, and internal metrics must describe how the transport and command‑center teams achieve those expectations without inviting vendor gaming or confusion.

Service catalog mapping works best when it focuses on a small set of “contract and experience” outcomes. These typically include on‑time performance for pickups and drops, safety and incident‑response guarantees, guaranteed availability windows for vehicles or routes, basic cost structures for key service types, and minimum compliance baselines for drivers, vehicles, and women‑safety protocols. These outcomes cut across HR, Finance, Security/EHS, and Facilities, so they are understandable outside Transport and are traceable in audits and in SLA governance.

Operational metrics work better as internal levers when they are highly technical, optimization‑oriented, or easy to game if monetized directly. These include routing efficiency ratios, dead mileage caps on individual routes, fine‑grained vehicle utilization indices by timeband, micro‑level driver fatigue scores, and model‑specific EV telemetry such as battery SoC thresholds and charger dwell times. These are ideal for command‑center dashboards, vendor performance reviews, and continuous improvement sprints, but they should not all be written into the front‑facing service catalog.

A practical way to decide placement is to apply three screens to every proposed metric before putting it in the catalog: • Is this outcome clearly observable and meaningful to non‑specialists like HR or employees? • Can this outcome be reliably measured and audited with existing data and tools? • If money is tied directly to this metric, does it encourage good behavior or create perverse incentives?

If the answer is “no” on clarity or auditability, or “yes, it could create bad incentives,” the metric should remain internal. If the metric defines the service promise and can survive audit scrutiny, it belongs in the catalog.

How do we test in the first 30–60 days if service catalog mapping is reducing escalations and manual coordination—not just improving reports?

B0219 Prove mapping reduces operational drag — In India employee mobility services (EMS), how can a Transport Head test whether service catalog mapping is actually reducing escalations and manual coordination effort over 30–60 days, rather than just producing cleaner reports?

In India EMS operations, a Transport Head can test whether service catalog mapping is genuinely reducing escalations and manual coordination by tracking a small set of operational KPIs and “control-room load” metrics for 30–60 days before and after the new catalog goes live. The test works only if it measures real shift behaviour such as escalation volume, manual overrides, and roster exceptions rather than just cleaner dashboards or reports.

A practical approach is to select 2–3 representative sites or shifts and run a time-bound experiment. The Transport Head can freeze major variables such as fleet size, vendor mix, and shift windows during the test period to avoid confounding factors. The core comparison should be between a “pre-mapping” baseline and a “post-mapping” phase where each entitlement, route type, and SLA is explicitly linked to a catalog item in the EMS platform and visible in the command center.

Key signals to track daily in the command center or NOC include the number of transport-related escalations, the count of manual roster edits and ad-hoc cab requests, and the volume of calls or chat contacts per 100 trips. Additional signals such as the number of trips needing manual routing overrides, driver reassignment after dispatch, or last-minute vehicle substitutions indicate residual fragmentation despite catalog mapping. A sustained downward trend in these metrics, with stable or improved on-time performance and safety compliance, is a strong indicator that service catalog mapping is reducing firefighting rather than just improving reporting hygiene.

To keep the test grounded in on-ground reality, the Transport Head can run brief daily huddles with dispatchers and supervisors similar to the “Daily Shift Wise Briefing” approach described in the collateral. A simple checklist can capture whether fewer edge cases require supervisor intervention, whether SOPs are clearer for night shifts and women employees, and whether driver confusion about trip type or entitlement is declining. This qualitative feedback should be cross-checked with data from command-center dashboards, alert systems, and any SOS or incident logs used for women-safety and HSSE compliance.

A focused 30–60 day test window is usually sufficient to identify whether the mapped catalog is aligned with real EMS patterns. If escalation and manual coordination metrics do not improve despite cleaner dashboards, it typically indicates gaps such as misaligned entitlements, missing catalog items for project or hybrid-work use cases, or routing rules that do not reflect actual traffic and shift behaviour. In such cases, the Transport Head can use incident and exception data from the period as an input for refining the catalog and associated SOPs, rather than concluding that the underlying technology is ineffective.

If we have multiple vendors by city/service line, how do we design service catalog mapping so KPI comparisons are fair and don’t become ‘local conditions’ arguments?

B0220 Fair KPI comparisons across vendors — In India corporate mobility, when multiple vendors serve different cities or service lines (EMS vs CRD), how should Procurement and Operations design service catalog mapping so cross-vendor KPI comparisons are fair and don’t trigger endless disputes about “local conditions”?

In multi-vendor Indian corporate mobility, Procurement and Operations should define a normalized, company-wide service catalog with standard service definitions, context tags, and KPI formulas, then let “local conditions” vary only through pre-agreed parameters and not through ad-hoc excuses. Service catalog mapping must separate what is comparable across vendors from what is structurally different by design.

A stable catalog starts with clear verticals such as Employee Mobility Services, Corporate Car Rental, and Project/Event Commute Services. Each vertical should have named service types like night-shift shared cab, executive airport transfer, or project shuttle with fixed, written inclusions and exclusions. Procurement then maps every vendor product SKUs into these internal service types, not the other way around.

Cross-vendor KPI comparisons work best when metrics use common formulas and unit definitions. OTP, cost per km, cost per employee trip, trip adherence rate, and trip fill ratio should be computed identically from a unified trip ledger. Operations should enforce consistent capture of timestamps, distances, and roster data across all vendors through a command-center or mobility data lake.

Disputes about “local conditions” reduce when context is encoded as explicit tags. City grade, timeband, shift window, route risk category, EV vs ICE, and project vs BAU should be captured as attributes on every trip. Benchmarks are then sliced by these tags, for example comparing all tier-1, night EMS routes across vendors, instead of city-wide averages.

To avoid endless argument cycles, Procurement should pre-define KPI bands and tolerance ranges per service archetype and context tag combination. Vendor evaluations then compare vendors against those bands and against peers operating under the same tags, rather than against a single global “average” that hides mix differences.

A practical pattern is to design three layers of KPI use:

- Base layer of non-negotiable metrics that always compare across all vendors, like audit trail integrity or credentialing currency.

- Context-normalized layer that only compares vendors within the same context slice such as tier, timeband, and service type.

- Vendor-specific improvement layer where each vendor carries its own targeted actions based on baseline and trend within its portfolio.

Fairness also depends on clean commercial mapping. Procurement should keep EMS, CRD, and ECS in separate catalog lines and avoid mixing per-km and per-seat models when comparing TCO. Where models differ, normalize to cost per employee trip under agreed load factors and dead-mile caps.

Centralized governance then ties these elements together. A command-center style operating model with standardized reports, an escalation matrix, and quarterly business reviews lets Procurement, HR, and Transport review the same single-window dashboards rather than vendor-curated views.

If Finance wants strict cost KPIs but Ops needs buffers for peaks and night safety, how do we use service catalog mapping to agree on the trade-off?

B0224 Make cost vs resilience explicit — In India corporate mobility, what should a buyer do when Finance demands strict cost KPIs while Operations insists on buffers for peak shifts and night safety—how can service catalog mapping make the “cost vs resilience” trade-off explicit and agreed?

In Indian corporate mobility, buyers should use a clearly structured service catalog to separate “baseline commute” from “resilience layers” so that cost KPIs apply tightly to core services, and explicit premiums apply to buffers, peak handling, and night safety. Service catalog mapping works when each service type has defined inclusions, KPIs, and pricing logic that Finance can model and Operations can rely on for OTP and safety.

A practical approach is to first segment services by verticals such as Employee Mobility Services, Corporate Car Rental, Project/Event Commute, and Long-Term Rental. Each vertical should then be decomposed into standard SKUs like base shifts, peak overlays, escort requirements, night-band operations, standby vehicles, and incident-ready command-center coverage. Operations can then tag which SKUs are mandatory for specific sites, timebands, and personas, while Finance can see which elements are optional, variable, or outcome-linked.

A common failure mode is when buffers like standby cabs, extra night routing rules, and safety escorts are buried inside “all-inclusive” contracts. This obscures true unit economics and drives recurring disputes between cost-containment and reliability needs. Service catalog mapping avoids this by assigning separate KPIs to each layer such as OTP% and Trip Adherence for base services, seat-fill and dead mileage caps for optimization, and incident-response SLAs for resilience.

Buyers can then align commercials by linking payouts for baseline SKUs to strict cost per kilometer and cost per employee trip thresholds while attaching explicit premiums for agreed resilience SKUs that protect night-shift women safety compliance and business continuity. Quarterly reviews can track which SKUs were actually consumed and reconcile them against On-Time Performance, incident rates, and escalation volumes, making the “cost vs resilience” trade-off visible, auditable, and renegotiable instead of emotional.

For our mobility program, how do we split EMS, airport/intercity, and event/project transport into a clear service catalog so we’re not forcing one KPI model on everything?

B0227 Define service lines without confusion — In India corporate ground transportation programs, how should an enterprise map a service catalog across Employee Mobility Services (shift-based commute), Corporate Car Rental (airport/intercity), and Project/Event Commute so stakeholders stop arguing about “one KPI set,” and each service line has outcome definitions that reflect its real operational constraints?

In corporate ground transportation, enterprises should define a separate service catalog and KPI set for Employee Mobility Services, Corporate Car Rental, and Project/Event Commute, and then align them under one governance view instead of forcing “one KPI to rule all.” Each service line needs its own outcome definitions tied to its operational reality, while a thin common layer only tracks shared themes like reliability, safety, cost, and ESG.

For Employee Mobility Services, outcome definitions work best when centered on shift adherence and safety. Operational constraints include pooled routing, hybrid-work volatility, and night-shift obligations. Strong EMS catalogs specify entitlements by persona, shift-windowing rules, guard/escort conditions, women-first routing, and NOC monitoring expectations. EMS KPIs should prioritize on-time performance to shift start, trip adherence rate, seat-fill, incident rate, and closure SLAs, because missed shifts and safety failures are the dominant risks.

For Corporate Car Rental, outcome definitions should emphasize response time, executive experience, and billing accuracy. Operational constraints include airport-linked timing, point-to-point trips, and high expectation on vehicle standardization. CRD catalogs define service types (airport, intra-city, intercity), SLA-bound response times, vehicle classes, and approval workflows. KPIs should focus on SLA adherence for dispatch and pickup, vehicle quality compliance, cost per km, and invoice accuracy, because Finance and Travel Desks care about predictability and auditability more than pooled-efficiency metrics.

For Project/Event Commute, outcome definitions must be anchored in time-bound execution certainty and surge handling. Operational constraints are rapid scale-up/scale-down, temporary routing, and crowd movement at fixed timebands. ECS catalogs should define project duration, fleet ramp-up timelines, control-desk responsibilities, and peak-load handling rules. KPIs should track day-0 readiness, adherence to event schedules, exception latency during peaks, and ramp-down efficiency, since delay tolerance is near zero.

A practical way to stop cross-stakeholder argument is to publish a service catalog where each line item lists its scope, operational constraints, primary buyer, and 4–6 core KPIs. HR and Transport Heads can then judge EMS on shift reliability and safety outcomes. Finance and Procurement can evaluate CRD on cost and billing control. Projects and Operations can rate ECS on execution certainty. A light, cross-cutting dashboard can still report a few common indicators such as overall on-time performance, incident rate, and cost per employee trip, but governance discussions should reference the service-specific KPI blocks first, so expectations remain realistic and blame is not driven by the wrong benchmark.

In our shift commute setup, what signs tell us our EMS service definitions/KPIs are mis-mapped and that’s why issues keep escalating even when vendors say they met SLAs?

B0228 Spot mis-mapped EMS outcomes — In India Employee Mobility Services (EMS) for shift-based employee transport, what are the early warning signs that the organization’s service catalog mapping is wrong—so on-time performance issues get blamed on the wrong team and escalations keep happening despite “meeting SLAs” on paper?

In Indian Employee Mobility Services for shift-based transport, the clearest early warning sign of wrong service-catalog mapping is when on-time performance complaints keep rising even though vendors and internal teams can show “green” SLA dashboards for their own scope. This misalignment creates a pattern where each function claims compliance, but shift adherence, employee experience, and escalation volume keep worsening.

A common signal is persistent confusion over which service vertical is supposed to handle a given use case. For example, airport-style Corporate Car Rental logic may be used for predictable shift-based Employee Mobility needs, or short-term Project/Event Commute models may be used for what has effectively become a long-term route. This misclassification results in wrong routing rules, wrong buffers, and wrong capacity assumptions, so OPS believes it has “served the request” while HR and business teams see repeated late logins.

Another early indicator is when transport teams experience chronic last-minute changes and manual overrides around the same patterns of demand. This usually means the underlying catalog does not reflect current hybrid-work realities, seat-fill expectations, or peak/non-peak windows for the site. In those cases, command center and routing teams keep firefighting exceptions that could have been avoided with correct catalog definitions and shift-windowing rules.

Frequent cross-functional disputes about “who owns” recurring problem trips are also a strong sign. When HR, Finance, and Transport each interpret a trip as belonging to different catalog buckets, cost models, and SLA definitions, then accountability fragments. In practice, this shows up as repeated escalations on the same corridors or timebands, while billing, routing, and vendor governance each sit on different assumptions.

Another pattern is when cost per employee trip appears acceptable at an aggregate level, but certain cohorts or routes repeatedly miss OTP despite similar spend. This disparity suggests that the service catalog has not differentiated correctly between EMS, CRD, ECS, and Long-Term Rental scenarios, leading to the wrong commercial and operating model for those cohorts. The result is that Finance sees “reasonable CPK/CET” on paper, while operations know specific shifts are structurally under-served.

Finally, if escalation narratives from employees and managers consistently describe the commute as unpredictable, while dashboards and MIS from vendors highlight “SLA met,” the catalog is likely masking the real risk exposure. In mature EMS programs, catalog mapping is tightly tied to shift patterns, women-safety requirements, EV versus ICE suitability, and route-criticality. When these mappings are off, incidents and late arrivals cluster in ways that look like “bad operations,” but the real failure is upstream in how the services were defined and assigned.

As Finance, how do we make sure KPIs for EMS vs airport/intercity vs events/LTR actually tie to billing and audit evidence, not just reports?

B0230 Make KPIs invoice-auditable — In India corporate ground transportation, what’s a practical way for a CFO to verify that each service line in the mobility service catalog (EMS vs airport/intercity vs events vs long-term rental) has KPIs that can be tied to invoice logic and audit trails, rather than being “nice dashboards” that can’t survive finance reconciliation?

A CFO can verify KPI-to-invoice integrity by insisting that each mobility service line runs on a single, auditable trip ledger where every invoice line item is mathematically reconstructible from operational events, documented rules, and preserved evidence. The CFO should approve the service catalog only when Employee Mobility (EMS), airport/intercity (CRD), events/projects (ECS), and long-term rental (LTR) each have clearly defined KPIs, pricing formulas, and log records that reconcile end‑to‑end.

For Employee Mobility Services, finance teams should see trip-level records for every rostered shift. Each record should show route, seat-fill, distance, time, and vendor, with On‑Time Performance (OTP), Trip Adherence Rate, and seat utilization traced directly to the same data used for billing per km, per trip, or per seat. If the KPI changes (for example, OTP-linked penalties), the commercial rule should be versioned and stored alongside the trip ledger.

For airport, intercity, and ad‑hoc rentals, invoices should be generated from a centralized booking system, not emailed duty slips. Each booking should carry customer approvals, timestamps, vehicle class, SLA response times, and distance, with SLA adherence and response-time KPIs derived from the same timestamps. Finance should be able to sample any invoice line and retrieve the underlying trip log, GPS trace, and approval trail.

For events and project shuttles, the CFO should require a dedicated project code with a time-bound route and schedule library. Each movement should post automatically into that project ledger with headcount, schedule compliance, and exception logs. Event KPIs like zero-delay starts or volume moved per hour must aggregate cleanly from that same ledger so that day-wise or phase-wise invoices can be replayed during audits.

For long‑term rentals, the CFO should insist on a separate uptime and utilization register. Each fixed-fee vehicle should have assigned IDs, contract tenure, uptime SLAs, and preventive maintenance logs. Any variable top‑ups, penalties, or downtime credits should be computed by reference to this register, so that CET, cost per vehicle, and uptime ratios can be re‑derived during reconciliation.

A practical verification approach for a CFO usually includes:

- Demanding a unified data schema where trip IDs, vehicle IDs, and employee IDs are consistent across EMS, CRD, ECS, and LTR.

- Requiring that every KPI reported in dashboards (OTP, cost per km, EV utilization) uses the same underlying measures that drive billing and penalties.

- Running periodic sample audits where Finance picks random invoice lines and reconstructs them from raw trip logs, GPS or telematics exports, and approval workflows.

- Making KPI and pricing rules part of the signed contract and insisting that changes go through controlled versioning so audits can track which rule applied when.

If any dashboard metric cannot be reproduced from the trip ledger and commercial rules, or if different data sources are used for billing and reporting, a CFO should treat those KPIs as non‑authoritative for reconciliation or audit defense.

For pooled shuttles on a campus/park, how do we define success so we capture adoption and employee experience, not only cost per seat?

B0234 Define pooled shuttle success — In India corporate pooled shuttles for campuses or business parks, how do facilities and HR define “pooled shuttle success” in the service catalog mapping when employee perceptions (crowding, predictability, boarding discipline) drive adoption as much as cost per seat?

In Indian corporate pooled shuttles, facilities and HR usually define “success” as a balance of four equal dimensions in the service catalog. These dimensions are operational reliability, employee experience, safety/compliance, and cost per seat. Cost per seat matters, but adoption depends just as much on how predictable, safe, and comfortable the pooled experience feels to employees.

Facilities teams focus on operational reliability and load-factor economics. They look at on-time performance for each shift window, seat-fill versus dead mileage, and how well routing and capacity buffers absorb hybrid-work variability. They monitor exception latency from first alert to resolution through a command center or NOC rather than just counting total incidents. This view treats shuttles as a governed EMS service with defined SLAs, not just a cheap bus.

HR teams anchor their definition of success in employee experience, safety, and reputation. They track commute-linked attendance stability, complaint volume and closure SLAs, and specific women-safety controls such as escort rules, SOS readiness, and audit trails. HR links shuttle satisfaction and perceived crowding or boarding discipline back to retention, diversity goals, and employer brand, so a low-cost but visibly chaotic shuttle is treated as a failure.

In catalog mapping, this usually translates into a small set of headline KPIs that sit alongside CPK or cost per seat, rather than beneath it. Those KPIs typically cover on-time performance and trip adherence, seat-fill and crowding thresholds by route, incident and safety metrics, and a commute experience or satisfaction index derived from feedback and grievance closure.

For long-term rentals, what outcomes should we track separately so uptime and maintenance aren’t evaluated like daily EMS or airport trips?

B0235 Separate LTR outcomes clearly — In India corporate Long-Term Rental (LTR) fleets for leadership, sales, or plant operations, what outcomes should Procurement map separately from on-demand trips so uptime, replacement planning, and maintenance discipline don’t get judged by the same metrics used for EMS or CRD?

In India corporate Long-Term Rental (LTR) fleets, Procurement should track asset-level continuity and lifecycle outcomes separately from trip-level performance so LTR does not get misjudged against EMS or CRD metrics. LTR success is defined by assured availability, predictable cost, and disciplined maintenance over months and years, not by daily routing or trip-response SLAs.

Procurement should avoid over-weighting OTP%, trip fill ratio, or dispatch response time for LTR vehicles because these are EMS/CRD metrics tied to dynamic routing and pooled usage. LTR vehicles for leadership, sales, or plant roles usually serve dedicated users and fixed duty cycles, so the critical outcomes sit around uptime, continuity of allocation, and lifecycle governance instead of per-trip efficiency.

The most important LTR-specific outcome buckets are:

- Fleet uptime and continuity for each assigned vehicle.

- Replacement and downtime planning that protects business continuity.

- Preventive maintenance discipline and compliance currency over the contract tenure.

- Cost predictability at a monthly or lifecycle level rather than per-trip economics.

For uptime and continuity, Procurement should map a vehicle-level uptime ratio, maximum allowable downtime per month, and SLA for providing a like-for-like replacement during planned and unplanned off-road events. These outcomes should be reported by asset and user group instead of being aggregated into pooled-fleet OTP dashboards used for EMS.

For replacement planning, Procurement should define explicit thresholds for age, mileage, and breakdown frequency that trigger pre-agreed replacement or fleet refresh actions. These thresholds should be contracted as part of LTR lifecycle governance rather than handled as ad-hoc “incident fixes” the way missed EMS or CRD trips are handled.

For maintenance discipline, Procurement should track preventive-maintenance adherence, closure of manufacturer-recommended services, and audit-ready records of fitness, permits, and inspections. These indicators should feed a compliance view for LTR that is asset-tenure based, not trip-count based.

For financial outcomes, Procurement should map cost per vehicle per month, total cost of ownership over the lease term, and predictability of charges versus the contracted schedule. These outcomes are distinct from cost-per-kilometer or cost-per-trip benchmarks used for pooled EMS or on-demand CRD, which focus on routing efficiency and dead mileage reduction.

For EV-based LTR fleets, Procurement should also map EV utilization ratio, charging reliability for the assigned duty cycle, and emission intensity per vehicle, treating these as ESG and ESG-disclosure outcomes rather than blending them into generic EMS emission dashboards.

Which outcome definitions should we avoid because vendors can game them—like OTP that doesn’t account for early arrivals, missed boardings, or route deviations?

B0249 Avoid gameable outcome definitions — In India corporate mobility vendor governance, what outcome definitions should be explicitly excluded from the service catalog mapping because they are too easy to manipulate (for example, OTP definitions that ignore early arrivals, missed boards, or route deviations)?

In corporate mobility vendor governance in India, outcome definitions that are easy to manipulate are those that ignore the full trip lifecycle, exclude key failure modes, or cannot be independently verified from auditable data. These outcome definitions should be explicitly excluded from the service catalog and SLA mapping because they create a false sense of performance and weaken vendor governance.

Outcome definitions that should be avoided are those that treat reliability as a narrow timestamp metric. If an On-Time Performance (OTP) definition only checks “vehicle reached geofence before scheduled time” but ignores early arrivals, missed boards, and route deviations, then vendors can game OTP through early show-ups and incomplete trips. In practice, reliability must be tied to Trip Adherence Rate, exception detection-to-closure time, and route adherence audits instead of a single arrival timestamp.

Any safety metric that does not use trip-level incident logs, SOS activations, and credentialing currency should be excluded from outcome-based contracts. A safety KPI that only counts “reported incidents” without auditing under-reporting, escort rules, or night-shift compliance makes it easy for operators to suppress data rather than improve behavior. Safety outcomes must be based on continuous assurance, audit trail integrity, and chain-of-custody for GPS and trip logs.

Cost and utilization outcomes are also vulnerable to manipulation when they use partial baselines. Cost per kilometer figures that ignore dead mileage, no-shows, or empty repositioning encourage vendors to shift costs off the visible ledger. Utilization metrics that do not account for seat-fill, Trip Fill Ratio, and dead-mile caps can be inflated through unnecessary deployments. These metrics should be replaced with integrated measures like cost per employee trip, Vehicle Utilization Index, and idle emission loss that cover the entire duty cycle.

ESG or EV outcomes that only count “number of EVs deployed” or “EV trips booked” without using verifiable emission intensity per trip or gCO₂/pax-km should not be accepted. Vendors can park EVs on low-mileage routes or cherry-pick low-utilization deployments to claim green credentials. EV utilization and carbon abatement must be computed from raw telematics, charging data, and reconciled trip ledgers so that carbon abatement indexes and fleet electrification roadmaps are defensible.

Finally, any experience metric that depends solely on optional or vendor-run surveys is weak as a contractual outcome. A Commute Experience Index or NPS that is not linked to adoption, complaint closure SLAs, and exception closure times can be massaged through sampling bias. Experience outcomes should instead be anchored in HRMS-linked attendance deltas, no-show rates, and grievance redressal SLAs.

For shift EMS, how do we set outcomes around driver availability and fatigue so pushing reliability doesn’t lead to burnout and safety problems later?

B0250 Map outcomes to driver fatigue — In India shift-based Employee Mobility Services, how should a transport head map outcomes for driver availability and fatigue risk so reliability targets don’t quietly cause burnout, attrition, and eventually worse safety incidents?

In Indian shift-based employee mobility, a transport head needs to treat driver availability and fatigue as explicit, measured outcomes alongside OTP, not as invisible inputs to “hit targets at any cost.” Driver duty cycles, rest windows, and fatigue indicators should sit on the same dashboard as on-time performance, incident rate, and fleet uptime so reliability improvements never come from silent overuse of the same drivers.

The first discipline is to define hard guardrails for driver utilization. Each driver should have a maximum number of hours per day and per week, with duty cycles aligned to labor and OSH norms, and these limits should be enforced by the rostering and routing system. Any route or shift plan that requires breaches should be flagged as a planning failure, not “driver flexibility.”

The second discipline is to monitor leading indicators of fatigue as operational KPIs. Repeated night shifts for the same driver, frequent last-minute extensions, growing dead mileage before or after duty, and an increased Driver Attrition Rate are all signals of stress that should trigger early intervention. These indicators should be reviewed in the same rhythm as OTP% and Trip Adherence Rate.

The third discipline is to explicitly separate “coverage” from “stretch.” Buffer vehicles and standby drivers should be part of the routing and capacity policy so monsoon, traffic disruptions, and technology failures are absorbed by design. This reduces the temptation to pull the same small pool of “reliable” drivers into repeated double-duties whenever there is disruption.

A practical mapping structure that keeps burnout in check usually includes:

• A Driver Utilization Index per week per driver.

• A hard cap on consecutive night shifts and maximum duty hours per 24-hour window.

• A simple Fatigue Risk flag in the command center that appears before a driver is assigned to another shift.

• Linkage between driver-related safety incidents, OTP dips, and that driver’s utilization history.

When this mapping is in place and visible at the command center level, shift reliability can improve without building a hidden backlog of fatigue that later shows up as accidents, absences, or sudden attrition.

For women safety on night shifts, which service KPIs should Security/EHS lock in (escort, SOS response, geo-fence) so we can prove duty of care?