How to run attribution like a control-room playbook: five lenses for reliability in employee mobility services

In peak shifts, GPS glitches, driver shortages, and weather disruptions push dispatches into crisis mode. This guide helps Operations Heads convert data into a repeatable, auditable playbook that keeps the control room calm instead of chasing firefighting. The five lenses offer practical guardrails, escalation paths, and SOP-level clarity so your team can act within minutes without adding hype or friction.

Is your operation showing these patterns?

- Escalations spike during peak shifts with no clear root cause.

- GPS outages or app downtime undermine credibility of improvements.

- Vendor response gaps force manual workarounds and long recovery times.

- Operations spend hours reconciling mismatched trip logs and rosters.

- Staff burnout rising as frontline incident triage becomes the norm.

- Leadership sees 'improvements' but frontline reports unresolved issues.

Operational Framework & FAQ

data integrity & single source of truth

Establish a clean data foundation across HRMS, attendance, access logs, and trip data; define a single source of truth and guardrails against drift and privacy risk.

For our employee transport program, what does attribution and causality really mean, and how do we avoid confusing correlation with real impact on attendance or attrition?

B0102 Attribution vs correlation explained — In India corporate Employee Mobility Services (EMS), what does “attribution & causality” practically mean when HR claims commute improvements increased attendance or reduced attrition, and what is the simplest way to avoid mistaking correlation for impact?

In Indian corporate Employee Mobility Services, “attribution & causality” means proving that specific commute changes directly drove outcomes like better attendance or lower attrition, instead of just happening around the same time. It requires linking a defined EMS intervention to measurable shifts in HR metrics while ruling out other obvious reasons for the change.

In practice, most organizations only see correlation. Attendance improves after a route revamp or EV rollout, and HR reports “transport solved it.” Attributing causality needs more discipline. The EMS change must be clearly defined in time and scope. The affected population must be separated from a reasonably similar “unaffected” group. Transport KPIs like on‑time performance, safety incidents, or trip fill ratio must move first. Attendance, attrition, or satisfaction should move after, in the same cohort.

A common failure mode is announcing “EVs improved morale” when the same period also had salary corrections, policy changes, or manager reshuffles. Another is using only point-in-time survey results or testimonials without tying them back to shift-wise OTP, no-show rates, or complaint closure SLAs captured in the EMS command center or dashboards.

The simplest way to avoid mistaking correlation for impact is to treat EMS changes as small experiments. HR and Transport can define one change, one cohort, one time window, and one or two HR outcomes, while keeping a comparable control group unchanged. If the treated group shows a clear, time-aligned improvement in both transport KPIs and HR metrics relative to the control, the causal story is much more defensible.

To operationalize this with minimal complexity, teams can follow four checks:

- Is there a clear “before/after” window for the EMS change?

- Is there a comparable set of employees whose commute did not change?

- Did transport reliability or safety KPIs improve first, before HR outcomes?

- Can Finance and HR jointly validate that no bigger concurrent changes explain the shift?

This lightweight, cohort-and-control mindset fits existing EMS governance, uses data already flowing through routing engines and HRMS integration, and gives HR a story about attendance and attrition that can stand up in front of Finance, ESG, and audit reviewers.

Why does Finance usually push back when HR says better OTP improves shift adherence, and what kind of proof actually convinces them?

B0103 Why Finance doubts HR claims — In India corporate ground transportation, why do CFOs often challenge HR’s claims that better on-time performance (OTP) improves shift adherence, and what proof typically changes a Finance leader’s mind?

Most Finance leaders challenge HR’s “better OTP improves shift adherence” claims because the link is usually presented as intuition, not as reconciled, auditable data tied to cost and productivity outcomes. CFOs change their mind only when OTP and shift adherence are shown as hard, repeatable relationships that reconcile cleanly with HRMS, transport logs, and financial impact.

CFOs are accountable for cost transparency, audit integrity, and predictable unit economics like Cost per Employee Trip and Cost per Kilometer. Subjective narratives about “happier employees” or isolated anecdotes do not survive scrutiny during audits or budget reviews. A common failure mode is fragmented data, where transport vendors show OTP percentages, HR shows attendance complaints, and Finance cannot trace any of it back to payroll, productivity, or trip-level costs.

Finance leaders respond when commute data is fused with HRMS and operations data in a governed way. They look for evidence that higher OTP correlates with fewer late logins, lower no-show rates, and more stable shift productivity. They place high weight on outcome-linked KPIs such as cost per trip, trip fill ratio, dead mileage, and exception-closure times.

The proof that typically shifts a CFO’s view includes:

- Time-series comparisons showing OTP% vs. late logins and no-show rates across similar sites or timebands.

- Before/after analyses where route optimization or centralized command-center monitoring lifted OTP and simultaneously reduced overtime, exception cabs, or make-up trips.

- Trip-level and shift-level reconciliations where vendor logs, HRMS attendance, and billing match, demonstrating that improved OTP reduced leakage and rework.

- Documented outcome-based contracts where payouts are indexed to OTP, incident rates, and exception-closure SLAs, creating a defensible financial narrative.

In practice, a CFO’s stance changes when OTP improvements are expressed as a verified reduction in CET/CPK, fewer exception payments, and more predictable rosters—not as a standalone operational KPI but as a driver of cleaner books and smoother audits.

For measuring whether routing changes reduced late logins, when should we use pre/post vs matched cohorts, and when is each good enough for leadership?

B0104 Pre/post vs matched cohorts — In India shift-based Employee Mobility Services (EMS), how does a basic pre/post analysis differ from a matched-cohort comparison when measuring whether routing changes reduced late logins, and when is each approach credible enough for leadership decisions?

In shift-based Employee Mobility Services in India, a basic pre/post analysis tests whether late logins changed after a routing change, while a matched-cohort comparison tests whether late logins changed for “similar” employees or routes that did and did not receive the change. A basic pre/post view is usually enough for quick operational decisions and early SOP tuning, while matched-cohort evidence is needed when leadership will tie budgets, contracts, or policy changes to those results.

A basic pre/post analysis compares late-login rates before and after the routing change across the same operation. This approach is fast and easy to run from existing EMS trip and attendance logs. It works best when nothing else material changed in the period, when the organization only needs directional evidence, and when the decision is localized, such as adjusting seat-fill targets, buffer times, or vendor allocations within one site.

A matched-cohort comparison constructs two comparable groups. One group is exposed to the new routing logic or fleet mix, and the other continues on the old pattern. Each cohort is matched on factors like shift window, route length, time band, vendor, and employee mix. This approach reduces bias from external changes such as traffic patterns, hybrid-work attendance shifts, or vendor performance. It is credible for leadership when EMS results will influence contracts, penalties or incentives, EV adoption decisions, or multi-city rollouts.

Matched-cohort methods demand better data quality and governance. They need stable integration between routing engines, HRMS attendance, and command-center logs, along with clear KPI definitions for on-time performance and late logins. Basic pre/post methods are appropriate when transport heads need a quick read to calm operations, but leadership should insist on matched cohorts before claiming structural improvement or baking outcomes into SLA-linked commercials.

What data do we need to join from HRMS, attendance, and access control to link commute reliability to attendance, and where do these joins usually break?

B0105 Critical HRMS-attendance-access joins — In India enterprise-managed employee transport, what specific data joins between HRMS, attendance, and access-control logs are usually required to attribute commute reliability changes to attendance outcomes, and what are the common failure points in those joins?

In India enterprise-managed employee transport, organizations usually need to join trip-level commute data with HRMS master data, shift/roster records, attendance logs, and physical access-control logs on a per-employee, per-shift basis to attribute commute reliability changes to attendance outcomes. The joins that matter most are employee-identity joins, shift-window joins, and site/route joins, and the common failure points are inconsistent IDs, misaligned timestamps, and missing or siloed data between transport, HR, and access systems.

The primary join is an identity join between the mobility platform’s rider ID and the HRMS employee ID. This join lets organizations link each trip or route manifest to a specific employee record, which is required to compare transport KPIs like on-time performance with HR-linked KPIs like attendance or attrition. A second join is a shift/roster join between the transport roster, the HRMS shift schedule, and the attendance system’s expected in/out times. This join allows comparison of planned pickup/drop windows with actual trip times to see if late arrivals correlate with late logins or early logouts. A third join is a site/access join between the transport drop location, the employee’s mapped work site, and access-control entry/exit events, so that trip timestamps can be validated against actual gate swipes or entry logs.

Common failure points include inconsistent or duplicated employee identifiers across systems, which break the identity join and force manual reconciliation. Timestamp misalignment across trip logs, HRMS shift definitions, and access-control systems often makes it hard to decide whether a delay is operational or just a clock-skew artifact. Data silos, where transport vendors, HR, and access-control providers hold their own partial datasets without a shared schema, frequently block end-to-end attribution. Another frequent issue is incomplete event capture, such as missing no-show flags, unlogged manual trips, or gaps in access logs, which leads to biased or inconclusive correlations between commute reliability and attendance changes.

How do we account for seasonality, site ramp-ups, and hybrid policy changes when we try to link OTP improvements to attendance or shift adherence?

B0106 Controlling confounders in EMS — In India corporate Employee Mobility Services (EMS), how do you control for confounders like seasonality, site ramp-ups, and hybrid WFO/WFH policy shifts when attributing a change in OTP to a change in attendance or shift adherence?

In India EMS programs, attribution between On-Time Performance (OTP) and attendance or shift adherence is only reliable when operations teams explicitly normalize for demand, capacity, and policy shocks. Confounders such as seasonality, site ramp-ups, and hybrid WFO/WFH shifts need to be modeled as separate control variables before linking OTP movements to changes in login adherence or absenteeism.

Most mature operators treat OTP and attendance as correlated but distinct outputs. They create a canonical data layer where roster data, trip logs, and HRMS attendance sit together, and then segment performance by site, timeband, and policy regime. Without that segmentation, a genuine OTP gain can be mistaken for improved attendance when the real driver is a temporary demand dip, a hiring slowdown, or a policy change that reduced the number of commuters.

A common failure mode occurs when buyers track only headline OTP and monthly attendance. This approach hides the impact of seasonal weather, exam periods, festival calendars, project go-lives, and ramp-downs. It also ignores how hybrid policies reshuffle who is eligible for cabs, which days are “peak commute,” and how seat-fill and dead mileage behave. In practice, EMS teams that control for confounders rely on shift windowing, trip-level adherence logs, and explicit tags for special conditions such as ramp-up weeks, BCP events, or temporary routing rules.

Practical control steps that operators use include: - Tagging each week or month with demand context such as headcount changes, new-project onboarding, or known low-volume seasons. - Comparing OTP and attendance at the level of consistent cohorts, such as the same process, site, or timeband, before and after operational changes. - Anchoring OTP and attendance analysis to a stable baseline period, then treating policy changes like WFO mandates or EV-routing pilots as discrete interventions.

In an RFP, what proof should we ask for when a vendor says their routing/NOC will cut attrition or late logins, without making it overly complex?

B0107 RFP proof for causality claims — In India corporate ground transportation procurement, what attribution evidence is reasonable to demand in an RFP when a vendor claims their routing or NOC will reduce attrition and late logins, without creating an unrealistic “science project” evaluation?

In India corporate ground transportation, buyers should demand attribution evidence that links routing or NOC improvements to late-logins and attrition through traceable operational proxies, not broad HR outcomes. The most reasonable approach is to insist on auditable baselines, KPI deltas, and simple comparative designs that can run inside normal operations without becoming a separate “science project.”

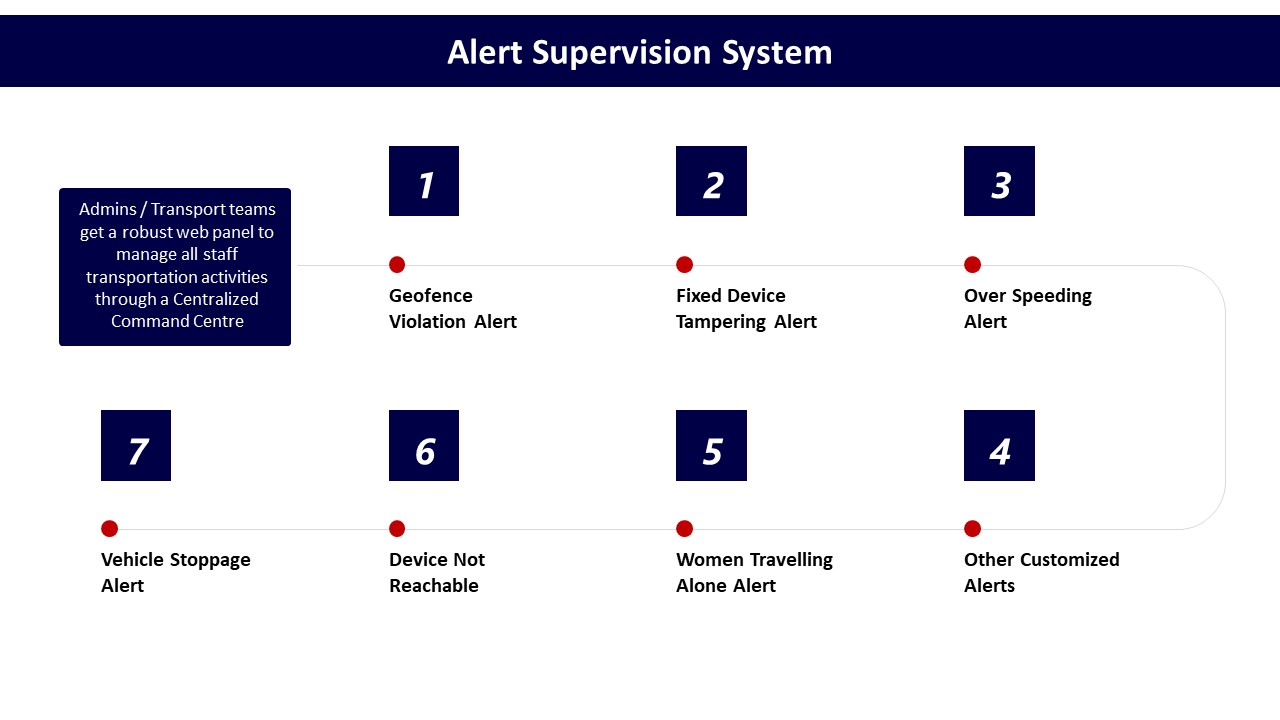

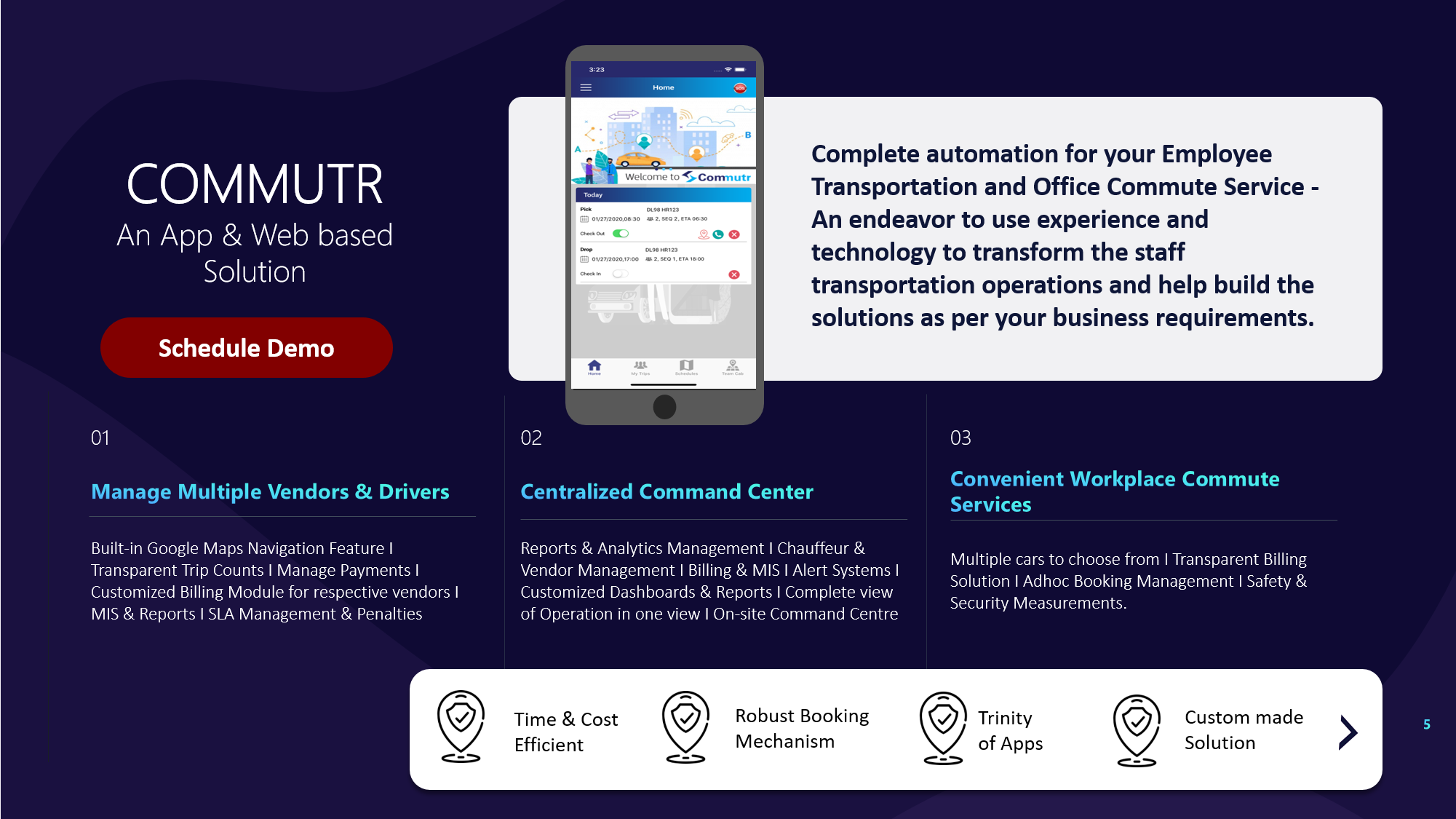

Vendors should first show how their routing engine and 24x7 NOC improved core commute KPIs in comparable environments. Relevant evidence includes changes in on-time performance, trip adherence, no‑show rate, seat fill, and exception closure time, ideally with before/after data or six‑month trend views. For EMS, this often ties to dynamic routing, live GPS tracking, and command‑center alert supervision rather than generic “AI” claims.

The RFP can then require vendors to map those commute KPIs to HR-facing indicators in a light-touch way. Reasonable asks include correlation to late login counts by shift band, attendance volatility in high‑dependence transport sites, and commute-related grievance volume, with clear definitions and time windows. HRMS–transport integration is important here, but the expectation should be a pragmatic link, not a full-blown analytics lab.

To avoid an unmanageable evaluation design, most organizations run simple A/B or phased rollouts instead of experimental trials. A practical RFP condition is a three-to-six-month pilot on selected routes or sites, comparing OTP, exception rates, and commute complaints before and after vendor onboarding. Vendors who already operate centralized command centers and incident-ready NOCs can also be asked to share anonymized case studies where improved OTP and safer routing coincided with better employee satisfaction scores.

This structure keeps attribution grounded in transport-controllable variables, allows HR, Transport, and Finance to read the same numbers, and still gives CHROs a defensible narrative about reduced late logins and transport-linked attrition without over-promising causal precision.

Which outcomes are actually defensible to link to commute improvements—attendance, late logins, shift adherence, retention—and which ones usually don’t pass Finance scrutiny?

B0108 Defensible outcomes for attribution — In India enterprise employee transport, what are the most defensible outcome metrics to attribute to commute improvements—attendance stability, late login minutes, shift adherence, retention—and which of these typically fail attribution scrutiny with CFOs?

In India enterprise employee transport, the most defensible outcome metrics are those that sit closest to raw operational logs and HRMS data. Attendance stability, late-login minutes, and shift adherence are usually defensible if they are tied directly to HRMS time stamps and trip logs. Retention uplift is where attribution usually fails CFO scrutiny because too many non-transport variables influence it.

Attendance stability is defensible when HRMS swipe / SSO login events are correlated with routed employees and shift-wise route rosters. Late-login minutes are defensible when trip ETAs and actual drop times are mapped to application login windows for commute-tagged employees. Shift adherence is defensible when on-time arrival (OTP%) and Trip Adherence Rate are stored as audit-ready metrics in a command center dashboard.

Retention improvements usually fail CFO scrutiny. The failure mode is that HR cannot isolate transport from compensation changes, manager behavior, role changes, or macro labor-market shifts. Overall employee satisfaction or NPS also fails scrutiny when it is not commute-specific or when survey methodology is weak.

The CFO lens favors outcome metrics that can be reconciled with Finance and HR data. Cost per employee trip, cost per kilometer, and exception-to-closure SLAs are easier to defend than “culture” or “morale” outcomes. Commute-linked attendance deltas at specific sites or time bands are more credible than broad claims like “lower attrition due to better transport.”

CFOs usually require three conditions before accepting attribution. The baseline and post-change periods must be clearly defined. The commuter cohort must be explicitly tagged and stable. The explanation of confounding factors must be documented, not ignored.

How can we figure out whether late logins are due to routing/dispatch vs employee readiness, gate delays, or access-control delays before we blame the vendor?

B0109 Diagnose causes of late logins — In India shift-based EMS operations, how can a Facilities/Transport Head diagnose whether late logins are caused by routing/dispatch issues versus employee readiness, gate delays, or access-control bottlenecks before blaming the mobility vendor?

In Indian shift-based EMS operations, a Facilities or Transport Head can separate routing/dispatch faults from employee or access-related delays by building a simple, evidence-based comparison between planned trip data and site-side events for every late login incident. The core principle is to timestamp each handover in the chain and then see where the first deviation appears.

First, the transport team should lock a standard “trip lifecycle” view for each shift. This view should cover roster freeze time, vehicle reporting time at origin, actual pick-up times, gate-in at the site, and employee login time. A centralized command center or NOC dashboard with real-time GPS, alert supervision, and route adherence monitoring gives early visibility of whether the cab actually ran late or reached on time and queued at the gate. When GPS logs show on-time arrival at the facility and the delay appears only between gate entry and system login, the root cause usually lies in access-control queues, lift congestion, or internal walk time rather than routing.

Second, the Facilities or Transport Head should correlate mobility data with non-transport systems. HRMS or attendance logs indicate when swipe or OTP-based login actually occurred. Access-control and security data show queue lengths or screening delays. If the routing engine, driver app, and command center data consistently show on-time performance but HRMS shows scattered late logins from the same drop, the pattern points to employee readiness or internal process gaps rather than vendor OTP failure.

Third, the team should standardize a short investigation SOP for each late login escalation. The SOP should define which reports to pull, time-window thresholds, and who validates each segment of the journey. Key checks include whether the roster was published before cut-off, whether the employee boarded at the scheduled stop, whether any geo-fence or route-deviation alerts fired, and whether there were known gate, lift, or security slowdowns recorded by facilities or security teams. Over a few weeks, this creates a pattern library that clearly separates routing or dispatch issues from gate and readiness bottlenecks.

A simple triage checklist can help the Transport Head maintain control-room calm and avoid blame loops: - Compare planned ETA versus actual GPS ETA at campus or gate. - Compare gate-entry timestamp versus system login or workstation-ready time. - Check for app or GPS downtime in the same window in the command center logs. - Overlay incident notes from security and access-control teams for that shift.

If we change a transport policy (pickup windows, escort rules, etc.), how do we measure the impact credibly without creating a ‘Big Brother’ feeling for employees?

B0110 Policy change comparisons without backlash — In India corporate Employee Mobility Services (EMS), what does a credible “policy change comparison” look like—for example changing pickup windows or women-safety escort rules—and how do you isolate mobility impact without triggering employee backlash about surveillance?

A credible “policy change comparison” in Indian Employee Mobility Services compares old and new rules on a small, controlled slice of operations, shows clear before–after impact on OTP, safety incidents, and cost per trip, and does this using auditable transport data rather than broad employee tracking. A defensible comparison isolates mobility variables like pickup windows, routing logic, and escort deployment while keeping HR, legal, and Security satisfied that employee privacy and consent are respected.

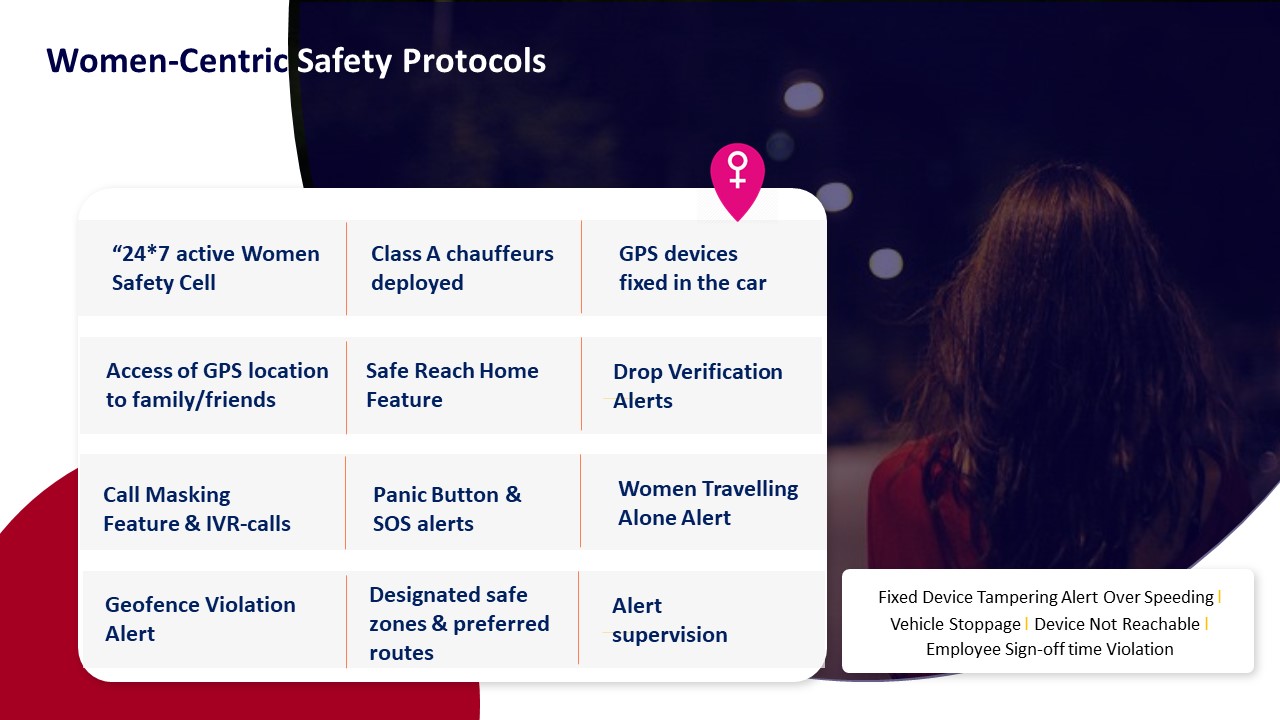

A robust comparison starts from a clearly defined policy lever. Typical levers in EMS include pickup windows, night-shift routing rules for women, guard or escort requirements, and seat-fill thresholds. Transport heads usually test one lever at a time. They then track deltas in On-Time Performance, Trip Adherence Rate, incident reports, dead mileage, and cost per employee trip. Command center dashboards and route-level analytics provide this evidence, with SLA-linked KPIs such as OTP% and exception-closure times making the comparison objective.

To avoid surveillance backlash, most organizations limit measurement to trip and vehicle data. They anchor all tracking in trip manifests, GPS device feeds, routing engines, and SOS or alert systems rather than continuous user location. HR and Security frame the change as safety and reliability improvement, not behavior policing. Privacy-sensitive practices include time-bounded location use during active trips, role-based access to data, and clear retention rules aligned with incident investigation and compliance needs under India’s data protection context.

In practice, operations teams often run short pilots on selected shifts or sites. They keep core controls constant, such as driver KYC, vehicle compliance, and night-shift women-safety protocols, while changing only one variable. They then benchmark pilot routes against matched control routes. Facility heads watch for failure modes like increased no-shows, driver fatigue, or new safety complaints, using command center alerts and user feedback to decide whether the new policy becomes standard or is rolled back.

If we link trip GPS logs with HRMS and attendance for analysis, what’s the minimum we should do for DPDP privacy and consent so we don’t get into trouble?

B0111 DPDP-safe data joins for analysis — In India corporate mobility programs governed under DPDP Act expectations, what minimum privacy and consent practices are needed when joining commute telemetry (GPS/trip logs) with HRMS and attendance data for causality analysis?

In India corporate mobility programs that fall under DPDP Act expectations, organizations need explicit, purpose-specific consent, strict data minimization, and role-based technical controls before joining commute telemetry with HRMS and attendance data for causality analysis. They also need documented legal basis, retention limits, and audit-ready logs that show who accessed what joined data and why.

Organizations should first define a narrow, written purpose for the data join, such as reliability and safety analysis, cost optimization, or ESG reporting. The same telemetry and HRMS data should not be repurposed silently for performance appraisal or disciplinary action. Any new purpose requires fresh notice and, where appropriate, fresh consent.

Employee-facing notices should clearly describe what telemetry is collected, what HRMS/attendance fields are linked, why the linkage is done, and how long the combined dataset is retained. Consent should be specific and informed, not bundled into generic “terms,” and employees should have a simple way to withdraw consent for non-essential analytics without losing basic commute entitlements.

Only the minimal fields needed for causality analysis should be used. For most mobility KPIs, pseudonymous identifiers and shift windows are sufficient instead of full names or detailed PII. Analysts should work on de-identified or aggregated datasets whenever possible, reserving identifiable views for exception handling with clear SOPs and approvals.

Joined datasets should be protected with role-based access control, encryption, and well-defined approval workflows. Access, exports, and downstream sharing should be logged to create an audit trail that can be shown to internal audit, regulators, or data protection officers. Retention schedules should delete or irreversibly anonymize raw trip logs and HRMS-linked datasets once the analysis window or statutory requirement ends.

Organizations should also document a privacy impact assessment for the combined telemetry–HRMS processing. This assessment should explicitly consider risks like employee surveillance, profiling, or discrimination. Controls and SOPs should ensure that safety and reliability analytics do not become covert monitoring of individual productivity, which would increase DPDP and employee-trust risk.

How do we keep an audit-ready trail so our claims (like OTP improved shift adherence) don’t fall apart when audit asks for reconciliation?

B0112 Audit-ready attribution evidence — In India enterprise EMS, how do you set up an “audit-ready” evidence trail so that attribution claims (like OTP improved shift adherence) don’t collapse during an internal audit because the underlying trip logs or attendance records can’t be reconciled?

In India enterprise EMS, an audit-ready evidence trail is built by treating every commute event as a single, reconciled “trip record” that links trip logs, GPS data, and HR attendance in one governed flow. The core rule of thumb is that shift adherence claims must be reproducible from raw trip logs forward and from attendance records backward, with no manual edits that break traceability.

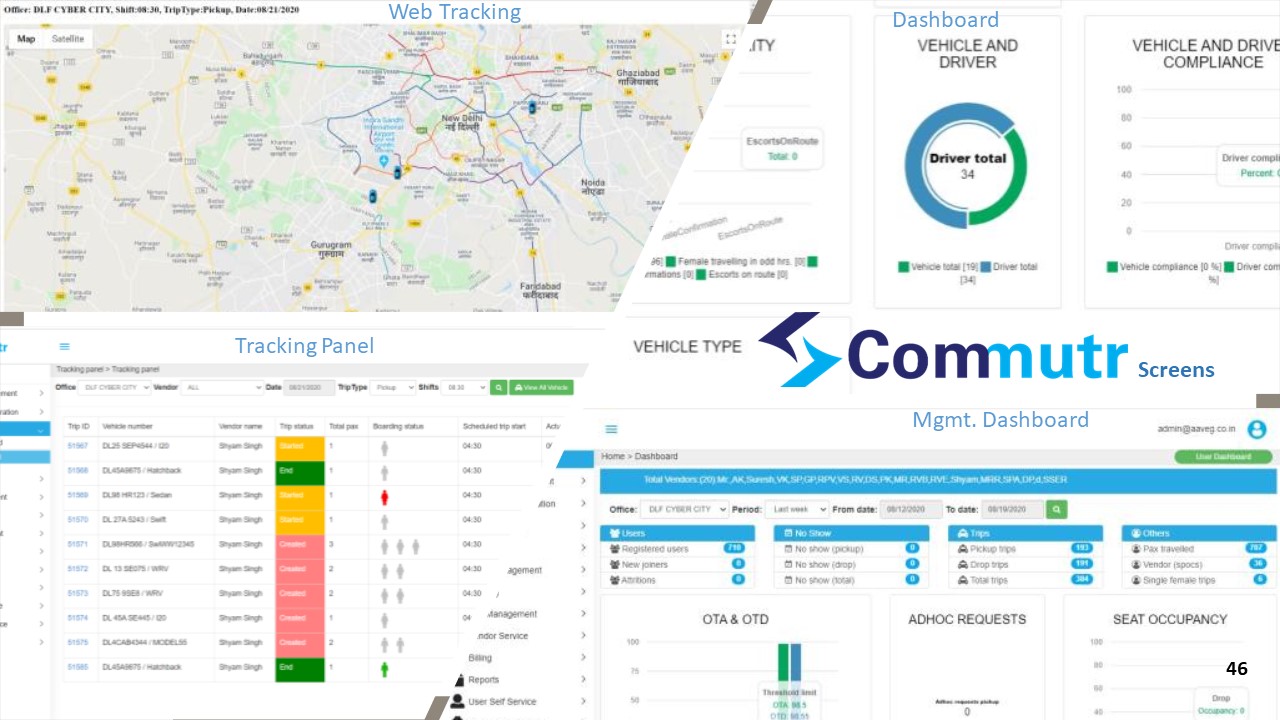

A robust setup starts with a centralized command center and ETS platform that captures trip creation, routing, and closure in a single system. Each trip receives a unique ID that is consistently used across driver app, employee app, GPS feed, billing, and MIS reports. Trip-level data includes planned vs actual times, route, vehicle and driver identity, employee manifest, and SOS or safety deviations. Systems like Commutr and the WTi Mobility dashboard are positioned to provide such single-window visibility and trip lifecycle management.

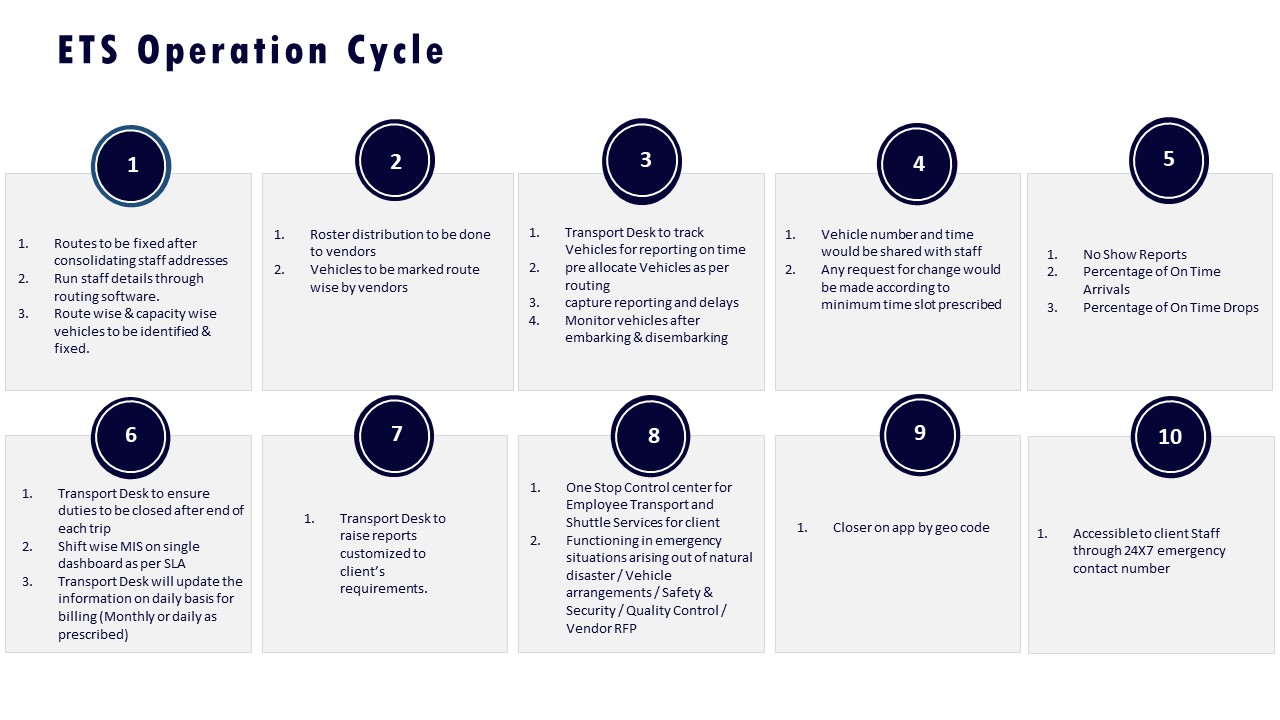

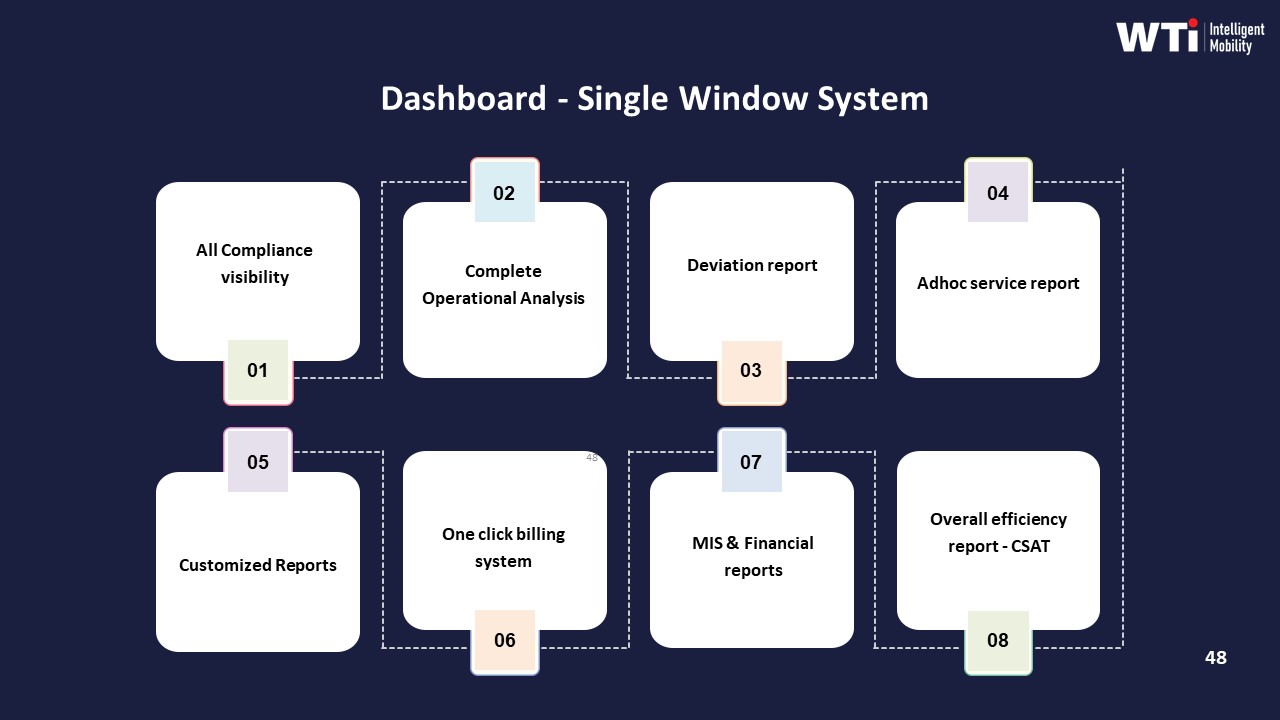

Audit resilience increases when this mobility data is integrated with HRMS and attendance so that login times, shift rosters, and commute trips share reference IDs or time-keyed joins. Evidence dashboards such as the “Measurable Sustainability Outcomes” and “Dashboard – Single Window System” models show how CO₂ reduction and SLA metrics are backed by trip-level data and summarized in a governed KPI layer.

To prevent attribution claims from collapsing under audit, organizations typically enforce:

- Immutable or versioned trip logs with maker–checker policies for changes, as illustrated in fleet and billing compliance collaterals.

- Clear KPI definitions (OTP, Trip Adherence Rate, CEI) and consistent calculation logic in data-driven insights platforms.

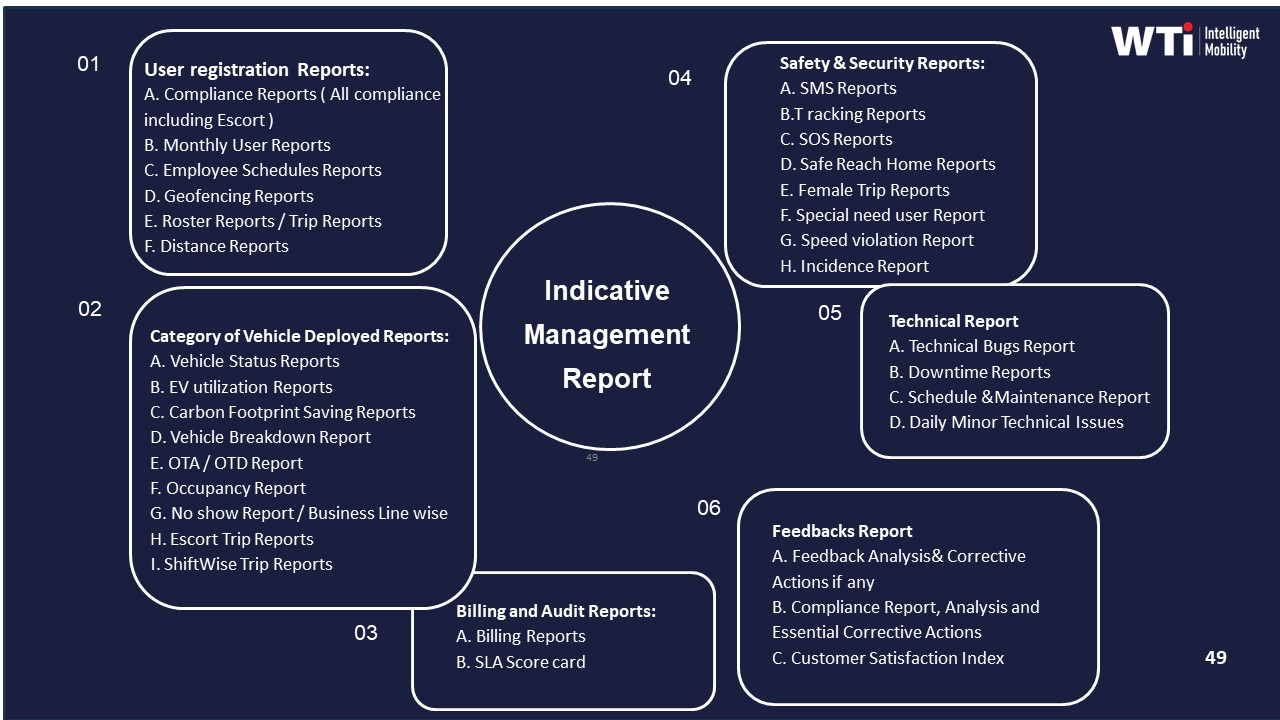

- Cross-system reconciliation between ETS operation cycles, billing systems, and HR-linked rosters, supported by centralized billing and indicative management reports.

- Documented SOPs for incident handling, safety audits, and route adherence checks, as shown in safety, compliance, and command centre governance materials.

Failure modes usually occur when multi-vendor or fragmented tools are used without a unified command center, when manual rosters are not synchronized with trip systems, or when billing and ESG dashboards are built from separate spreadsheets instead of the primary trip ledger.

Before HR presents a ‘transport improved outcomes’ story to the CEO, what sanity checks should we do so it doesn’t look like dashboard storytelling?

B0113 HR credibility checks before CEO — In India corporate Employee Mobility Services (EMS), what practical thresholds or confidence checks should HR use before presenting a causality narrative to the CEO—so it doesn’t feel like “dashboard storytelling” and damage HR credibility?

In India EMS, HR should only present a “cause–effect” story to the CEO when the patterns are repeatable, cross-validated across systems, and tightly scoped to specific variables like OTP, safety incidents, or attendance. HR credibility improves when commute → outcome narratives are framed as “evidence-backed hypotheses with known limits,” not as absolute claims.

HR teams should first check that core EMS metrics are stable and clearly defined. On-time performance, trip adherence rate, no-show rate, incident rate, and seat-fill or utilization should be measured the same way every month with consistent cut-offs. HR should avoid building narratives on a one-week spike, first-month after go-live, or festival/monsoon periods because EMS operations are inherently volatile in those windows.

A basic threshold is to look for the same directional trend over at least 8–12 weeks for any claimed link, such as “improved OTP has reduced late logins.” This pattern should appear across multiple sites or shifts, not just a single outlier location or team. HR should check that sample sizes are meaningful and that the effect is not driven by one large account, one vendor, or a single high-volume shift.

HR should also cross-check with at least one independent system outside transport dashboards. For example, any claim that “better commute reliability improved attendance” should be supported by HRMS attendance data sliced by shift bands where EMS is actually used. Any claim that “safer night commutes improved women’s retention” should be cross-checked with attrition and complaint data, and with Security or EHS incident logs rather than only vendor reports.

Before talking about causality, HR should test a “confounding factors” checklist. Demand changes from hybrid work, policy changes, salary revisions, project ramp-ups or ramp-downs, and manager-level interventions can all move attendance, attrition, or NPS in the same period. If multiple levers moved together, HR should downgrade the language from “caused by EMS improvement” to “likely contributed, alongside X and Y.”

It is safer to present “causal weight” as ranges or scenarios. For example, “Where OTP improved from 85% to 95% over 3 months on night shifts, late logins dropped by 20–25% for the same employee cohort, with no changes in shift policy.” HR should avoid making this claim where EMS coverage is partial, or where large teams do not depend on EMS for commute.

Practical confidence checks before going to the CEO can be framed as a short internal SOP:

- Has the metric trend held for at least 2–3 reporting cycles with the same definition?

- Is the data reconciled between vendor dashboards, HRMS, and, where relevant, Finance or Security logs?

- Can the same pattern be reproduced on at least one more site, shift band, or time-period?

- Have obvious confounders (policy changes, pay cycles, major org events) been identified and disclosed?

- Is the statement framed as “contribution” or “correlation” unless a controlled pilot or A/B pattern exists?

HR can strengthen the narrative by using “before–after in comparable cohorts” rather than global averages. For example, compare teams where EMS routing was changed or EV fleets introduced to similar teams where nothing changed in the same quarter. Narrower, well-controlled slices reduce the risk of overclaiming.

Finally, HR should always bring caveats and residual risks into the CEO conversation. A short “what we know / what we don’t know yet” slide protects HR from appearing to oversell dashboards. CEOs tend to trust narratives that explicitly name data gaps, acknowledge operational noise in EMS (traffic, weather, vendor changes), and propose next steps such as running a defined pilot or formalizing outcome-linked SLAs, rather than treating one quarter’s trend as permanent proof of causality.

If ROI is uncertain, how should Finance protect downside—phased rollout, outcome-linked pricing, clawbacks—without pushing vendors to game the data?

B0114 Downside protection without data gaming — In India corporate ground transportation, how should a CFO structure “downside protection” if mobility ROI claims are uncertain—e.g., phased rollout, outcome-linked commercials, or clawbacks—without creating perverse incentives that distort operations data?

In India corporate ground transportation, a CFO should structure downside protection by phasing commitments, tying a limited portion of payouts to clearly defined mobility outcomes, and hard‑wiring data integrity rules that make KPI manipulation costly for all parties. The protection has to sit in contract design, not in ad‑hoc negotiation, and it must separate “service reliability and safety baselines” from “stretch ROI claims” like aggressive cost or EV benefits.

A pragmatic starting point is phased rollout with explicit gates. The first phase should be a limited pilot across a few sites or one service vertical such as Employee Mobility Services or Corporate Car Rental Services. Contract language should cap spend, freeze commercials for the pilot, and require a baseline KPI pack for OTP, Trip Adherence Rate, Cost per Kilometer, Cost per Employee Trip, incident rate, and EV utilization ratio before any scale‑up. Progression to the next phase should trigger only when both operations and Finance agree the data is stable and reconciled to Finance systems.

Outcome‑linked commercials work best when applied to a modest band of spend. A CFO can keep a fixed “floor” for essential services and apply incentives or penalties to 10–20% of monthly value indexed to OTP, safety incident rate, seat‑fill, and dead mileage. Reliability, women‑safety compliance, and incident response SLAs should remain non‑negotiable baselines, and only efficiency deltas such as route cost reduction or improved fleet utilization should carry upside or downside. This reduces pressure on vendors to over‑optimize one visible metric at the expense of hidden risks.

To avoid perverse incentives and distorted data, the contract should define a data governance spine. The CFO should insist on API‑level access to raw trip, GPS, and billing data, clear event definitions for each KPI, and a right to independent route adherence audits. Exception closure SLAs should be tracked in the same system that generates invoices, and any KPI used for payouts should be traceable from raw trip ledgers to Finance reconciliation. Random Route Audits and cross‑checks between HRMS attendance logs and transport data help prevent “ghost trips” or manipulated OTP reporting.

Clawbacks should be reserved for structural mis‑reporting, not normal variance. A well‑designed clause can state that if later audits find material gaps between reported KPIs and raw trip evidence over a defined threshold, previously paid incentives can be adjusted or recovered. This protects the enterprise from long‑term overpayment without forcing continual micro‑disputes. It also encourages vendors to maintain internal controls around Audit Trail Integrity, not just surface dashboards.

A CFO can further cap downside risk through lock‑in and exit design. Multi‑year contracts should include annual break options tied to transparent KPI scorecards rather than vague dissatisfaction. Data portability and open APIs should be mandatory, so that if the organization exits a vendor, historical trip and cost data remains usable for rebids and benchmarking. This reduces the fear of being trapped in a weak ROI situation as commute patterns, hybrid work, or EV economics change.

Finally, commercial structures should avoid single‑metric obsessions. CFOs should insist on a balanced KPI basket that spans reliability (OTP%, exception closure time), cost (CPK, CET), safety/compliance (incident rate, credential currency), and experience (complaint closure SLA). Each KPI should carry limited commercial weight, with an aggregate service level compliance index driving incentives. This approach protects downside exposure while preserving truthful operations data, because no one metric is worth gaming at the expense of overall performance.

In a multi-site setup, what are common false wins when we try to attribute improvements, and how can we spot them early?

B0115 Common false wins in attribution — In India multi-site EMS, what are the most common “false wins” in attribution—like improvements that are really due to site ramp-downs, manager strictness, or access-control changes—and how do you detect them early?

In multi-site Employee Mobility Services in India, the most common “false wins” happen when core KPIs improve on paper but are actually driven by external changes such as headcount shifts, policy tightening, or access-control tweaks instead of better transport operations. These false wins usually show up first in reliability, cost, and safety metrics that improve faster than routing quality, driver behavior, or vendor performance.

A frequent false win is an apparent jump in on-time performance that is mainly caused by laxer measurement. This happens when sites start using broader grace windows, treat late but allowed gate entries as “on time,” or stop logging early-route deviations. A second pattern is cost-per-trip reductions that are actually the result of ramp-downs at expensive sites, removal of difficult remote drops, or stricter booking eligibility by managers. A third pattern appears in safety statistics, where incident rates fall sharply after changes in access control, female-escort rules, or complaint logging channels, even though underlying route risk and driver fatigue indices remain unchanged.

Early detection of false wins relies on tying each KPI to a stable denominator and cross-checking against adjacent operational signals. Transport teams can watch for mismatches between OTP improvement and static or worsening dead mileage, trip-fill ratios, and driver attrition. They can compare cost-per-km and cost-per-employee-trip trends with seat-fill and attendance by shift window. They can benchmark incident reductions against unchanged geo-fencing alert volume, SOS activations, and night-shift route density.

The most practical safeguards are simple and repeatable. Operations can lock metric definitions in SOPs so sites cannot quietly change what counts as “on-time” or “incident.” They can tag dataset snapshots by site, timeband, headcount, and policy version so trend lines are always read in context of ramp-ups, ramp-downs, and access rule changes. They can institute exception reviews whenever any metric improves by more than a defined band without parallel improvement in routing efficiency, fleet uptime, or employee satisfaction scores.

Useful signals to monitor include: - Sudden metric improvements coinciding with site closures, large WFH shifts, or removal of remote locations. - Better KPIs where routing complexity, night-shift exposure, and traffic conditions have not changed. - Divergence between dashboard metrics and floor feedback, such as persistent complaints despite “green” OTP.

By treating OTP, CET, safety incidents, and employee feedback as a connected set of indicators rather than isolated scores, organizations can spot attribution errors early and avoid over-crediting short-term policy or footprint changes as sustainable EMS performance gains.

Retention is influenced by so many things—pay cycles, ramps, managers—so how can we credibly link any retention change to commute improvements, and what’s realistic to claim?

B0116 Attributing retention to commute — In India shift-based employee transport, how do you attribute retention changes to commute improvements when attrition is influenced by compensation cycles, project ramps, and manager behavior, and what level of attribution is realistically defensible?

In India shift-based employee transport, organizations can link commute improvements to retention only at a directional and contributory level, not as a single precise percentage driver of attrition. The defensible position is that better mobility improves attendance stability, perceived safety, and commute experience, which in turn reduces avoidable attrition risk, but compensation, project volatility, and manager behavior will always remain dominant co-drivers.

A realistic approach is to treat transport as one controllable factor in a multi-factor environment and benchmark only those deltas that can be tightly tied to mobility data. Organizations can compare teams or sites with similar pay bands and projects but different commute conditions, and they can track changes in attendance patterns, no-show rates, and commute-related complaints before and after mobility interventions such as route optimization, EV introduction, or safety enhancements. When employee surveys explicitly include commute satisfaction questions, movement in those scores alongside stable pay and policy conditions becomes a stronger, though still not exclusive, indicator.

The most defensible attribution level is usually “commute as a secondary but material driver.” In practice, organizations can credibly say that improved EMS reduced transport-frustration exits and night-shift drop-offs, especially among women employees, rather than claiming that transport alone “reduced attrition by X%.” A conservative narrative is to show that commute improvements correlate with better attendance, safety perception, and employee satisfaction scores, and to position retention benefit as a supported hypothesis rather than a mathematically isolated effect.

What’s a realistic minimum setup we can deliver in 4–8 weeks to do basic causality analysis, without launching a full data lake project?

B0117 Minimum viable causality setup — In India enterprise mobility analytics, what is a realistic “minimum viable” causality setup that IT can deliver in 4–8 weeks—data sources, joins, and basic controls—without building a full data lake program?

In India enterprise mobility, a realistic “minimum viable” causality setup in 4–8 weeks focuses on a narrow trip-level spine, 3–4 core source systems, and simple, auditable joins rather than a full data lake program. IT teams can deliver useful cause–effect views on reliability, cost, safety, and emissions by standardizing a canonical trip ID, enforcing basic quality checks, and exposing a handful of governed KPIs in a lightweight analytics layer.

The practical anchor is a single trip lifecycle table. This table should hold trip IDs, timestamps, route and shift data, vehicle and driver tags, and employee or cost-center identifiers taken from the mobility platform’s trip ledger and routing engine. IT can then join this spine to HRMS for shift windows and attendance, to ERP or finance for tariff and billing attributes, and to telematics feeds for GPS and EV battery telemetry where available. This enables basic causality such as “routing pattern → OTP%,” “seat-fill → cost per employee trip,” and “EV utilization → CO₂ intensity per pax-km.”

Minimum controls should include validation of timestamp order within each trip, referential checks for driver and vehicle compliance status, and simple outlier rules for distance and duration. Governance can rely on a small semantic layer defining terms like On-Time Performance, Trip Adherence Rate, Cost per Kilometer, and Emission Intensity per Trip in a consistent way. This approach improves observability and supports outcome-based procurement while avoiding the complexity of a full mobility data lake or advanced anomaly detection engine.

If OTP improved, how do we separate what came from better NOC incident response vs what came from routing changes?

B0118 Separate NOC vs routing impact — In India corporate EMS with a centralized NOC, how do you separate the impact of better incident response (faster escalations) from the impact of routing optimization when attributing improvements in OTP and shift adherence?

In India corporate EMS with a centralized NOC, organizations separate the impact of better incident response from routing optimization by defining different KPI “families,” tagging every trip with event data, and attributing improvements based on when issues are detected and resolved versus when they are avoided by design. Routing optimization shows up in fewer exceptions and structurally better ETAs, while NOC-driven incident response shows up in faster closure times and reduced impact when exceptions still occur.

Routing optimization is primarily a planning and dispatch lever. It changes baseline ETA predictions, dead mileage, seat-fill, and route structure before the trip starts. Its effect on OTP and shift adherence is visible when there are no major disruptions, and when shift windowing and dynamic route recalibration still deliver on-time performance under normal traffic and demand patterns. Improvements from routing are quantified through metrics such as Trip Adherence Rate, Trip Fill Ratio, and reductions in planned versus actual variance on routes without NOC interventions.

Centralized NOC impact is operationalized through real-time monitoring and exception handling. The NOC uses alerts, escalation matrices, and incident workflows to detect deviations early, triage them, and minimize passenger and shift impact. Its value appears in shorter exception detection-to-closure times, lower SLA breach rates for trips that had disruptions, and a higher proportion of “saved” trips that would otherwise have missed shift windows. OTP improvements here come from containment of issues, not from route design itself.

To separate impacts in practice, organizations typically:

- Segment trips into “clean” trips with no exception events and “exception” trips where the NOC intervened.

- Attribute OTP gains on clean trips and reductions in dead mileage predominantly to routing optimization.

- Attribute improved OTP and reduced shift-impact within the exception segment to faster detection, escalation, and closure controlled by the NOC.

- Use pre/post analyses for NOC maturity and routing engine changes separately, avoiding overlapping rollouts where possible.

After a pilot that claims attendance improvement, what’s a practical rule for when we have enough evidence to scale without over-committing?

B0119 Scale decision rule after pilot — In India corporate ground transportation governance, what should be the decision rule for “enough evidence to scale” after a pilot that claims attendance improvement—so HR doesn’t over-commit and Operations doesn’t get stuck with an unproven rollout?

In corporate ground transportation in India, “enough evidence to scale” exists only when HR and Operations have hard, jointly-owned proof across attendance, reliability, safety, and cost—not just a lift in attendance or positive anecdotes. A practical decision rule is to gate any scale-up on a minimum set of quantified outcomes, traceable data, and repeatable SOPs, tested over at least one full roster cycle including night shifts and peak-disruption periods.

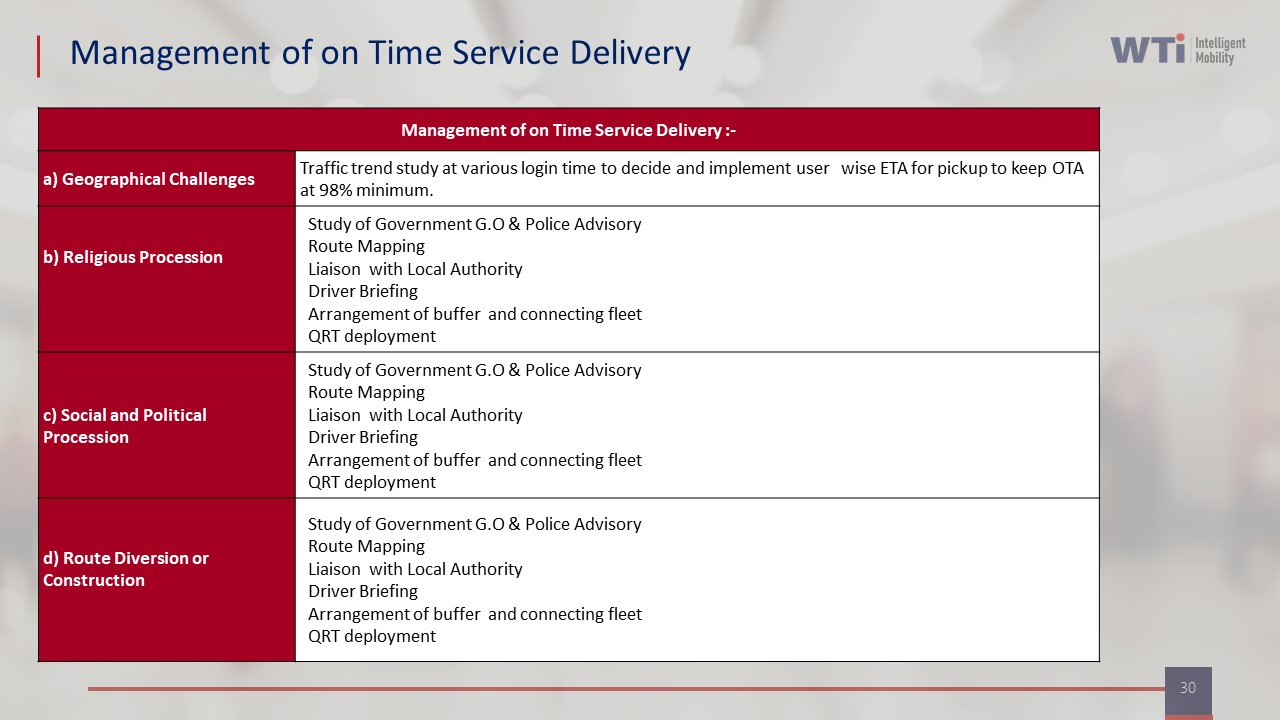

HR and Transport leaders should first insist that attendance gains are explicitly linked to commute KPIs. Attendance improvement should correlate with better on-time performance, fewer no-shows, and reduced escalations, rather than coinciding with HR policy changes or seasonal demand dips. This requires a single-window dashboard with auditable trip logs, as seen in WTi’s command center, real-time tracking, and ETS operation cycle collaterals. Evidence must be exportable and verifiable by Finance and Internal Audit, not locked in vendor slides.

A common failure mode is scaling on soft feedback without stress-testing operations under bad weather, driver shortages, or technology glitches. Operations should therefore demand proof that the pilot held its OTP and safety incident rates during at least one disruption scenario, supported by clear BCP playbooks and alert supervision outcomes. HR should also insist that women-safety protocols, SOS flows, and compliance checks worked end-to-end in real trips, with audit-ready logs.

Before committing to wider rollout, decision-makers can use a simple go/no-go checklist: - Attendance and OTP: Attendance uplift is ≥ a pre-agreed threshold, and OTP/route adherence meet target bands with WTi-style “Management of on Time Service Delivery” reporting. - Safety and compliance: Zero serious safety incidents, full driver and fleet compliance documentation, and working SOS/women-safety mechanisms, supported by central compliance dashboards. - Operational stability: Command-center workflows, escalation matrix, and BCP steps were actually used during the pilot and reduced firefighting, not just documented. - Cost and leakage: Cost per trip and per km are stable or improving, and billing data reconciles cleanly with trip logs and Finance, using the kind of centralized billing models and reports shown in the billing collaterals. - Repeatability: SOPs for routing, driver management, and shift briefings are written, trained, and followed in at least two locations or teams, so the model is not dependent on a single “hero” manager.

Only when these conditions are met, and when HR, Transport, Finance, and Security each sign off on their slice of evidence, does a pilot move from “promising” to “proven enough to scale” without over-committing HR or overloading Operations.

causality framing & governance for leadership credibility

Define attribution versus correlation, agree on pre/post windows and confounders, and set governance so CFOs and executives trust the analysis.

How do we manage the HR vs Finance conflict when HR wants EX-based impact stories but Finance only trusts hard metrics like OTP and cost per trip?

B0120 Resolve HR-Finance attribution conflict — In India employee transport programs, how do you handle stakeholder conflict when HR wants to attribute improvements to “employee experience” initiatives while Finance insists on hard operational metrics like OTP and cost per trip?

In Indian employee transport programs, stakeholder conflict between HR’s “employee experience” narrative and Finance’s OTP and cost-per-trip focus is best handled by designing one shared measurement framework where experience outcomes and hard ops metrics are explicitly linked instead of competing. The transport or facility head usually stabilizes this by running a command-center style operation with common KPIs, clear SOPs, and a single source of truth that both teams can defend during reviews and audits.

Most organizations reduce friction when they treat commute experience as an output of governed operations rather than a parallel initiative. HR’s commute NPS, satisfaction scores, and complaint trends sit on the same dashboard as OTP%, Trip Adherence Rate, seat fill, dead mileage, and cost per employee trip. This allows HR to show that “experience” improved after concrete levers such as routing optimization, driver training, business continuity playbooks, and centralized alert supervision were tightened. It also allows Finance to see that better experience coincides with higher fleet uptime, lower incident rates, and more predictable billing.

A common failure mode is when HR runs narrative-led “experience” programs without access to trip-level data, while Finance blocks investments because improvements are not tied to CO₂ reduction, cost per km, or SLA performance. A shared transport command center with real-time visibility, integrated HRMS rosters, and measurable safety controls gives both sides what they need. HR gets auditable proof on safety, women-centric protocols, and satisfaction lifts. Finance gets reconciled OTP and cost baselines, automated billing logic, and clean audit trails.

In practice, conflict eases when quarterly reviews are reframed around a small, agreed set of joint KPIs, for example:

- Reliability KPIs owned by Ops but reported to both HR and Finance.

- Safety and women-safety KPIs co-owned by HR and Security.

- Cost and utilization KPIs owned by Finance but explained with operational context.

Once those links are visible in one dashboard, HR can legitimately attribute improvements to “employee experience” while Finance remains comfortable that every claim is backed by operational and financial metrics that are continuously monitored and auditable.

What metric definitions do we need to lock upfront (late login, no-show, pickup OTP, gate time) so later analysis doesn’t become a definition fight?

B0121 Lock metric definitions upfront — In India corporate mobility contracting, what operational data definitions must be agreed upfront (late login, no-show, pickup on-time, gate time) so that later causality analysis isn’t derailed by arguments about metric definitions?

In India corporate mobility contracts, organizations need explicit, written operational data definitions for each trip milestone and exception before go-live. Clear, time-stamped definitions prevent blame-shifting and make root-cause analysis and penalties auditable instead of negotiable after incidents.

The core principle is that every KPI must be linked to a precise “clock” (which system time, which event), a location reference (gate, GPS point, or app event), and a responsibility boundary (vendor vs employee vs client-side infra). Ambiguity at any of these three layers later turns into disputes between HR, Transport, vendors, and Finance when reconciling OTP, billing, and safety escalations.

At minimum, the following should be contractually defined and system-implemented as fields in the trip lifecycle:

- Pickup On-Time. Define the pickup time reference (scheduled roster time vs revised time after official change). Define the tolerance band (e.g., +/– X minutes). Specify which timestamp is authoritative (driver app “arrived at pickup” event vs employee boarding time) and how GPS/device failures are treated.

- Employee No-Show. Define the exact cut-off window (e.g., driver waits Y minutes from scheduled time or arrival, whichever is later). Define required driver actions (call attempts, app status update, photo/log). Specify when the trip converts from “delay” to “no-show” and who bears cost and SLA impact.

- Late Login / Late Logout. Clarify whether “late login” is measured against shift start or contracted buffer (e.g., employee must be at gate Z minutes before shift). Define which system is the source of truth (HRMS access log vs manual attendance) and how transport-caused vs employee-caused lateness will be distinguished during RCA.

- Gate Time / Campus Time. Define “gate-in” and “gate-out” as specific GPS or access-control points. Decide whether OTP is measured to gate entry, building entry, or seat-at-desk and which is in vendor scope. Capture separate timestamps for gate arrival vs actual drop so traffic vs internal campus delays can be separated.

- Trip Start and Trip End. Define when trip start time is recorded (engine on, driver swipe, first pickup boarded, or gate exit). Define trip end as last drop, gate entry, or engine off. These anchors drive per-km billing, dead mileage classification, and utilization metrics.

- Routing & Deviation. Define “approved route” (system-generated vs transport-approved manual route). Specify what counts as a “route deviation” (distance or time threshold) and how exceptions for diversions (police barricades, road closures, monsoon detours) are logged and exempted from penalties.

- Vehicle Reporting Time. Define when a vehicle is considered “reported” for duty (arrival at client-designated point vs first pickup). This underpins standby payments, buffer fleet usage, and event/project SLAs.

- Cancellation & Cut-off. Define employee, admin, and vendor-side cancellation cut-off times and the status codes (auto-cancel, chargeable cancel, system error). This is crucial to avoid later disputes about whether a missed pickup is a no-show or an approved cancellation.

- Incident & SOS Event. Define what constitutes a “safety incident” vs a “service complaint” and the required data elements (time, location, vehicle/driver ID, trip ID, SOS trigger). This separation is essential for Safety/EHS investigation versus routine service reviews.

A robust practice is to maintain a shared “Trip Data Dictionary” between client, vendor, and command center. This dictionary should map every event code and timestamp to a business meaning, a system of record, and SLA/commercial impact. Without this shared schema, causality analysis across HRMS, transport apps, and billing becomes opinion-driven and undermines both OTP governance and outcome-based contracts.

If our sites have different shifts, gate processes, and vendor mixes, how do we design matched cohorts that aren’t biased from the start?

B0122 Matched cohorts across diverse sites — In India corporate EMS, how do you design a matched-cohort study when different sites have different shift timings, security gate processes, and vendor mixes, so the comparison is not fundamentally biased?

In India corporate employee mobility services, a matched‑cohort study remains unbiased only when cohorts are defined at the same “operational grain size” and are matched on the variables that actually drive reliability, cost, and safety. The design must therefore match at the level of shift‑window, route‑type, and operational model, not at the level of whole sites or vendors.

A common failure mode is comparing “Site A vs Site B” when Site A has different shift bands, security gate dwell, and vendor capabilities. That approach confounds core EMS outcomes such as on‑time performance, seat fill, and incident rates. A more reliable design isolates comparable commute patterns by stratifying the population first and matching only within those strata.

In practice, organizations typically stratify routes by shift window, urban context, and security process complexity. They then define matched cohorts as routes or employee groups that share similar timebands, route lengths, and gate requirements, while holding women‑safety rules, escort policies, and compliance baselines constant. Vendor mix differences are treated as an exposure variable only inside these tightly matched buckets, and outcomes such as OTP, cost per employee trip, incident rate, and complaint closure time are then compared within each bucket.

To guard against residual bias, most teams use centralized command‑center telemetry to standardize measurement definitions across sites. They also ensure consistent data capture for GPS traces, roster patterns, gate dwell times, and safety incidents before drawing any conclusions about the impact of a new routing engine, vendor mix, or EV penetration on service reliability or cost.

What are signs vendors or ops teams might game the metrics (like calling delays ‘no-shows’), and how do we catch it early?

B0123 Detect gaming in attribution data — In India corporate employee mobility, what are the operational signs that an attribution model is being “gamed” (for example, marking trips as employee no-shows to protect OTP), and how should governance catch that early?

In India corporate employee mobility, “gaming” an attribution model usually shows up as patterns that protect vendor SLAs on paper while real on-ground reliability or safety is deteriorating. Governance needs to treat OTP, no-shows, routing, and incident data as a single audit trail, not isolated metrics that vendors can manipulate.

Operational gaming often appears as abnormal label patterns in trip data. A common example is a rising “employee no-show” rate on certain routes or timebands while HR attendance data does not show corresponding absenteeism. Another pattern is trips marked “on time” even though drivers or employees report late actual arrival, which can be detected by comparing GPS traces, app check-in times, and access-control or HRMS punch-in logs. Frequent last‑minute route changes or manual overrides that conveniently restore OTP on dashboards while complaint volume or escalation calls increase is another strong signal.

Governance should design the command center, routing engine, and billing workflows so no single party can change attribution outcomes without leaving an auditable trace. The command center can run periodic route adherence audits that cross-check OTP and no-show tags against raw telematics, SOS events, and complaint tickets. HR, Finance, and Transport can review exception reports jointly so misalignment between reported OTP, billing claims, and employee feedback is surfaced early.

Practical controls include: - Threshold alerts when “no-show” or “trip cancelled by employee” rates spike for specific shifts, vendors, or locations. - Randomized route and trip audits with playback of GPS and app logs to verify the assigned attribution tag. - Outcome-linked commercials that use blended metrics such as OTP, complaint closure SLAs, and Trip Adherence Rate rather than a single OTP percentage. - Clear SOPs for when a trip can be labelled “no-show” or “cancelled” and mandatory evidence (timestamps, call logs, screenshots) stored in a central mobility data lake.

How do we share results with employees/managers using GPS and access-control data without it feeling like surveillance?

B0124 Communicate results without surveillance optics — In India enterprise mobility analytics, how do you communicate attribution results to employees and managers in a way that improves trust—especially when GPS and access-control data are involved—so it doesn’t feel like surveillance?

In India enterprise mobility analytics, trust improves when organizations frame GPS and access‑control attribution as a safety and service tool with strict boundaries, not as individual surveillance, and then prove those boundaries through transparent policies, limited metrics, and visible benefits for employees. Trust collapses when commute data is used opaquely, or when managers infer performance or discipline decisions from location traces without clear consent and governance.

Communication works best when it follows a few clear rules. Organizations should explain in plain language what trip data is captured from the employee app, driver app, IVMS, and access control systems and why it is needed for OTP, roster accuracy, women‑safety compliance, and business continuity. They should state explicitly what the data will never be used for, such as individual productivity scoring, informal attendance policing outside agreed HRMS rules, or any non‑transport purpose, and then align this with documented HSSE and compliance frameworks. This approach mirrors how centralized command centers, alert supervision systems, and safety dashboards are presented as safeguards and audit tools rather than spy layers.

Attribution dashboards for managers should be designed at the level of routes, shifts, vendors, and fleets, not at the level of named individuals unless a safety or incident investigation SOP is triggered. Employees should see their own trip history, SOS logs, and “safety proof” (for example, time‑stamped pickup/drop and escort compliance), so that the same data that protects the organization also protects the rider in case of disputes. When presenting results, transport teams should talk in terms of service KPIs like on‑time performance, seat fill, CO₂ saved through EVs, or incident‑free night shifts, rather than “who was where at what time.” This reinforces that analytics are being used to improve routing, uptime, and safety protocols, similar to how EV dashboards and CO₂ reduction reports are used for ESG and CSR transparency rather than for individual monitoring.

Clear SOPs and escalation matrices are essential. A documented process should define who can see identifiable GPS and access‑control data, under what conditions, and for how long it is retained, keeping chain‑of‑custody and auditability in mind. Safety and compliance teams should anchor communications in existing frameworks such as centralized compliance management, women‑centric safety protocols, and business continuity plans, demonstrating that data access is exception‑based and logged, not discretionary. Regular floor connects, shift briefings, and “safety first” sessions give employees a forum to ask questions about how attribution works and to see that controls like SOS panels, geo‑fencing alerts, and command centers are active safeguards.

When attribution insights are shared with leadership, they should stay aggregated and outcome‑linked. For example, organizations can show that dynamic routing during monsoon conditions improved OTP to 98%, that EV adoption reduced carbon emissions compared to diesel baselines, or that safety inspection checklists and driver training led to lower incident rates. This positions mobility analytics as part of operational excellence and ESG commitments rather than as an HR surveillance channel. Over time, consistent behavior—using data to fix routes, adjust vendor capacity, and invest in safety tools, instead of to micro‑monitor individuals—is what ultimately convinces employees and managers that enterprise mobility analytics serves their interests as much as the organization’s.

How can Internal Audit validate a claim like reduced late logins using trip logs and attendance data, without disrupting daily operations?

B0125 Audit validation without operational disruption — In India corporate ground transportation, what’s the best way for Internal Audit to validate a causality claim (like reduced late logins) using traceable trip logs and attendance records without disrupting daily EMS operations?

The most reliable way for Internal Audit to validate a causality claim such as “improved transport reduced late logins” is to run a controlled, evidence-backed time-series analysis that links immutable trip logs to HR attendance data, while keeping all checks off the live EMS workflow and using read-only data access.

Internal Audit should first treat the EMS platform and command-center stack as the system of record for trip events. Trip-level logs, GPS traces, route adherence reports, OTP% and exception tickets form the auditable evidence pack for “service reliability.” HRMS attendance and login data form the independent evidence pack for “employee punctuality.” Causality is validated only when patterns in these two data sets move together in a consistent and time-aligned manner.

The least disruptive approach is for Internal Audit to request a fixed look-back window, for example three to six months, covering “before” and “after” a transport change such as a new routing engine, EV fleet deployment, or vendor transition. Data extraction should be done via read-only dashboards or exports from the EMS command center and HRMS, without pausing or altering live rosters, routing, or driver apps.

For each day in scope, audit can construct a linked, depersonalized table with:

- a transport-side view with route ID, shift window, scheduled vs actual pickup/drop times, OTP flag, and any incident or exception code

- an HR-side view with shift code, planned start time, actual login timestamp, and late-login flag as per policy

The link key should be an anonymous employee identifier and shift date, so privacy and DPDP principles are respected while preserving analytical integrity.

Auditors can then segment the time-series into “pre-change” and “post-change” periods and compute:

- change in OTP% and Trip Adherence Rate (transport KPIs)

- change in late-login rate and average login delay (attendance KPIs)

A causal claim gains strength when improved transport KPIs and improved attendance KPIs align in time, are consistent across multiple weeks, and hold across major routes or sites, not just a single corridor.

To avoid disrupting daily EMS operations, Internal Audit should:

- use the existing command-center dashboards, deviation and service reports, and mobility data lake views as primary evidence

- align with the transport head on audit windows and sampling, so route planners and dispatch teams are not asked for ad-hoc manual data during peak shifts

- rely on already-configured indicative management reports, compliance dashboards, and ETS/EMS operation cycle logs rather than introducing new manual reporting

A common failure mode is to accept correlation at face value without checking for confounders like shift-pattern changes or HR policy tweaks. Internal Audit should therefore cross-check with HR and Transport for any parallel changes in hybrid-work rules, shift definitions, or leave policies during the same window, and explicitly document these as part of the causality assessment.

After an incident, how can HR quickly answer ‘how often does this happen?’ using joined HRMS, trip, and attendance data without contradicting ops logs?

B0126 Panic-button reporting after incidents — In India corporate EMS, when an auditor or leadership asks “How often does this happen?” after a commute incident, how can HR use the same joined datasets (HRMS + trips + attendance) to answer quickly and consistently without contradicting Operations’ incident logs?

In Indian corporate EMS programs, HR can answer “How often does this happen?” reliably only when HRMS, trip data, attendance, and incident logs are treated as one governed dataset with a shared definition of incidents and a single reporting pipeline. The core principle is that HR, Transport, and Security must read from the same “source of truth” KPI layer instead of maintaining parallel spreadsheets or ad-hoc reports.

The first requirement is a unified trip lifecycle record. Each trip needs a unique ID that is consistent across the EMS platform, the command centre dashboard, driver apps, and HR-linked systems. Incident flags, SOS activations, geo-fence violations, delays, and safety non-compliances must be attached to that trip ID rather than stored as free-text narratives. Operations incident logs then become metadata on the same record, not a separate narrative system.

The second requirement is HRMS-attendance linkage at employee-trip level. Each employee’s trip manifest should be linked to rostered shifts and actual login/logout data in HRMS. This linkage allows HR to distinguish between commute-related issues and unrelated attendance variance. It also supports repeatability, because HR can define standard ratios such as “incident rate per 1,000 trips” or “late arrivals explicitly tagged as commute-related.”

The third requirement is a canonical KPI and definition library. HR, Transport, and Security must agree on precise definitions for an “incident,” “near miss,” “safety violation,” “SLA breach,” and “commute-related late login.” These definitions must be encoded in the reporting logic, so that dashboards, management reports, and audit extracts always compute the same numbers. This reduces the risk of HR presenting softer counts while Operations shows a stricter log.

To operationalize this, organizations typically centralize observability in a command centre or NOC. Trip events, alerts, and closures are streamed into a mobility data store that also receives HRMS roster data and basic attendance fields. A governed semantic layer on top of this store then exposes agreed metrics: total trips, trips with any safety flag, SOS usage, verified women-safety escorts, on-time performance, and commute-linked late logins. HR can then answer leadership and auditors using these metrics without manual reconciliation.

A disciplined incident management workflow is also critical. Each incident should move through a standard cycle of detection, triage, escalation, investigation, closure, and preventive action, all time-stamped against the trip ID. Operations teams continue to work in their native consoles, but the status and categorization fields they update feed directly into the shared dataset. HR can then report how many incidents occurred, how many are closed, and what corrective actions were implemented, using the same numbers that Operations uses for SLA reviews.

To make this usable for HR in real time, three practical outputs are helpful:

- A standard “Commute Incident Summary” view that shows counts and rates over any selected period, segmented by site, shift band, gender, vendor, and route cluster.

- A simple filter for “safety-critical” versus “service-quality” events, so HR can immediately separate reputationally sensitive cases from routine delays.

- Drill-through capability from aggregate numbers to anonymized trip-level entries for audit discussions, while keeping personal data governed under privacy rules.

When this structure is in place, HR can respond to “How often does this happen?” with stable, repeatable metrics such as “X safety incidents per 100,000 trips in the last quarter, all investigated and closed,” instead of ad-hoc counts. Operations’ logs no longer contradict HR, because both teams are consuming different views of the same joined dataset. This reduces defensive conversations, supports audit readiness, and lets leadership see commute safety and reliability as governed, rather than anecdotal.

What review cadence works best so attribution insights actually lead to policy changes and don’t become a report nobody trusts?

B0127 Governance cadence for attribution learning — In India enterprise employee transport, what governance cadence (weekly ops review vs monthly steering committee) best supports attribution learning—so insights turn into policy changes instead of becoming a report nobody trusts?

In India enterprise employee transport, a weekly operational review paired with a monthly cross-functional steering committee creates the strongest attribution learning loop, because it separates fast, data-driven troubleshooting from slower policy and commercial decisions. Weekly reviews keep OTP, safety, and cost signals close to the ground, while monthly governance turns repeated patterns into contract terms, routing policies, or SOP changes.

A weekly cadence works best when it is transport-led and NOC-anchored. It should focus on a tight KPI set such as OTP%, Trip Adherence Rate, exception closure time, seat-fill, incident logs, and driver fatigue or absenteeism patterns. The goal of this forum is not to “explain” performance but to agree on specific corrective actions for routes, vendor shifts, driver coaching, or app workflows. Weekly reviews fail when every metric is debated and no owner or closure SLA is assigned.

A monthly steering committee is most effective when HR, Finance, Procurement, Transport, Security/EHS, ESG, and IT participate. This forum should only discuss items that have appeared multiple weeks in a row in the ops reviews. It can then safely adjust policies such as escort rules, hybrid-work routing norms, vendor tiers, EV vs ICE fleet mix, or outcome-linked commercial levers. Monthly governance fails when it re-questions the underlying data instead of using weekly evidence packs from the command center.

The most reliable pattern is:

- Weekly: command-center–driven service review with root causes, actions, and owners.

- Monthly: steering committee that only consumes pre-validated weekly trends and converts them into policy, contract, or technology roadmap changes.

What should HR leadership ask to make sure our causality analysis doesn’t expose PII or create a DPDP issue when we join HRMS and trip data?

B0128 CHRO checks for PII risk — In India corporate Employee Mobility Services (EMS), what should a CHRO ask to ensure a causality analysis won’t accidentally expose sensitive PII or create a DPDP compliance incident while joining HRMS and trip telemetry?

In India EMS programs, a CHRO should insist that any causality or correlation analysis between HRMS data and trip telemetry is designed so that the analytics team never needs raw, directly identifiable employee data and that every data join is governed by explicit legal basis, minimization rules, and technical controls aligned to the DPDP Act.

The CHRO should first ask for a written description of the exact data fields that will be pulled from HRMS and from the transport platform. The CHRO should require that the vendor or internal team justifies why each field is needed for the analysis and confirms that directly identifying fields such as full name, personal phone number, or government IDs will not be used. The CHRO should also ask whether pseudonymized or tokenized employee IDs will be used for joining HR and trip data instead of direct identity fields.