How to translate SRE mindsets into an EMS stability playbook

In the control room, you live the problem every shift—driver shortages, weather and traffic disruptions, and late pickups. This playbook converts SRE ideas into repeatable guardrails you can actually use: clear ownership, predictable escalation, and runbooks you can follow even during peak or night shifts. It’s not a demo; it’s a practical plan to reduce firefighting and keep leadership confident in reliability.

Is your operation showing these patterns?

- 2 a.m. escalation spikes with driver no-shows and unreachable vendors

- GPS/app outages create blind spots just as dispatch needs visibility

- Vendor tickets pile up with conflicting updates, delaying decisions

- Dashboards show green OTP while riders experience long ride times

- Night shift fatigue and rushed substitutions after hours

- Routes blocked or vehicle issues trigger manual fallbacks that feel chaotic

Operational Framework & FAQ

Operational guardrails and escalation discipline

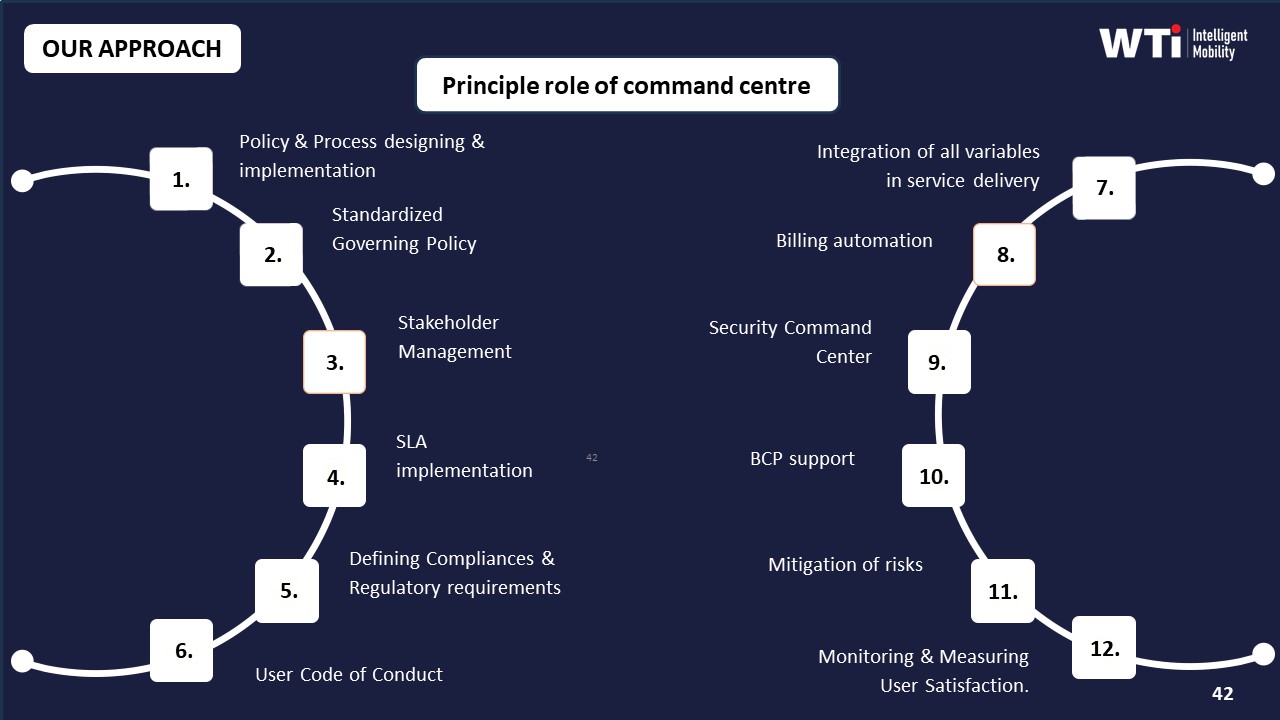

Defines who acts during outages, how to escalate, and how to recover without chaos; establishes fallback, communications, and ownership you can rely on in off-hours.

For our employee commute ops, what does an SRE mindset mean in day-to-day terms, and how is it different from our usual SLA reviews?

B1454 Meaning of SRE mindset — In India corporate Employee Mobility Services (EMS) commute operations, what does an "SRE mindset" actually mean day-to-day for transport reliability, and how is it different from a normal transport SLA review meeting?

An SRE mindset in Indian Employee Mobility Services means running daily transport like a mission‑critical system using reliability engineering, live telemetry, and incident playbooks, not just chasing monthly SLA numbers. It focuses on preventing failures, reducing impact when they occur, and continuously improving OTP, safety, and compliance with data, rather than blaming vendors after things break.

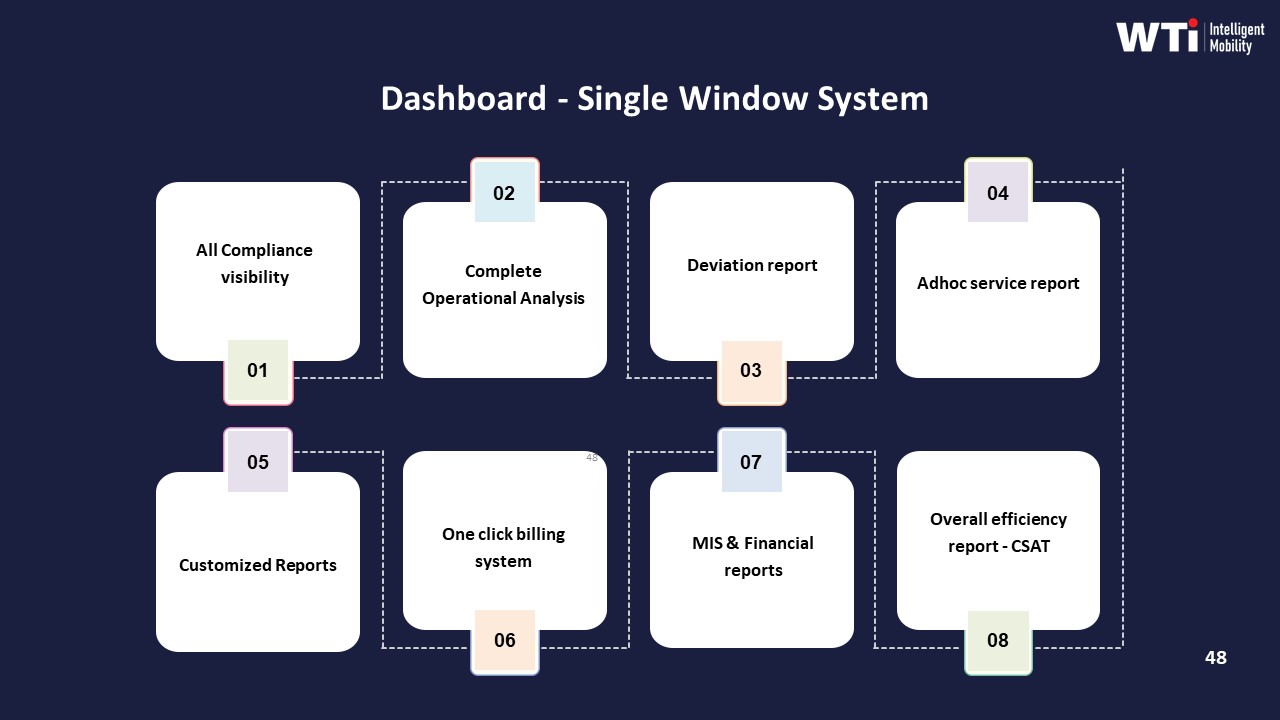

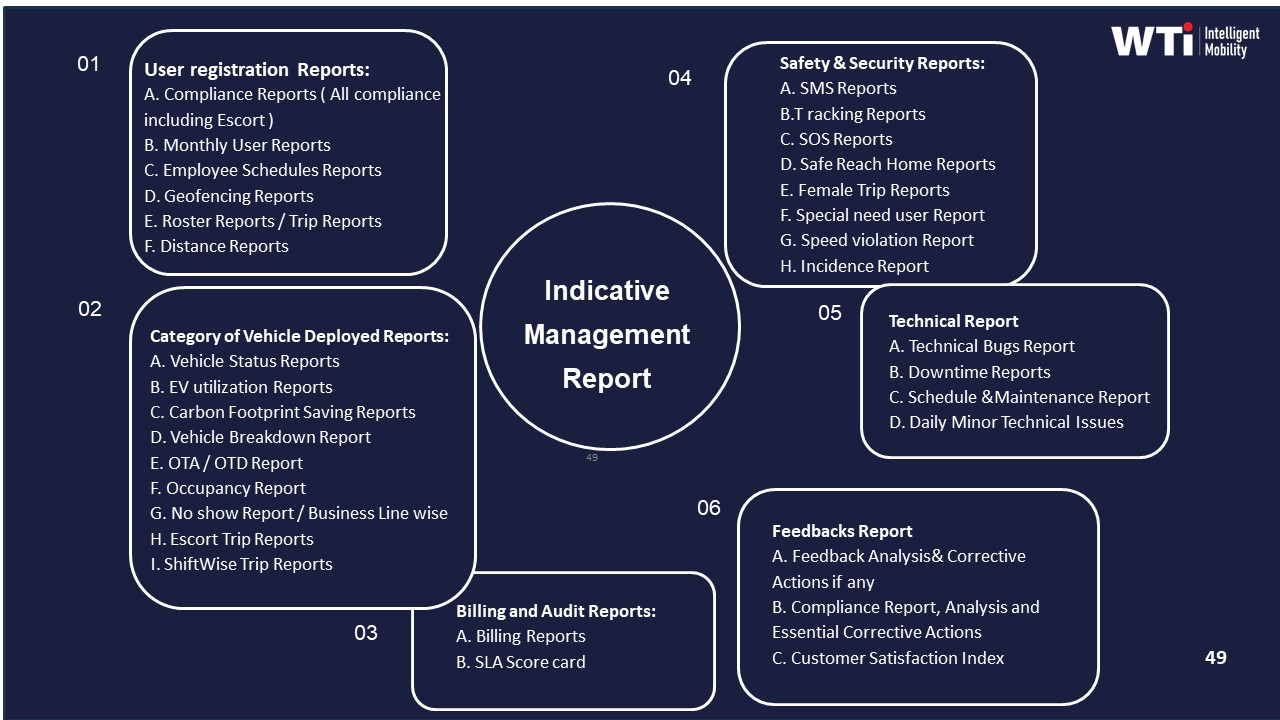

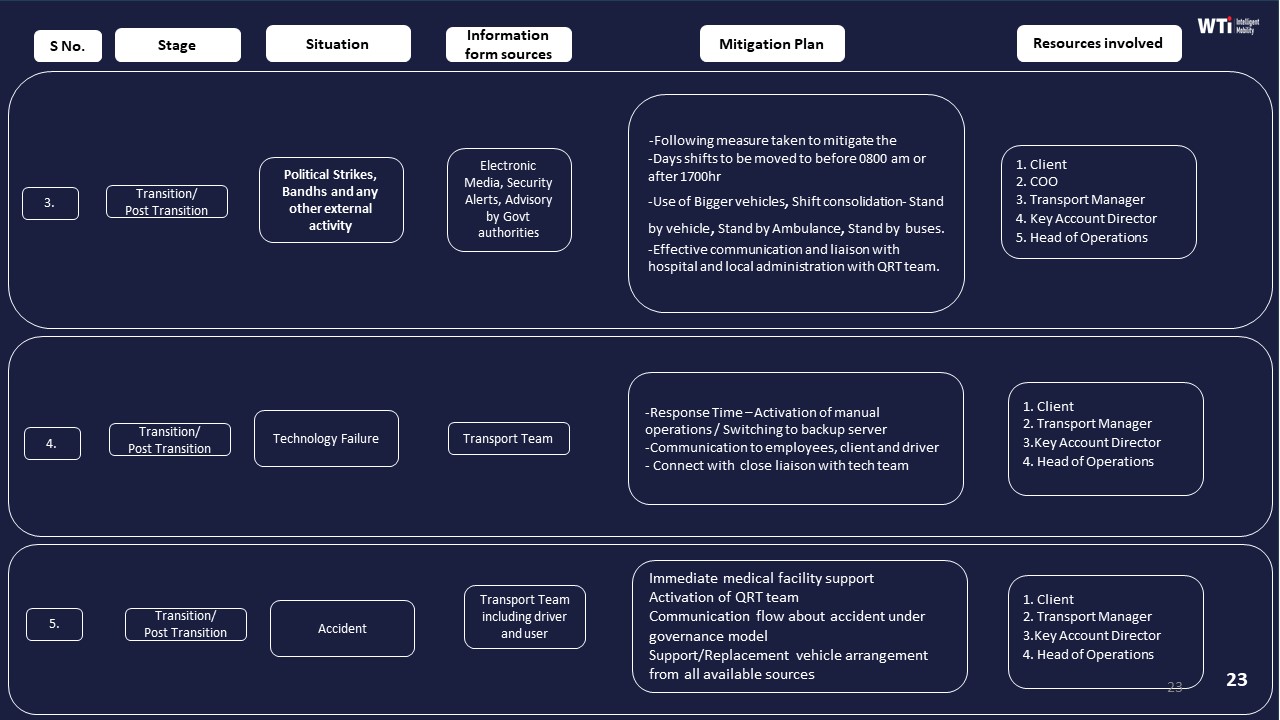

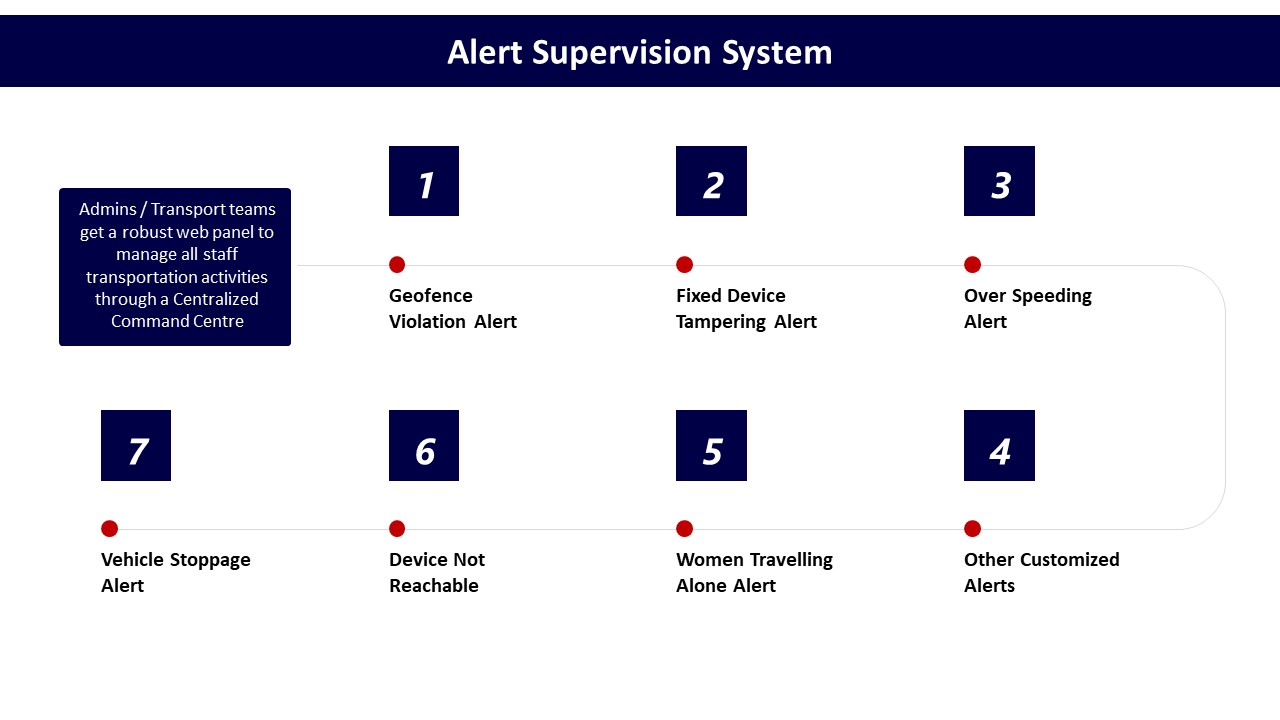

In day‑to‑day EMS ops, an SRE mindset changes how the transport or facility head, command center, and vendors work. Command center operations, alert supervision systems, and dashboards are treated as core tools to detect issues early and triage them fast. Route optimization, fleet utilization, and driver performance are tuned continuously using data‑driven insights rather than adjusted only after escalations. Business continuity plans, mitigation for technology failures, and contingency routing are rehearsed so that GPS outages, EV charging delays, or monsoon traffic do not turn into night‑shift crises.

A normal transport SLA review is retrospective and commercial. It checks OTP%, incident counts, billing disputes, and penalties once a month or quarter. An SRE mindset is operational and continuous. It asks why specific trips were late, how exception detection and closure time can be reduced, and what automation or SOP changes will prevent recurrence. A normal review focuses on whether SLAs were met. An SRE mindset focuses on making the next week more stable through change management, capacity buffers, and better observability.

For a facility or transport head, the practical differences show up as:

- Daily reliability stand‑ups using live command‑center dashboards instead of waiting for MIS.

- Runbooks for geofence violations, over‑speeding, GPS failure, or app downtime, with clear escalation matrices.

- Continuous tuning of routing, fleet mix (EV/ICE), and driver rosters based on OTP and incident data.

- Structured post‑incident reviews that change SOPs, alerts, or vendor configuration, not just “noted for future.”

An SRE mindset therefore reduces firefighting and 2 a.m. calls by designing reliability, safety, and continuity into EMS operations, while a normal SLA review mostly reports how bad the firefighting was after the fact.

Why should our transport team use SLOs and error budgets instead of only tracking OTP, and what pain does it actually solve?

B1455 Why SLOs over OTP — In India corporate ground transportation for employee commute (EMS), why would a Facilities/Transport Head adopt SLOs and error budgets instead of just tracking on-time pickup/drop, and what business pain does that shift solve?

In Indian employee commute operations, a Facilities/Transport Head adopts SLOs and error budgets to convert vague “good service” into explicit, tolerated failure ranges that can be governed, traded off, and defended. This shift reduces constant firefighting, stabilizes night-shift operations, and gives transport teams a clear control-room language to align HR, Procurement, and vendors around reliability, safety, and cost.

Traditional on-time pickup/drop (OTP) tracking is backward-looking and coarse. It shows how many trips were late, but it does not define how much unreliability is acceptable, for which routes or shifts, and at what cost. It also ignores other critical EMS dimensions like exception closure time, route adherence, safety incidents, and dead mileage, which directly affect hybrid-work elasticity, driver fatigue, and vendor performance under SLAs.

Service Level Objectives (SLOs) define reliability targets across multiple EMS KPIs. Error budgets define how much deviation from these targets is acceptable in a given period before a response is triggered. This creates predictable rules for when to relax constraints (e.g., during extreme weather or political disruption) and when to tighten operations or invoke business continuity playbooks.

This shift solves several recurring pains for the Facilities/Transport Head. It reduces subjective blame when traffic, charging gaps, or vendor constraints cause delays, because agreed error budgets and escalation matrices exist. It enables data-driven conversations with vendors under outcome-linked contracts, where payouts and penalties are mapped to SLO adherence, not just OTP snapshots. It also supports centralized command-center operations by tying alert thresholds and triage workflows to explicit error budgets instead of ad-hoc reactions.

For hybrid and EV-heavy EMS programs, SLOs and error budgets help balance reliability, fleet utilization, and ESG outcomes. A transport head can decide, for example, how much OTP degradation is tolerable to gain higher EV utilization or reduced dead mileage, and then monitor that trade-off transparently. Over time, this reduces operational noise, protects the Facilities/Transport team from 2 a.m. escalations without context, and provides audit-ready evidence that reliability and safety are being managed proactively rather than reactively.

How do runbooks help with recurring issues like late pickups or app/GPS problems, and what should a solid runbook include so night shifts aren’t dependent on one person?

B1456 Runbooks for commute incidents — In India corporate Employee Mobility Services (EMS), how do runbooks work in practice for recurring commute incidents (late pickups, no-shows, GPS/app outages), and what should a good runbook contain so the night shift doesn't depend on one heroic person?

In Indian corporate Employee Mobility Services, effective runbooks convert recurring commute incidents into predefined, stepwise responses that any night-shift coordinator can execute. A good runbook specifies clear triggers, roles, time-bound actions, and communication templates so late pickups, no-shows, and GPS or app outages are handled predictably, not via individual heroics.

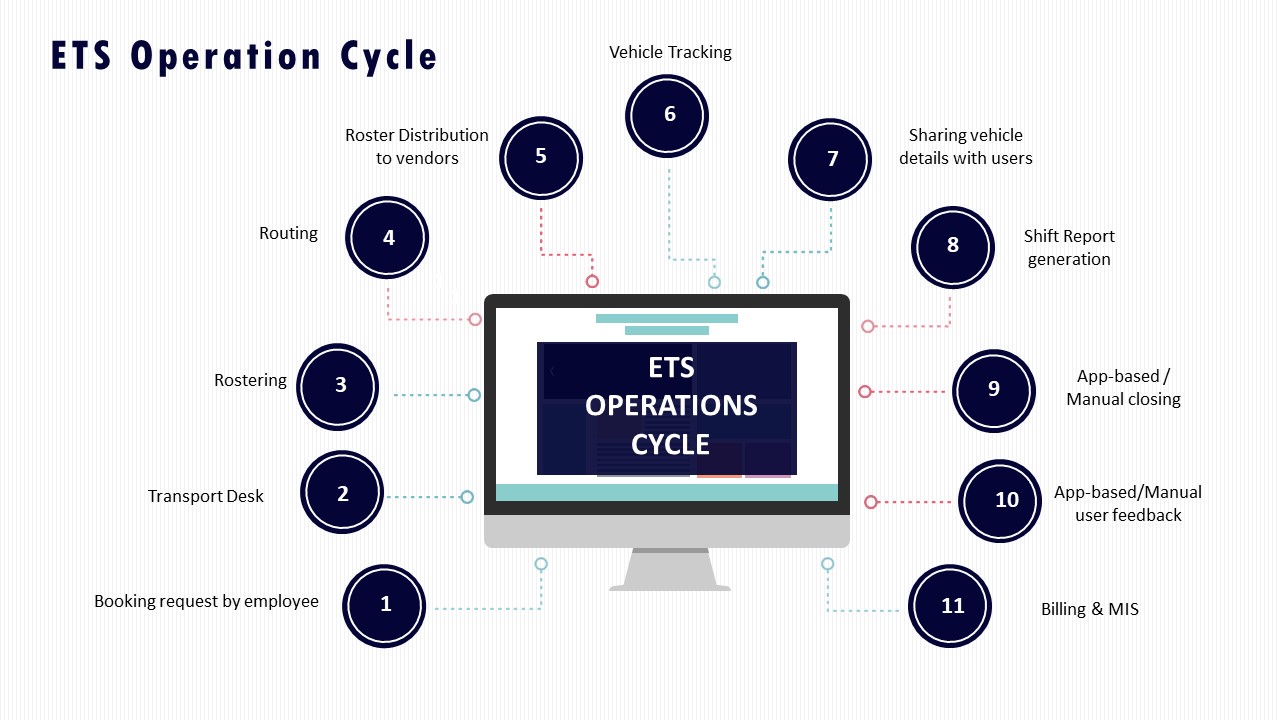

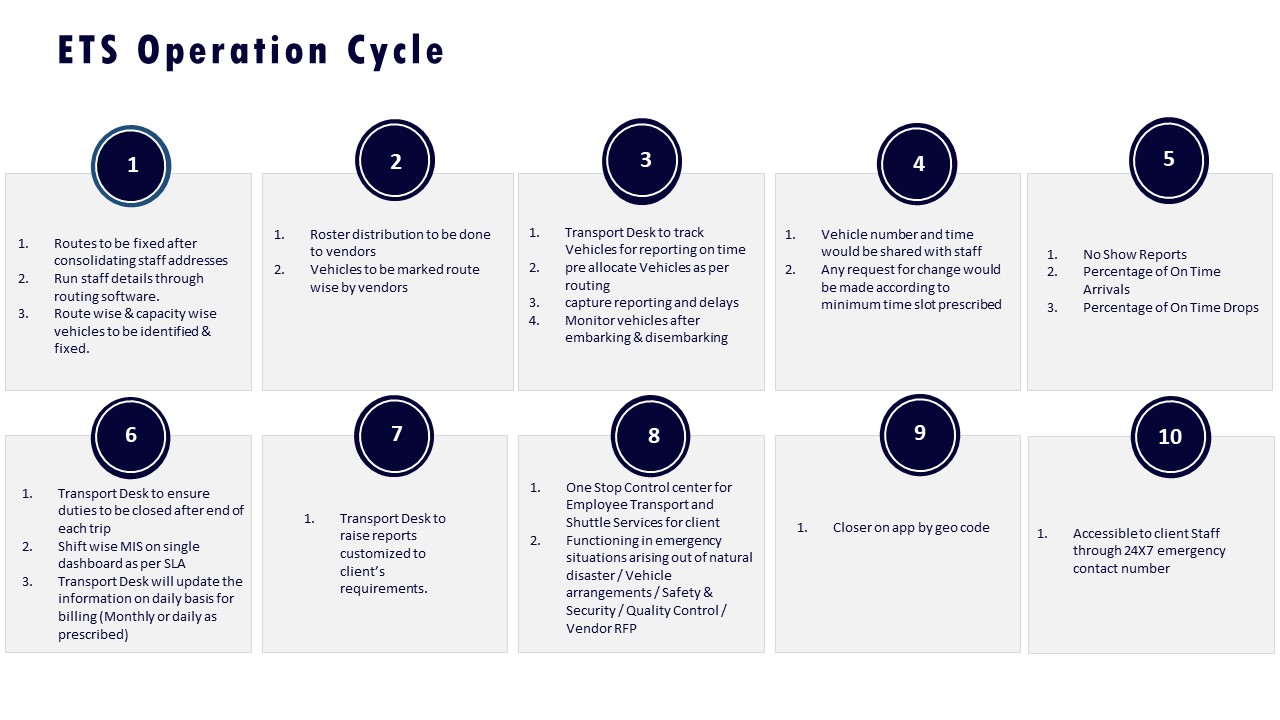

In practice, runbooks sit between the command center SOPs, the EMS operation cycle, and the escalation matrix. Operations teams use real-time dashboards, alert supervision systems, and transport command centres to surface incidents, then follow the runbook to triage and close each case within defined SLAs. A common failure mode is a runbook that describes “what should happen” but not “who does what in which minute,” which forces the night-shift lead to improvise.

A robust EMS incident runbook for recurring issues should include:

- Precise triggers per scenario. Example: “Pickup delay >10 minutes vs ETA,” “driver not reachable for 5 minutes,” “vehicle icon not moving for 8 minutes,” “app downtime > 5 minutes for >10% users.”

- Role-by-role actions. Specific steps for transport desk, vendor supervisor, command center operator, and, when needed, security/EHS.

- Minute-by-minute response timelines. For late pickup: T+0 detection, T+5 driver contact attempts, T+10 backup dispatch, T+15 mandatory escalation, T+20 alternative arrangement rule.

- Communication scripts. Short, pre-approved SMS/IVR/app message templates for employees, team managers, and security for each state change.

- Decision trees and fallbacks. For example, when GPS fails, the runbook must define how to switch to phone-based location checks, how often to call, and when to downgrade to manual trip sheets.

- Escalation matrix linkage. Clear thresholds for when to push from desk-level to duty manager, vendor owner, or security, aligned to the documented escalation mechanism and MSP governance structure.

- Safety overlays. Different paths for women night-shift routing, missed check-ins, or lone-traveller incidents, aligned with women-centric safety protocols, chauffeur controls, and SOS processes.

- Data capture and closure. Mandatory fields for incident logs, including timestamps, root cause tags, and closure notes, feeding into management reports and data-driven insights.

Typical runbook sections for the three common scenarios:

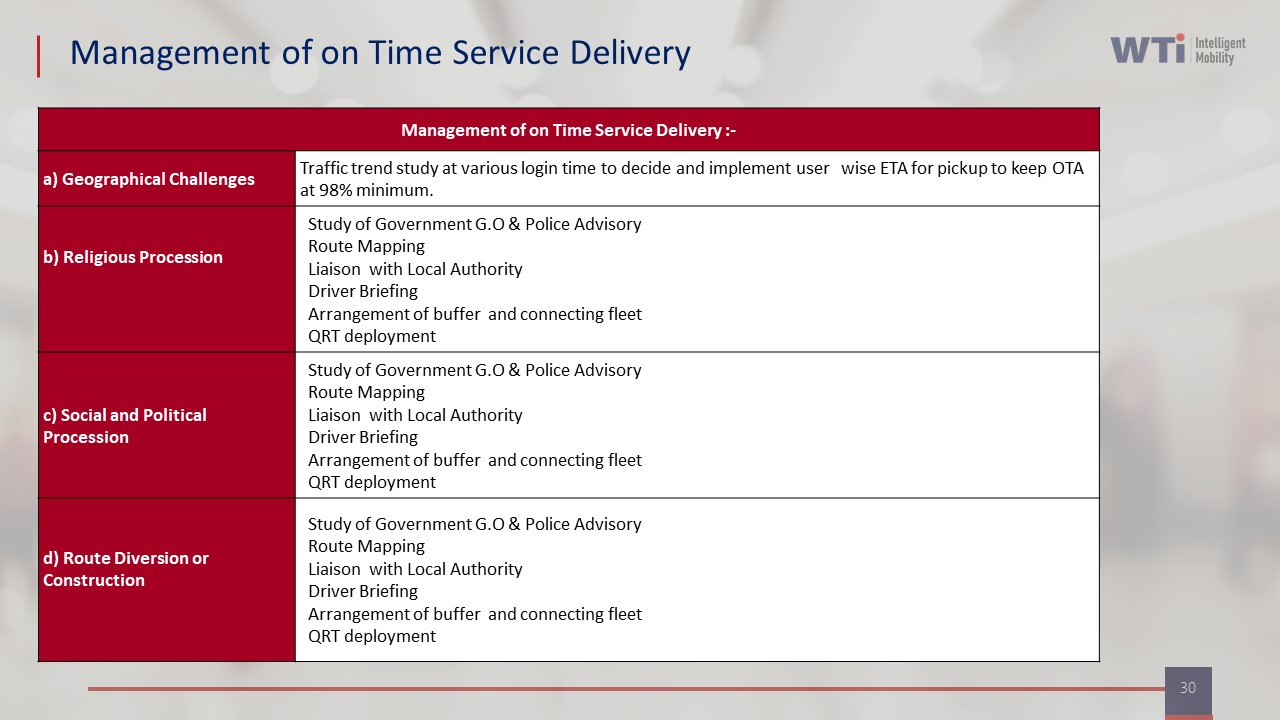

- Late pickups and delays. OTP thresholds, backup vehicle pools or standby cars, dynamic re-routing rules, and coordination with HR or team managers when shift start is at risk. This aligns with “Management of on Time Service Delivery” practices and the ETS operation cycle.

- No-shows (driver or employee). Rules for wait time, verification steps, photographic or IVR evidence, re-routing of the remaining roster, and billing/penalty logic mapped back to centralized billing SOPs.

- GPS / app outages. Switchover SOP to manual mode, use of call-center scripts, SMS-based OTP and trip confirmation, and later reconciliation with telematics data once systems recover.

A good runbook also ties into the business continuity plan and contingency slides so that during larger disruptions (strikes, heavy rain, partial tech failure), the same night team can follow pre-agreed playbooks instead of escalating everything to leadership. When runbooks are explicit, tested in drills, and wired into dashboards and alert systems, the “2 a.m. shift” depends on predictable systems and buffers, not on one exhausted coordinator’s memory.

Beyond OTP, what SLOs should we track—like response time or time-to-restore—and how do we pick measures that people can’t game?

B1457 Selecting meaningful commute SLOs — In India corporate employee transport (EMS), which service-level objectives (SLOs) are most meaningful for live commute reliability beyond on-time pickup/drop—like incident response time, escalation latency, or "time to restore" after breakdowns—and how do you choose ones that won't be gamed?

In Indian corporate employee transport, the most meaningful SLOs for live commute reliability measure how fast operations detect, respond to, and close disruptions, not just how often cabs are on time. Strong SLOs focus on detection time, escalation latency, incident response and “time to restore” normal service, with definitions that are tightly scoped, auditable, and hard to game.

The core live-reliability SLOs usually build around a few operational moments. Detection SLOs measure the maximum allowed delay from a real-world issue occurring to the command center flagging it, using GPS, missed-OTP, geo-fence or no-movement alerts. Escalation SLOs measure how long the command center can take to escalate an exception from first detection to the responsible role on the vendor, transport desk, or security side. Response SLOs track how quickly a human takes control after escalation, such as calling the driver, rider, or dispatching a backup. “Time to restore” SLOs measure how long it takes to get every impacted employee either en route or safely rescheduled after a breakdown, accident, or extreme delay.

These SLOs become credible when they are defined on top of immutable trip logs, standard incident codes, and independent command-center dashboards. A common failure mode is defining SLOs only on self-reported vendor data, which encourages under-reporting or late tagging of incidents. Another is tracking only averages, which allows chronic outliers that ruin night shifts but do not breach the mean.

To make SLOs hard to game, organizations should anchor them to data that is generated automatically from telematics and apps, not only from manual status changes. They should define clear inclusion criteria such as “all trips that cross X minutes delay from ETD or lose GPS for Y minutes are auto-classified as exceptions.” They should measure both median and tail performance, for example “95% of safety incidents acknowledged within 2 minutes” and “99.5% of trips have no unacknowledged SOS beyond 60 seconds.” They should combine live SLOs with audit mechanisms such as random route adherence checks, command-center quality sampling, and cross-checks against HRMS attendance or no-show patterns.

Over time, organizations select the most useful SLOs by linking them directly to shift adherence, safety incidents, and escalation noise reaching HR and senior leadership. The SLOs that matter are the ones that reduce 2 a.m. firefighting and still stand up under audit.

How do we set an error budget for late or missed trips across shifts, without it turning into a blame tool for coordinators or vendors?

B1458 Error budgets without blame — In India corporate EMS commute operations, how should an Operations/NOC lead set an error budget for late pickups or missed drops across shifts, and how do you prevent the error budget from becoming a tool for blaming individual coordinators or vendors?

In Indian corporate EMS operations, an Operations or NOC lead should set an explicit, shift-wise error budget for late pickups and missed drops that is tied to overall OTP targets, route risk, and demand variability, not to individual coordinators or single vendors. The error budget should be treated as a shared system capacity for failure that drives process improvement and routing changes, rather than as a quota for punishing people.

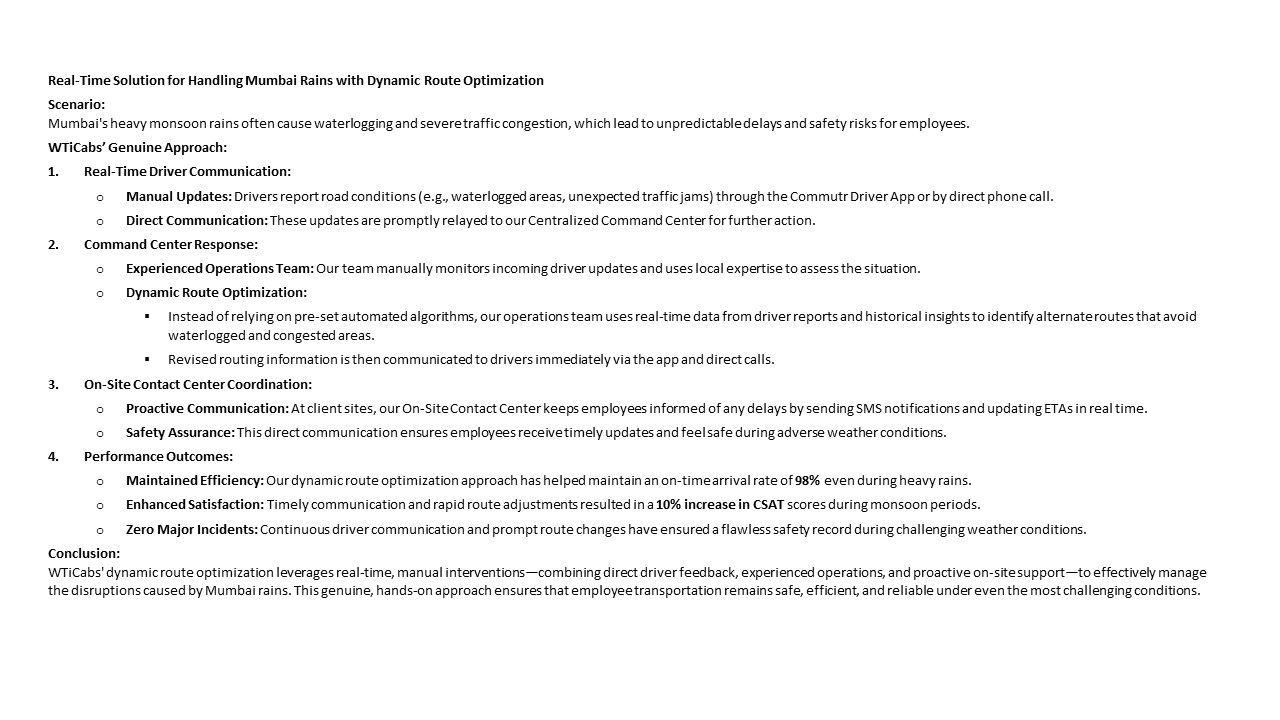

A practical pattern is to start from a top-line OTP target that leadership accepts as “good enough” for reliability and shift adherence. Many mature EMS programs target 98% on-time arrivals across routes and timebands. The residual 2% can be treated as the total error budget for late pickups and missed drops combined. The Operations or NOC lead can then slice this 2% by shift window, criticality level, and root-cause category. Night shifts, women-first routes, and critical production shifts should be given stricter sub-budgets, because safety and business-continuity risk is higher in these windows. Day-shift and low-risk routes can tolerate slightly higher variance. High-disruption periods like monsoon or known city events should have pre-agreed temporary adjustments with explicit mitigation plans, as seen in WTicabs’ monsoon-routing case where dynamic re-routing supported a 98% on-time arrival rate even under adverse conditions.

Error budgets remain healthy when most exceptions map to known, controlled causes such as extreme weather, law-and-order issues, or major infrastructure failures. Error budgets become a red flag when a growing share of exceptions cluster in controllable buckets such as routing quality, driver allocation, vehicle readiness, app failure, or poor command-center response. The NOC should categorize every late pickup and missed drop into operational root causes tied to routing, fleet, driver, technology, or external conditions. This allows the error budget to guide where to act, rather than who to blame.

To prevent the error budget from turning into a personal or vendor-blame instrument, the NOC lead should embed three structural safeguards. First, performance reviews should focus on trend lines and systemic patterns across time, not on isolated incidents. Coordinators and vendors should be assessed on how quickly they detect and recover from exceptions, and how they contribute to route optimization, driver training, and preventive maintenance. Second, accountability should be layered through a clear escalation matrix and a command-center governance model so that no single individual is seen as the “owner” of every failure. Combined central and site command centers, as described in WTi’s MSP governance structure, show how responsibilities can be distributed between central supervision and local on-ground response.

Third, the error budget should be integrated with continuous-improvement loops, not with incident-driven punishment. Real-time dashboards, such as those used for CO₂ tracking and operational visibility in EV fleet programs, can be extended to show OTP% by shift, route, and root cause. Weekly NOC reviews should focus on which process changes, routing rules, or vendor adjustments reduce future consumption of the error budget. This reframes late pickups and missed drops as signals for redesigning routing logic, boosting standby capacity, or tightening driver and vehicle induction protocols, instead of as reasons to penalize individuals. Over time, the error budget becomes a stabilizing tool for the Operations and NOC team, helping them reduce firefighting, manage high-risk shifts more calmly, and demonstrate to HR and leadership that commute reliability is controlled through governed processes rather than reactive blame.

If our data is split across vendors and spreadsheets, what’s a practical way to baseline reliability—OTP, exceptions, incidents, and time-to-resolve—so we know what’s broken?

B1459 Baseline reliability with messy data — In India corporate ground transportation for employee commute (EMS), what is a practical way to baseline reliability today (OTP, exception rate, incident frequency, mean time to resolve) when data is fragmented across vendor apps and manual registers?

A practical way to baseline reliability with fragmented data is to define a single “trip ledger” for the enterprise, then backfill it weekly from all available sources using a light, SOP-driven process, instead of trying to fix every system first. The baseline becomes a governed spreadsheet or database that standardizes trip IDs, timestamps, and exceptions across vendor apps, GPS exports, and manual registers.

The first step is to lock the reliability definitions before touching data. Operations teams need a simple, written one-pager that defines what counts as On-Time Performance, what is tagged as an exception, what constitutes a safety or service incident, and what “resolved” means for Mean Time to Resolve. This reduces arguments later when HR, vendors, or Security look at the same numbers.

The next step is to nominate a weekly “trip ledger owner” within the transport or command-center team. That person should receive exports from vendor apps, GPS tools, and Excel logs from sites, then normalize them into a common schema. The schema should minimally include trip date, site, vendor, vehicle, employee count, planned vs actual pickup time, exception flags, incident flags, and closure timestamps.

Once that schema is stable, organizations can calculate OTP as on-time trips divided by total trips, exception rate as trips with any operational deviation, and incident frequency as safety or service incidents per 1,000 trips. Mean Time to Resolve can be calculated from first logged time of an incident to its documented closure in the ledger, even if initial inputs were emails or phone calls.

A simple control-room style SOP keeps this manageable. Transport heads can fix a weekly cut-off, run spot checks against raw logs, and review outliers with vendor supervisors. Over time, this ledger becomes the single source for SLA discussions, vendor scorecards, and compliance audits, even while integration with HRMS, NOC dashboards, or command centers matures in parallel.

How do we run post-incident reviews without it becoming vendor-bashing or blaming HR, and what data do we need to make it action-oriented?

B1460 Blameless post-incident reviews — In India corporate Employee Mobility Services (EMS), how do post-incident reviews (PIRs) work without turning into a vendor-bashing or HR-blaming session, and what evidence (trip logs, call records, GPS traces) is typically needed to make the PIR action-oriented?

Post-incident reviews in Indian Employee Mobility Services work best when they are run as a governed, evidence-led process owned by a cross-functional group, not by any single vendor or HR. A structured PIR uses verified trip data, GPS traces, and call/chat logs to reconstruct the trip lifecycle and then assigns corrective actions to transport, vendor, security, and HR based on where controls actually failed.

A common failure mode is an unstructured review that starts with blame. That usually happens when the command center does not have a clean trip ledger, when GPS data is fragmented across vendors, or when there is no clear escalation matrix. In those cases, people argue over narratives instead of checking a unified trip record, and PIRs tend to stall on “who is at fault” rather than “which control broke”.

An effective PIR in EMS usually pulls data from the command center tools, alert supervision systems, and mobility apps. Typical evidence includes trip creation and approval records from the EMS platform, GPS traces and route adherence reports from telematics or IVMS, and event timelines from SOS or geofence alerts. Call-center or helpdesk logs, driver app manifests, and employee app check-in or OTP data help validate timestamps, while compliance dashboards confirm whether driver KYC, vehicle fitness, escort rules, and women-safety protocols were in force.

To stay action-oriented, the PIR bench-marks the incident against defined SLA and safety KPIs such as on-time performance, route adherence audit scores, incident response time, and escort compliance. Responsibilities are then tied to specific control gaps, like roster design, routing decisions, driver fatigue management, command-center escalation, or vendor governance, rather than to broad categories like “HR” or “the vendor”. A follow-up cadence, often run through a command-center governance model, tracks whether routing rules, SOPs, and tech configurations were updated so the same pattern of failure does not repeat.

What early signals predict a 2 a.m. escalation, and how do we monitor them without flooding coordinators with alerts?

B1461 Early warnings without alert fatigue — In India corporate EMS commute operations, what are the early warning signals that predict a 2 a.m. escalation (driver no-show, app latency, route deviations), and how should an SRE-style monitoring approach reduce coordinator cognitive load rather than increase alerts?

In Indian corporate employee mobility operations, the most useful early warning signals are those that show a pattern shift before a driver no‑show, app failure, or route deviation reaches employees. An SRE‑style monitoring approach should therefore track a small, opinionated set of “golden signals” tied to OTP, safety, and app reliability, and should auto‑correlate and suppress noise so that coordinators only see 5–10 prioritized incidents per shift, not hundreds of raw alerts.

Early warning signals for a 2 a.m. escalation typically appear 30–90 minutes earlier at the system, fleet, and roster levels. Repeated driver app reconnects on poor networks, rising ETA variance on a specific corridor, or an unusual spike in unassigned or re‑routed trips in the routing engine often precede visible no‑shows and deviations. A sudden increase in manual overrides by coordinators and last‑minute roster edits is also a strong predictor that the system is trending into a reactive mode.

An SRE-inspired monitoring design should treat the transport command center like a production NOC. It should define 4–6 shift‑level SLOs such as OTP%, trip adherence rate, routing engine latency, and driver app uptime, and trigger incidents only when these breach pre‑set thresholds by corridor, vendor, or timeband. The command layer should absorb raw GPS, HRMS, and app telemetry, group related failures into one incident, and attach a playbook step list so coordinators execute a known SOP instead of improvising under stress.

To reduce cognitive load rather than increase alerts, monitoring should focus on a few practical patterns:

- Turn many low‑level metrics into one health score per route cluster or vendor for each shift window.

- Escalate early only when trends persist across multiple trips or drivers, not for single blips.

- Route non‑critical anomalies into summary reports while keeping the live dashboard for only action‑requiring events.

A transport head gains operational calm when the command center surfaces only those incidents that can still be influenced before employees are affected, while the system silently logs everything else for SLA, billing, safety, and ESG reporting.

How can SLOs and runbooks help me defend our transport performance when leadership asks why an incident happened and what we changed?

B1462 Defensible performance for leadership — In India employee transport (EMS), how can a Facilities/Transport Head use SLOs and runbooks to protect job security—i.e., make performance defensible when leadership asks, "Why did this incident happen and what changed so it won't repeat?"

In India employee transport, a Facilities/Transport Head protects job security by converting messy daily operations into explicit Service Level Objectives (SLOs) and pairing them with clear, tested runbooks. This creates a defensible record that incidents were anticipated, monitored, and handled according to agreed standards.

SLOs give leadership a shared definition of “good enough” for EMS. Typical SLOs cover on-time performance, exception detection-to-closure time, route adherence, safety incident rate, and driver or fleet compliance. These SLOs should be aligned to business context such as shift windowing, women’s night-shift policies, and business continuity expectations documented in continuity plans. When an incident occurs, the Transport Head can show whether the relevant SLO was breached, how quickly it was detected through command-center monitoring, and what mitigation was triggered.

Runbooks translate SLO breaches into predictable actions. For example, there can be specific runbooks for GPS failures, cab shortages, political strikes, monsoon disruptions, or app downtime, similar to the documented business continuity and mitigation plans. Each runbook defines triggers, steps, escalation paths, and communication templates, and is wired into command center operations, alert supervision systems, and escalation matrices.

To make performance defensible, the Transport Head needs three practices:

- Anchor SLOs and runbooks in formal governance, escalation, and BCP documents, so they are approved expectations, not ad-hoc reactions.

- Use dashboards, alert systems, and incident logs to prove detection, response times, and closure actions for every material event.

- Continuously update SLOs and runbooks after each root-cause analysis, so leadership sees a closed loop from incident to structural change.

When leadership asks “Why did this incident happen and what changed?”, the Transport Head can walk through the relevant SLO, show the timestamped incident record from the command center or transport control centre, the executed runbook steps, any gaps found, and the updated SOP, routing, or vendor-compliance rule. The narrative becomes “this is how our designed system behaved and here is how we have raised the guardrail,” rather than a personal defence based on memory.

This approach directly supports centralized command-center models, business continuity playbooks, and safety and compliance frameworks already common in Indian EMS, and it shifts accountability from individual heroics to governed, auditable process.

How do we align HR’s safety/experience goals with Finance’s cost pressure when SRE practices look like they add cost?

B1463 HR vs Finance on reliability cost — In India corporate ground transportation (EMS), how do you reconcile HR’s focus on employee experience and safety with Finance’s demand for cost control when SRE practices (extra monitoring, redundancy, on-ground support) appear to increase costs?

In Indian employee mobility (EMS), organizations reconcile HR’s safety and experience priorities with Finance’s cost pressure by treating SRE-style investments (monitoring, redundancy, on‑ground support) as cost re-allocation that improves reliability, reduces leakage, and prevents high‑severity risk rather than as pure cost add-ons. The shift is from “more spend on transport” to “more predictable unit economics and lower incident risk per trip.”

Most enterprises start with fragmented vendors, manual rostering, and reactive firefighting. This creates hidden dead mileage, poor On‑Time Performance, and escalations that hit HR’s safety narrative and Finance’s CET/CPK targets. SRE-like practices in EMS—24×7 command center supervision, alert systems, defined escalation matrices, and business continuity playbooks—directly improve OTP, incident response, and auditability, which are shared KPIs across HR, Finance, and Security. The Industry Insight Summary frames this as moving from manual control to “Centralized NOC & Observability” and “Assurance by design,” where exceptions are surfaced early and closed faster.

From a Finance perspective, these controls are justified when they are tied to measurable outcomes such as reduced SLA breach rate, lower incident rate, and better Vehicle Utilization Index and Trip Fill Ratio. Cost control then focuses on total cost of ownership—cost per employee trip plus the avoided cost of disputes, audits, and serious incidents. Outcome-linked contracts and analytics (for example, dead mileage reduction and vendor rationalization) allow SRE capabilities to pay for themselves over time by cutting waste and improving transparency. HR gains a defensible safety and experience story with audit-ready evidence, while Finance gets predictable, explainable numbers rather than recurring “exception” spend.

What review cadence actually reduces incidents—daily, weekly, monthly—and how do we avoid adding meetings that don’t improve reliability?

B1464 Cadence for continuous improvement — In India corporate EMS commute operations, what governance cadence actually works for continuous improvement—daily ops review, weekly trend review, monthly SLO/error budget review—and how do you avoid creating a meeting-heavy process that still doesn’t reduce incidents?

In Indian corporate employee mobility operations, a three-layer cadence works best for continuous improvement. Daily operational huddles keep shifts stable, weekly trend reviews catch patterns, and a monthly service-level and error-budget review realigns contracts and routing. Each layer only works when it is tightly scoped to specific data, clear ownership, and decision rights, or the process becomes meeting-heavy without reducing incidents.

Daily reviews are most effective when they behave like a control-room stand-up rather than a long meeting. Operations teams should focus on the last 24 hours of On-Time Performance, exception closure time, and critical safety deviations surfaced by the command center or alert supervision system. A common failure mode is mixing long-term discussions into these daily huddles, which dilutes focus and increases fatigue for the facility or transport head.

Weekly trend reviews work when they stay analytical and cross-functional. Transport, HR, and Security can study route adherence audits, Trip Adherence Rate, driver fatigue signals, no-show clusters, and complaint themes from rider apps. This weekly lens is where continuous improvement sprints, routing tweaks, and driver coaching actions are agreed. A common failure is turning these into status updates instead of decisions tied to measurable changes in OTP or incident rate.

Monthly SLO and error-budget reviews are the right place for Procurement, Finance, and ESG leads. These sessions should reconcile SLA breaches, cost per employee trip, EV utilization ratios, and carbon-abatement metrics with billing, penalties, and incentives. Most organizations overload this meeting with operational detail and vendor storytelling. The meeting works only if it stays contractual and KPI-driven, using standardized dashboards, audit trails, and command-center reports.

To avoid a meeting-heavy culture that still fails to reduce incidents, organizations need three guardrails. Each recurring meeting must have a single owner, a fixed metric set, and predefined decisions that can be taken in that forum. Incident prevention actions must be time-bound and traced back to subsequent KPIs so that repetition is avoided. Finally, command-center tooling and automated alerts should absorb routine variance so that human meetings focus only on exceptions that truly need escalation or design change.

Who should own SLOs, runbooks, and post-incident reviews—transport, HR, vendor NOC, or IT—and what RACI prevents night-time gaps?

B1465 RACI for SRE-style operations — In India corporate Employee Mobility Services (EMS), what roles typically own SLO definitions, runbook upkeep, and post-incident reviews—Facilities/Transport, HR, vendor NOC, or enterprise IT—and what RACI prevents gaps when incidents happen at night?

In Indian corporate Employee Mobility Services, Facilities or Transport teams usually own day-to-day SLO definitions and runbooks, while HR owns safety and experience policies, the vendor’s NOC runs live monitoring and first response, and Security/EHS and sometimes IT provide oversight and evidence for post-incident review. A clear RACI that anchors operational ownership with Transport but formalizes HR, Security/EHS, and vendor roles is what prevents gaps when incidents occur at night.

Facilities or Transport heads act as the internal “operations command center” for EMS. They are responsible for shift-aligned routing, daily reliability, SLA-bound delivery, and command-center operations. They therefore typically lead SLO setting for OTP, route adherence, and exception closure, maintain runbooks for routing, escalation, and vendor coordination, and chair or co-chair operational post-incident reviews.

HR owns the duty-of-care narrative and women-safety policies. HR is accountable for ensuring commute safety, night-shift provisions, and employee experience, and participates in post-incident reviews to address trust, policy gaps, and communication back to employees and leadership.

The vendor’s centralized NOC or command center runs real-time monitoring, alerts, and escalation workflows. The NOC is responsible for following the agreed runbooks, logging incidents and responses, and providing auditable trip and GPS data into post-incident reviews.

Security or EHS leads own safety compliance and incident governance. They are accountable for safety-by-design controls, HSSE compliance, and reconstructing incidents from evidence, so they should co-own SLOs for safety (incidents, escort compliance) and lead safety-focused RCAs.

Enterprise IT guards data, integration, and uptime. IT is usually consulted for SLOs on platform availability, data retention, audit trails, and privacy but is not the operational owner of transport incidents. IT supports post-incident reviews where data, logs, or integrations are implicated.

A practical night-shift RACI that reduces gaps looks like this:

- Responsible (R): Vendor NOC for real-time detection, first-line containment, and notifications; Transport/Facilities for on-ground coordination, rerouting, and immediate continuity actions.

- Accountable (A): Transport/Facilities head for operational SLO adherence and runbook execution; HR for duty-of-care outcomes and employee communication on safety incidents; Security/EHS for safety compliance and final RCA sign-off on safety-related cases.

- Consulted (C): HR, Security/EHS, and IT for SLO design, runbook updates, and complex incident reviews that touch on safety, policy, or data integrity.

- Informed (I): Senior leadership, Procurement, and CFO for major incidents, SLA breaches, or patterns that affect contracts or risk posture.

When this RACI is explicit for EMS, with Transport owning the “2 a.m.” operational response and the vendor NOC bound to clear escalation SLAs, organizations reduce ambiguity, close safety and compliance gaps, and make post-incident reviews evidence-driven rather than blame-driven.

Where can SLOs look green but employees still complain, and how do we design SLOs that match real commute experience?

B1466 Green metrics, bad experience — In India corporate employee commute operations (EMS), what are the common failure modes where SLOs look green but employees still complain (e.g., punctuality met but long ride time, poor escalation handling), and how do you design SLOs to reflect lived experience?

In Indian corporate employee commute operations, SLOs often look “green” when they track only vendor-centric metrics like on-time pickups or trip completion, while employees judge the commute on total journey effort, perceived safety, and how issues are handled in real time. A more accurate design links SLOs to the full trip lifecycle, including routing quality, safety controls, communication, and complaint closure, rather than just punctuality or kilometers.

Common failure modes emerge when routing and operations are optimized for cost or SLA optics instead of lived experience. Route planners can hit high seat-fill and OTP while creating excessively long routes and detours, leading to fatigue and dissatisfaction. Centralized command centers can show good GPS uptime and trip adherence even when escalation handling is slow or fragmented. Safety and compliance dashboards can show “all clear” if they measure document validity but not escort rules, women’s night-shift protocols, or SOS responsiveness.

Better SLOs tie directly to how employees experience each shift. Organizations can define separate targets for door-to-door commute time bands, not just route start OTP. They can measure Trip Adherence Rate for route stability, not only whether a cab eventually arrived. They can track complaint closure SLAs and incident latency from alert to action, alongside safety metrics like incident rates and audit trail integrity. Integrating HRMS and feedback data allows commute SLOs to correlate with attendance, attrition, and Commute Experience Index, making “green” dashboards reflect reality instead of only operational inputs.

How do we use GPS/app/coordinator telemetry for reliability without it feeling like surveillance, while still staying audit-ready for incidents?

B1467 Telemetry without surveillance culture — In India corporate ground transportation (EMS), how do you prevent SRE-style telemetry (GPS traces, app events, coordinator actions) from feeling like "Big Brother" surveillance for coordinators, drivers, and employees while still being audit-ready for incidents?

In Indian employee mobility programs, telemetry works when it is framed as safety-and-reliability tooling with clear limits, not as people-surveillance, and when those limits are documented, communicated, and technically enforced while still preserving incident-ready audit trails.

Most organizations reduce the “Big Brother” perception by explicitly separating what is monitored for safety and SLA assurance from what is not monitored about individual behavior. Telemetry such as GPS traces, app events, and coordinator actions is positioned as evidence for on-time performance, route adherence, SOS readiness, and women’s safety compliance, not as a productivity or disciplinary tool. This orientation is reinforced through written policies, driver and employee induction, and HSSE briefings that explain purpose, scope, and retention in plain language.

Audit readiness is maintained by treating telemetry as a structured “trip ledger” rather than a live feed on people. Organizations retain time-bounded trip data with chain-of-custody for specific use cases such as incident reconstruction, compliance audits, and SLA disputes. Access to raw traces is restricted through role-based controls so that command-center teams see what is needed for live operations, while detailed historical data is exposed only to designated safety, HR, or audit stakeholders under defined SOPs.

Practical guardrails that reduce surveillance anxiety include limiting tracking to rostered duty windows, disabling or obfuscating precise location outside trips, aggregating metrics for dashboards, and using telemetry primarily to power routing, exception alerts, and EV uptime rather than individual scoring. A common failure mode is silent data expansion, where new fields or longer retention are added without updating policies or communication; this quickly erodes trust even if the technical intent is benign.

Early involvement of Transport, HR, and Security in defining “acceptable monitoring” standards typically improves adoption. Driver and coordinator training that links telemetry to concrete benefits like fewer disputes, faster SOS response, and protection against false allegations helps reposition the system as a shield rather than a spotlight. In practice, programs that publish clear escalation matrices, incident SOPs, and data-access rules achieve both operational observability and social acceptability more reliably than those that rely only on technology design.

In an EMS RFP, what should we ask vendors to prove they can support runbooks and post-incident reviews, not just quote high OTP?

B1468 RFP requirements for SRE ops — In India corporate Employee Mobility Services (EMS), what should Procurement ask for in an RFP to ensure vendors can support SRE-style runbooks and post-incident reviews (e.g., evidence retention, escalation participation, change management) rather than just promising high OTP?

In Indian corporate Employee Mobility Services, procurement should explicitly require vendors to submit SRE-style runbooks, incident workflows, and evidence models as evaluated deliverables, not just commit to OTP percentages. RFP questions and scoring should focus on how vendors detect, handle, and learn from failures across command-center operations, data trails, and governance, with OTP treated as an outcome of that system rather than the only KPI.

Procurement teams should ask vendors to attach concrete runbooks for typical EMS failure modes. These runbooks should cover driver no-show, GPS or app downtime, vehicle breakdown, women-safety escalation, and severe-weather disruption. Each runbook should show step-by-step actions, decision thresholds, handoffs between the 24x7 command center and local teams, and targeted recovery times for restoring service and communication.

The RFP should demand a clear post-incident review process. Vendors should describe how they conduct root-cause analyses, which stakeholders are involved, what data is used from trip logs and command-center dashboards, and how corrective actions are tracked to closure. Procurement should ask for sample redacted incident reports to verify depth, not just templates.

To make SRE-style governance auditable, RFPs should require detailed evidence retention and audit-trail descriptions. Vendors should specify how long they store GPS traces, manifests, SOS logs, and escalation timestamps. They should explain how they ensure audit trail integrity and chain-of-custody for safety and compliance reviews. Procurement can link payment or performance evaluation to the completeness and accessibility of this evidence during audits.

The RFP should also cover change management for routes, apps, and policies. Vendors should describe how they roll out routing-engine changes, app updates, and new safety protocols without destabilizing operations. They should present approval workflows, rollback plans, and communication SOPs when shift patterns, fleet mix, or escort rules change. This aligns with centralized command-center practices and hybrid-work elasticity.

To embed vendor participation in governance, procurement can require structured escalation matrices and joint review cadences. The RFP should ask for the vendor’s proposed escalation ladder, participation in monthly SLA reviews and quarterly business reviews, and how incident and SRE learnings will update EMS operating procedures. Evaluation should favor vendors who already run centralized NOCs, use data-driven insights, and treat EMS as a governed service with continuous improvement rather than a dispatch-only operation.

How do we tie contracts to SLOs and error budgets so vendors improve behavior, without triggering constant SLA disputes and billing fights?

B1469 Contracts aligned to SLOs — In India corporate employee transport (EMS), how do you structure outcome-linked contracts so SLOs and error budgets drive behavior (vendor staffing, response times, preventive actions) without creating endless SLA disputes and invoice holds?

In Indian corporate employee transport, outcome-linked contracts work best when a small set of clear SLOs and explicit error budgets govern behavior, and when the contract hardwires how data, reviews, and penalties are applied automatically. Contracts fail when they overload SLAs, use ambiguous data sources, or leave disputes to monthly negotiation instead of pre-defined rules.

The most stable structure starts with a narrow KPI set that reflects real EMS priorities. On-time performance for pick‑up/drop, safety incident rate, and Trip Adherence Rate are usually primary. Vehicle utilization, dead mileage caps, and complaint-closure time can sit as secondary levers. Each KPI needs a precise definition, a single system of record, and a measurement window that both sides accept in advance.

Error budgets should be defined as explicit tolerance bands rather than binary pass/fail triggers. For example, the contract can allow a 3–4% OTP shortfall in a month before any penalty applies. This protects both client and vendor from normal volatility in traffic, weather, or one-off events. The error budget also acts as a trigger for preventive actions, such as joint route recalibration, driver retraining, or adding standby capacity during certain shifts.

Dispute volume drops when the contract codifies a hierarchy of causes and exclusions. Most organizations map “vendor‑controllable” breaches separately from external or client‑driven causes and agree a standardized exception list. This separation is essential for tying penalties only to controllable failure modes, such as driver shortages, roster errors, or missed dispatch, and not to last‑minute roster changes or security holds.

Contracts are easier to administer when SLAs are linked to an agreed command-center view. Centralized dashboards, trip logs, and alert histories become the authoritative data. This is strongly supported by industry practice around 24x7 NOCs, geo-fencing, SOS events, and real-time route monitoring described in the context. The same dashboards can also surface CO₂ metrics and EV utilization where sustainability outcomes are part of the scope.

To keep behavior positive, many enterprises pair monetary penalties with structured performance tiers. Higher tiers can unlock eligibility for more lanes, renewal weightage, or gain-share on demonstrated cost or emission reduction. Lower tiers can trigger corrective action plans rather than immediate commercial punishment. This tiering makes vendors invest in preventive measures such as better driver training, fatigue management, or buffer vehicles.

A predictable governance rhythm is critical. Quarterly business reviews aligned to a fixed template can review SLO performance, error-budget consumption, incident RCA packs, and planned improvements. Between reviews, a short weekly or fortnightly operational huddle can focus on early warning signals such as rising exception rates, specific night-shift clusters, or recurring complaint themes.

The contract also needs clear playbooks for “what happens when we miss.” That usually includes root‑cause analysis timelines, agreed corrective steps, and how quickly metrics must return within the error budget. When these steps are defined upfront, both sides can avoid ad‑hoc argument and focus on repairs rather than blame.

Finally, data ownership, API access, and auditability clauses should be explicit. Continuous assurance depends on preserved GPS logs, incident records, and compliance dashboards that can be revisited during audits. This supports HR, Security, and ESG leads in defending numbers and safety narratives, and it reduces Finance’s reliance on manual reconciliations that often drive invoice holds.

Should we pilot SLOs and runbooks on one site/shift first or roll out everywhere, and what early proof would Finance accept before scaling?

B1470 Pilot vs scale SRE practices — In India corporate EMS commute operations, what implementation approach reduces risk: piloting SLOs/runbooks on one site or shift band first versus rolling out enterprise-wide, and what early results should a CFO accept as proof before scaling?

For Indian corporate EMS commute operations, piloting SLOs and runbooks on one site or a narrow shift band is the lower‑risk path, and CFOs should treat that pilot as a controlled “proof of unit economics and reliability” before any enterprise‑wide rollout. A focused pilot limits exposure if routing, vendor behavior, or tech integration underperform, and it creates clean before/after baselines that can be defended in Finance and Audit reviews.

A limited-scope pilot also fits how EMS actually operates. Most issues concentrate in specific high‑risk zones such as night shifts, monsoon-heavy locations, or high-volume tech parks. Running new SLOs and SOPs there lets teams validate real on-time performance, driver behavior, and safety compliance under stress conditions. Centralized command center practices, alert supervision, and business continuity playbooks are easier to tune with one control room plus a small number of routes before extending them to other regions.

For a CFO, pilot “success” should be defined by a small, auditable set of metrics that link directly to cost, risk, and reliability rather than anecdotal satisfaction. These early proof points should include a stable or improving cost per km and cost per employee trip, a clear uptick in fleet uptime and trip adherence, and visibly lower escalation volume from that site or shift band. Reduction in dead mileage and idle time, fewer billing disputes due to cleaner trip data, and evidence that business continuity plans work during disruptions are additional signals that the SLOs and runbooks are mature enough to justify scaling.

SRE-minded reliability metrics, SLOs, and runbooks

Translates reliability concepts into practical targets and repeatable procedures; specifies runbooks, non-gameable SLOs, and how to avoid gaming or misreporting.

After a post-incident review, how do we make sure action items actually get closed with owners, deadlines, and verification instead of becoming a forgotten report?

B1471 Closing actions after incidents — In India corporate employee transport (EMS), how do you operationalize "continuous improvement" after post-incident reviews so action items actually close (owner, due date, verification) rather than becoming a report nobody revisits?

Continuous improvement after transport incidents only works when post-incident actions are treated as operational work with owners, SLAs, and verification, not as recommendations in a PDF. Effective EMS teams in India convert every significant incident into a tracked “mini-project” with a defined owner, due date, and closure proof, and they review these items in the same forum that reviews OTP and safety KPIs.

A common failure mode is treating incident reviews as one-off meetings. Transport heads often circulate a report by email without linking actions to the existing command center, escalation matrix, or compliance dashboards. This causes the same GPS gaps, driver issues, or routing errors to reappear in later incidents because no one is accountable for finishing the fixes. Continuous improvement fails when action items are not logged into a system that operations already use daily, such as the command center ticketing tool, alert supervision system, or transport MIS.

Strong operators use a simple but strict loop. They register each incident in a central log, identify root causes that tie back to controllable levers such as routing, driver behavior, fleet compliance, or app reliability, and then create specific actions with named owners and realistic deadlines. They align these actions with existing governance mechanisms like HSSE audits, business continuity plans, or centralized compliance checks so that follow-up happens during routine reviews, not as extra work that gets dropped.

To keep control-room life manageable, most teams limit “improvement projects” to a small set of high-impact themes. They pick recurring issues such as late pickups in specific timebands, driver fatigue, or monsoon routing failures and define a short list of measures, for example route rule changes, driver retraining, or buffer vehicles. They then track success using existing KPIs such as OTP, incident rate, or audit scores, rather than creating new parallel metrics.

In practice, the operational guardrail is to insist that no incident is considered closed until three elements exist. There must be a recorded action plan with owners and dates, an implemented change such as a modified SOP or system rule, and a verification step, such as a random route audit or command center check, that is visible in regular dashboards or management reports.

When the app or GPS fails, what should our fallback look like, and how do runbooks keep service running without chaos?

B1472 Graceful degradation in commute ops — In India corporate EMS commute operations, what does "graceful degradation" look like during app downtime or GPS failure—manual fallback, phone tree, paper manifest—and how do runbooks ensure service continuity without chaos?

Graceful degradation in Indian corporate EMS commute operations means that when apps or GPS fail, core shift movements continue through predefined manual fallbacks, and every action still leaves an auditable trail. It replaces “smart automation” with simple, rehearsed SOPs so drivers, coordinators, and command centers can run the operation on phones, paper, and spreadsheets without collapsing OTP or safety controls.

In practice, most mature EMS operators already design for failure alongside automation. Command centers use alert supervision systems and transport command center dashboards to monitor trips, but they also maintain manual tools like duty slips, phone-based confirmations, and SMS/IVR updates. Centralized compliance and safety frameworks remain in force, because driver vetting, fleet compliance, and women-centric safety protocols do not depend on an app being live at that moment.

Runbooks define very specific fallbacks for app downtime and GPS loss. Typical steps include switching from app manifests to pre-generated route sheets, using operator phone trees and WhatsApp broadcast lists for last-mile coordination, and updating shift and no-show reports manually for later reconciliation in billing systems. Business continuity plans and contingency slides in the collateral show that operators map these failure modes in advance alongside other disruptions such as strikes, disasters, or cab shortages.

Well-written runbooks also hard-code who does what in the first 5–15 minutes of a failure. They assign responsibility to command center staff, routers, supervisors, and vendor partners for actions such as confirming critical pickups by call, enforcing escort and women’s safety rules manually, and logging exceptions for later audits. This preserves SLA governance, safety and compliance even when technology partially fails, and it keeps the Facility/Transport Head out of emergency firefighting on every minor outage.

As HR, how do we tell if reliability work is actually improving employee trust—what signals matter beyond dashboard numbers?

B1473 Measuring trust beyond dashboards — In India corporate Employee Mobility Services (EMS), how should a CHRO judge whether SRE-style reliability work is improving employee trust—what signals matter (complaint recurrence, escalation sentiment, perceived safety) beyond a dashboard score?

In corporate Employee Mobility Services in India, a CHRO should judge whether reliability work is improving employee trust by tracking whether safety incidents, commute disruptions, and escalation noise actually reduce and stay down over time. Dashboard scores are useful, but trust becomes visible when complaints stop recurring, sentiment in escalations softens, and night-shift and women employees report that “commute is a non-issue” in HR and EX forums.

A first signal is recurrence. A serious complaint or incident should never look identical twice. If SRE-style reliability is working, similar issues cluster early, are fixed with clear SOP or system changes, and then disappear from logs. If the same failure modes keep reappearing by route, time-band, or vendor, then reliability work is not translating into trust, regardless of OTP%.

A second signal is escalation sentiment and channel. When trust improves, fewer commute issues jump directly to CHRO, senior leaders, or social media–like forums. Employees use normal transport helpdesks or in-app feedback instead of bypassing them. Language inside tickets shifts from fear and anger (“unsafe,” “ignored,” “no one responded”) to inconvenience (“late,” “confusing”) and then to low-volume, neutral feedback.

A third signal is perceived safety, especially for women and night shifts. Night-shift and women-centric routing, escort compliance, SOS responsiveness, and audit-ready evidence are central in EMS. If women employees and ERG groups report feeling safer, if night-transport is not discussed as a risk topic in townhalls, and if Security/EHS can reconstruct and close incidents with clean trip logs, then reliability work is supporting trust, not only SLA charts.

Additional practical signals a CHRO can use include: - Attendance and attrition deltas for heavy-commute teams, particularly in late shifts. - The ratio of raw incidents to “near-miss” or early alerts detected by the command center before employees escalate. - Closure SLAs for safety-linked complaints and the quality of communication back to employees. - Whether HR can answer leadership questions like “How often does this happen, and what changed after last time?” with precise, consistent data rather than anecdote.

When SRE-style reliability is aligned with EMS operations, centralized command-center practices, and safety-by-design controls, the commute moves out of HR’s crisis agenda. The strongest indicator of improved trust is that employees no longer talk about transport as a daily risk, and the CHRO is no longer on the defensive after every incident review.

How do we train coordinators and vendors on runbooks and post-incident reviews without creating fear that mistakes will be punished?

B1474 Training without fear culture — In India corporate employee commute operations (EMS), how do you coach coordinators and vendor teams to use runbooks and post-incident reviews without creating fear that mistakes will be used for punishment or performance downgrades?

In Indian EMS operations, coordinators and vendor teams use runbooks and post‑incident reviews constructively when leaders explicitly separate “learning events” from “disciplinary events,” and back this separation with clear SOPs, metrics, and behavior from the command center and management. Fear reduces incident reporting and hides weak signals, while a learning-focused review culture improves OTP, safety, and ESG compliance over time.

A common failure mode is using post‑incident reviews mainly to find a person to blame. This discourages coordinators from escalating early when GPS fails, a driver is fatigued, or a vehicle is non‑compliant. In contrast, mature EMS operations treat runbooks like cockpit checklists. The runbook defines what a 2 a.m. coordinator does during cab shortages, app downtime, or monsoon disruption, and the review asks, “Was the playbook clear and realistic?” before asking, “Did the person follow it?”

To make this work in daily EMS reality, supervisors should coach teams that:

- Runbooks are protection tools, not surveillance tools. If coordinators follow them and document exceptions in the command center or ETS/Commutr systems, that becomes evidence they acted responsibly under pressure.

- Post‑incident reviews are about strengthening the playbook and infrastructure. For example, adding backup routing when the mobility app or GPS fails, tightening BCP triggers for political strikes, or adjusting vendor buffers when driver attrition spikes.

- Only willful negligence or repeated ignoring of critical safety steps (like escort rules for women at night, or skipping SOS escalation) triggers a different, HR/EHS‑led disciplinary track. This boundary must be written, socialized, and repeated in daily shift briefings.

Linking reviews to system fixes also reduces fear. When teams see outcomes like extra standby vehicles added via the Business Continuity Plan, adjusted routing in heavy rain based on the Mumbai monsoon case study, or new alerts via the Alert Supervision System, they experience incident reviews as a path to operational calm, not as a threat. This is reinforced when command centers share aggregate indicators (OTP%, incident closure times, audit scores) rather than naming and shaming individuals.

Over time, coordinators trust the process when three things are consistent. First, leaders use data from dashboards and NOC tools to fix processes and infrastructure gaps before questioning individual capability. Second, reviews focus on “What made it hard to follow the runbook at that hour?” instead of “Who dropped the ball?” Third, vendors see that honest self‑reporting of near‑misses feeds into structured improvements in compliance, BCP, and driver training, rather than automatic commercial penalties.

If reliability doesn’t improve even after runbooks and PIRs, how do we figure out if it’s the vendor, our internal policies, or lack of visibility—without triggering a blame war?

B1475 Diagnosing root causes politically — In India corporate EMS commute operations, when reliability stays poor despite runbooks and PIRs, how do you tell whether the root cause is vendor behavior, internal policy constraints (shift changes, approvals), or lack of observability, without starting a political blame war?

In Indian corporate EMS commute operations, the fastest way to separate vendor failure from internal policy constraints and observability gaps is to move the discussion from opinions to trip-level evidence and KPI timelines. A transport team can do this by reconstructing the full trip lifecycle against agreed SOPs and SLAs and asking, at each step, “Who had control here, and what data proves it?”

A practical pattern is to start from symptoms that matter to operations, such as repeated OTP misses on specific shifts, frequent last‑minute roster edits, or unexplained no‑shows. Each symptom is then traced along the ETS / EMS operation cycle, from booking request to routing, vendor allocation, dispatch, trip execution, and closure, using logs from the EMS platform, NOC, and HRMS integration. Where the evidence trail breaks, the likely root domain is lack of observability. Where the trail is clear but shows conflicting instructions or late approvals, the root domain is internal policy and process. Where the trail is clear, policies were respected, and allocations were stable, yet OTP or safety still fails, the root domain is vendor behavior.

To avoid a “blame war,” most organizations define a neutral, shared KPI set for EMS covering OTP%, Trip Adherence Rate, exception detection‑to‑closure time, and Vehicle Utilization Index. These KPIs are sliced by control dimension, such as “cases with last‑minute roster change,” “cases with on‑time roster but late vehicle arrival,” or “cases with app or GPS downtime flagged by command center.” A pattern where OTP collapses only when rosters move after cutoff indicates policy constraints. A pattern where OTP is poor even on stable rosters across multiple vendors indicates observability or routing issues. A pattern where OTP is poor only for a specific vendor, timeband, or cluster—despite on‑time rosters and clean telemetry—points to vendor discipline, driver fatigue, or fleet adequacy.

Command centers are critical for this separation because they create tamper‑evident trip ledgers and alert histories that everyone can see. Centralized NOC or Transport Command Centre tooling allows neutral classification of every exception as routing/technology, policy/roster, or vendor/driver. This reduces personal blame and shifts the conversation to structural levers such as changing shift‑change cutoffs, tightening vendor SLAs, adjusting fleet mix, or improving HRMS integration.

For our employee transport ops, how do we set SLOs and error budgets that match realities like OTP, missed trips, and response time—without committing to unrealistic “zero issues”?

B1476 SLOs and error budgets mapping — In India corporate Employee Mobility Services (EMS) operations, how do SLOs and error budgets translate into practical service expectations like on-time pickup/drop, missed-trip rates, and incident response—so the Transport Head can defend performance without promising “zero issues”?

In Indian Employee Mobility Services operations, SLOs and error budgets convert abstract “99% reliability” into explicit, defendable thresholds on OTP, missed trips, and incident response times. The SLO defines the target performance level. The error budget defines how many failures are acceptable before the vendor and transport team must slow changes or trigger corrective actions.

In practice, a transport head can use SLOs to set clear expectations on on-time pickup and drop performance. For example, an EMS SLO can specify that a defined percentage of trips must meet shift windowing and Trip Adherence Rate targets. The error budget then allows a small, quantified buffer for delays due to traffic, weather, or roster changes without labeling the operation as a failure. This framing makes “occasional exceptions” acceptable as long as overall OTP and exception-closure metrics stay within the agreed window.

Missed-trip and no-show rates can be similarly governed through SLOs on Trip Adherence Rate and No-Show Rate. The error budget here identifies the allowable percentage of missed or aborted trips in a period. This keeps pressure on vendors to maintain fleet uptime, driver retention, and routing quality, while protecting the transport head from unrealistic zero-failure expectations.

Incident response SLOs focus on exception detection and closure time. Centralized command-center operations, alert supervision systems, and escalation matrices are measured on how quickly they detect a route deviation, SOS trigger, or compliance breach and close the ticket. The error budget defines how many slow or failed responses are tolerated in a cycle before governance actions and root-cause analysis are mandatory.

For a facility or transport head, the key is to tie every SLO and error budget to traceable KPIs such as On-Time Performance percentage, Trip Adherence Rate, exception detection-to-closure time, and incident rate. This linkage allows them to show leadership that most trips run within agreed thresholds, exceptions are within the error budget, and every breach produces an audit-ready root cause and corrective action rather than vague excuses.

When teams try SRE-style ops for mobility but still get late-night escalations, what usually goes wrong—and how do we figure out if it’s process, vendor behavior, or tool gaps?

B1477 Why SRE still causes escalations — In India corporate ground transportation (EMS/CRD), what are the common failure modes when teams adopt an SRE mindset (SLOs, runbooks, post-incident reviews) but still end up with 3 AM escalations—and how can Operations diagnose whether the issue is process, vendor behavior, or tooling gaps?

Most transport teams that adopt an SRE-style model still get 3 a.m. escalations because the SLOs and runbooks are defined on paper but not anchored to real-world mobility constraints, fragmented vendors, and live command-center behavior. Operations can usually diagnose whether the root cause is process, vendor behavior, or tooling by tracing a few concrete signals: where did detection happen, who had authority to act, and what data was actually visible in the command center at the time of the incident.

Process failure is common when ETS/CRD ops cycles remain partially manual. Manual rostering, ad-hoc route overrides, and weak hand-offs between HR, Transport, and vendors undermine even well-written SOPs. A typical symptom is that OTP% and TAR targets exist but shift windowing, dead-mile caps, and business continuity playbooks are not enforced in day-to-day routing and capacity decisions.

Vendor behavior is usually the core issue when a multi-vendor aggregator model exists without firm vendor governance frameworks and periodic capability audits. A high SLA breach rate concentrated in specific timebands, sites, or vendors indicates that driver fatigue management, fleet uptime, and standby buffers are being offloaded to the customer. A pattern of last-minute no-shows, poor escort compliance, or repeated RTO/permit non-compliance despite clear SOPs points to structural vendor gaps rather than process design.

Tooling gaps are evident when the command center lacks real-time observability, automation, and integrated data flows across EMS/CRD, HRMS, and finance. If alerts are reactive rather than predictive, if routing engines are not traffic- or hybrid-work aware, or if the NOC cannot see cross-vendor fleet utilization, then SLOs are not backed by actionable telemetry. Data silos between telematics, employee apps, and billing also block fast root cause analysis, leading to repeated 3 a.m. escalations.

To separate these dimensions, Operations leaders can run three focused diagnostics:

- Process: Map the ETS/CRD operation cycle against actual incident timelines and check where manual workarounds bypassed the defined TOM, escalation matrix, or business continuity plans.

- Vendor: Compare OTP%, incident rate, and compliance audit findings by vendor and timeband to see if failures cluster around specific operators, geographies, or vehicle types.

- Tooling: Review whether the command center’s alerts, routing decisions, and audit trails surfaced the risk before impact, and whether data from driver and rider apps, telematics, and billing could be reconciled quickly post-incident.

When these diagnostics are run consistently, most 3 a.m. escalations resolve to a visible blend of all three: process that tolerates exceptions, vendors without real teeth in their SLAs, and tooling that reports yesterday’s state instead of predicting tonight’s risk.

What early warning signals should we track to prevent missed pickups, without making drivers or employees feel like we’re policing them?

B1478 Leading indicators without surveillance — In India enterprise employee commute operations (EMS), how should a Facility/Transport Head choose a small set of reliability signals (leading indicators) that predict breakdowns before they become missed pickups—without creating a ‘Big Brother’ surveillance culture for drivers and employees?

In Indian enterprise employee commute operations, a Facility or Transport Head should focus on a small set of operational leading indicators that sit close to the trip lifecycle, tie clearly to On-Time Performance (OTP), and can be explained to drivers and employees as safety and reliability tools rather than surveillance. These indicators should be built from existing EMS data such as routing, GPS tracking, driver duty cycles, and exception logs, and should be governed through clear SOPs, communication, and limited access rules to avoid a “Big Brother” perception.

The most predictive reliability signals usually come from the command center view of trip adherence and fleet health. Early signs such as recurring pre-shift routing changes, clusters of last-minute roster edits, or repeated manual overrides of the routing engine often precede missed pickups. Patterns in Vehicle Utilization Index, dead mileage, and shift windowing can expose routes that are too tight to absorb normal Indian traffic or weather disruptions, as shown in case studies where dynamic route optimization maintained a 98% on-time arrival rate even in monsoon conditions. When combined with simple driver-side metrics like duty cycles and repeated late sign-ins, these signals give early warning of potential OTP drops without monitoring personal behavior beyond what is operationally necessary.

To avoid a surveillance culture, each chosen indicator must be purpose-limited, transparent, and aggregated where possible. Trip-level GPS and route adherence data should be used to monitor fleet performance and safety KPIs like Trip Adherence Rate, not to micro-manage individual drivers outside duty hours. Driver fatigue or overwork risks can be inferred from shift patterns and duty slips rather than invasive tracking. Command-center teams can use structured escalation matrices and exception workflows that focus on resolving operational risks early, while compliance and safety dashboards can provide anonymized or role-appropriate views to HR, Security, and ESG stakeholders.

Practical selection criteria for a small reliability signal set include:

- Each signal must have a proven link to missed pickups, late logins, or high exception latency.

- Each signal should be calculable from data already captured for routing, billing, or safety compliance.

- Each signal should be explainable to drivers and employees as a safety, compliance, or reliability control.

- Access to raw trip-level data should be restricted, with aggregated views used for leadership reporting.

For night-shift safety cases like SOS or driver no-show, what should the runbook include—and how do HR and Security split ownership so reviews don’t become blame games?

B1479 Night-shift safety runbook design — In India corporate EMS night-shift transportation, what does a good runbook look like for women-safety escalations (SOS, geo-fence breach, driver no-show), and how do EHS/Security and HR align on escalation ownership so post-incident reviews don’t turn into blame?

A good women-safety escalation runbook in India EMS night-shift transport is explicit, time-bound, and evidence-backed. It defines what is “critical,” who owns each minute of response, and how EHS/Security and HR split roles between incident control and employee care. It also bakes in audit-ready data from SOS, geo-fence, and no-show events so post-incident reviews stay factual instead of becoming blame discussions.

A strong runbook starts with clear trigger definitions. An SOS press by a woman employee, a geo-fence breach into a disallowed zone, a route deviation beyond a defined buffer, driver/app device tampering, or a night-shift driver no-show past a set threshold must all be classified and tiered. The Alert Supervision System, SOS control panel, and transport command centre tooling should map each trigger to an automated alert, with escalation paths and time-stamped logs for when the command centre, vendor, EHS/Security, and HR were notified.

EHS/Security should own the live incident response. EHS/Security leads immediate controls such as contacting the vehicle/driver, activating SOS workflows, coordinating with local security or police if needed, and ensuring safe rerouting or evacuation. HR owns parallel employee support. HR ensures that the affected employee is contacted by a designated, trained responder, that safe accommodation or alternate commute is arranged, and that managers are informed in a way that protects confidentiality and avoids victim-blaming.

Post-incident, the runbook should mandate a joint EHS–HR review structured around data, not opinion. Command centre trip logs, GPS traces, geo-fence alerts, driver compliance records, and SOS timelines feed into a standard RCA template. EHS documents control failures and process gaps. HR captures employee impact and communication gaps. Both sign off on a corrective-action plan that may include driver retraining or de-induction, routing rule changes, or app-level improvements, and this is then fed into safety and compliance dashboards for continuous assurance.

To keep reviews from becoming blame cycles, the runbook should codify ownership boundaries and language. EHS/Security is accountable for “safety controls worked or failed.” HR is accountable for “employee was supported and heard.” Transport operations is accountable for “SOPs and routing were feasible and followed.” Vendors are accountable for “driver behavior and fleet compliance.” Joint governance forums, such as command-centre led service reviews, should use predefined KPIs like incident rate, route adherence, and complaint closure SLAs so that leadership looks at patterns and systemic fixes instead of single-person fault.

How do we run post-incident reviews that actually reduce repeat issues and speed up recovery, instead of creating reports that just sit in a folder?

B1480 Post-incident reviews that stick — In India enterprise-managed employee mobility services (EMS), how do mature teams structure post-incident reviews so they drive measurable improvement (fewer repeats, faster recovery) instead of producing reports that nobody trusts or uses?