Routing & Rostering: a 3-lens playbook for daily reliability

This isn’t a demo. It’s an operational guardrail for the dispatch floor: guardrails, SOPs, and escalation paths that keep drivers, sites, and vendors aligned when weather turns or a gate blocks progress. It’s built for nights, weekends, and peak weeks when the risk of chaos is highest. The three lenses map to: stability-first guardrails for fast recovery, codified real-world constraints and inputs, and governance with clear ownership and measurable ROI. Use them to cut firefighting in half and give leadership an auditable, repeatable plan.

Is your operation showing these patterns?

- 3 a.m. escalations spike despite supposed stability

- Last-minute edits flood the desk and push night-shift workloads

- GPS outages or app downtime surface as chaos only after events

- Vendor response delays threaten night coverage during weather events

- Drivers report fatigue from an 'always-on' dispatch cycle

- Leadership asks for ROI proof that survives late changes and edits

Operational Framework & FAQ

stability-first routing & rostering governance

Guardrails that keep rosters and routes stable during peak shifts; define escalation paths, freeze policies, and graceful degradation when systems glitch.

For our employee commute program, what all comes under routing and rostering beyond just making routes, and why does it usually break in day-to-day operations?

B1125 What routing & rostering includes — In India-based Employee Mobility Services (EMS) for corporate ground transportation, what does “routing & rostering” actually include beyond making routes—specifically shift windowing, clustering, seat-fill targets, and day-of-operations recalibration—and why do these pieces fail in real operations?

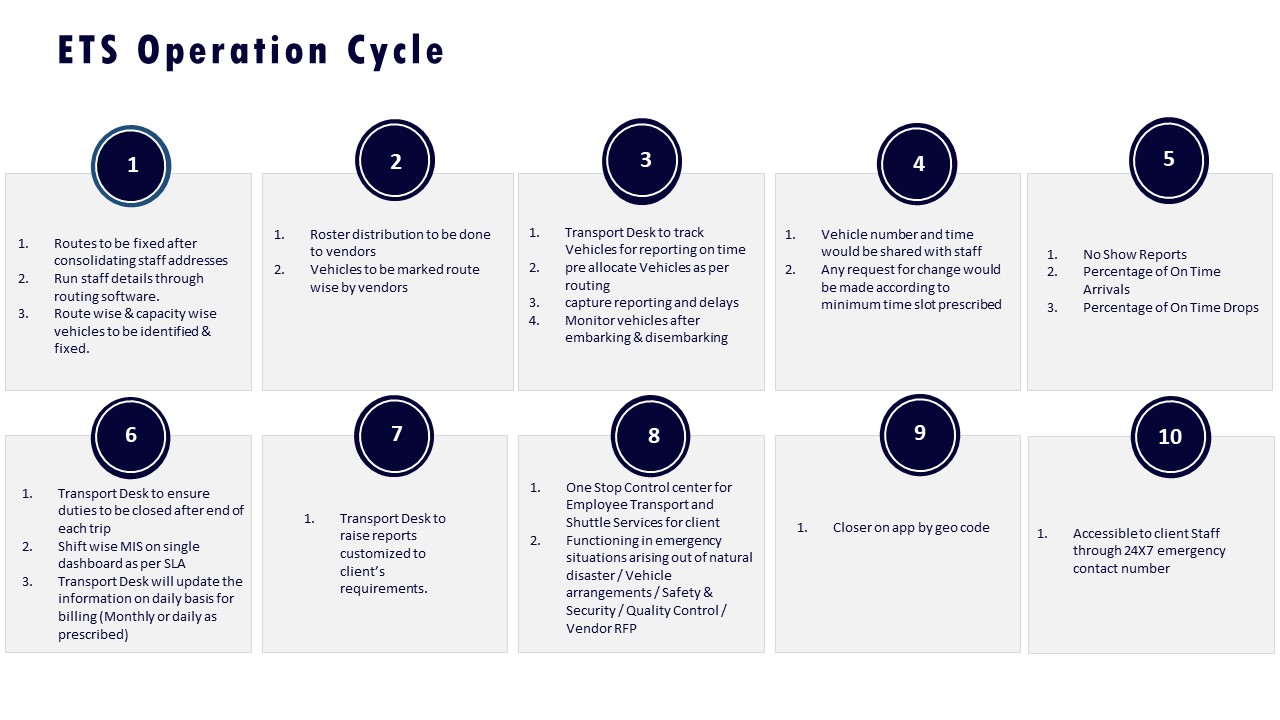

Routing and rostering in India-based Employee Mobility Services include four tightly linked layers of control-room work. Each layer goes beyond drawing static routes on a map. Each layer tends to fail when data, SOPs, and vendor behavior are not aligned.

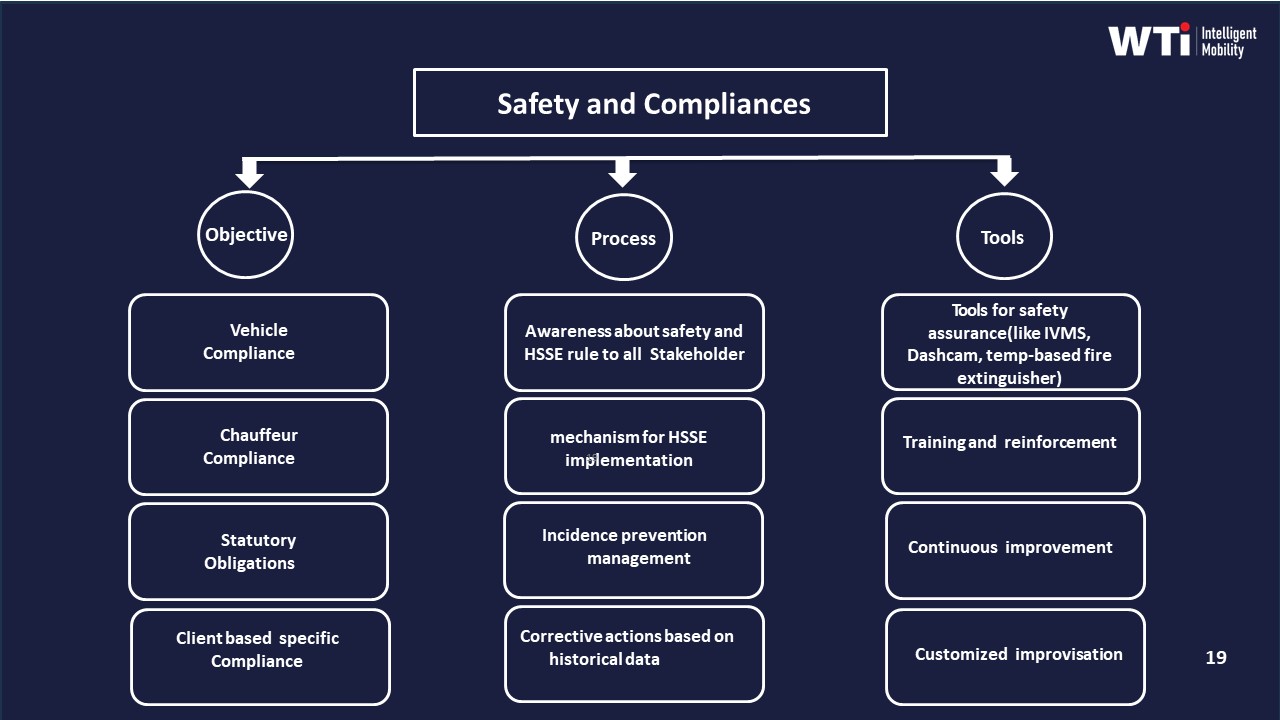

Routing and rostering start with shift windowing. Shift windowing groups employees by permissible pickup and drop bands around official shift times. It also applies policy rules for night shifts, women-first routing, and escort requirements. This layer fails when HRMS data is outdated, when actual login times differ from declared shift times, or when policy rules are not encoded into the routing engine and are left to manual judgment at the last minute.

The second layer is clustering. Clustering groups employees by geography, traffic corridors, and permissible detour limits before any vehicle assignment. It sets the base for dead mileage caps and time-on-road norms. This layer fails when address data is inaccurate, when frequent relocations are not updated, or when routing tools are not integrated with live traffic patterns. In those situations, transport teams are forced back into manual cluster adjustments every shift.

The third layer is seat-fill targets. Seat-fill targets define how many seats must be utilized per cab to meet cost-per-trip and cost-per-kilometer baselines. They also influence the fleet mix between sedans, MUVs, and shuttles. This layer fails when there is no clear policy on maximum ride time, when cancellation behavior is not modeled, or when targets are driven only by cost and not linked to employee experience and safety limits.

The fourth layer is day-of-operations recalibration. Day-of-operations recalibration responds to no-shows, last-minute bookings, breakdowns, and weather or traffic disruptions while protecting OTP and safety constraints. It depends on a command center, driver and rider apps, geo-fencing, and exception SLAs. This layer fails when GPS or apps are unstable, when vendor response is slow, or when there are no clear escalation matrices and playbooks for rerouting under stress.

A common failure pattern is that routing logic is treated as a one-time planning exercise instead of a continuous loop with live data and SLA feedback. Another frequent issue is that procurement, HR, and Transport set conflicting priorities across cost, safety, and experience, so routing and rostering teams operate with inconsistent signals.

Why do our pickup/drop time windows and cut-off times matter so much, and how can we tell our current rules are causing last-minute changes?

B1126 Why shift windows drive stability — In India corporate employee transport (EMS), why do shift window definitions (pickup/drop tolerances, grace periods, and cut-off times) matter so much for on-time performance and fewer last-minute roster edits, and what are common signs the shift-windowing rules are wrong?

Shift window definitions in Indian corporate employee transport determine whether routing can be planned as a stable “batch” or forced into continuous firefighting. Clear pickup/drop tolerances, grace periods, and booking cut‑offs allow routing engines and vendors to lock rosters, cap dead mileage, and protect on‑time performance (OTP), while loose or ambiguous rules push operations into last‑minute edits, unstable routes, and higher exception rates.

Shift windowing is the mechanism that converts hybrid attendance and variable demand into predictable shift windowing and route design. When windows are tight but clear, routing optimization, seat‑fill targets, and dead‑mile caps can be applied reliably. When windows are vague, every late request or exception becomes a manual override, which erodes OTP, increases command‑center load, and raises driver fatigue. Stable windows also enable outcome‑linked procurement, because OTP%, Trip Adherence Rate, and Cost per Employee Trip can be measured against consistent operating assumptions.

Misconfigured or “wrong” shift-window rules usually show up first as operational symptoms rather than policy debates. Typical signs include chronic last‑minute roster changes close to cut‑off times, persistent no‑shows clustered around specific timebands, and frequent ad‑hoc vehicle requests outside planned fleet capacity. Another signal is high exception latency in the command center because manual route recalculation is needed every time someone misses a cut‑off. Repeated driver complaints about unrealistic routing between back‑to‑back windows indicate that seat‑fill and travel times are not aligned with real traffic patterns. A widening gap between planned versus actual OTP% by shift, despite sufficient fleet, is often the clearest proof that the shift window logic, not the supply, is the underlying problem.

When vendors say “clustering logic,” what data should actually go into it for our commute, and what goes wrong when clustering is done badly?

B1127 Clustering logic inputs and failures — In India enterprise-managed employee commute programs (EMS), how should a buyer interpret “clustering logic” in routing—what inputs (home locations, site gates, shift bands, gender rules, traffic patterns) typically matter, and what failure modes create repeated escalations and employee complaints?

Clustering logic in Indian enterprise employee commute programs is the set of rules and inputs a routing engine uses to group employees into shared cabs within a shift window. Clustering determines who travels together, which route is chosen, and how pick-up and drop sequences are planned, so it directly affects on-time performance, women’s safety compliance, cost per trip, and daily escalation volume.

In practice, clustering logic usually combines several core inputs. Home locations define geo-clusters so employees in nearby neighborhoods are pooled. Site gates and entry points define where vehicles must report, including separate gates for security or gender-specific entries. Shift bands define pick-up and drop buffers around rostered login and logout times, including different windows for day, evening, and night shifts. Gender and escort rules define where women must be first pick-up or last drop, where escorts are mandatory, and which routes are approved at night. Traffic patterns define preferred corridors and blacklisted roads based on congestion, monsoon impact, and local risk, and they influence how the route is sequenced even for employees who live close together.

Repeated escalations often come from ignoring one of these inputs or applying them inconsistently. A common failure mode is clustering only by geography while ignoring shift bands, which causes early pickups, late drops, or missed logins. Another is violating women-safety or escort rules when trying to maximize seat-fill, which leads to HR and Security complaints even if OTP is high. Static routes that do not adapt to real traffic patterns or weather cause chronic delays on the same corridors, especially during monsoon or peak events. Over-aggressive cost optimization that pushes long detours and high ride times per employee creates perception of unfairness and fatigue complaints from specific pockets. Poor HRMS integration and stale rosters lead to incorrect manifests, no-shows, and repeated routing for employees who are on leave or working from home.

Signals that clustering logic is failing include the same routes and cabs appearing in daily complaint logs, recurrent late logins from one corridor or shift band, frequent exceptions raised by women employees on specific routes, and on-ground supervisors repeatedly asking for manual overrides. When these patterns appear, buyers should treat clustering rules and inputs as tunable policy parameters, not a black box, and insist on auditable routing criteria, route-level KPIs, and the ability for transport teams to adjust buffers, risk rules, and max ride-time limits without breaking the entire system.

What does a seat-fill target really mean for our routes, and how do we push utilization without hurting OTP, safety rules, or employee satisfaction?

B1128 Seat-fill targets vs experience — In India corporate ground transportation for shift-based EMS, what does “seat-fill target” mean operationally, and how do organizations balance seat-fill versus on-time pickups, women-safety constraints, and employee experience without triggering morale backlash?

Seat-fill targets in Indian shift-based employee mobility are route-level goals for how many available seats in each vehicle should be occupied on average, so that cost per employee trip comes down without increasing dead mileage or eroding service reliability. Seat-fill is treated as an operational KPI alongside On-Time Performance, Trip Adherence Rate, safety metrics, and experience indicators, not as a standalone cost lever.

Operations leaders typically define seat-fill within the broader routing and capacity strategy that also includes shift windowing, fleet mix policies, dead-mile caps, and dynamic route recalibration. Seat-fill targets are then enforced through the routing engine and rostering rules, but are bounded by safety and duty-of-care constraints for women’s night shifts, escort policies, and compliance with labor and transport norms. A common failure mode is treating seat-fill as a hard target without these constraints, which tends to push longer detours, tighter buffers, and higher fatigue for drivers.

Most mature EMS programs balance seat-fill against on-time pickups by giving OTP and Trip Adherence Rate primary status in procurement scorecards and vendor SLAs. Seat-fill is then optimized within a defined service envelope that fixes maximum route duration, maximum pickups per trip, and strict shift window constraints. This reduces the risk that aggressive optimization quietly converts into late logins and manager complaints, which HR and Transport heads then absorb as daily firefighting.

Women-safety constraints are usually encoded as non-negotiable routing rules, especially for night shifts. These rules can include female-first drop policies, escort compliance, geo-fenced no-go areas, and approvals for high-risk routes. Dynamic routing and geo-AI risk scoring are then used to route within these constraints, so that seat-fill improvements never require compromising escort rules or extended detours for lone female passengers. A common risk is manual overrides during peak-load pressure, which is where centralized command-center operations and real-time monitoring provide an additional governance layer.

Employee experience is protected by designing seat-fill policies around commute comfort thresholds rather than pure arithmetic averages. Operations teams typically specify maximum in-vehicle time, reasonable detour limits, and predictable boarding times as design constraints for the routing engine. HR and ESG stakeholders then link commute experience indices and complaint closure SLAs to the mobility governance board, so that any seat-fill push that triggers morale backlash is visible early through NPS, attendance deltas, and escalation patterns.

In practice, organizations that avoid backlash treat seat-fill as one KPI in an outcome-based commercial framework, alongside OTP%, safety incident rate, and complaint closure SLAs. Payments and penalties are indexed to this broader set of outcomes, which discourages over-optimization on utilization alone and encourages vendors to use data-driven routing, EV telematics, and predictive maintenance to reduce cost per employee trip without visibly degrading experience or safety.

When our roster changes mid-day, how should dynamic re-routing work, what should stay locked, and how do we keep it from becoming chaos?

B1129 How dynamic recalibration should work — In India EMS routing & rostering for corporate employee transport, how does “dynamic recalibration” work during the day when rosters change—what triggers a re-route, what stays frozen, and how do you avoid chaos for drivers and riders?

Dynamic recalibration in Indian EMS works by tightly controlling what can change, and when, based on a few well-defined triggers and frozen “guardrails.”

It is not continuous reshuffling.

Most organizations limit recalculation to specific events, preserve core constraints like shift windows and safety rules, and push only a small set of clear, time-bounded changes to drivers and riders.

Dynamic recalibration is usually triggered by events like last-minute roster edits, employee cancellations or no-shows, new ad-hoc trip requests, vehicle or driver breakdowns, or major traffic or weather disruptions.

A routing engine or command center detects these events using HRMS-linked rosters, GPS/telematics, and exception alerts, then evaluates whether a recalculation will improve on-time performance and seat-fill without breaching safety or compliance rules.

Certain elements stay frozen to avoid chaos.

Shift start and end times remain fixed.

Night-shift women-safety rules, escort requirements, and geo-fence constraints cannot be violated.

Vehicle capacity and maximum duty hours stay locked, and a cutoff is enforced close to pickup time after which a rider’s stop is no longer moved.

These frozen components protect the Facility / Transport Head from constant micro-changes and preserve predictable patterns for drivers.

To avoid chaos for drivers, recalibration is constrained to limited re-sequencing within a route or controlled swapping between a small number of nearby routes.

Changes are batched and pushed at defined intervals rather than every few minutes.

Driver apps receive a new manifest with a clear “effective from” time, updated stop order, and turn-by-turn navigation, while the NOC monitors adherence via route-adherence audits and alerts for overspeeding, tampering, or missed geofences.

To avoid chaos for riders, organizations define hard communication and cutoff rules.

Riders receive route and pickup time only after a planning freeze for that shift window.

Post-freeze, only critical changes are allowed, such as swapping vehicles while keeping the pickup time and stop constant.

Employee apps show updated ETAs, driver details, and SOS options, and complaint closure SLAs ensure riders see issues resolved rather than ignored.

Most mature EMS operations also tie recalibration logic to outcome metrics.

On-time performance, no-show rates, trip fill ratio, and exception-closure time are monitored on a command-center dashboard.

Recalibration is considered successful only if these KPIs improve without increasing driver fatigue risk or breaching compliance and safety audit trails.

How do we measure the manual effort in our routing and rostering—edits, calls, Excel/WhatsApp work—so we have a clear baseline before we look at tools?

B1130 Measuring routing/rostering toil baseline — In India corporate employee commute operations (EMS), how can a Facility/Transport Head quantify “toil” in routing & rostering today (manual edits, exception calls, Excel dependence, WhatsApp coordination), and what baseline metrics are practical before evaluating new routing tools?

In Indian employee commute operations, a Facility/Transport Head can quantify “toil” in routing and rostering by directly measuring the human effort and failure-handling around every shift. Practical baselines focus on time spent, volume of manual interventions, and escalation patterns before any new routing tool is evaluated.

A Facility/Transport Head should first measure how much time the team spends each day on core routing and rostering tasks. A simple baseline is total planner hours per shift spent on building or editing rosters, reconciling Excel sheets, and manually sequencing routes. A second layer is micro-metrics, such as number of roster versions created per shift window and number of last-minute edits made after “final” routes are published.

Toil is most visible in exception handling and back-channel communication. A practical baseline is the count of exception calls and WhatsApp interventions per shift, including driver no-shows, vehicle swaps, location clarifications, and last-minute employee changes. Another useful metric is average time to resolve each exception from first alert to closure, which shows both stress on the control room and the effectiveness of current SOPs.

Spreadsheet and manual-tool dependence can also be quantified. A Facility/Transport Head can track how many active Excel files or manual trackers are used daily, how many times routing data is re-entered into different systems, and how often teams must manually reconcile trip data for billing, compliance, or reporting. These counts link directly to error risk and after-shift clean-up work.

Before evaluating any new routing tool, a minimal baseline set that most operations can capture is: - Planner hours per day spent on rostering and routing. - Number of manual route edits after first publish per shift window. - Exception calls/WhatsApp escalations per 100 trips. - Average exception resolution time during peak and night shifts. - Number of distinct spreadsheets/templates used daily for transport coordination.

These metrics give a Facility/Transport Head a grounded “before” picture of toil. They also align naturally with broader EMS KPIs such as On-Time Performance, Trip Adherence Rate, Vehicle Utilization Index, and No-Show Rate, which will be influenced once routing tools and command center practices start to automate more of the manual workload.

What usually causes the 2–5 a.m. roster blowups in employee transport, and how should we track root causes so they don’t keep repeating?

B1131 Root causes of night shift blowups — In India EMS routing & rostering for corporate ground transportation, what are the most common root causes behind 2 a.m.–5 a.m. schedule blowups (no-shows, vehicle mismatch, late roster freeze, wrong clustering, gate constraints), and how should an operations team instrument and track them?

In Indian EMS night-shift operations, most 2 a.m.–5 a.m. schedule blowups are caused by brittle rostering inputs, weak real-time visibility, and un-instrumented “last 500 meters” constraints. Operations teams reduce these failures when every failure mode is tagged, time-stamped, and linked to a small, standard root-cause taxonomy that is visible in the command center and in post-shift reports.

The most common root causes sit across three layers. At the planning layer, late or inaccurate HRMS rosters, last-minute shift changes, and manual clustering often create unrealistic routes and duty cycles. At the fleet and driver layer, thin buffers, driver fatigue, poor standby logic, and fragmented vendor supply cause no-shows, vehicle mismatch, and unplanned substitutions. At the ground-execution layer, campus gate rules, security checks, geo-fencing, and local disruptions like weather or political events convert small delays into complete route failures.

Operations teams should implement a single command-center view where every exception is captured as a structured event. Each event should record route ID, time band, vehicle, driver, location, and one primary root-cause code such as “Late roster freeze,” “Driver no-show,” “Vendor substitution,” “Gate hold,” “HR data mismatch,” or “Geo-fence / security delay.” Exceptions should be linked to KPIs like On-Time Performance, Trip Adherence Rate, dead mileage, and Vehicle Utilization Index, with filters for the 2 a.m.–5 a.m. window to detect patterns.

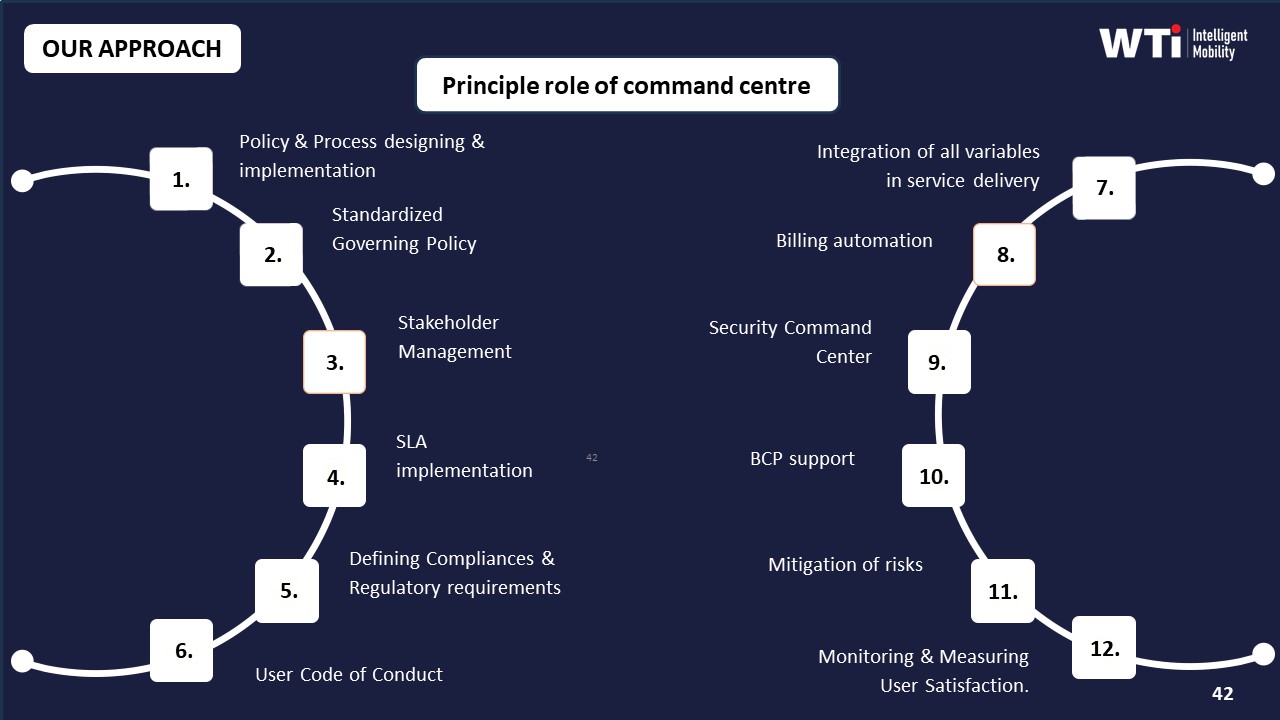

Effective instrumentation typically includes real-time GPS and geo-fencing alerts, IVMS-based fatigue and over-speeding indicators, automated roster ingest from HRMS, and a ticketing workflow from SOS and call-center inputs. Daily shift debriefs and route-adherence audits should use the same codes as the live system, so case studies, business continuity playbooks, and analytics on weather or city-specific challenges feed back into routing rules, standby buffers, and vendor tiering for night operations.

Where do routing decisions usually create fights between HR, Finance, and Facilities, and how do mature programs settle those trade-offs without daily escalations?

B1132 Resolving HR-Finance-Ops conflicts — In India employee mobility services (EMS), where do routing & rostering failures typically create political conflict between HR (employee experience), Finance (cost per trip), and Facilities (operational feasibility), and how do strong programs resolve these trade-offs without constant escalations?

In India employee mobility services, routing and rostering failures most often create conflict at three pressure points. These points are shift alignment versus cost, pooling efficiency versus employee experience, and last‑mile feasibility versus policy rigidity. Strong programs reduce conflict by making routing rules explicit, data‑backed, and jointly governed instead of leaving them to ad‑hoc nightly decisions by Facilities.

Routing and rostering tensions usually start when HR pushes for employee-friendly routing that protects attendance and safety, while Finance pushes for higher seat fill, lower dead mileage, and tighter cost per employee trip. Facilities then struggles to execute both under constraints of actual traffic, driver fatigue, and vehicle availability. A common failure mode is static routes that ignore hybrid-work variability, which drives low utilization and cost complaints, while also generating late pickups and NPS drops that HR cannot defend.

Another recurring conflict arises when pooling logic is optimized only for cost. High pooling can reduce cost per kilometer, but it often increases detours, travel time, and missed shift windows for some employees. HR then faces escalations around long rides and women’s night-shift routing, while Facilities deals with unworkable manifests during bad weather or roadblocks. Finance, meanwhile, sees only the monthly CET/CPK numbers and questions any deviation from pooling targets.

Strong EMS programs address these trade-offs by moving to outcome-based and rule-based routing rather than purely cost-based routing. Organizations define clear, cross‑functional guardrails such as maximum ride time by zone, hard cutoffs for first/last pickup, women‑safety route rules, and minimum seat‑fill targets by shift band. These rules are encoded into the routing engine and published as part of the EMS policy so that HR, Finance, and Facilities are aligned on when it is acceptable to break pooling for reliability or safety.

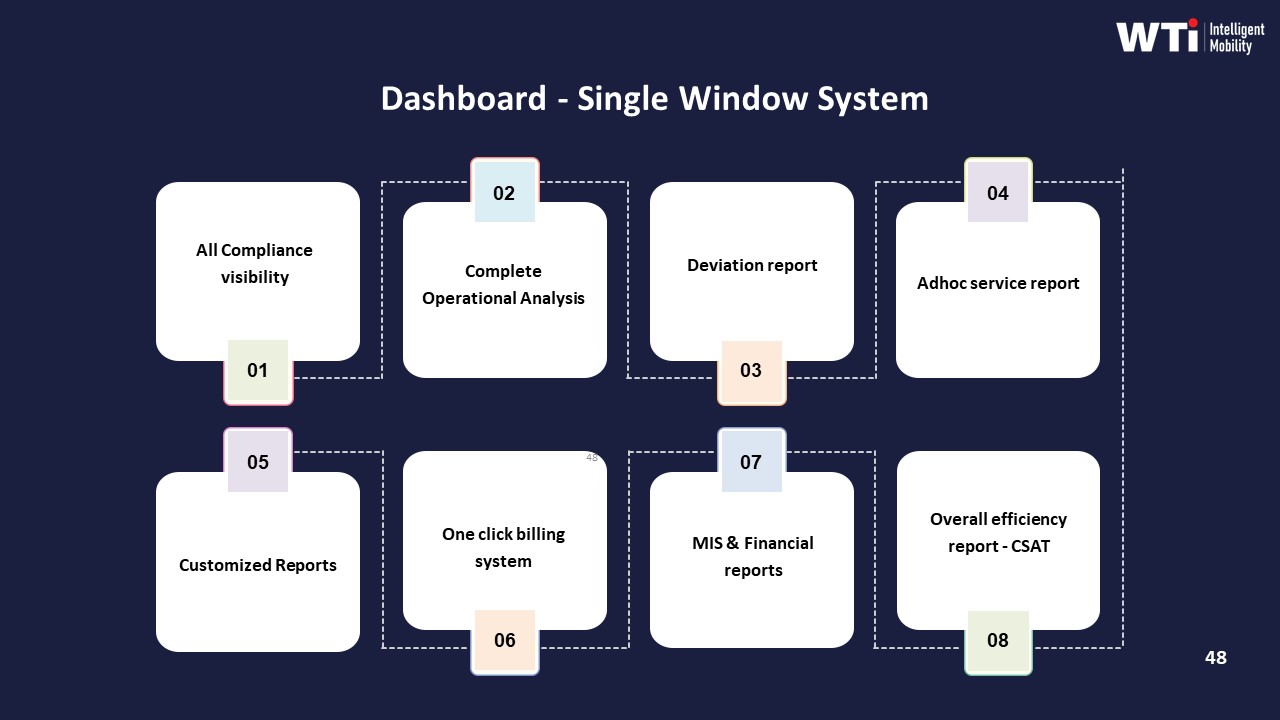

Effective programs also centralize observability through a 24x7 command center and agreed KPIs. On‑time performance, trip fill ratio, dead mileage, and complaint closure SLAs are tracked in one dashboard instead of fragmented spreadsheets. HR can see how routing choices impact commute experience metrics. Finance can see cost per trip trends tied directly to seat‑fill and dead‑mile data. Facilities gets early alerts on risk (driver shortages, route congestion, charging gaps) and can trigger predefined playbooks instead of improvising under pressure.

To avoid constant escalations, mature EMS setups use structured governance rather than one‑off exceptions. There is a documented escalation matrix and a quarterly review where HR, Finance, and Transport jointly review routing performance, exceptions, and cost. Policy changes, like adjusting pooling thresholds or adding standby vehicles for critical shifts, are decided in that forum and then codified into SOPs and the routing engine. This reduces nightly negotiation and shifts the debate to data and trends, not anecdotes.

Successful programs also accept that some cost is “protective spend.” For example, running buffer vehicles or allowing lower pooling in certain night windows can increase CET slightly but prevent service failures, safety incidents, and attrition. Finance is more likely to support this when there is a clear, quantified baseline and when these exceptions are transparent and capped by predefined rules rather than informal requests from Facilities.

Over time, this approach turns routing and rostering from a nightly political argument into a governed system. The core ingredients are explicit cross‑functional rules, integrated data, a central command center, and outcome‑linked contracts that balance OTP, safety, and utilization. These elements together reduce reactive firefighting and give each stakeholder a defensible position backed by shared evidence instead of conflicting narratives.

How do we set a roster freeze time and late-change policy that feels fair to employees but still keeps routes stable and on time?

B1133 Roster freeze and late-change policy — In India corporate EMS, how should HR and Facilities decide the “roster freeze” time and late-change policy (cutoffs, approvals, exception categories) so the program is fair to employees but doesn’t destroy routing stability and on-time performance?

In India corporate EMS, HR and Facilities should set roster freeze and late-change rules by working backward from shift start times and OTP targets, then layering exception categories, approval paths, and penalties or guardrails on top. The freeze time must protect routing stability and driver fatigue limits, while the exception policy must be narrow, auditable, and clearly communicated so employees see it as fair rather than arbitrary.

A practical pattern is to define a standard freeze window for all “normal” bookings and then a tighter, capped window for late requests and changes. Roster freeze needs to sit before the last routing and vendor distribution run, so dynamic routing engines and command centers can finalize manifests, seat-fill, and dead mileage control. If freeze is too close to shift start, route recalculation becomes reactive, which erodes OTP% and increases driver stress.

Late-change policy needs three elements. There should be explicit cutoffs by timeband (day vs night shifts), with anything beyond those treated as an exception rather than a right. There should be exception categories defined in HR policy (for example medical emergencies or critical business continuity cases) with specific approval owners, so command center staff do not have to negotiate edge cases on the fly at 2 a.m. There should be telemetry-backed review of exception volumes and their OTP impact so HR can refine rules without guesswork.

To make the policy feel fair, HR should link it to clear communication, visible data, and consistent enforcement. Employees need simple app workflows for regular bookings within the freeze window and transparent messaging when requests fall into “late change” buckets. Facilities teams need SOPs that explain who can override routing after freeze, what SLA still applies, and how these overrides are logged for later KPI and cost analysis. Shared dashboards that show OTP, no-show rates, and late-change trends help CHROs defend the policy to leadership and employees as a necessary guardrail for reliability, safety and driver well-being.

When we say the roster is editable, who should be allowed to change it, what approvals are needed, and how do we avoid edit wars and finger-pointing?

B1134 Editable roster governance and controls — In India corporate employee transport (EMS), what does “editable roster” mean in practice—who is allowed to edit what (HR, transport desk, vendor, NOC), what approvals are needed, and how do you prevent edit wars and blame-shifting when something goes wrong?

An “editable roster” in Indian corporate employee transport means the shift-wise pickup/drop list can be changed after it is first generated, but only within a controlled, role-based workflow with audit trails. The roster is not a free-for-all spreadsheet. It is a governed object where HR, transport desk, NOC, and vendors each have clearly defined permissions, cut-off times, and approval rules.

In practice, most organizations treat HR or line managers as owning who is eligible to travel and what shift they are on. HR or the HRMS feeds master shift data and eligibility flags into the transport platform. The transport desk or central NOC then owns operational routing. The desk can add or remove employees from a particular run, swap vehicles, and re-sequence routes within policy limits. Vendors usually only see the final driver manifest. They cannot unilaterally change who rides where. They may propose changes back to the desk when there is a breakdown or driver shortage, but the command center approves and publishes any change.

Edit rights are often tiered by risk. Low-risk changes like correcting a phone number, toggling a pickup landmark, or tagging an escort can be done by the transport desk without extra approvals. High-impact changes like adding a new woman employee to a night drop, changing escort rules, or overriding cut-off times usually require HR or Security/EHS approval, often via a short in-app workflow. The EMS system logs who initiated the change, who approved it, and when. This enables the Facility or Transport Head and HR to reconstruct exactly what happened on a problematic trip.

To prevent edit wars and blame-shifting, mature EMS setups rely on a few operating rules. There is a defined roster freeze or cut-off time per shift, after which only the NOC or duty manager can override the roster. Any override is visible to all parties on a single dashboard, with version history and change reasons captured. The vendor app receives read-only manifests linked to that version, so drivers are never improvising based on phone calls alone. Escalation matrices and SLAs define who can change a trip inside the “red zone” before departure, and any late edits trigger alerts rather than silent updates.

Most teams find it safer to keep eligibility and shift assignment editable by HR, operational routing editable by the transport desk and NOC, and vendor input constrained to exception signals such as “vehicle unavailable” or “driver no-show.” That division of control reduces scope for vendors to be blamed for decisions they did not take, and it protects transport heads from being blamed for HR-driven last-minute changes. The NOC acts as final arbiter during live operations. This structure also supports auditability and compliance, especially for women’s night-shift routing, escort compliance, and incident investigations.

How do we check if a vendor will actually cut down manual roster changes, not just show better-looking routes, and what should we demand in a pilot?

B1135 Proving toil reduction in pilot — In India EMS routing & rostering, how should a buyer evaluate whether a vendor’s system reduces manual roster edits (the real toil) rather than just producing nicer route outputs, and what should be demonstrated in a pilot to prove it?

In India EMS routing and rostering, buyers should evaluate vendors on how much they reduce real control-room toil per shift, not on how “smart” the routes look on slides. The core test is whether manual edits, last‑minute calls, and spreadsheet juggling drop sharply while OTP, seat‑fill, and compliance remain stable or improve.

A common failure mode is routing engines that optimize once on static data. Transport teams then spend hours fixing missed shift windows, wrong escort allocation, and HRMS mismatches. Systems that reduce toil ingest roster changes, hybrid-work patterns, and attendance updates continuously. Systems that do not reduce toil generate “optimal” routes that are constantly overridden by operators.

Buyers should insist on a pilot where the vendor runs live routing and rostering on a real site with real constraints, under joint supervision from Transport, HR, and Security. The routing engine should integrate with HRMS or roster sources, apply escort and women-safety rules, and expose a command-center interface for exceptions and SLA monitoring.

In the pilot, the buyer should track a small, explicit metric set:

- Manual interventions per shift. Count how many trips or routes the control room edits after auto-routing.

- Time to lock roster. Measure time from HRMS roster freeze to “routes final and shared with employees.”

- On-Time Performance and Trip Adherence Rate. Confirm OTP% and route adherence do not degrade as edits reduce.

- Seat-fill and dead mileage. Check that seat utilization and dead mileage move in the right direction.

- Exception latency. Measure detection-to-closure time for no-shows, cancellations, and last-minute shift changes.

A strong pilot demonstrates that, over a few weeks, manual edits per shift and total planning time trend down, while OTP and safety/compliance metrics remain stable or improve. A weak pilot shows “good looking” routes that still require heavy editing, with no measurable drop in control-room workload.

What real constraints usually break our routes—gates, security, escorts, vehicle rules, driver shift limits, weather—and how do we design routing so it doesn’t become daily exceptions?

B1136 Accounting for real-world constraints — In India corporate EMS, what practical “real-world constraints” typically break routing plans (site gate queues, security checks, escort availability, vehicle type rules, driver shift limits, monsoon disruptions), and how should routing & rostering processes account for them without daily exceptions?

In India corporate employee mobility services, routing plans usually fail when they ignore fixed on-ground constraints like gate queues, security protocols, escort rules, driver duty limits, and weather or traffic patterns. Routing and rostering need to treat these as inputs to the plan and as hard rules in the routing engine, not as exceptions that are “handled later” by the transport desk.

Real-world breakdowns usually come from non-negotiable steps at the site or on the road. Site-gate queues and security checks create predictable bottlenecks that stretch boarding and de‑boarding times. Women-escort availability, women-first policies, and night-shift safety protocols create rigid ordering and pairing rules for routing. Driver duty-hour limits and fatigue norms restrict maximum driving and shift lengths. Monsoon and festival traffic patterns create recurring corridor-level delays that make theoretical ETAs meaningless on certain routes and timebands.

If routing ignores these, operations teams end up “re-routing by phone” every day. This increases exception handling, stresses drivers, and erodes on-time performance and safety compliance. Transport heads then spend night shifts firefighting GPS failures, last-minute roster changes, and vendor response gaps instead of running a calm control room.

Routing and rostering processes work better when they encode these constraints upfront instead of treating them as afterthoughts. Boarding and security times must be modeled as time penalties per site and timeband. Escort constraints and vehicle-type rules must sit inside the routing logic as hard constraints that cannot be violated. Driver shift limits should drive maximum trip length and number of trips per duty cycle.

Operations teams benefit when the routing engine is tuned with monsoon-specific and corridor-specific travel times and when there is an agreed buffer policy by shift window. The ETS Operation Cycle and dynamic route optimization practices used during Mumbai monsoon management, which achieved a 98% on-time arrival rate and higher customer satisfaction, show that weather and traffic can be pre-encoded into route planning rather than managed as daily surprises.

To reduce daily exceptions, organizations can adopt a few clear practices in their routing and rostering playbooks. Transport and HR teams should lock site-specific rules such as gate opening times, minimum check-in lead times, and security slot capacities into master data. Safety and EHS teams should define explicit rules for women-centric routing, escort pairing, and night corridors, and these should be applied as non-editable constraints in the routing engine.

Driver duty and fatigue rules need to be translated into simple caps, such as maximum duty hours per shift and minimum rest periods before the next shift, and routing should be prevented from assigning trips that would break these limits. Seasonal playbooks, like those used for monsoon management, should define alternative routes, added buffers, and higher fleet buffers for high-risk corridors and timebands, so that the routing engine automatically switches profiles during those periods.

When these rules are embedded into centralized command-center operations and automated routing, the transport desk receives plans that are realistic and compliant by design. This reduces the volume of last-minute modifications, keeps on-time performance near targets, and cuts down escalations to HR and senior leadership.

With hybrid attendance changing daily, how do seat-fill goals affect routing, and what rules stop us from chasing utilization and hurting reliability or trust?

B1137 Seat-fill under hybrid demand swings — In India employee commute routing (EMS), how do seat-fill targets interact with hybrid demand elasticity (variable attendance, WFH swings), and what governance prevents the system from over-optimizing utilization at the expense of reliability and employee trust?

Seat-fill targets in Indian employee commute routing improve cost efficiency, but they must be explicitly subordinated to reliability, safety, and employee experience when demand is hybrid and attendance is elastic. Most mature EMS programs treat seat-fill as a constrained optimization outcome inside a governed routing and SLA framework, not as the primary objective.

In hybrid environments with variable WFH/WFO patterns, routing engines use shift windowing, dynamic clustering, and trip fill ratio (TFR) thresholds to pool employees. This reduces dead mileage and lowers cost per employee trip. However, the same elasticity creates higher volatility in no-show rates, last‑minute bookings, and shift changes. If algorithms chase maximum seat-fill without guardrails, a common failure mode is brittle routing. Employees experience tighter pickup windows, detours, and more frequent reassignments, which degrade on-time performance and trust.

Governance frameworks counter this by hard-coding reliability and safety as non-negotiable constraints. On-time performance (OTP%), trip adherence rate, maximum routing deviations, and night-shift escort or women‑first rules are set as policy boundaries within the routing logic. Central 24x7 command centers monitor real-time telematics, exceptions, and SLA dashboards, and they intervene when optimization begins to threaten adherence or safety norms. Penalty and incentive ladders are often tied to OTP, incident rate, and complaint closure SLAs rather than pure utilization, which discourages over-optimization of seat-fill.

Procurement and Finance teams increasingly adopt outcome-based contracts where payouts link to reliability, safety, and experience KPIs alongside utilization. This creates a multi-objective equilibrium in which high TFR and low dead mileage are rewarded only if they coexist with governed OTP, incident-free operations, and acceptable Commute Experience Index scores. HR, Security/EHS, and ESG leads then use audit trails, feedback data, and emission metrics to validate that routing policies respect employee trust, duty-of-care obligations, and ESG commitments rather than treating employees purely as capacity to be maximized.

How do we catch routing and rostering issues early—like infeasible routes or wrong manifests—before they turn into night-shift escalations?

B1138 Preventing silent routing failures — In India corporate ground transportation EMS, what are the most credible ways to detect and prevent “silent failure” in routing & rostering (routes generated but not feasible, bad ETAs, wrong manifests) before it becomes a night-shift escalation?

In Indian EMS operations, the most credible way to prevent “silent failure” in routing and rostering is to treat routes as hypotheses that must be stress‑tested by data and a command center before drivers and employees see them. Routes that are not validated against live traffic, shift windows, seat‑fill, and compliance rules tend to look fine on paper but fail on the road.

Silent routing failures usually appear where demand is volatile, data is fragmented, or vendors are managed manually. Unstable hybrid attendance, incomplete HRMS integration, and weak NOC observability mean the routing engine works in isolation. Most organizations only discover infeasible ETAs or wrong manifests when night‑shift employees start calling, which pushes Transport back into firefighting and escalations to HR.

Operations teams reduce this risk by building a pre‑shift “route assurance” layer in the command center. This includes automated checks on trip adherence risk, seat‑fill anomalies, dead mileage, female‑first routing, escort rules, and driver duty cycles before routes are locked. Command‑center tools that fuse telematics, HRMS rosters, and historical OTP% give early warnings on unrealistic ETAs or overloaded sequences while there is still time to fix them.

Pragmatic operators also run live pilots and case‑study style validations for new patterns. For example, some providers demonstrate 98% on‑time arrival in monsoon conditions through dynamic route recalibration, real‑time driver communication, and a dedicated command desk, which is strong proof that their routing engine and SOPs survive real‑world stress.

To keep silent failures from turning into 2 a.m. crises, facility heads typically rely on three control levers:

- Upstream data discipline from HRMS and attendance systems so the routing engine sees the real roster.

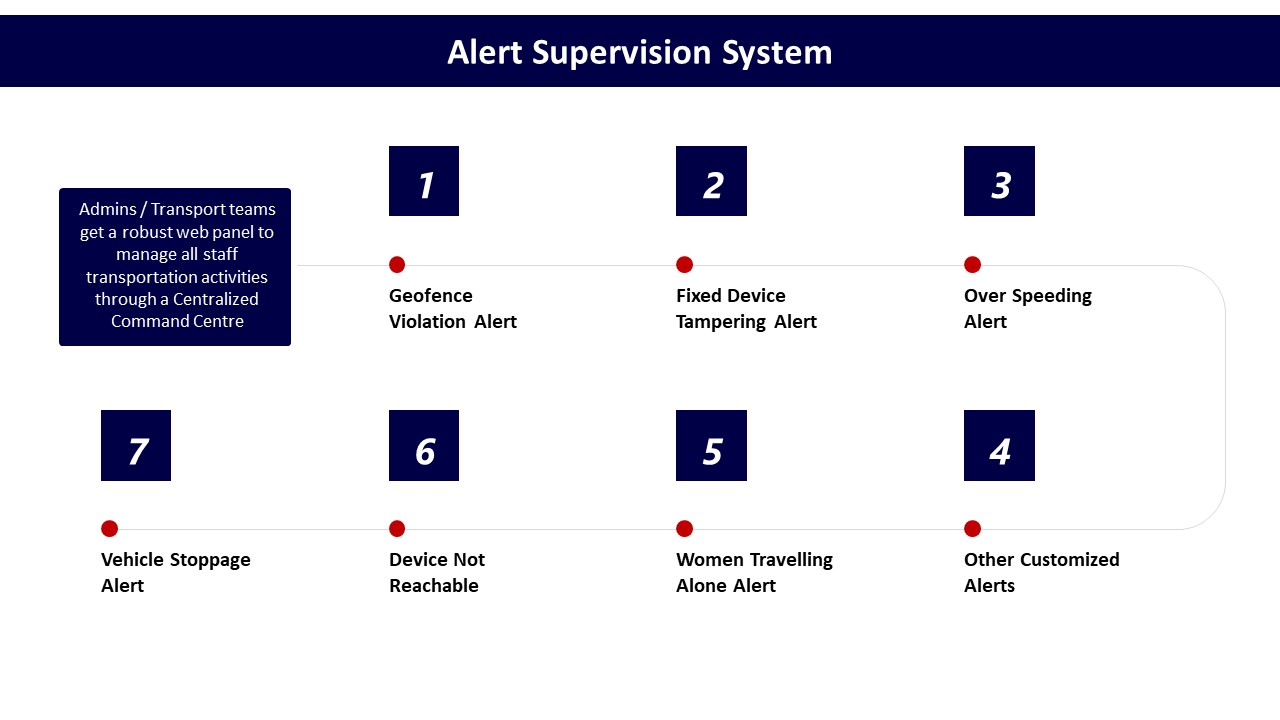

- A 24x7 command center with alert supervision, geo‑fencing, and escalation matrices for early intervention.

- Outcome‑linked KPIs such as OTP%, Trip Adherence Rate, and exception closure SLAs embedded in vendor contracts and dashboards.

When route design, command‑center oversight, and SLA governance work as a single loop, most routing and rostering failures are caught hours before the shift instead of during it.

If routing changes claim savings from higher seat-fill, what should Finance ask to uncover hidden costs like delays, escalations, extra cabs, or overtime?

B1139 Finance questions on seat-fill savings — In India EMS programs, how should Finance challenge routing & rostering changes that claim savings via higher seat-fill—what questions expose hidden costs like late pickups, escalations, extra vehicles, and overtime that don’t show up in per-seat math?

Finance teams should challenge “seat-fill driven savings” by forcing vendors to connect routing and rostering changes to full-trip economics, reliability KPIs, and downstream impact on shifts and overtime. Finance exposes hidden costs by asking for reconciled data across OTP, trip adherence, dead mileage, extra deployments, and escalations instead of accepting narrow per-seat or per-km math.

Finance should first insist that any new routing or pooling logic is evaluated at the level of cost per employee trip and total cost of ownership, not just cost per seat or per route. A higher Trip Fill Ratio can reduce nominal per-seat cost but often increases detours, dwell times, and risk of late pickups that erode value through productivity loss, night-shift penalties, or extra vehicles. Finance should require that vendors show route-level On-Time Performance, exception rates, and dead mileage before and after the change, and that these numbers reconcile with GPS logs and billing.

A common failure mode is when aggressive pooling mandates cause longer ride-times and more complex shift windowing. This typically drives more escalations to the Facility / Transport Head, more manual re-routing, and unplanned “backup” cabs that are not visible in headline seat-fill metrics. Finance should therefore ask specific questions that tie operations to cost and risk:

- “Show OTP%, Trip Adherence Rate, and exception closure time for these routes before and after the routing change.”

- “What was the change in dead mileage, average trip duration, and number of stops per trip after we increased seat-fill?”

- “How many extra vehicles, ad-hoc trips, or backup deployments were triggered due to missed or tight shift windows?”

- “Quantify overtime payout, extra shift allowances, and any additional guard/escort costs linked to delayed pickups after the new routing.”

- “How many safety or service escalations, especially for women’s night shifts, were logged in the same period, and what was their closure SLA?”

- “Did No-Show Rate, cancellation rate, or employee complaints increase for these optimized routes?”

- “Provide a route-wise comparison of Cost per Employee Trip and total monthly spend, including ad-hoc cabs and incident-driven trips, not only rostered trips.”

- “How does the routing engine respect shift windowing and female-first policies, and what guardrails prevent over-pooling or unsafe detours?”

- “What is the impact on Vehicle Utilization Index and fleet uptime—are we over-stretching a smaller fleet and increasing breakdown or downtime risk?”

- “Can we simulate a week of disruption (rain, strikes, events) under the new design and show how many extra vehicles or manual overrides would be required?”

These questions force vendors and internal teams to surface hidden overtime, buffer vehicles, and escalation handling that usually sit outside simple seat-fill reporting. They also align Finance with Operations and HR by linking routing decisions to reliable OTP, safety compliance, and employee experience, rather than accepting “paper savings” that increase night-shift firefighting.

What are the signs our transport desk is hitting change-fatigue from routing/rostering—too many edits and edge cases—and how do mature teams fix the process before adoption collapses?

B1140 Detecting change-fatigue in transport desk — In India corporate EMS, what operational behaviors indicate routing & rostering is creating change-fatigue for the frontline transport desk (too many edits, too many edge cases), and how do mature teams redesign the process so adoption doesn’t collapse?

In India corporate employee mobility, change-fatigue at the transport desk usually shows up as rising manual edits to routes and rosters, increasing exception volume per shift, and growing reliance on informal workarounds instead of the official routing engine. When routing and rostering rules change too often, or are over‑fitted to edge cases, frontline teams stop trusting the system and adoption quietly collapses into manual firefighting.

Operational stress becomes visible when rosters are re-run many times close to shift start. It also shows when command center staff frequently override the algorithm, reassign vehicles ad hoc, or bypass apps for calls and WhatsApp. A common failure pattern is routes that look optimal on paper but ignore driver fatigue, city‑specific traffic patterns, or EV charging realities. Another pattern is policy churn from HR or security that keeps adding micro‑rules for women-safety, escorts, and exceptions without simplifying how the desk executes them.

Mature teams stabilize routing by freezing core rules for defined review cycles and separating “policy” from “exceptions.” They use the command center as a control room that absorbs demand variability through capacity buffers and standby vehicles instead of constant re‑routing. They codify only a small set of exception types with clear SOPs and escalation paths, and keep the majority of trips on standard templates.

They also link the routing engine tightly with HRMS rosters so attendance changes flow automatically, reducing manual data entry. EV-using programs design fixed charging windows aligned to shift bands instead of ad hoc top-ups that cause last-minute route edits. Mature operations track OTP, trip adherence, driver fatigue, and re-route counts together, and use data-driven reviews to adjust fleet mix, shift windowing, and seat-fill targets rather than tweaking individual routes daily.

To protect the desk from burnout, leading operators define a simple “playbook per shift” that clarifies what must be handled by the system, what the desk can decide locally, and what goes to escalation. They treat new rules like change management, not just configuration, with short field pilots, daily shift briefings, and feedback loops from drivers and supervisors before scaling changes systemwide.

How do we set leadership expectations on what routing/rostering can realistically guarantee, so Ops isn’t set up to fail when exceptions happen?

B1141 Setting leadership expectations for routing — In India employee mobility services (EMS), how do you set realistic expectations with senior leadership on what routing & rostering can and cannot guarantee (OTP, seat-fill, fewer exceptions), so the Transport Head isn’t set up to fail politically?

In Indian employee mobility services, realistic expectations are set by converting routing and rostering from a “magic lever” into a governed, data-backed capability with clearly defined guarantees, preconditions, and failure modes. Leadership confidence comes from codified SLAs, visible constraints, and evidence-based reporting, not from blanket promises on OTP, seat-fill, and exception elimination.

Transport leaders need to frame routing and rostering as one layer in a broader operating model that also depends on driver availability, fleet mix, shift patterns, and command center responsiveness. Unrealistic promises emerge when leadership sees routing tools as standalone “AI” that can fix structural issues like under-sized fleets, chronic no-shows, or charging gaps in EV operations. A common failure mode is committing to near-perfect OTP without linking that target to shift windowing, dead-mile caps, and buffer capacity policies.

Realistic expectation-setting usually includes explicit operating bands for OTP and seat-fill linked to time bands, corridors, and attendance variability. It also includes a shared understanding of which exceptions can be predicted and contained by routing logic, and which are fundamentally external disruptions that require playbooks and buffers rather than punitive SLAs. Outcome-linked procurement helps here, because it ties payouts to specific KPIs under defined conditions rather than generic “best-effort” claims.

Transport Heads are politically protected when routing and rostering performance is monitored via a central command center with agreed KPIs, exception taxonomies, and closure SLAs. This structure makes it clear which breakdowns are process leaks versus vendor non-performance versus genuine force majeure. Structured governance, escalation matrices, and periodic performance reviews turn routing from a personal promise into an institutional contract that leadership can inspect and refine instead of blame.

When selecting a routing/rostering vendor, what proof should Procurement ask for so we don’t buy a great demo that fails with real constraints and late changes?

B1142 Procurement proof against demo-ware — In India EMS vendor selection for routing & rostering, what proof points should Procurement insist on to avoid over-promised “smart routing” (repeatable outcomes, constraints handling, late-change performance) rather than impressive demos?

Procurement teams shortlisting EMS routing and rostering vendors in India should insist on hard, repeatable proof of performance under real constraints rather than accepting generic “smart routing” demos. The most reliable proof points focus on outcome metrics over time, behavior under late changes and disruptions, and evidence that India-specific constraints and compliance rules are encoded into the routing engine rather than handled manually by ops teams.

Vendors should demonstrate sustained on-time performance for shift-based EMS across multiple sites and months, not just a few good days. Procurement should ask for anonymized historical OTP%, Trip Adherence Rate, and seat-fill data, plus evidence of dead-mileage control and fleet utilization. Repeatable outcomes become credible when supported by consistent on-time performance, stable exception-closure times, and verified reduction in route cost per employee trip after deployment.

Constraints handling is best validated by examining how the routing engine incorporates real-world India EMS rules. Procurement should request configuration evidence and sample route outputs that respect female-first policies, night-shift escort rules, cab capacity and pooling policies, rest-hour norms for drivers, geo-fencing of red-flagged localities, and security approvals for certain routes. A common failure mode is when “optimization” silently breaks safety or compliance rules to reduce kilometers, so Procurement should insist on explicit documentation of which business and safety constraints are hard-coded and which are operator overrides.

Late-change performance should be assessed through live or recorded scenarios rather than static demos. Procurement can ask vendors to replay real cases showing how the system handles last-minute roster changes, no-shows, cab breakdowns, sudden traffic disruptions, or weather events while preserving shift adherence. The quality of the SOPs, alerting, and escalation surrounding the routing engine often matters more than the UI, so documentation of command-center workflows, exception SLAs, and business continuity playbooks is as important as the algorithm itself.

To make these assessments practical and defensible, Procurement can convert them into evaluation criteria, such as:

- Provision of time-bound, multi-site historic KPI data with defined calculation methods.

- Evidence of encoded safety and compliance rules specific to Indian EMS operations.

- Demonstrated ability to re-route and re-roster within defined time limits after late changes.

- Audit logs and trip ledgers that show how routes were generated, overridden, and executed.

These proof points reduce the risk of over-promised “AI routing” and anchor selection on verifiable performance under the same constraints that the buyer’s transport and HR teams face every night.

From an IT angle, how do we judge if a routing/rostering setup will create long-term workarounds and operational debt even if the initial rollout looks fine?

B1143 IT assessment of operational debt risk — In India corporate employee transport (EMS), how should a CIO assess whether routing & rostering workflows will create long-term operational debt (manual workarounds, brittle integrations, spreadsheet side-processes) even if the first rollout seems successful?

A CIO should assess routing and rostering workflows for long-term operational debt by stress‑testing how they behave when demand, policies, and integrations change, not just whether the first rollout works. Operational debt usually appears where platforms cannot absorb hybrid-work variability, HRMS changes, multi-vendor realities, or new safety/ESG rules without manual side-processes, spreadsheets, or ad‑hoc scripts.

Early in evaluation, routing and rostering should be reviewed as part of a broader EMS stack that includes the routing engine, driver and rider apps, HRMS integration, and the 24x7 command-center workflow. Long-term stability depends on whether routing rules, shift windowing, seat-fill targets, and escort policies are configurable in the system or repeatedly “fixed” by manual overrides in the NOC. A CIO should look for clear API-first integration patterns into HRMS and ERP rather than flat-file exchanges that will proliferate reconciliation work later.

A common failure mode is when hybrid attendance, changing shift patterns, and EV/ICE mix require ongoing parameter changes that only the vendor can do. This often leads to cloned spreadsheets, shadow routing, and alternate trip ledgers. Another red flag is when the platform cannot expose canonical trip and roster data into a governed mobility data lake, which prevents automated KPI tracking for OTP, Trip Adherence Rate, dead mileage, and seat-fill.

Practical signals that routing and rostering will not create operational debt include: admin users can change routing constraints without code, HRMS and approval workflows are integrated via stable APIs, the command center runs primarily from a single dashboard instead of Excel, and all trip lifecycle events feed into auditable logs and analytics without re-keying.

After go-live, what weekly/monthly routine should Ops run to keep routing and rostering stable—rule tuning, RCA, guardrails—so we don’t slip back into firefighting?

B1144 Post-go-live governance for stability — In India EMS post-go-live, what operating rhythm should a Facility/Transport Head run to keep routing & rostering stable (weekly rule tuning, exception RCA, seat-fill guardrails) without drifting back into constant firefighting?

An effective EMS operating rhythm for a Facility/Transport Head in India combines a tight daily command-centre routine with one structured weekly review and a lighter monthly reset. This rhythm keeps routing and rostering stable, protects seat-fill economics, and pushes exceptions into predictable review slots instead of 2 a.m. firefighting.

The daily backbone is a control-room style routine anchored on the command centre and dashboards. Transport teams should use tools like real-time GPS tracking, alert supervision, and compliance dashboards to monitor OTP, no-shows, over-speeding, and SOS events in-shift. Exceptions such as geofence violations, app downtime, EV low-battery alerts, or driver shortages should follow clear SOPs and escalation matrices, so decisions are playbook-driven rather than improvised.

The weekly cadence should be a fixed “routing and rostering clinic” with Transport plus HR/ Security representation. This forum reviews route-level KPIs like OTP%, Trip Adherence Rate, Trip Fill Ratio, dead mileage, and incident logs. The team should tune rules such as seat-fill thresholds, buffer vehicles, night routing for women, EV vs ICE mix by shift, and vendor allocation using data from dashboards and management reports. Root-cause work on late trips, repeat driver or route issues, and recurring safety alerts should close with explicit corrective actions and owners.

A light monthly cycle should recalibrate policies against hybrid-attendance patterns, seasonal disruption (e.g., monsoon) and ESG/EV goals. This is where leaders can adjust fleet mix, long-term rental versus on-demand share, and update BCP scenarios for strikes, tech failures, or infrastructure disruptions. The Facility/Transport Head protects their own bandwidth by insisting that ad-hoc changes feed into these weekly or monthly slots, so continuous improvement happens systematically and the command centre operates in a calm, predictable way rather than permanent crisis mode.

How can we handle last-minute roster changes fairly without pushing the transport team into endless edits and 3 a.m. calls?

B1145 Fair late-change handling without chaos — In India corporate EMS, how do you handle late roster changes (employee cancellations, shift swaps, WFH toggles) in a way that feels fair to employees but prevents the transport team from getting dragged into endless manual edits and 3 a.m. calls?

In Indian corporate EMS, late roster changes are best handled by codifying clear cut-off rules in policy, enforcing them in the transport system, and giving employees self-service tools with transparent consequences so operations is not editing rosters manually at 3 a.m.

A practical pattern is to define tiered cut-off windows for each shift and lock routing after that point. Any change before the cut-off is auto-accepted and re-routed by the system. Any change inside the “late window” is either disallowed or allowed with defined conditions, such as loss of guaranteed pickup or a nominal charge-back to the cost centre. This keeps rules consistent across sites and vendors and removes subjective case-by-case negotiation.

Transport heads avoid manual firefighting when employee apps, driver apps, and the command center all work off the same live roster and routing engine. In many EMS stacks like Commutr, employees can mark WFH, cancel, or request ad-hoc trips directly in the app, while the routing engine automatically recalculates routes, seat-fill, and ETA where still feasible. The command center only intervenes on genuine exceptions or safety cases rather than every minor change.

For fairness, organizations usually align roster cut-offs with HRMS shift rules and communicate them as part of employee mobility policy, not as a “transport rule.” HR broadcasts SOPs, uses in-app notifications, and publishes what is guaranteed versus “best-effort” after cut-off. When outcome-linked contracts and SLAs are in place, OTP and seat-fill targets are protected because last-minute volatility is bounded by design rather than absorbed by the transport team.

What are the warning signs we’re over-optimizing routing—seat-fill, clustering, freeze times—and building in reliability risk that will later blow up?

B1146 Warning signs of over-optimization — In India EMS routing & rostering, what are practical indicators that the organization is “over-optimizing” (too aggressive seat-fill, too tight clustering, too late freeze) and creating hidden reliability risk that will eventually surface as incidents and escalations?

In employee mobility routing and rostering, over‑optimization shows up as rising operational friction even when dashboards still look “efficient.” The most practical indicators are patterns of stress in OTP, drivers, and exception handling that repeat across shifts instead of one‑off bad days.

One clear sign is when seat‑fill and dead‑mile metrics improve, but on‑time performance starts to fluctuate by shift or corridor. Another is when the routing engine keeps recomputing till the last minute, so manifests and vehicle details reach employees and drivers very close to pickup. Operations heads then rely on manual calls and WhatsApp to “stabilize” each shift, which means the algorithm is pushing beyond what the field can safely execute.

Hidden reliability risk also shows up as over‑tight clustering in difficult geographies or timebands. In India this typically means routes that look optimal on paper but ignore local congestion, monsoon patterns, security requirements, or escort rules. Facility and Transport Heads then see more near‑misses: drivers rushing to cover unrealistic legs, escort or guard rules bent to save one more seat, and informal rerouting without traceable approval. These do not break SLAs every day, but they accumulate into eventual incidents and escalations.

Common practical signals that routing and rostering are over‑optimized include:

• OTP% is still within contract, but variance between best and worst routes or shifts is widening.

• Exception closure time during night or monsoon shifts is rising, despite “better” route KPIs.

• Drivers report fatigue, missed breaks, or repeated requests to “adjust just this one trip” to accommodate late additions.

• The command center spends more time manually overriding or splitting “perfect” routes than monitoring and learning from data.

When these patterns persist, most organizations are trading short‑term cost and seat‑fill gains for higher safety, incident, and business‑continuity risk that will surface later as women‑safety escalations, audit gaps, and visible service failures.

How should we explain routing and rostering changes to employees—pooling changes, pickup time shifts—so trust stays intact and complaints don’t flood HR?

B1147 Employee communication for roster changes — In India corporate employee transport (EMS), how should the Transport Head communicate routing & rostering trade-offs to employees (why pooling changed, why pickup time moved) to preserve trust and adoption instead of triggering complaints and escalations to HR?

In corporate employee transport in India, Transport Heads preserve trust during routing and rostering changes by explaining decisions in simple operational language, linking them to clear safeguards on OTP, safety, and fairness, and backing every change with predictable SOPs for exceptions and feedback closure. Employees accept pooling and pickup shifts more easily when they see that route logic is rule-based, not arbitrary, and when they know how issues will be fixed before they have to escalate to HR.

Transport teams should avoid presenting pooling or time changes as “system decisions.” They should frame them as part of a governed EMS operation that balances three explicit constraints. These constraints are shift adherence and OTP, safety and compliance rules such as women-first routing or escort norms, and practical limits like fleet availability and dead mileage. Each message to employees should name which constraint is being protected. For example, a 10-minute earlier pickup should be explained as necessary to keep the entire route within OTP and statutory duty cycle limits.

A common failure mode is silent or last-minute change communication. This failure mode pushes frustrated employees straight to HR. To avoid this, routing changes should be accompanied by three predictable elements. These elements are advance notification with a reason in plain language, a simple option path for edge cases such as medical or childcare constraints, and a visible loop that shows complaints are converted into route reviews or exception approvals. When employees see that feedback drives periodic roster optimization, they are more likely to stay on pooled routes instead of opting out or escalating.

For our employee transport ops, how do we confirm that routing/rostering is what’s causing the 3 a.m. escalations (not vendor or GPS issues), and what logs or proof should we pull to show leadership?

B1148 Proving routing as root cause — In India corporate Employee Mobility Services (shift-based employee transport), how can a Facility/Transport Head tell whether routing and rostering problems are the real root cause of 3 a.m. escalations versus issues like vendor behavior or GPS gaps, and what evidence should they collect from shift windowing, clustering, and late-change logs to prove it internally?

In shift-based employee transport, a Facility/Transport Head can distinguish routing/rostering failures from vendor or GPS issues by tying every 3 a.m. escalation back to time-stamped, route-level evidence across the full trip lifecycle. Routing and rostering are usually the root cause when the pattern shows structurally bad shift windowing, poor clustering, or unmanageable last-minute changes rather than isolated vehicle or device failures.

Routing and rostering are likely at fault when recurring delays cluster around specific shift windows or routes. Patterns such as pickups scheduled too close to shift start, over-long routes across high-traffic corridors, or under-filled vehicles generating dead mileage indicate a planning problem rather than vendor behavior. If the Vehicle Utilization Index and Trip Fill Ratio are poor while vendor fleet uptime is acceptable, routing logic and capacity planning need correction.

Vendor behavior is more likely the driver when planned ETAs and routing look reasonable but actuals show repeated no-shows, vehicle breakdowns, or driver cancellations on otherwise healthy routes. GPS or app gaps usually show up as short, localized blackouts where driver and rider apps fail simultaneously despite stable route plans and historical OTP on those corridors.

To prove the root cause internally, Facility/Transport Heads should build a basic evidence pack drawn from three specific data streams: • Shift windowing: planned vs actual pickup times by shift, OTP% by shift band, and average buffer between last pickup ETA and shift start. • Clustering and routing: route distance vs actual travel time, seat-fill per route, dead mileage between first and last pickup, and repeated “problem routes” with low Trip Adherence Rate. • Late-change logs: time-stamped roster edits within defined cut-off windows, frequency of last-minute add/drops, and correlation of these edits with exception spikes.

A simple SOP can make this repeatable:

• Tag every escalation to a specific trip ID, route, and shift window.

• Pull the planned route manifest (roster, sequence, timings) and compare with GPS/telematics traces.

• Overlay vendor fleet uptime and driver behavior records to check whether the plan was operationally feasible.

When planned ETAs are unrealistic even under normal conditions, the evidence supports a routing/rostering root cause. When plans are sound but exceptions correlate with specific vendors, drivers, or GPS failures, the evidence supports targeted vendor governance or tech remediation. This approach reduces blame noise and allows the Facility/Transport Head to walk into internal reviews with auditable proof instead of anecdotal explanations.

In our routing and rostering, which real-world constraints usually break an otherwise ‘good’ roster, and how do we pressure-test those before we blame drivers or vendors?

B1149 Constraints that break rosters — In India corporate Employee Mobility Services routing and rostering, what are the most common real-world constraints (shift windowing, pickup sequencing rules, guard/escort constraints, location clustering, vehicle-type mix) that cause ‘perfect-looking’ rosters to fail in execution, and how should operations teams pressure-test those constraints before blaming drivers or vendors?

Most routing and rostering failures in Indian employee mobility look like driver or vendor issues, but usually come from unrealistic constraints in the roster design itself. Operations teams need to pressure-test shift windows, pickup rules, escort policies, clustering, and vehicle mix against real traffic, driver duty cycles, and charging or fueling realities before holding vendors accountable.

The most common constraint patterns that break “perfect” rosters in execution are timing-related. Shift windowing is often set to theoretical login/logout times without realistic buffers for gate security, elevator time, and known congestion corridors, which pushes even a single delayed pickup into a cascading OTP failure. Pickup sequencing rules that enforce strict “ladies first / last drop” or fixed first-pickup locations can conflict with live traffic patterns and one-way or no-parking zones, so a route that is optimal on a map becomes impossible on road.

Escort and women-safety constraints introduce additional fragility. Night-shift requirements for guard or escort pairing, female-first policies, and “no single woman alone in cab” rules can fail when attendance fluctuates, last-minute cancellations occur, or escort rostering is not synchronized with vehicle routes, leading to either non-compliance or forced last-minute rerouting. Location clustering also creates problems when micro-clusters ignore real choke points, construction, seasonal disruptions such as monsoon flooding, or campus gate rules, so geographically tight routes still run late.

Vehicle-type mix is another hidden failure source. EV deployment without matching charging windows to shift bands, high-mileage routes, and charger availability can produce “range anxiety” and unplanned swaps, while overuse of small sedans on high-load corridors drives dead mileage and re-routing. These problems increase when hybrid work patterns cause volatile seat-fill and when routing engines are tuned for average conditions rather than worst-case peaks.

Operations teams should pressure-test constraints through simple but disciplined checks before escalating to vendor blame. They should run dry-run drive tests on critical routes during actual shift bands, measure door-to-desk times instead of only gate-to-gate, and compare planned ETAs with historical telematics or GPS data from similar days, traffic, and weather patterns. They should simulate common disruptions such as one or two no-shows, a late escort, or a vehicle breakdown and see whether the roster still respects safety rules and shift windows.

Practical pressure-tests often include: - Validating that guard/escort rosters are generated from the same source data and time bands as cab rosters. - Checking EV or specific vehicle allocations against route length, known charger locations, and predicted dwell times. - Reviewing night-shift women’s routes jointly with Security/EHS to confirm policies can be met without impossible detours.

A common failure mode is designing constraints in isolation. HR defines safety rules, Security defines escort norms, Finance defines cost caps, and Transport is left to “make it work” on a live map. Operations leaders reduce firefighting when they insist on joint reviews of constraint sets, use data-driven route performance analysis, and feed back real exceptions into the routing logic, so constraints are adjusted before the next roster cycle rather than after the next escalation.

real-world inputs, constraints & boundary rules

Codified shift windows, clustering inputs, seat-fill targets, and real-world constraints; test against edge cases like weather disruptions and vendor delays to prevent chaos.

How do we set seat-fill targets that save money but don’t create fairness complaints—like longer ride times or forced pooling—especially on night shifts and women safety routes?

B1150 Seat-fill vs perceived fairness — In India shift-based Employee Mobility Services, how should HR and Facilities define seat-fill targets in routing and rostering so they reduce cost without triggering employee backlash about fairness (longer ride times, forced pooling, perceived ‘punishment’ routes), especially for night shifts and women safety protocols?

In India shift-based employee mobility, HR and Facilities should define seat-fill targets as banded ranges linked to explicit guardrails on ride time, detours, and safety rules, not as a single “maximize pooling” number. Seat-fill should be optimized only after hard limits are set for maximum ride duration, wait time, and women-safety protocols, and these limits must be coded into routing, contracts, and SOPs so employees never feel pooling is a punishment for speaking up or working unpopular shifts.

Seat-fill targets work best when they are contextual rather than uniform. Higher ranges suit dense, safe corridors and day shifts, while lower ranges are appropriate for sparse routes, late-night windows, and women-first routing that may require escorts, restricted pickups, or “door-to-door” patterns. A common failure mode is to push uniform 90–100% pooling on all routes, which lowers cost on paper but increases ride time, route zig-zags, and perceived unfairness, particularly for women on night shifts.

Cost reduction is more sustainable when seat-fill is one variable in a broader routing policy that includes dead-mile caps, shift windowing, and vehicle-mix rules. Contracts and routing engines should prioritize compliance and duty of care first, then optimize seat-fill within those non-negotiable boundaries. HR can protect experience by tracking commute complaints and attrition correlations, while Facilities watches OTP, dead mileage, and Trip Fill Ratio, and both functions jointly adjust seat-fill bands only where service-level and safety KPIs remain green.

What’s a practical way to measure how much manual roster work we do (edits, exceptions, re-routes) and turn that into a finance-friendly automation ROI case?

B1151 Quantifying rostering toil for ROI — In India corporate employee transport routing and rostering, what is a practical way to measure ‘toil’ for the roster team (manual edits, exception handling, re-clustering, last-minute cancellations) and translate it into a business case that a CFO will accept beyond generic ‘automation’ claims?

A practical way to measure roster-team “toil” is to time‑stamp every manual intervention in the routing lifecycle, convert it into a per‑shift “intervention minutes per 100 trips” metric, and then translate those minutes into fully loaded payroll cost, error/leakage cost, and escalation cost that the CFO can audit. This approach converts vague “automation saves time” claims into a defensible before/after unit‑economics story at the route and trip level.