How to run a 24x7 command center that detects early, triages fast, and keeps disruptions from hitting leadership

In peak shifts, a single missed alert can cascade into missed pickups, driver churn, and leadership firefighting. You need more than a demo—you need a repeatable, battle-tested playbook that keeps operations calm even when GPS drops or apps go down. This playbook groups questions into four operational lenses that translate vendor talk into on-ground procedures, with clear ownership, escalation paths, and auditable evidence that survives audits and leadership reviews.

Is your operation showing these patterns?

- Escalation ownership ping-pong between vendor, site, and HR creates delay at 3 AM

- Alerting fatigue with a flood of alerts and unclear ownership

- Vendor dispatch delays during night shifts despite available capacity

- No-shows or late substitutions trigger ad-hoc workarounds that bypass SOPs

- Platform outages or GPS gaps cause rider clusters and stranded drivers

- Audits require incident timelines but data isn’t readily exportable or tamper-evident

Operational Framework & FAQ

Command center governance, escalation ownership, and 24x7 coverage

Defines clear escalation ladders, single-owner accountability, and enforceable 24x7 coverage practices to prevent chaos during peak disruptions.

For our employee transport ops in India, what does a 24x7 command center actually handle day to day—what do they monitor, what do they do first when something goes wrong, and what’s not their job?

B1198 What a 24x7 command center does — In India’s corporate Employee Mobility Services (EMS) operations, what does a 24x7 mobility command center practically do minute-by-minute—what alerts does it watch, what triage steps happen first, and what problems are explicitly out of scope for the command center versus on-ground supervisors?

A 24x7 mobility command center in Indian EMS operations runs a continuous “control-room loop” of monitoring, alerting, triage, and escalation for shift-based employee transport. The command center focuses on data, exceptions, and coordination, while on-ground supervisors own physical interventions, driver handling, and site logistics.

What the command center watches minute‑by‑minute

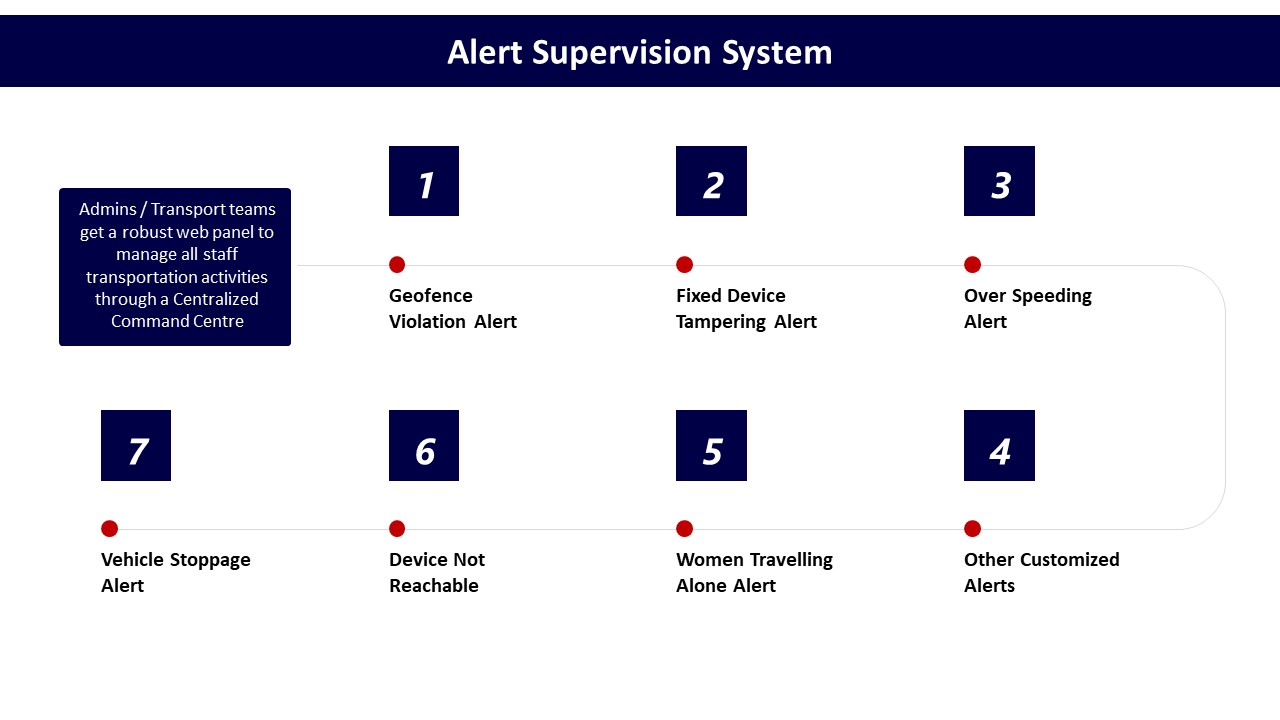

The command center monitors real-time GPS feeds and trip dashboards for all active cabs. It watches on-time performance, route adherence, and geofence violations using alert supervision systems and command-centre dashboards. It tracks over‑speeding, device tampering, and IVMS or dashcam health as part of safety and compliance monitoring. It also supervises SOS triggers from employee apps and driver apps, including panic buttons and safety alerts. It keeps a live view on battery levels and charger status in EV fleets using EV command layers and telematics dashboards. It observes trip fill, dead mileage, and fleet uptime as leading indicators of capacity or reliability risk. It tracks ticket queues, complaint logs, and SLA timers on incident closure using transport command centre tools.

First triage steps when an alert fires

The command center first validates the alert against GPS traces, trip logs, and recent routing decisions. It checks whether the issue is technology-side, such as app downtime, GPS dropout, or telematics failure, versus field-side, such as driver deviation or traffic disruption. It contacts the driver or vendor via defined escalation matrices when route deviation, over-speeding, or device tampering is confirmed. It informs employees and security teams for SOS events and coordinates with safety cells or EHS where women-centric protocols apply. It triggers playbooks for business continuity when patterns suggest wider disruption, such as cab shortages, monsoon traffic, or political strikes. It logs every incident with time stamps and evidence for later audits and SLA reviews.

What is out of scope for the command center

The command center does not physically intervene in accidents, breakdowns, or law‑and‑order situations; these remain with on-ground supervisors, local security, and authorities. It does not perform vehicle maintenance or repairs, which fall under fleet owners and local workshops. It does not directly manage driver hiring, firing, or long-term coaching, which sit within driver management and HR-led training programs. It does not redesign fundamental site layouts, parking flows, or gate procedures, which remain a facilities and security responsibility. It does not unilaterally change contracts, tariffs, or commercial terms, which are governed by procurement and finance. It does not override company policies on women’s safety, escort norms, or shift eligibility; it executes these policies and routes exceptions into the proper governance forums.

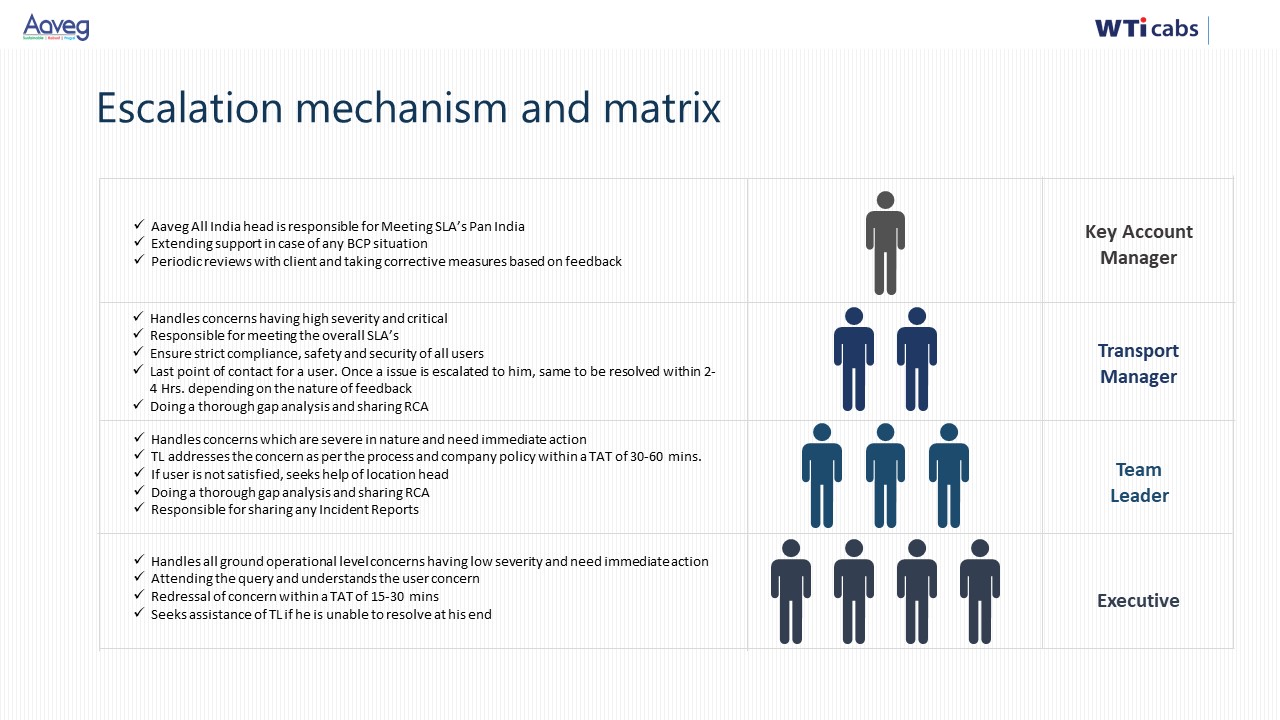

How should we set up L1/L2/L3 escalation for late pickups, deviations, SOS, or app issues so it doesn’t become a WhatsApp mess between HR, vendors, and security?

B1199 Escalation matrix that avoids chaos — In India’s corporate ground transportation programs (EMS/CRD), how should a buyer define a clear escalation matrix (L1/L2/L3) so that a late pickup, route deviation, SOS trigger, or app outage doesn’t devolve into WhatsApp chaos and blame-shifting across HR, vendors, and security?

In India’s corporate EMS/CRD programs, a clear escalation matrix works when each incident type has a pre-defined owner, time-bound response, and an L1–L2–L3 path that runs through a command-center style workflow rather than informal WhatsApp groups. A robust matrix separates “who watches”, “who fixes”, and “who explains to leadership”, and links every incident to a ticket, not a chat thread.

An effective design starts by routing all first-line escalations to a single L1 operations desk or command centre rather than to individual vendor managers or HR. The L1 desk owns live monitoring, ticket creation, and immediate triage for late pickups, route deviations, SOS triggers, and app/GPS outages. The L1 team uses tools like alert supervision, geo-fence and over-speeding alerts, and real-time dashboards to spot issues early instead of waiting for employee complaints.

L2 should be defined as the accountable resolver by domain, not by seniority. For service failures such as recurrent late pickups or routing errors, L2 is the Transport / Facility Lead plus the vendor’s city operations manager under a documented SOP. For safety issues or SOS triggers, L2 automatically pulls in Security/EHS with clear rules about trip suspension, escort deployment, and incident documentation. For technology outages, L2 is the internal IT application owner plus the vendor tech SPOC, working from a joint playbook that covers fallback to manual rosters and SMS/voice communication.

L3 escalation is reserved for pattern and risk, not for individual trips. L3 is typically a joint forum of HR, Security/EHS, Procurement/Finance, and senior vendor leadership that reviews repeated SLA breaches, serious safety events, or systemic app failures. L3 owns corrective action plans, commercial penalties, vendor rebalancing, and any board- or audit-facing narrative.

To prevent WhatsApp chaos, the matrix must also specify channel, clock, and closure at each level. L1 receives incidents only via the official app, IVR, or command-centre hotline and acknowledges within a fixed window such as 2–5 minutes for SOS and 10–15 minutes for OTP deviations. L2 gets auto-escalated through a ticketing or alert system when defined thresholds are breached, for example two consecutive missed OTP SLAs on a route or any SOS that remains “open” beyond a defined time. L3 is invoked based on weekly or monthly deviation reports from the command centre rather than ad-hoc midnight calls.

For buyers, a practical checklist to define and enforce this matrix is:

- Define incident categories and severity bands in the contract and SOPs.

- Map a named L1, L2, and L3 owner for each category across client and vendor teams.

- Mandate use of a centralized command centre or dashboard as the only system of record.

- Set acknowledgement and resolution SLAs per level and tie them to vendor penalties and internal KPIs.

- Review escalation logs and closure quality in monthly governance and quarterly business reviews.

What MTTD/MTTR targets make sense for breakdowns, driver no-shows, GPS issues, and roster changes—and should night-shift women-safety trips have tighter targets?

B1200 MTTD/MTTR targets for disruptions — In India’s enterprise-managed employee commute operations (EMS), what are reasonable targets for mean time to detect (MTTD) and mean time to recover (MTTR) for common disruptions like vehicle breakdowns, driver no-shows, GPS dropout, and sudden roster changes—and how do these targets change for night-shift women-safety routes?

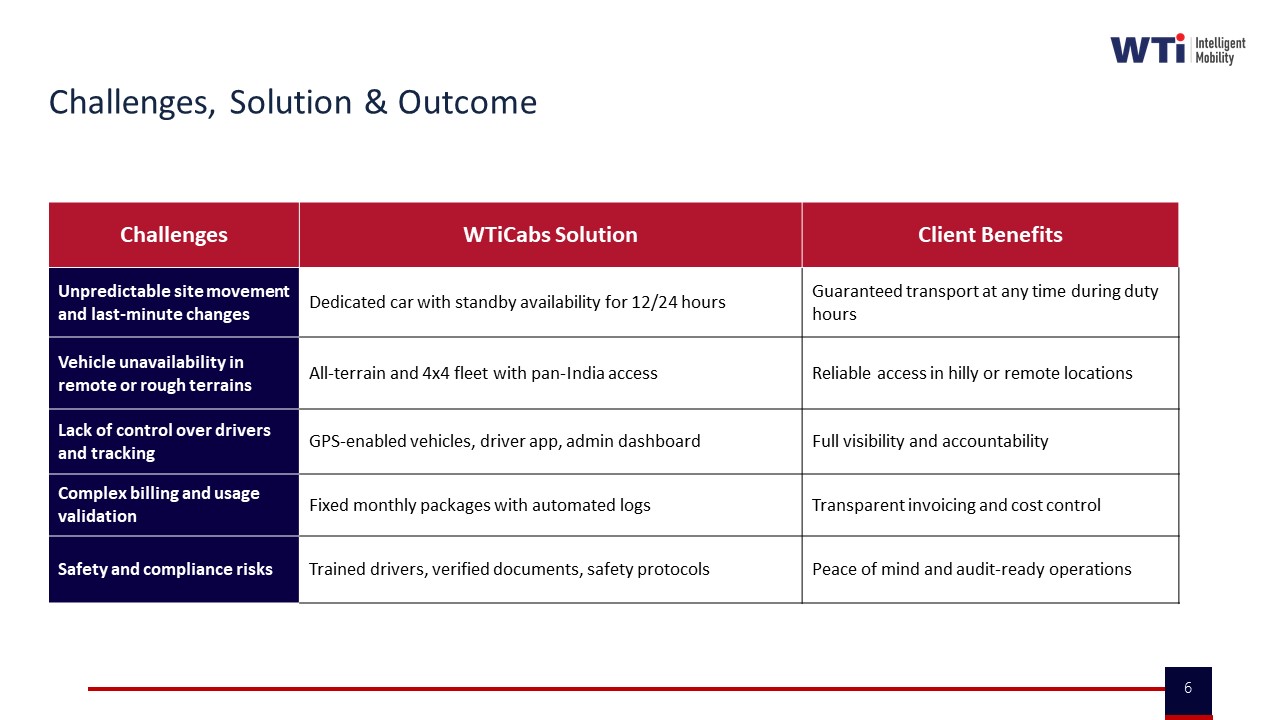

In enterprise-managed employee commute operations in India, most mature transport teams target detection of critical disruptions within a few minutes and recovery within one shift window at most. Tighter targets are expected for night-shift women-safety routes, where both detection and recovery windows are compressed and heavily governed by safety SOPs and audit expectations.

For routine EMS operations, mean time to detect is driven by the presence of a 24x7 command center, alert supervision systems, and real-time dashboards. Organizations using centralized monitoring, geofencing, and automated exception alerts can surface issues like vehicle breakdowns, driver no-shows, GPS failures, and major route deviations very early in the trip lifecycle. The collateral around transport command centers, alert supervision systems, and data-driven insights consistently positions real-time or near real-time visibility as “table stakes” rather than a differentiator.

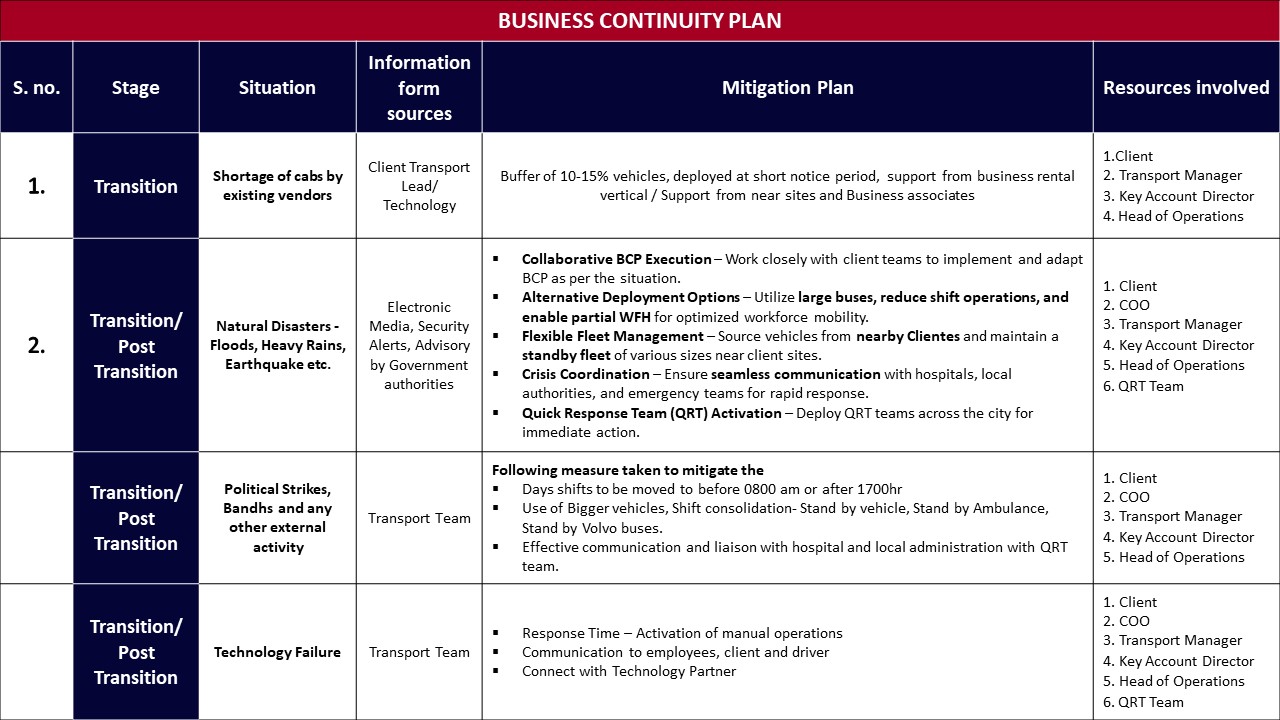

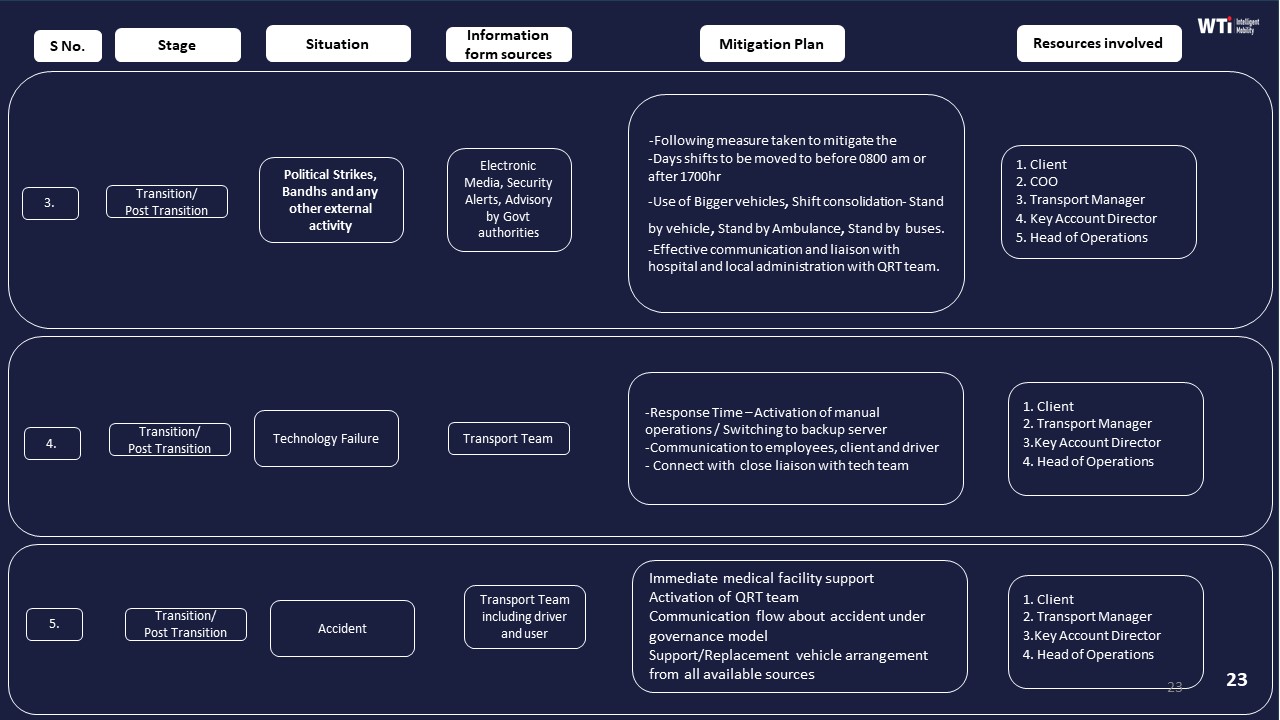

Mean time to recover in EMS is shaped by buffer capacity, standby vehicles, and pre-defined business continuity playbooks. Business continuity plans in the material explicitly describe buffers of additional vehicles, use of associated businesses, and shift-time adjustments to sustain service during cab shortages, natural disasters, political strikes, or technology failures. Centralized command centers, rapid EV deployment models, and project planners are all designed to reduce recovery time from a disruption to a managed, predictable window, rather than leaving ops in reactive firefighting.

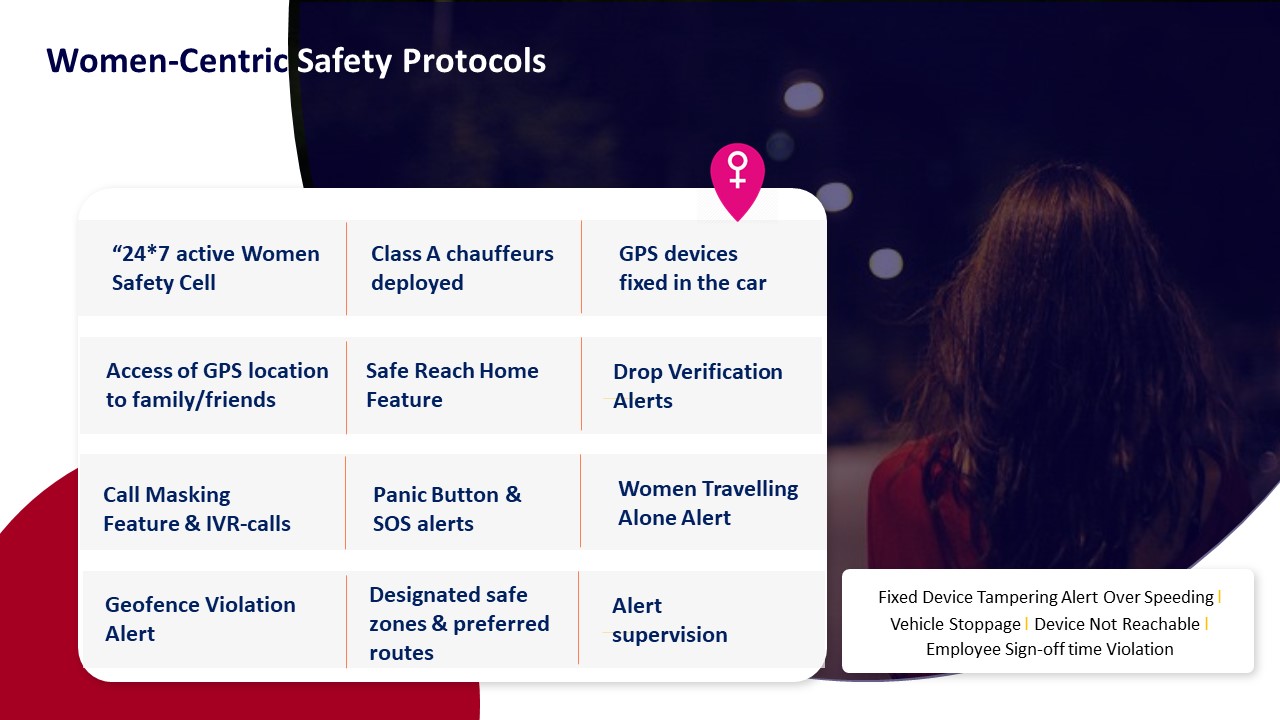

Night-shift women-safety routes sit under a stricter envelope. Safety collateral for women-centric protocols, escort policies, SOS infrastructure, and safety-and-compliance frameworks make clear that any deviation affecting a female employee at night is treated as a safety incident, not just an operational delay. In practice this means detection targets are aligned with immediate or near-immediate alerting from SOS buttons, geo-fencing violations, or route anomalies, and recovery expectations are governed by zero-incident postures and auditable proof of timely response.

To make these targets actionable at a control-room level, facility and transport heads can define internal thresholds along four dimensions:

- How quickly the command center must see a disruption on its dashboard or via automated alerts.

- How fast a fallback plan must be initiated using standby cabs, alternate drivers, or rerouted vehicles.

- How long it is acceptable for employees to remain at-risk or stranded before physical backup arrives, especially on women-only or night routes.

- What evidence (trip logs, GPS traces, SOS tickets) must be captured to demonstrate safe handling for HR, Security/EHS, and audit teams.

I do not have this information (please fix this gap).

How do we check that command-center alerts won’t be noisy—what thresholds and severity levels should we expect for late pickup risk, geo-fence breaches, deviations, and SOS?

B1201 Actionable alerting vs alert noise — In India’s corporate ground transportation command-and-control for EMS, how do buyers verify that alerting is actionable (not noise)—for example, what thresholds, deduplication rules, and severity levels should exist for late pickup risk, geo-fence breach, route deviation, and SOS events?

In Indian enterprise EMS command-and-control, buyers verify that alerting is “actionable, not noise” by enforcing explicit thresholds, severity tiers, and deduplication rules for each event type. Transport heads expect late-pickup, geofence, route-deviation, and SOS alerts to be tightly linked to shift windows, safety policies, and escalation matrices, with clear SOPs for who does what in the NOC when an alert fires.

For late pickup risk, most organizations set a predictive “risk” alert several minutes before contractual pickup time based on ETA models. A common pattern is a low‑severity alert when ETA exceeds a soft threshold, and a higher‑severity alert when it crosses the SLA breach limit or risks shift login. Command centers then link late‑risk alerts to rerouting or replacement-cab playbooks, and report OTP% and exception-closure times as governance KPIs.

For geofence breaches and route deviation, buyers treat perimeter exits near employee homes, campuses, and no‑go zones as higher‑severity than minor detours. Systems typically define distance or time thresholds before firing a full incident, and they suppress duplicate alerts when GPS jitter or small route corrections occur. Route adherence audits and random route checks are then used to validate that deviation alerts correlate with real non-compliance, not map noise.

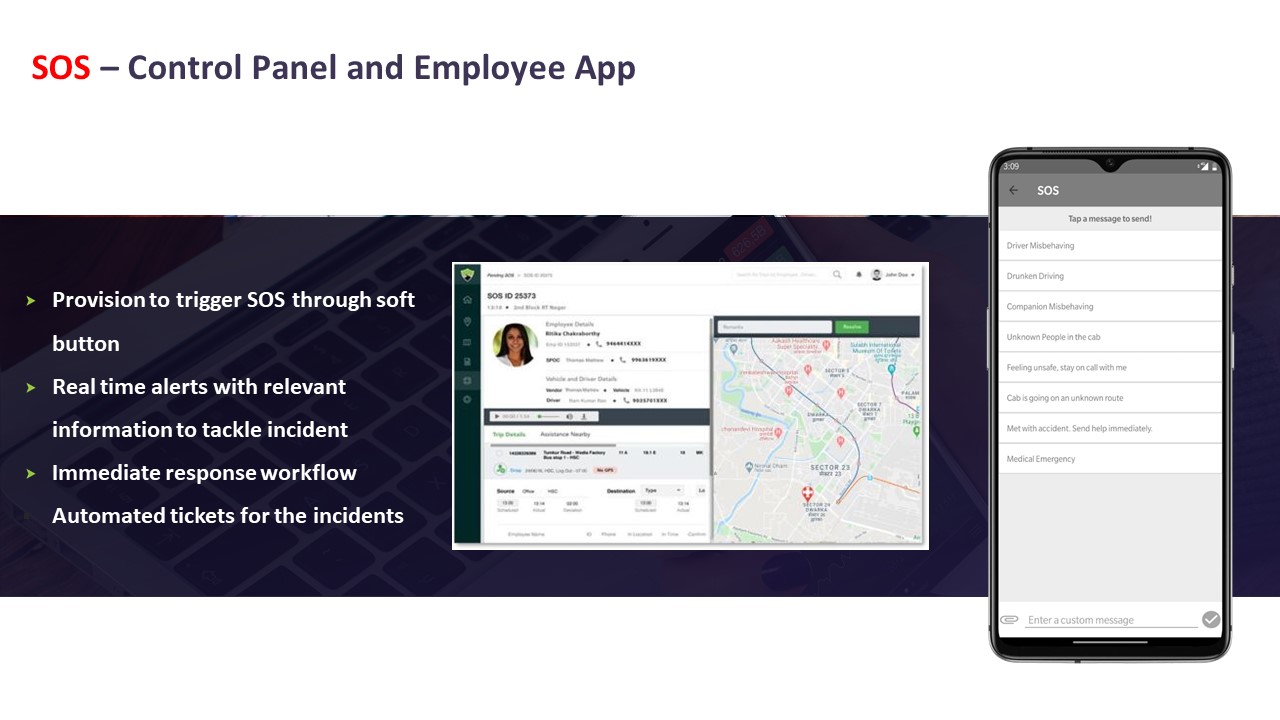

For SOS events, buyers expect a strict highest-severity tier, with no rate‑limiting that could hide a real incident. However, they still configure validation steps such as mandatory callback, location verification, and linkage to driver and trip manifests. SOS alerts must integrate with safety escalation matrices, women‑safety protocols, and business continuity plans, so command centers can prove incident timelines and responses later to HR, Security, and auditors.

If the mobility platform goes down, what backup SOP should the command center follow for dispatching trips, handling attendance escalations, and keeping audit proof when apps/GPS are down?

B1202 Backup SOPs for platform outages — In India’s enterprise mobility programs (EMS/CRD), what backup SOPs should a command center run during a full platform outage—how are trips dispatched, attendance-impact escalations handled, and audit evidence preserved when mobile apps or GPS feeds are down?

A resilient command center treats a full platform outage as a pre-defined SOP event, not an ad-hoc crisis. During outages, trips are run on pre-approved offline rosters and fallback dispatch channels, attendance-impact risks are escalated through a clear call-tree, and all manual actions are captured in temporary logs that are later reconciled into the main audit trail.

Reliable EMS/CRD operations in India require a documented “technology failure” playbook that assumes loss of rider/driver apps, GPS, or core routing. Command centers keep printable rosters and route books for all major shift windows, with tagged vehicles, primary and backup drivers, and standard pickup sequences. Dispatch switches to voice and messaging using driver call lists, SMS or WhatsApp groups, and pre-agreed reporting checkpoints, while simple spreadsheet or paper-based trip sheets capture start/end times, route deviations, and passenger manifests.

Attendance and shift-impact escalations are managed through a separate, non-dependent channel. Command center teams update HR/transport stakeholders on “reduced visibility mode,” send early alerts for high-risk routes or sites, and agree on temporary policies such as extended grace periods for logins, staggered shift starts, or pooled pickups from common hubs. For women’s night-shifts, escort and safety rules are enforced manually through phone verification, cab-rotation controls, and security desk coordination until automated geo-fencing and SOS return.

Audit evidence is preserved by maintaining structured outage logs. Command centers timestamp the start and end of the outage, record all manual dispatch decisions, vehicle and driver assignments, route changes, and incident calls in a structured log, and attach supporting artefacts such as SMS screenshots, call records, and signed duty slips. After recovery, these records are reconciled with system data to rebuild the trip ledger, document SLA deviations with root-cause notes, and create a consolidated “outage incident report” that satisfies HR, Security/EHS, Finance, and internal or external auditors.

How can HR tell if the command center is actually improving the commute experience—not just closing tickets—using things like repeat incidents, escalation volume, and grievance closure quality?

B1203 Measuring command center impact on EX — In India’s corporate Employee Mobility Services (EMS), how can an HR head measure whether the command center is reducing employee pain—not just closing tickets—using indicators like repeat incidents per route, escalation volume by timeband, and grievance closure quality?

In India’s corporate Employee Mobility Services, an HR head can measure whether the command center is truly reducing employee pain by tracking repeat incident patterns, escalation intensity, and the quality of grievance closure as distinct, auditable KPIs that sit next to OTP and cost metrics. These indicators must focus on trend reduction and resolution depth, not just ticket counts or closure speed.

HR leaders should first insist on route- and timeband-level incident analytics rather than generic “issues closed” reports. Command center data should show repeat incidents per route and vendor, categorized by incident type, so HR can see whether problems on a given corridor or shift window are actually declining over weeks. A common failure mode is that the command center closes tickets quickly but the same driver, vehicle, or route keeps appearing in the logs.

Escalation volume is best monitored by timeband, day of week, and shift type. This helps HR distinguish chronic structural issues such as night-shift routing, driver fatigue, or EV charging gaps from random noise. If the command center is effective, the ratio of escalations to total trips in high-risk bands should trend down, especially for women’s night shifts and peak entry/exit windows.

Grievance closure quality should be measured by whether each case has a documented root cause, preventive action, and validation that the issue did not recur within a defined cool-off period. HR should ask for sampling-based “closure audits” where a subset of employees confirm if they feel the problem was actually fixed. This approach links command center operations, safety compliance, and commute experience into one integrated view of employee pain.

For night-shift women-safety cases (SOS, escort not available, unsafe areas), what escalation path and response-time SLAs should we demand, and how do we keep audit proof without breaching DPDP privacy rules?

B1204 Women-safety escalation and audit proof — In India’s corporate ground transportation (EMS) night-shift operations, what escalation paths and response-time commitments should exist specifically for women-safety scenarios (SOS, escort non-availability, unsafe geo-zone), and how should those be evidenced for audits without violating privacy obligations under the DPDP Act?

In Indian EMS night-shift operations, women-safety escalation paths need a clearly tiered chain of responsibility, hard response-time commitments, and audit-ready but privacy-aware evidence. Escalations must be anchored in a 24x7 command center, integrated SOS, and geo-fencing, with incident data minimized and access-controlled under DPDP-compliant governance.

Escalation Path and Response-Time Commitments

Night-shift women-safety events should always enter a single, 24x7 command center or Transport Command Centre rather than ad-hoc calls to drivers or local supervisors. The Alert Supervision System and SOS control panel should generate immediate alerts for SOS triggers, escort non-availability, and entry into unsafe or non-approved geo-zones.

First-line response should sit with command-center executives who acknowledge an SOS or geo-fence breach in near real time and contact the employee and chauffeur. A defined escalation matrix should push unresolved or serious cases within minutes to shift leads, security or EHS leads, and then to senior operations or key account managers, following the structured escalation mechanism already used for service issues.

For women-safety scenarios, most organizations should define hard response-time bands. SOS alerts should be acknowledged within 60–90 seconds and have an action initiated (call, diversion, or dispatch of support) within 3–5 minutes. Escort non-availability or unsafe routing should be detected before trip start or at first routing deviation, so command-center and routing teams can correct the route or replace the vehicle before the employee is exposed.

Audit-Ready Evidence Without Breaching DPDP Obligations

Evidence for audits should rely on trip logs, GPS and geo-fence events, and SOS/ticket workflow data rather than full-content recordings of personal communications. Command-center dashboards and Alert Supervision Systems can provide immutable event timelines that show when an SOS was triggered, when it was acknowledged, what routing changes or vehicle actions were taken, and when the incident was closed.

Incident records should be pseudonymized or minimized after operational closure. Identifiers not required for compliance or legal retention should be stripped or masked, while retaining time stamps, location events, and action logs to support HSSE audits and ESG or safety reporting. Access to detailed logs should be restricted to defined roles such as security, HSSE, or internal audit, with role-based access controls and formal approval for case review.

Under DPDP-aligned practice, organizations should document lawful purpose, retention windows, and deletion routines for safety-related location data. They should also maintain chain-of-custody logs for who accessed trip and incident records, so auditors can verify integrity and due process without exposing unnecessary personal details.

When a vendor says “24x7 coverage,” what does that mean for staffing—how many incidents can a shift handle, what skills do agents need, and what happens during peak shift changes or city-wide disruptions?

B1205 What 24x7 coverage staffing means — In India’s corporate mobility command center setup for EMS/CRD, what does “24x7 coverage” really mean in staffing terms—how many concurrent incidents can one shift handle, what skills are required, and what happens during peak shift-changes or city-wide disruptions?

In an Indian corporate mobility command center for EMS/CRD, “24x7 coverage” means a continuously staffed control-room that can watch all trips in real time, triage safety and reliability alerts within minutes, and escalate according to pre-defined SOPs without gaps between shifts. It usually relies on staggered shifts, a small but multi-skilled night-core team, and surge playbooks for peak shift-changes and city-wide disruptions.

A properly designed command center uses layered staffing rather than only headcount. A base layer of controllers handles routine trip monitoring, GPS exceptions, OTP delays, geo-fence violations, and SOS alerts. A governance and data layer supports SLA tracking, reporting, and audits. A response layer owns incident escalation, BCP activation, and coordination with drivers, vendors, HR, Security/EHS, and local authorities.

Skills must cover live-ops coordination, familiarity with routing and telematics dashboards, understanding of HSSE rules, women-safety and night-shift protocols, and clear communication under pressure. Teams also need comfort with data-driven tools such as alert supervision systems, compliance dashboards, and CO₂ or EV-uptime monitoring where fleets are electrified.

During peak shift-changes, the command center workload increases sharply due to high trip volumes and tighter OTP expectations. A scalable model uses temporary staffing uplift, pre-validated rosters, dynamic route optimization, and pre-briefed drivers and supervisors to reduce “last-minute” decisions. During city-wide disruptions such as monsoon flooding, strikes, or technology failures, formally documented business continuity plans and contingency playbooks become active. These plans define alternative routing, buffer vehicles, backup communication channels, and revised shift timings so the same control-room can handle multiple concurrent incidents without losing overall control.

In practice, a 24x7 command center succeeds when it is treated as a governed, SOP-led operation with clear escalation matrices, not just a screen-watching function. It stabilizes daily EMS and CRD performance, reduces escalations to HR and leadership, and supports safety, compliance, and ESG reporting from a single operational nerve center.

How do we write escalation SLAs into the contract so exception handling is measurable—ack time, first action time, and resolution time by severity—instead of informal promises?

B1206 Contracting measurable escalation SLAs — In India’s corporate Employee Mobility Services (EMS), how should procurement and operations define escalation SLAs in the contract so that “exception handling” is measurable—e.g., acknowledgment time, time-to-first-action, and time-to-resolution by severity—and not left to informal promises?

In India’s corporate Employee Mobility Services, escalation SLAs become measurable when procurement and operations define explicit time-bound targets for each severity level and hard‑link them to reporting, audit trails, and commercial consequences in the contract. Contracts that only state “prompt” or “best effort” response create unmeasurable exception handling and persistent disputes.

Procurement and operations teams should first align on incident severity definitions for EMS, such as missed pickup affecting shift start, women-safety alerts, app/GPS failures, vehicle breakdowns, or vendor no‑shows. Each severity band should then carry three separately measured timers in the EMS operating model. One timer should capture acknowledgement time, another should capture time‑to‑first‑action, and a third should capture time‑to‑resolution, with clearly defined start and stop conditions based on trip logs, NOC records, and ticketing data.

A common failure mode is defining SLAs without tying them to the EMS command center workflow or to the mobility platform’s alert and ticket lifecycle. Exception handling remains informal when there is no integrated command center, no escalation matrix, and no centralized dashboard for OTA/OTD deviations, SOS triggers, or compliance alerts. In practice, measurable escalation SLAs require centralized command‑center operations, tech‑based measurable and auditable performance, and incident workflows that automatically capture timestamps for alerts, acknowledgements, actions, and closures as part of a continuous assurance loop.

Once timers and data sources are defined, procurement can embed outcome‑linked clauses that connect SLA adherence to penalties, earn‑backs, or performance guarantees, and can require periodic SLA reports as part of an indicative management report pack. Operations can then use these SLA metrics alongside on‑time performance, incident rate, and complaint closure SLAs within vendor governance, ensuring exception handling is governed like any other EMS KPI rather than left to subjective interpretations.

How do we link command-center performance to penalties/credits for high MTTR or missed response SLAs without encouraging the vendor to hide incidents?

B1207 Incentives without hiding incidents — In India’s corporate ground transportation operations (EMS/CRD), what is the right way to tie command-center performance to commercial outcomes—penalties or credits for high MTTR, chronic escalations, or missed response SLAs—without creating perverse incentives to under-report incidents?

Tying command-center performance to commercial outcomes works best when payouts are linked to response quality and evidence completeness, not just low incident counts or few escalations. Contracts that reward fast, well-documented closure and transparent reporting, while penalizing hidden or repeated failures, reduce the incentive to suppress incidents and increase the incentive to run a clean, honest NOC.

Most organizations in EMS/CRD achieve this by defining SLAs around observable behaviors in the command center. These behaviors include detection-to-acknowledgement time, acknowledgement-to-mitigation time, accuracy of communication to employees and HR, and the quality of the root-cause analysis and evidence trail. The SLAs are applied to all “valid incidents” that are actually logged, and not to a target of “having fewer incidents,” which would otherwise push the vendor to avoid logging issues.

A common failure mode is indexing penalties only to “number of incidents” and “escalation count.” This structure makes a vendor safer if they keep the dashboard quiet, delay logging, or downgrade severity. It also leaves the Facility / Transport Head with surprise failures during peak or night shifts and fragmented audit trails when HR, Security, or ESG teams later ask, “What really happened and when did you know?”.

To avoid these perverse incentives, organizations can define a small set of command-center KPIs that are explicitly pay-linked but incident-volume neutral, such as:

- SLA for incident acknowledgement time from first alert or complaint.

- SLA for first corrective action or mitigation step and communication to employees or HR.

- Closure SLA with documented root cause, evidence attachments, and prevention action.

- Repeat-incident rate on the same route, driver, vehicle, or site within a defined window.

- Audit trail integrity of GPS logs, trip data, and communication records for sampled incidents.

Penalties are then tied to chronic breach of these response and closure SLAs, or to high repeat-incident rates, rather than to the mere existence of incidents. Credits or earn-backs can be offered for quarters where the vendor meets high thresholds on on-time performance, command-center responsiveness, and audit readiness, while still maintaining a healthy level of incident logging and transparency.

A second safeguard is to explicitly reward transparent reporting and self-disclosure. Contracts can state that self-reported incidents detected by the vendor’s own monitoring and logged within a short window are treated more favorably than issues discovered first by employees or client leadership. This makes it commercially rational for the command center to surface problems early, which in practice reduces night-shift firefighting for the Facility / Transport Head and improves trust with HR and Security teams.

Organizations also benefit from establishing a joint governance rhythm where incident and escalation data is reviewed in structured forums. These forums can align HR, Transport, Security/EHS, and Finance around patterns such as chronic under-reporting from certain sites, gaps in women-safety protocols, or specific vendor clusters with higher repeat events. This governance can then adjust the commercial levers, for example, tightening penalties for repeat-incident rates or relaxing them when the data shows genuine improvement driven by better routing, driver training, or EV uptime management.

In India’s regulatory and ESG context, auditability is an important additional lever. Making the completeness and traceability of command-center evidence a scored and pay-linked KPI encourages vendors to maintain clean GPS trip logs, incident tickets, and communication records. This supports HR and ESG teams in demonstrating duty of care, commute emissions reporting, and women-safety compliance without depending on manually reconstructed stories after a failure.

Overall, command-center commercials in EMS/CRD are most effective when they:

- Index payouts to speed and quality of response, not low incident counts.

- Penalize repeat or systemic issues, not individual, well-managed events.

- Reward self-detection and transparent logging instead of silence.

- Include explicit KPIs for evidence quality and audit readiness.

- Are reviewed periodically in joint governance forums so thresholds and weights can evolve with operational maturity.

How do we decide what the system should auto-fix (reassign cab, reroute, backup vendor) vs what needs a human escalation so we’re not relying on 3 AM heroics?

B1208 Human vs automated remediation rules — In India’s enterprise mobility operations (EMS), how do IT and operations decide what gets escalated to humans versus auto-remediated—like reassigning a vehicle, re-routing, or triggering a backup vendor—so that the system is ‘self-healing’ rather than dependent on heroics at 3 AM?

In India’s enterprise mobility operations, self-healing EMS setups use explicit, jointly defined playbooks where high-risk or ambiguous events route to humans and well-understood, low-risk patterns trigger automated fixes. Escalation versus auto-remediation is not left to the app alone. It is governed by severity bands, data confidence, and the time left to protect shift start or safety SLAs.

IT and operations first classify incident types into safety-critical, service-critical, and cost/experience deviations. Safety-critical events such as SOS triggers, suspected assault, serious accidents, or repeated geofence violations are always escalated to humans with only assistive automation like alert fan-out or location pinning. This aligns with the emergence of centralized command centers, women-safety protocols, and HSSE accountability.

Service-critical issues like vehicle breakdowns, no-shows, or ETA breaches beyond a pre-set threshold typically follow auto-remediation rules if the routing engine and vendor pool have enough options. The system can auto-trigger reassignment, re-routing, or a standby cab when confidence in the data is high, residual travel time can still meet OTP thresholds, and contractual rules with vendors allow substitution. When capacity is tight or constraints such as guard-escort, night-shift policies, or EV range limits apply, the event is escalated to the control room.

Cost or experience deviations such as minor delays within a tolerance band or suboptimal seat-fill are usually handled by background optimization. These are addressed through route recalibration and next-cycle planning rather than live human intervention.

To keep this “self-healing” and not ad hoc, most mature EMS teams define for each alert type:

- The KPI or threshold that triggers the alert.

- Whether the first action is auto-remediation, human review, or a hybrid (auto-suggest plus human confirm).

- Data prerequisites such as GPS health, HRMS roster sync, and vendor availability that must be valid before automation acts.

- Maximum time the system can attempt auto-fix before mandatory escalation to a human in the NOC.

Over time, IT and operations refine these thresholds using NOC logs, SLA breaches, and route adherence audits. Automation is expanded from predictable patterns like recurring traffic bottlenecks or dead mileage reduction into more complex areas such as dynamic clustering, but safety and reputationally sensitive scenarios remain human-led with stronger observability and audit trails.

Before go-live, how can we test a vendor’s escalation readiness—what mock incidents or war-room drills are realistic and worth doing?

B1209 Testing escalation readiness pre go-live — In India’s corporate Employee Mobility Services (EMS), how should a transport head test a vendor’s escalation readiness during diligence—what tabletop drills, mock incidents, or ‘war-room’ simulations are realistic and worth doing before go-live?

In India’s Employee Mobility Services, a transport head should treat escalation-readiness as a testable operational capability. The most useful drills simulate the exact night-shift failures, tech glitches, and safety events that usually wake up the team at 2 a.m, and they measure response time, ownership, and communication quality across command center, drivers, and local supervisors before go-live.

A realistic starting point is to design 3–5 controlled “war-room” scenarios mapped to common Indian EMS risks. Typical patterns include monsoon-related gridlock on critical corridors, simultaneous vehicle no-shows at a major pick-up hub, app or GPS failure during a night shift, and a women-safety–sensitive delay that triggers escort or routing rules. Each scenario should have a clear trigger, an expected SLA for detection and response, and defined roles for the vendor’s centralized command center, local site team, drivers, and your own transport desk.

The most valuable drills resemble full trip lifecycles, not isolated events. A transport head can run mock peak-shift rosters, inject incidents mid-route, and then watch how the vendor’s NOC tools, alert supervision systems, escalation matrix, and business continuity playbooks behave in real time. A common failure mode is vendors talking about command centers and dashboards but failing to demonstrate 24/7 ownership, cross-city coordination, and clean handovers between centralized and local command centres when multiple routes start degrading at once.

Before production launch, escalation-readiness drills are most effective when they explicitly test four dimensions. They test early detection and alerting by using geo-fence violations, over-speeding, GPS/device tampering, and route deviation alerts to see if issues are caught before employees complain. They test multi-level escalation behaviour by walking through the documented escalation mechanism and matrix, and then verifying if each escalation level actually responds within its promised timeband. They test business continuity by simulating cab shortages, political strikes, system downtime, or severe weather, and then observing how the vendor’s Business Continuity Plan switches to buffers, alternate vendors, route changes, or manual workarounds without losing shift coverage. They also test evidence and reporting quality by checking whether, after each mock incident, the vendor can produce auditable logs, RCA, timeline of calls, and corrective actions that would satisfy HR, Security/EHS, and Internal Audit.

To keep drills grounded and repeatable, transport heads can formalize them as a pre–go-live SOP. During diligence and pilot, they can run at least one monsoon-routing simulation using the vendor’s routing engine and command center to see if promised on-time arrival rates under adverse traffic are realistic. They can run one women-safety scenario that combines escort/route rules, SOS or panic workflows, and night-shift compliance, and then ask Security to review the evidence trail. They can run one technology failure scenario, such as GPS or app unavailability, where operations must fall back to manual call-tree, paper duty slips, and telephonic confirmations while still maintaining route adherence and OTP. They can run one large-site disruption, like multiple no-shows or last-minute roster change at a major campus, to see how quickly the vendor can rebalance fleet, use standby cars, and update riders through apps, SMS, or call center.

Escalation-readiness simulations are most useful when the transport head defines success metrics in advance. Typical metrics include detection-to-escalation time, escalation-level response time, time to stabilize OTP on impacted routes, quality of communication to employees and HR during disruption, and quality of post-incident reporting and RCA. A common failure mode is treating BCP slides, command-center diagrams, or safety frameworks as sufficient proof. In practice, vendors who handle EMS well in India are the ones who can walk into a war-room simulation with clear SOPs, named escalation owners, and the ability to run through their Business Continuity Plan, alert supervision system, centralized compliance management, and command center operations under observation without adding noise or confusion.

What incident data should the command center record—timestamps, call logs, GPS snapshots, decision notes—so RCA is credible and audit-ready?

B1210 Incident evidence for audit-ready RCA — In India’s corporate ground transport (EMS/CRD), what data should a command center capture per incident—timestamps, call recordings, GPS snapshots, decision logs—so that root-cause analysis is credible and audit-ready rather than anecdotal?

A command center needs a structured, time‑stamped “incident dossier” that ties together trip data, people, decisions, and evidence. Root‑cause analysis becomes credible and audit‑ready when every key event in the trip lifecycle is captured as a verifiable record rather than a memory or WhatsApp trail.

At the trip level, command centers should store a unique trip ID, employee and driver identifiers, rostered shift window, entitlement type, and SLA baselines. Each trip needs synchronized timestamps for booking, assignment, vehicle reporting at gate, employee boarding, departure, intermediate halts, and final drop, so investigators can reconstruct the full duty cycle and compare it with schedule and SLA commitments such as OTP%.

For spatial and telematics context, command centers should log GPS tracks and periodic geo‑tagged snapshots, route adherence versus the approved route, geo‑fencing violations, speeding or harsh‑driving events, and any device‑tampering or signal‑loss alerts. When EVs are involved, battery state, charger interactions, and range at key points in the journey should be included to separate infrastructure issues from planning or driver behavior.

For safety, security, and compliance, the incident file should contain SOS button activations, panic events, escort or women‑safety rule checks, driver credential status at trip start, vehicle compliance status, and any camera or IVMS event markers. Each incident should also link all two‑way communications: structured logs of calls to drivers and employees, call recordings where permitted, and key system notifications or app messages that influenced decisions.

For governance, a digital decision log is essential. Every manual override, route deviation approval, vehicle substitution, and escalation must be recorded with who acted, when, on what information, and under which SOP or BCP playbook. Closing the loop requires attaching investigation notes, RCA classification, corrective and preventive actions, and closure timestamps, so Finance, HR, Security, and ESG teams can trust the findings and reuse them in SLA reviews, safety audits, and EV or routing optimization.

With DPDP in mind, how do we check that the command center’s access to rider location/trip history is role-based, time-limited, and fully logged, especially when HR and security are involved?

B1211 DPDP-safe access for escalations — In India’s corporate mobility services under DPDP Act expectations, how should legal and IT evaluate whether command-center access to rider location and trip history is appropriately role-based, time-bound, and logged—especially when escalations involve HR and security teams?

Legal and IT should treat command-center access to rider location and trip history as a governed, audited “safety instrument,” with explicit roles, time-bounded access windows, and immutable logs that can be shown to auditors under the DPDP Act. Access that is not policy-linked, SLA-linked, and reconstructable as an evidence trail will be seen as surveillance risk rather than safety control.

They should first map personas and roles to minimum data needs. Command center operators, transport heads, and security teams should have real-time and historical location visibility strictly aligned to trip lifecycle management, incident response SOPs, and compliance dashboards. HR should not have open-ended map access and should instead see summarized, purpose-specific views for attendance, grievance handling, or investigation support.

Evaluation should then focus on three controls. Role-based controls must be implemented via identity and access management, where every user’s access level matches a defined function such as NOC operations, EHS incident handling, or HR grievance resolution. Time-bounded controls must restrict detailed location visibility to the active trip window plus a defined retention period required for safety, audit, and billing. Logging controls must capture who accessed which rider or trip records, at what time, for which declared purpose, and through which interface in the command center.

Legal teams should check that these controls align with stated purposes like safety, SLA governance, and auditability, and that consent language, privacy notices, and policy documents explicitly reflect those purposes. IT should validate that observability covers access logs, admin overrides, and data export events so that any HR or security escalation can be reconstructed without ambiguity and so that misuse can be detected and sanctioned.

What usually causes repeated escalations—driver no-shows, wrong pickup points, roster changes, GPS drift—and how do we confirm the command center is fixing root causes, not just closing tickets?

B1212 Proving root-cause fixes vs ticket closure — In India’s enterprise employee transport (EMS), what are the most common failure modes that create repeated escalations—driver no-show patterns, wrong pickup points, roster volatility, GPS drift—and how can a command center prove it is solving root causes rather than just closing incidents?

The most common failure modes in India’s enterprise employee transport are repeatable patterns in roster volatility, vehicle/driver availability, location accuracy, and weak closure discipline. A command center proves it is solving root causes when repeat incidents drop measurably, exception closure times shrink, and evidence is visible in audit-ready dashboards instead of only in call logs.

Persistent driver no-shows usually trace back to fatigue, poor incentive design, and last-minute rostering. Wrong pickup points and “vehicle not found” issues frequently come from inaccurate geo-tagged addresses, unvalidated employee locations, and GPS drift. High roster volatility stems from hybrid work, late approvals, and unmanaged cut-off times for bookings and cancellations. GPS drift and app failures are often symptoms of weak device policies, network gaps, and no fall-back SOP when tech misbehaves at 2 a.m.

A mature command center does not stop at answering the phone. It runs a continuous assurance loop with defined KPIs such as OTP%, Trip Adherence Rate, Vehicle Utilization Index, and exception detection-to-closure time. It links alerts like geofence violations, over-speeding, and device tampering from systems such as the Alert Supervision System to clear SOPs for rerouting, vehicle replacement, and safety escalation. It also integrates routing engines, driver apps, and HRMS rosters so that route changes, shift changes, and vendor allocations are controlled centrally rather than via ad-hoc calls.

To demonstrate root-cause control rather than ticket clearing, a command center should track and publish a small, stable set of signals over time. Examples include a fall in repeat incidents on the same route, a reduction in no-shows tied to specific drivers or vendors after targeted coaching, and decreased dead mileage after dynamic route recalibration. Evidence from dashboards like “Dashboard – Single Window System,” “Advanced Operational Visibility,” and “Transport Command Centre” shows that issues are predicted and prevented at the routing and planning stage. This creates operational calm for the Facility / Transport Head because fewer incidents ever reach escalation, and when they do, the command center can reconstruct what happened with tamper-evident trip data and compliance logs.

For airport trips, how should the command center manage flight delays and last-minute changes so we don’t miss pickups, but Finance also doesn’t get hit with messy waiting charges and disputes?

B1213 Airport delay escalations and billing control — In India’s corporate car rental and airport transfer operations (CRD), how should a command center handle flight delays and last-minute changes—what escalation logic prevents missed pickups while keeping Finance comfortable about waiting-time charges and billing disputes?

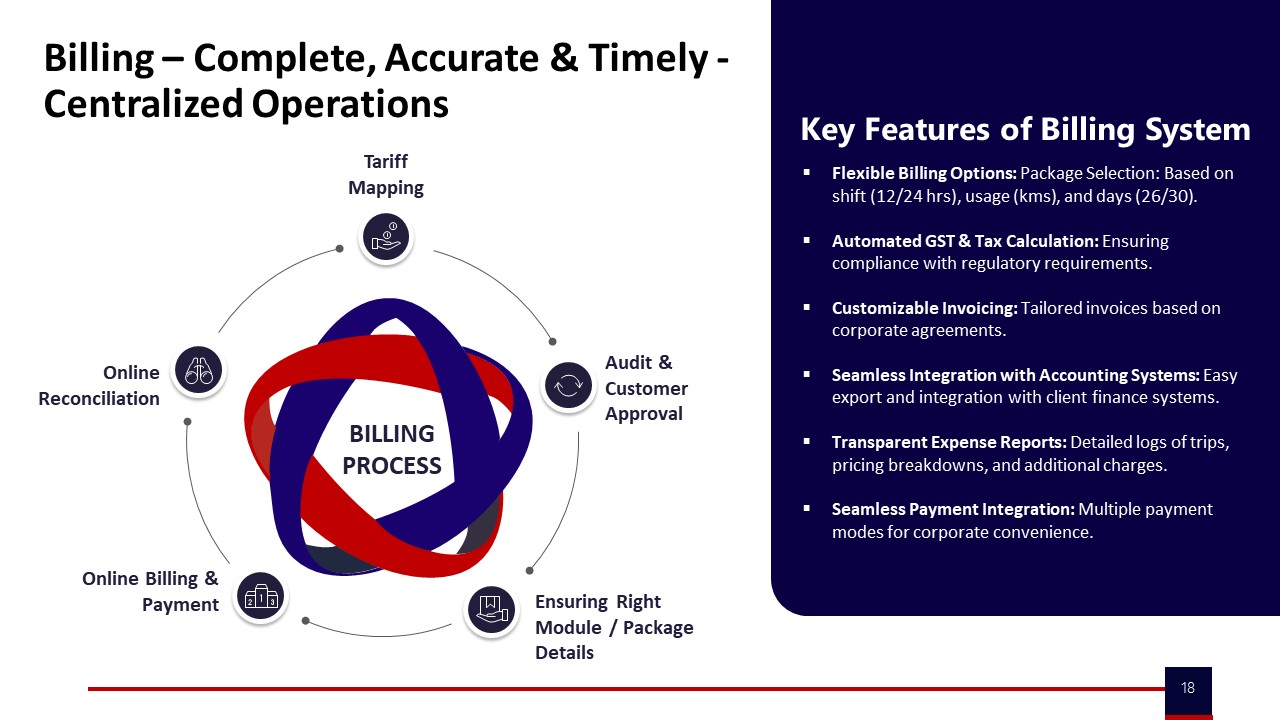

In corporate car rental and airport transfer operations, a command center should treat flight delays as a governed exception workflow with clear thresholds, automated triggers, and pre-agreed commercial rules. The core principle is that every minute of waiting or re-dispatch must be traceable to airline data and documented decisions, so Ops prevent missed pickups while Finance can defend waiting-time charges and avoid disputes.

A robust model starts with integration of flight-linked tracking into the booking and dispatch layer. The WTi platform already supports flight-linked airport tracking, 24/7 command-center monitoring, and automated alerts. The command center should subscribe to real-time airline status and set clear timebands for “normal”, “moderate delay”, and “severe delay” actions for each booking. Every status change should automatically update the trip in the command dashboard and the driver app, and log a time-stamped decision trail.

Escalation logic works best in short, predictable steps. For example, at a defined delay threshold the command center informs the driver and extends reporting time. If delay crosses a higher threshold, the command center can stand down the current vehicle and re-slot another closer to revised ETA, especially during peak hours or EV charging windows. This minimizes dead mileage and unnecessary waiting while preserving on-time arrival. The Alert Supervision System and Transport Command Centre collateral show how real-time alerts, geofencing, and centralized supervision reduce surprise failures.

Finance comfort depends on commercial rules that are visible, rule-based, and linked to auditable data. Waiting-time slabs and free buffers should be defined in the contract and encoded in the billing engine so that the system only charges waiting beyond the agreed free window and only when the GPS log and airline data align. WTi’s centralized billing, SLA-based models, and tech-based measurable performance framework demonstrate how OTA/OTP, delays, and billing can be tied together with audit trails. When invoices carry attached trip logs, airline delay proofs, and waiting-time calculations, disputes drop because every charge is explainable and repeatable.

How do we prevent shadow escalations—senior leaders calling the vendor directly—and keep the escalation process consistent and defensible?

B1214 Preventing shadow escalations by leaders — In India’s enterprise mobility command center operations (EMS), what governance prevents ‘shadow escalations’ where senior leaders bypass the matrix—like calling the vendor owner directly—and how do buyers set expectations so the escalation process stays consistent and defensible?

In Indian enterprise EMS command-center operations, organizations prevent shadow escalations by hard‑wiring a visible governance spine around a central NOC, a published escalation matrix, and audit‑ready incident workflows. Buyers keep the process defensible by making escalation rules part of policy, contracts, and SLAs, and by giving senior leaders clean, real‑time visibility so they do not feel the need to bypass the matrix.

A central Transport Command Centre or Command Center is treated as the single source of truth for all incidents and SLAs. Command-center micro‑functions and MSP governance structures establish clear roles, from front‑line executives up to key account managers and leadership, with defined response times and documentation at each level. An explicit escalation mechanism and matrix sets who is called when OTP, safety, or technology issues breach thresholds, and it links those steps to measurable and auditable performance outcomes.

Shadow escalations are reduced when leaders trust the system’s observability. Centralized dashboards, data‑driven insights, and alert supervision systems surface exceptions early and show closure status, which lowers the impulse to “call the owner.” Buyers reinforce this with contracts that route all official communication and penalties through the command center, not informal channels. Business continuity plans and safety & compliance frameworks further require that every incident, including VIP calls, is logged, triaged, and closed through the same ticketing and RCA workflow.

To keep the escalation process consistent, transport heads and CHROs typically:

- Publish the escalation matrix and SLAs to internal stakeholders and vendors.

- Anchor it in vendor governance models and QBRs so deviations are visible.

- Tie vendor rewards, penalties, and renewals to adherence to the formal command‑center process rather than ad‑hoc interventions.

As IT, how do we check if command-center tooling has real observability (logs, alert history, post-incident reviews) instead of manual screenshots that won’t hold up in an audit?

B1215 Observability vs manual proof — In India’s corporate Employee Mobility Services (EMS), how can a CIO evaluate whether the command center tooling supports real observability—centralized logs, traces, alert history, and post-incident reviews—versus relying on manual updates and screenshots that won’t stand up during audits?

A CIO can test whether command center tooling delivers real observability by checking if events, metrics, and incident workflows are captured automatically in a queryable, time-stamped system of record instead of being reconstructed from manual logs, screenshots, and emails. Genuine observability in Employee Mobility Services requires centralized, immutable trip and incident data with audit-ready traceability from raw telemetry to SLA and safety outcomes.

A strong EMS command center platform exposes a single-window operations dashboard with real-time tracking, alerts, and historical reports. The platform should integrate GPS/telematics, driver and rider apps, SOS controls, and routing engines into one data pipeline, rather than relying on operators to manually update spreadsheets or chat groups. In practice, tools like WTi’s Transport Command Centre and EV Command Centre dashboards are positioned to give 24/7 visibility, SLA monitoring, and CO₂ tracking with structured logs and analytics, not just live maps.

A CIO should probe how alert supervision is implemented. A mature system uses automated rules for geofence violations, overspeeding, device tampering, and SOS triggers, with each alert stored as a time-stamped event linked to vehicle, trip, and user IDs. The Alert Supervision System collateral, for example, highlights specific alert types, implying that these should be generated and closed by workflow rather than free-text notes.

Post-incident reviews are a critical test. A robust setup allows reconstruction of a trip or incident timeline directly from the system: route playback from GPS logs, driver credentials from centralized compliance management, vehicle health and induction status from fleet compliance modules, and communication history from the command center’s ticketing or case log. Where vendors rely on screenshots from WhatsApp groups or ad-hoc Excel trackers, audit trail integrity and chain-of-custody are weak.

During evaluation, CIOs can use a short checklist to distinguish real observability from manual patchwork:

- Ask to see raw alert and trip event tables filtered by time, vehicle, and site, not just dashboards.

- Request a live reconstruction of a past incident from the platform, including who acknowledged it, when it was escalated, and when it was closed.

- Verify that safety features like SOS, women-centric protocols, and route deviations automatically open tickets in an incident or helpdesk module rather than generating only push notifications.

- Confirm that compliance dashboards for drivers and fleet (license, permits, checks, training) are fed by structured processes like DASP, driver compliance verification, and fleet induction—not manual uploads alone.

- Check that real-time and historical emissions, uptime, OTP, and seat-fill insights visible on sustainability and operational dashboards are backed by consistent, exportable data rather than static images.

If the vendor cannot demonstrate end-to-end traceability across the command center, alert supervision, compliance management, and safety tooling, then the EMS observability posture is still manual and will not hold up under serious audit or incident investigation.

During a live incident, how should the handoff work between the central command center and site security/facilities—who contacts whom, what gets shared, and how do we avoid delays from unclear authority?

B1216 Command center to site handoff — In India’s corporate employee transport (EMS) operations, what is the practical handoff between the central command center and site security/facilities during a live incident—who calls whom, what information is shared, and how do buyers avoid delays caused by unclear authority?

In Indian EMS operations, the command center is expected to act as the single incident nerve‑centre, while site security/facilities act as local first responders who execute on-ground SOPs. Buyers avoid delays by pre‑defining who triggers what, what data must be shared from telematics and apps, and which role has final authority in each incident type.

During a live incident, the central command center usually receives the first signal. The signal can come from SOS buttons in employee or driver apps, geo‑fence or over‑speed alerts from the alert supervision system, GPS tampering alarms, or calls to a 24/7 helpdesk. The command team validates the alert using the EV or cab command dashboard, trip manifest, and live GPS trail, and then opens a ticket with time‑stamped details.

Once validated, the command center must call site security or the client’s transport desk as the first escalation, not the other way around. The command team shares explicit fields such as vehicle number and GPS location, driver identity and compliance status, employee details and contact, trip ID and route, event timestamp and alert type, and immediate risk assessment. This information is then mirrored into email or incident logs to create an auditable trail for HR, Security/EHS, and Facilities.

To avoid delays from unclear authority, buyers define a simple matrix before go‑live. They define which incidents are controlled centrally, such as tech failures, routing deviations, and multi‑city disruptions, and which require site‑led authority, such as physical threat, medical emergencies, and gate‑entry conflicts. They specify who has final say on decisions such as trip continuation, replacement vehicle dispatch, escort deployment, or police escalation.

The most effective governance models link the command center and site security through a documented escalation mechanism and matrix. These models define named roles, time‑bound response SLAs, and call order for each severity band. They also connect to a business continuity plan that already covers cab shortage, strikes, disasters, and technology failures, and to HSSE tools that define who logs, audits, and closes each incident.

A robust setup also ensures that the command center has access to centralized compliance management data. That data includes driver background checks, vehicle fitness and induction status, and safety equipment checklists. Site security is then not asked to “re‑verify” what is already digital, which reduces duplication and confusion at the gate or during a night incident.

How do we spot a vendor who claims 24x7 but doesn’t really deliver—what proof should we ask for like shift rosters, escalation logs, recent incident stats, and references for similar shifts?

B1217 Spotting fake 24x7 coverage — In India’s enterprise mobility services (EMS/CRD), how can procurement detect ‘24x7 in name only’ during vendor evaluation—what evidence should buyers ask for like shift rosters, escalation transcripts, last-30-days incident stats, and real customer references from similar timebands?

24x7 capability in enterprise mobility is proven through hard operational evidence across night shifts, not just a slide or a promise. Procurement teams should demand artefacts that show who was on duty, what actually went wrong, how fast it was handled, and what similar clients say about the vendor’s night and weekend performance.

Procurement teams can test “24x7 in name only” by pushing vendors for shift-wise operational proof rather than generic PPT claims. A reliable EMS/CRD operator will share documented command-center operations, business continuity playbooks, safety SOPs for women’s night shifts, and measurable on-time performance across timebands.

The most useful evidence bundles typically include: - Shift rosters and command-center coverage. Buyers should request anonymized last-3-month rosters for the centralized command center and location-specific command desks, including night, weekend, and holiday coverage. These should map roles like transport command center supervisors, escalation managers, and helpdesk agents to specific shifts, linking to the MSP governance structure or Transport Command Centre model.

-

Escalation matrix and real escalation logs. Procurement should ask for the live escalation mechanism and matrix with role names and response-time commitments. They should also request redacted incident or SOS transcripts from late-night shifts showing how an issue was detected, who responded, timestamps for each step, and how closure was confirmed against SLAs.

-

Incident and on-time performance statistics by timeband. A mature operator should present last-30–90-day metrics broken down by day vs night, including OTP%, exception count, safety incidents, and complaint closure SLAs. Evidence such as “98% on-time arrival during Mumbai monsoon” or command-center dashboards and alert supervision system screenshots helps separate marketing claims from live operational control.

-

Business continuity and contingency playbooks. Buyers should see the vendor’s business continuity plans for cab shortages, political strikes, tech failures, and natural disasters, with clearly assigned responsibilities and mitigation steps such as buffer vehicles, backup systems, and local authority coordination.

-

Real customer references from similar timebands and profiles. Procurement should insist on speaking with operational counterparts from current clients of similar size, city mix, and night-shift intensity, especially where women’s safety and EV operations are in scope. References are most credible when they confirm punctuality, escalation handling quality, and stability during crises.

If a vendor cannot provide timeband-sliced metrics, redacted incident logs, or concrete BCP documents, the 24x7 claim is likely marketing rather than a proven command-center capability.

What should Finance ask so disruption-related costs (backup cabs, towing, waiting, emergency substitutions) don’t turn into unbudgeted exceptions every month?

B1218 Controlling escalation-related exception costs — In India’s corporate mobility operations (EMS), what should a CFO ask to ensure escalation and disruption costs are predictable—like backup vehicle premiums, towing, waiting, and emergency substitutions—and that these don’t become ‘unbudgeted exceptions’ every month?

In Indian EMS operations, a CFO should insist that all escalation and disruption costs are defined as standard, pre-priced line items in the contract and tightly mapped to SLA breaches and exception codes in monthly MIS. The CFO should also demand trip-level data that reconciles every “extra” charge (backup vehicles, towing, waiting, emergency substitutions) back to a documented incident category, so nothing appears as an unexplained exception.

The first focus area is commercial structure. The CFO should ask how backup vehicle deployment, towing, peak-hour waiting beyond free limits, and emergency substitutions are priced, capped, and approved. The CFO should also ask whether these are covered via pre-agreed buffers, FTE-style standby, or explicit per-incident rates in the billing model. Every escalation cost should sit inside clear models such as monthly rental, per km, trip based, FTE, or pay-per-usage, not as ad-hoc line items.

The second focus area is data and reconciliation. The CFO should ask for an indicative management report that tags all escalations to specific trips, routes, and timebands and confirms that billing systems support centralized, timely, and accurate reconciliation. The CFO should also insist that the billing process flow, from duty slip to invoice, contains checkpoints for tariff mapping, customer approval, and online reconciliation, so exceptions cannot bypass controls.

The third focus area is governance and BCP. The CFO should ask to see the business continuity plan for cab shortages, technology failures, strikes, and disasters and how cost treatment is defined in each scenario. The CFO should also ask for a clear escalation matrix showing who can approve emergency substitutions or waiting extensions and how those approvals are logged for audit.

Operational risk is the final focus area. The CFO should ask how on-time delivery is managed through routing, traffic trend analysis, and buffer vehicles, because better OTP reduces emergency use of backup vehicles and waiting. The CFO should also demand periodic reviews where vendor, transport, and Finance jointly analyse exception patterns, convert recurring ones into revised SOPs or commercials, and document any residual discretionary spend as an agreed contingency line in the budget.

How do HR and operations agree on what’s a reportable incident vs a normal service issue so leadership reporting is consistent and employees don’t feel brushed off?

B1219 Defining reportable incidents consistently — In India’s corporate employee transport (EMS), how should HR and operations align on what constitutes a ‘reportable incident’ versus a routine service issue, so that leadership reporting is consistent and employees don’t feel issues are being minimized?

In India’s corporate employee transport, HR and operations need a shared, written incident taxonomy that clearly separates “safety and compliance incidents” from “service-quality issues,” and they must route, log, and report them through different but connected workflows. A common failure mode is mixing late pickups with women-safety or driver-misconduct cases in the same bucket, which dilutes leadership focus and makes employees feel serious issues are being downplayed.

HR and operations should first agree that anything touching physical safety, women’s night-shift security, legal compliance, or reputational risk is always “reportable.” Routine delays, routing changes, or vehicle swaps remain “service issues,” even if they cause dissatisfaction. Business continuity events like cab shortages during strikes or floods also need a separate “disruption” category, with clear BCP playbooks and ownership defined.

A practical alignment method is to co-design a transport incident matrix in the command center environment. Each incident type gets a severity band, mandatory escalation path, and evidence requirement based on safety SOPs, women-centric protocols, and HSSE expectations. HR, Security/EHS, and the Transport Head should review this matrix quarterly, using data from alert supervision systems, safety dashboards, and audit trails to refine what is tracked as “reportable.”

For leadership, only aggregated safety, compliance, and disruption metrics should appear in formal dashboards, supported by auditable trip logs and route data. Service issues should surface as operational KPIs like on-time performance and customer satisfaction, not as “incidents.” This separation keeps reporting consistent, preserves seriousness for true incidents, and reassures employees that safety concerns are never hidden inside routine service noise.

Measurement, alerting discipline, triage, and observability

Sets early-warning targets, actionable alert thresholds, and observable dashboards so teams triage consistently and leadership sees real reliability gains.

What post-incident review rhythm works in practice (daily review, weekly RCA, monthly governance), and how do we make sure actions actually get done so issues don’t repeat?

B1220 Post-incident reviews with follow-through — In India’s enterprise-managed mobility programs (EMS), what is a realistic post-incident review cadence and format—daily ops review, weekly RCA, monthly governance—and how do buyers ensure follow-through so repeat issues don’t erode trust in the command center?

In India’s enterprise employee mobility programs, most mature buyers converge on a three-layer cadence for post‑incident review. Daily operations huddles handle quick triage, weekly reviews close root causes, and monthly governance forums track patterns and enforce accountability. This layering keeps the Transport Head’s command center stable in real time while still giving CHRO, Security, and CFO the audit-ready view they need.

Daily reviews are usually run inside the command center or transport desk. The focus is fresh incidents, SLA breaches, women-safety exceptions, and GPS/app failures in the last 24 hours. The discussion stays tactical. The command team validates facts from trip logs and telematics, checks interim fixes, and decides which cases need structured RCA instead of ad‑hoc firefighting.

Weekly reviews work best as a short but formal RCA clinic. Operations, Security/EHS, and sometimes HR go through a limited set of “priority incidents,” for example repeat late pickups on a route or recurring driver non-compliance. Each case is tied to a specific cause category such as routing logic, driver fatigue, vendor gaps, or tech instability. The output is a written action owner, due date, and a measurable check like improved OTP on that route or zero reoccurrence over a week.

Monthly governance closes the loop at a leadership level. CHRO, Transport, Procurement, Security, and the vendor review aggregated trends rather than individual trips. They look at OTP%, incident rates, safety escalations, complaint closure SLAs, and any women-safety or night-shift deviations. Decisions here typically include route policy changes, vendor tiering, contract-level penalties or incentives, and if needed, changes in fleet mix such as more EVs or dedicated standby cabs.

Buyers ensure follow‑through by insisting that every incident and RCA lives in a single, auditable system rather than scattered emails. Command centers log each case with time-stamped trip data, GPS traces, driver details, and closure notes. This log feeds monthly dashboards that surface repeat patterns, for example the same corridor repeatedly breaching escort rules or the same vendor cluster driving most SLA failures.

Strong governance frameworks also tie incident outcomes directly into vendor SLAs and commercial levers. A common approach is to link payouts or penalties to on-time performance, safety incident counts, and complaint closure timelines. This creates an operational and financial reason for vendors and internal teams to close actions, not just discuss them.

A practical discipline is to limit weekly RCA to a small, highly visible “top 5 issues” list. Each item stays on the list until data proves it has been resolved for multiple weeks, such as through OTP recovery or zero recurrence. This prevents issues quietly resurfacing and eroding trust in the command center’s assurances.

When incident review is integrated with centralized command-center tooling, the same platform that monitors trips in real time can also generate end‑to‑end evidence packs for audits and governance. That alignment lets Transport Heads move from purely reactive firefighting towards predictive operations supported by clear SOPs, measurable KPIs, and stable review cadences.

How should we communicate escalations to employees during disruptions so updates are timely and respectful, but we don’t create panic or expose sensitive location details?

B1221 Employee communication during escalations — In India’s corporate ground transportation command center setup (EMS/CRD), how do buyers design escalation communications so employees receive timely, respectful updates during disruptions without increasing anxiety or exposing sensitive location data?

In India’s corporate ground transportation command centers, buyers design escalation communications as tiered, SOP-driven workflows that give employees short, factual, and low-anxiety updates, while keeping GPS and personal data inside the command stack and not exposed in raw form. Command centers use alert supervision systems, routing engines, and HRMS‑linked rosters to generate templated messages that describe impact and next steps, but they avoid sharing precise coordinates, other riders’ details, or internal risk flags.

Most organizations route disruption signals first into a 24x7 command center or Transport Command Centre rather than directly to riders. The command center aggregates GPS, IVMS, and driver app status, applies business continuity playbooks for strikes, monsoon traffic, or tech failures, and then pushes only necessary information out via employee apps, SMS, or calls. This pattern protects observability and evidence trails for OTP, safety, and compliance, while preventing real-time internal chatter about raw incident feeds.

A common failure mode is over-notifying with technical jargon or unfiltered alerts, which increases anxiety and escalations. Another is under-notifying, which forces employees to call the transport desk repeatedly and overwhelms night-shift teams. Command centers work best when they define clear “what to tell the employee” playbooks for delays, reroutes, escort changes, or vehicle swaps and keep a single-window contact route through the mobility app, call center, or helpdesk.

Typical SOP elements include:

- Strict role separation where only the command center and call center see detailed telematics, while employees see time estimates, vehicle identity, and high-level reasons for delay.

- Use of standardized, non-alarming templates that say what happened, what is being done, and what the employee should do now.

- Escalation matrices that govern when to move from in-app notifications to live calls, especially for women’s night shifts or safety-related deviations.

What should we ask to make sure the command center can run even with poor connectivity—offline workflows, fallback calling, and caching—especially in tier-2 cities or weak network areas?

B1222 Connectivity-resilient escalation operations — In India’s corporate Employee Mobility Services (EMS), what questions should a transport manager ask to ensure the command center can operate during connectivity issues—offline-first workflows, fallback calling trees, and local caching—especially for tier-2 cities or poor network pockets?

A transport manager should treat connectivity loss as an expected condition and probe whether the command center has clear offline SOPs, local data caching, and voice-based fallback so OTP, routing, safety, and escalations still run in tier‑2 cities and weak‑network pockets. The questions should test how rostering, trip monitoring, SOS handling, and reporting continue when apps, GPS, or data links partially fail.

Key question areas include command-center operations, field workflows, safety/compliance, and data integrity.

Command-center / NOC operations - How does the command center continue shift operations when the mobility platform or internet link is partially or fully down? - What are the defined offline-first workflows for rostering, routing, and vehicle assignment during outages? - Is there a documented playbook for “technology failures” in the Business Continuity Plan, with roles and timelines for activation? - What visibility does the NOC retain if live GPS feeds are delayed or missing for part of the fleet?

Fallback calling trees and communication - What is the fallback calling tree when apps or data connectivity fail for drivers or employees? - Are drivers, escorts, and local supervisors given printed or SMS-based escalation matrices with 24/7 numbers? - How is two-way confirmation (pickup, drop, last-mile arrival) handled via voice/SMS when app check-ins do not work? - Who is accountable at 2 a.m. for coordinating calls across drivers, security, and local site teams if the command center screens are frozen?

Driver and field SOPs in poor coverage - What SOPs are given to drivers for routes with known poor network pockets in tier‑2/3 cities? - Are route manifests, trip sheets, and employee lists cached locally on the driver app or shared in advance (PDF/WhatsApp/printed)? - Can drivers navigate and complete trips if the app cannot refresh mid-route? - How are no-shows, diversions, and unscheduled stops captured when live geo-fencing alerts are not available?

Safety, women’s security, and SOS handling - How are SOS and panic workflows handled if the SOS API cannot fire due to low data coverage? - Is there an alternate “voice-SOS” protocol, such as a dedicated emergency helpline or speed-dial from the employee app? - How are women-centric safety protocols (escort rules, home-drop confirmation, call-back on late-night trips) enforced without real-time tracking? - What evidence is retained for incident reconstruction if GPS trails are incomplete due to connectivity gaps?

Local caching, data sync, and audit trails - What specific trip data (roster, route, contact numbers, check-in/out status) is cached offline on driver and employee apps? - When connectivity is restored, how does the system backfill trip logs and ensure audit trail integrity for compliance and ESG reporting? - How are discrepancies between manual duty slips and system trip records reconciled after an outage? - Can the vendor demonstrate that CO₂ reporting and SLA dashboards remain defensible even with intermittent data loss?