How to turn scenario models into a 5-lens playbook for daily reliability

This is a practical playbook for Facility Heads who run in-the-trenches operations. It translates complex scenario models into repeatable guardrails your NOC can execute, even when GPS is flaky or drivers are late. Use the five lenses to align governance, resilience, data integrity, people safety, and site-transition planning into a single, auditable playbook that reduces firefighting and keeps leadership calm.

Is your operation showing these patterns?

- Escalations spike during peak/night shifts with no documented playbooks

- GPS/app outages derail live tracking and force last-minute re-planning

- Vendor response delays push manual coordination and scrambling

- NOC staffing buckles under sudden demand swings or after-hours pressure

- Roster changes and policy updates create late-night routing gaps

- Executives keep asking for status updates even when dashboards show 'green'

Operational Framework & FAQ

Defensible scenario governance

Establish repeatable modeling conventions, anchor baselines to SLA and policy, and require explicit sign-offs before decisions. Tie model outputs to guardrails and governance rather than marketing hype.

In our employee transport setup, what exactly is a scenario model, and how is it different from the regular monthly KPI dashboards?

B0552 Scenario models vs KPI dashboards — In India corporate Employee Mobility Services (EMS), what does a “scenario model” actually mean in day-to-day commute governance, and how is it different from a monthly KPI dashboard for OTP, cost per trip, and incidents?

A scenario model in Indian Employee Mobility Services is a forward-looking playbook and simulation tool that prepares transport teams for specific operating conditions, while a monthly KPI dashboard is a backward-looking report that summarizes what has already happened on metrics like OTP, cost per trip, and incidents. A scenario model changes routing rules, fleet mix, buffers, and SOPs before disruption hits, but a KPI dashboard only tells whether targets were met after the fact.

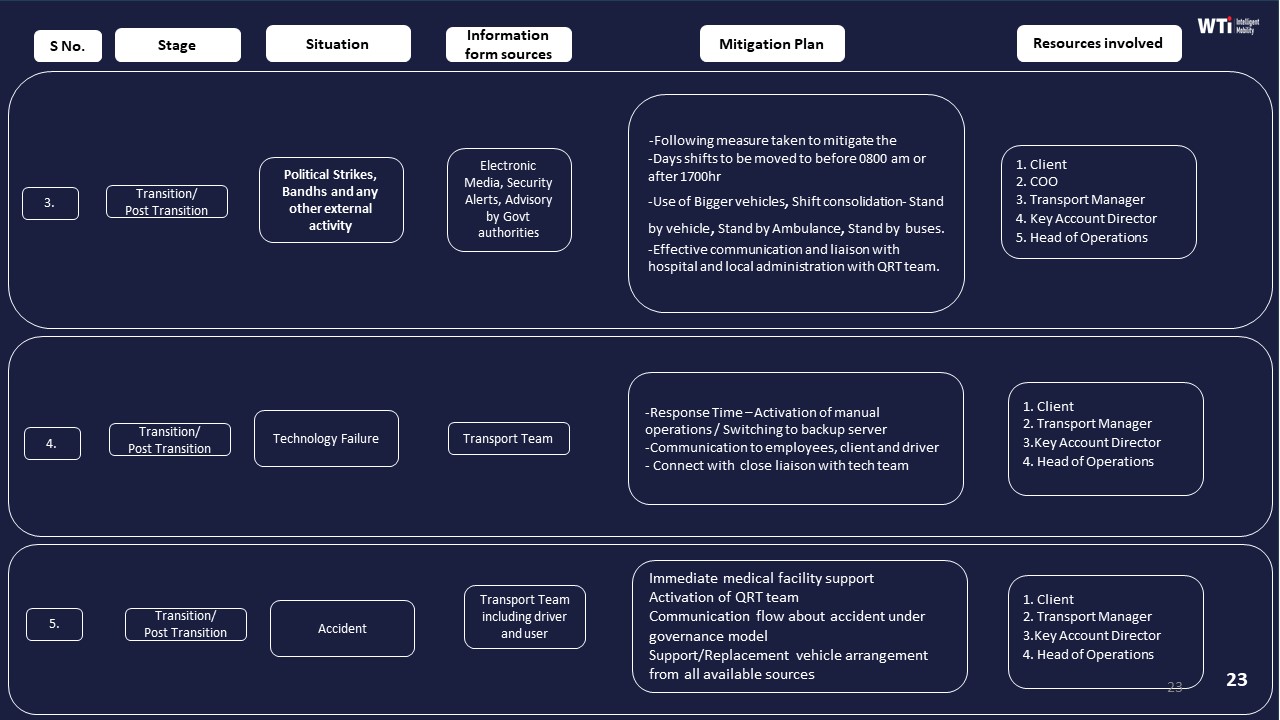

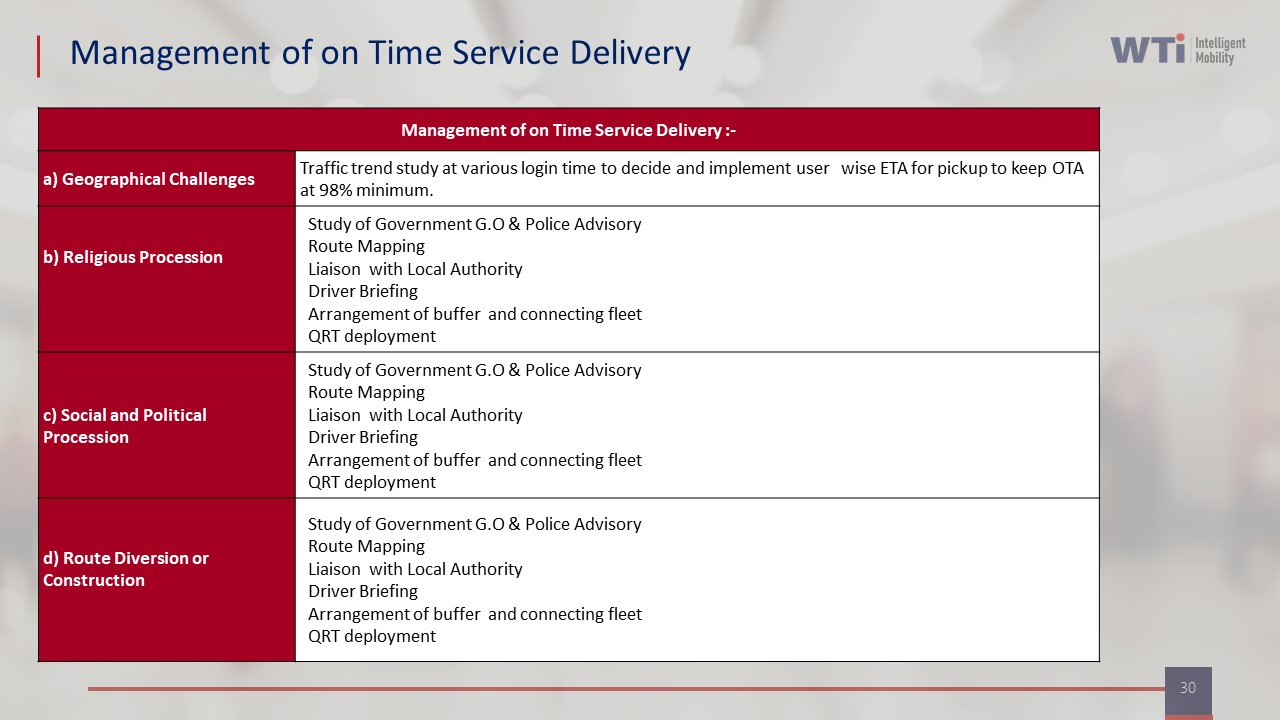

A scenario model is usually built around named operating situations such as “normal working day,” “heavy rain / monsoon,” “political strike,” “citywide event,” or “partial app / GPS outage.” In each situation, EMS teams define expected demand, risk level, fleet requirements, buffer vehicles, escort rules, and special routing logic. Documents like Business Continuity Plans and “Management of On Time Service Delivery” show this by mapping how operations adapt to cab shortages, natural disasters, or political strikes using predefined mitigation steps and additional vehicles.

Scenario models also encode trigger thresholds and decisions. For example, specific OTP deterioration, weather alerts, or road-closure information can trigger activation of the “monsoon” or “strike” scenario. This changes how the command center allocates vehicles, sets reporting times, manages vendor support, or escalates to leadership. Case studies, such as the Mumbai monsoon routing example, evidence how a scenario model plus real-time command-center supervision can maintain a 98% on-time arrival rate under stress.

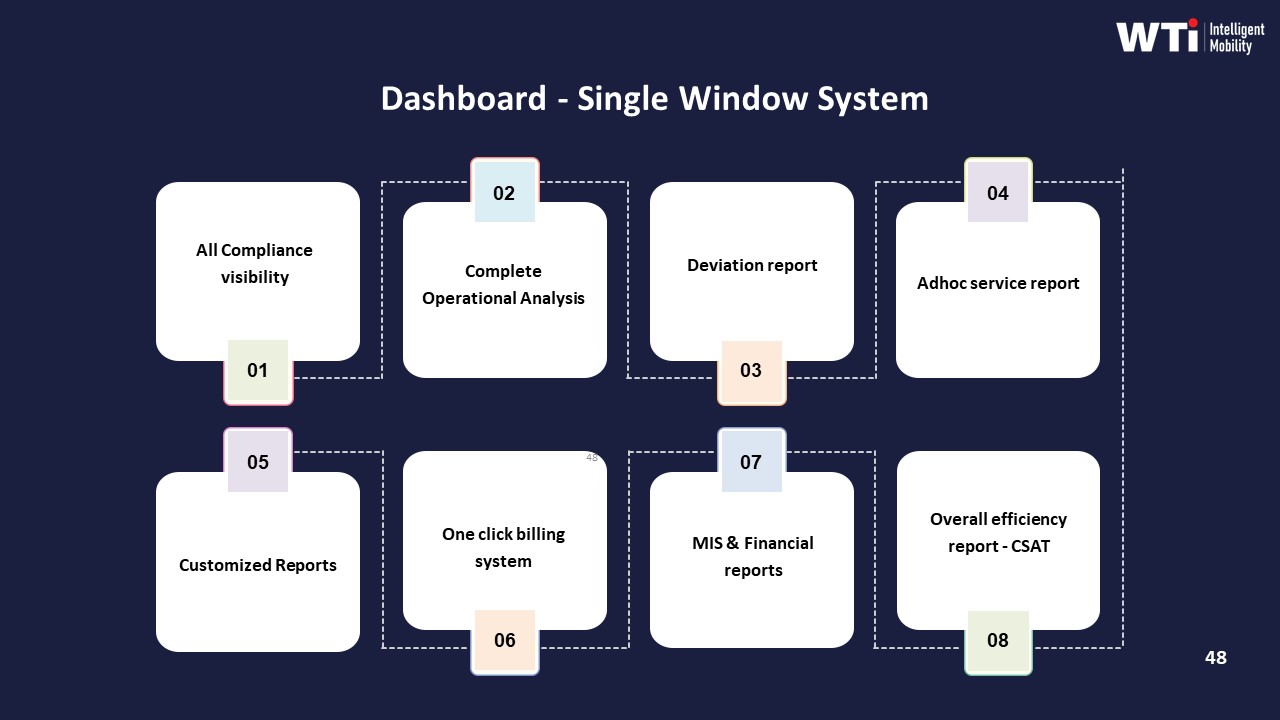

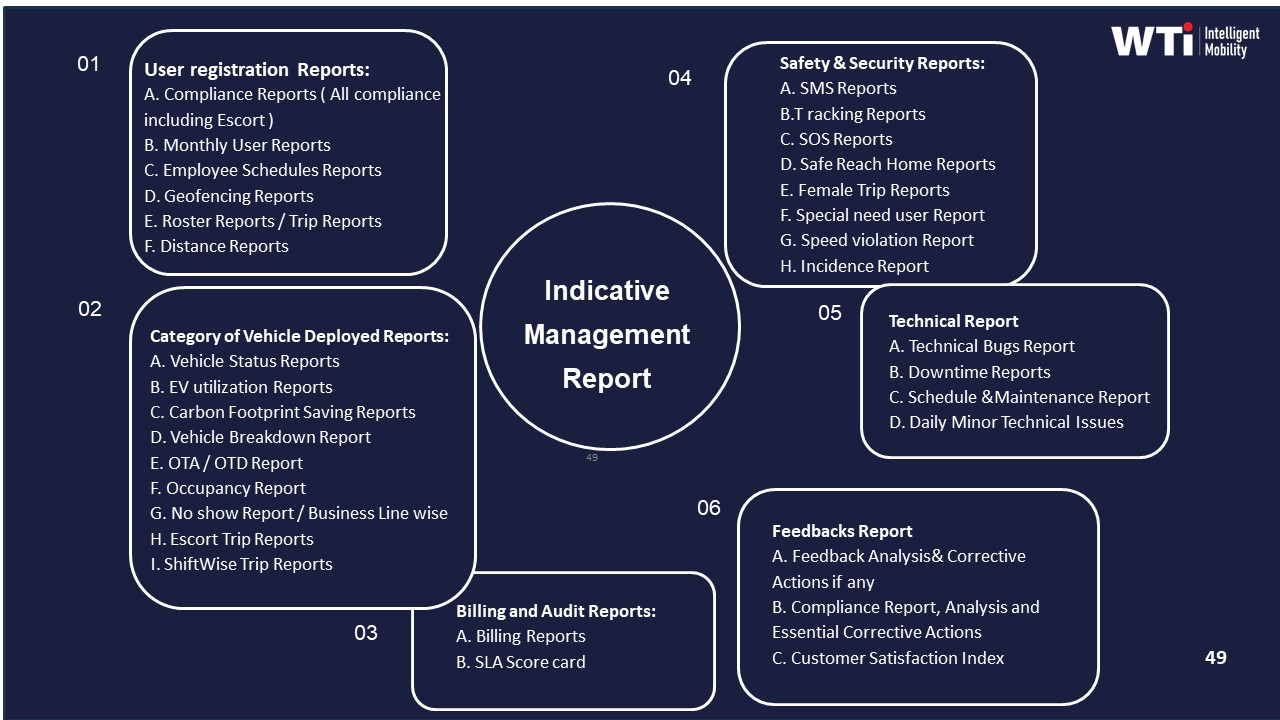

By contrast, a monthly dashboard aggregates performance indicators like OTP%, cost per kilometer, fleet uptime, incident counts, and user satisfaction into a single window for review. Artifacts such as the “Dashboard – Single Window System,” “Indicative Management Report,” and tech-based performance collateral illustrate these dashboards as tools for compliance visibility, operational analysis, and SLA tracking. They support governance, QBRs, and contract discussions, but they do not themselves change live routing or driver instructions.

For day-to-day commute governance, operations teams typically use scenario models to shape live decisions in the command centre and to drive early alerts and playbooks, and then use monthly dashboards to validate whether these choices delivered the expected reliability, safety, cost, and ESG outcomes over time.

Why do companies like ours need what-if models for demand and policy changes if we already have SLAs and penalties with the transport vendor?

B0553 Why scenario models are needed — For India corporate ground transportation programs (EMS/CRD), why do buyers build what-if scenario models for demand volatility and policy changes instead of relying on vendor SLAs and penalty clauses alone?

Buyers in India build what‑if scenario models for demand volatility and policy changes because SLAs and penalty clauses only address failures after they occur, while scenario models help design a transport program that remains stable under stress. Scenario modeling turns unpredictable attendance, hybrid work, and regulatory shifts into quantified capacity, routing, fleet-mix, and commercial decisions that reduce the need for firefighting and escalation.

Vendors can commit to OTP, safety, and uptime through SLAs, but they cannot control core enterprise drivers such as roster volatility, hybrid WFO/WFH switches, new night-shift rules, or sudden site expansions. Scenario models let organizations test different EMS and CRD configurations against these internal uncertainties. This enables buyers to define seat-fill targets, dead-mile caps, and buffer capacity policies that keep Vehicle Utilization Index and Cost per Employee Trip within acceptable bands even when patterns change.

Most Indian mobility programs also face structural constraints such as fragmented multi-city supply, evolving women-safety norms, DPDP-driven data policies, and emerging ESG disclosure needs. Scenario models help buyers understand how changes in escort rules, route approval logic, EV penetration, or shift windowing will impact On-Time Performance, safety incident risk, and gCO₂/pax‑km before they commit to contracts. This reduces dependence on post‑facto penalties and renegotiation.

A common failure mode is over-optimizing SLAs for a “steady state” that rarely exists. When demand spikes, routes change, or work patterns shift, static SLAs and penalty ladders generate disputes rather than operational resilience. What‑if models allow Procurement, Finance, HR, and Transport Heads to align on outcome-linked commercials, flexible fleet sizing, and routing policies that can be adjusted over time, while still remaining auditable and defensible to internal audit and regulators.

How can HR tell if our OTP issues are because attendance keeps changing or because the operator is underperforming, using a simple what-if model?

B0555 Diagnose volatility vs performance — In India corporate EMS shift transport, how can an HR head diagnose whether recurring OTP failures are mainly a demand-volatility problem (attendance swings) versus an operator performance problem, using simple scenario-model inputs rather than opinions?

An HR head can separate demand-volatility issues from operator-performance issues by building a few simple “what should have happened” scenarios from roster data and then comparing them to what actually happened in trip logs and OTP reports. The gap between planned demand and supplied capacity indicates volatility, while gaps between supplied capacity and on-time performance indicate operator failure.

The starting point is to lock one clean baseline week per major shift pattern and export three basic inputs per shift window. HR should collect planned attendance from HRMS rosters, actual swipe or login attendance, and transport trip logs with ETA, ATA, and vehicle counts. For each shift, HR can calculate percentage attendance deviation from plan, vehicle utilization per trip, and OTP by vendor or route cluster.

HR can then model three simple scenarios. In a “stable demand” scenario, HR assumes the actual attendance equals planned attendance and checks if OTP is still poor when seat-fill is within a reasonable band. In a “high volatility” scenario, HR uses actual attendance and checks whether large late bookings or cancellations correlate tightly with OTP drops on specific shifts or days. In a “capacity stress” scenario, HR identifies shifts where seat-fill is consistently very high and checks if OTP degrades mainly on those high-load trips.

Clear diagnostic signals are straightforward to read. If attendance swings are small but OTP is erratic across vendors, times, or depots, then operator performance and command-center discipline are the primary issues. If OTP failures cluster on days or shifts with big last-minute attendance changes or high seat-fill, then demand volatility and current routing rules are the real constraints, and HR should work on cutoff policies, roster-freeze times, and dynamic routing support rather than simply escalating the operator.

As Finance, what scenario-model outputs help us explain cost spikes during audits without relying on the vendor’s black-box assumptions?

B0556 Audit-defensible cost spike narratives — For a CFO evaluating India corporate employee transport (EMS), what scenario-model outputs are most defensible in an audit to explain cost spikes—dead mileage, seat-fill drops, or surge fleet add-ons—without getting trapped in vendor-provided black-box assumptions?

For a CFO evaluating Indian employee mobility services, the most defensible scenario-model outputs are those that decompose cost into simple, audit-traceable drivers such as cost per employee trip, dead mileage percentage, seat-fill ratio, and surge fleet exposure, with each metric directly reconcilable to trip logs, rosters, and invoices. Outputs that separate structural pattern shifts (policy, attendance, shift timing) from vendor-behavioral factors (routing efficiency, fleet mix, EV vs diesel) help explain cost spikes without relying on vendor “black-box” assumptions.

The strongest models start from a clean historical baseline for cost per kilometer and cost per employee trip. They then quantify how much variance comes from three primary levers in EMS: dead mileage above a defined cap, seat-fill drops versus target pooling, and incremental surge vehicles beyond contracted buffers. Each lever should be expressed as both a percentage change and a rupee impact, with a clear link to underlying operational data such as trip manifests, route plans, and attendance records.

A common failure mode is accepting composite “optimization savings” or “algorithmic” adjustments that cannot be re-created from first principles. Auditable models instead expose assumptions explicitly, such as average kilometers per shift window, minimum pooling thresholds, or buffer capacity for peak bands, and allow Finance to sensitivity-test them. This avoids vendor lock-in to proprietary indices and reduces dispute risk during audits.

For EMS in India, CFOs gain particular defensibility when scenario outputs align to outcome-linked procurement logic. Examples include OTP-linked penalties, seat-fill linked incentives, and caps on chargeable dead mileage per route or per vehicle. When scenario models forecast cost spikes, they should also show whether contracted SLAs, such as on-time performance or safety incident rates, improved sufficiently to justify higher spend.

Useful, audit-ready scenario outputs typically include: - Variance-bridges that reconcile month-on-month cost per employee trip changes into contributions from dead mileage, seat-fill, surge add-ons, and shift pattern changes. - Fleet utilization indices that separate contracted capacity from actual used capacity, highlighting under-utilization versus genuine demand spikes. - Route adherence and trip adherence scores that indicate whether higher costs correlate with increased reliability or merely inefficiency.

Scenario modeling becomes most robust when it incorporates basic ESG-linked parameters, such as the share of EV kilometers and emission intensity per trip, but keeps these separate from core financial drivers. CFOs should treat sustainability metrics as an additional layer, not as a substitute for hard unit-economics, so that EV adoption or green initiatives can be defended both on cost and on ESG grounds.

How can scenario models help Procurement avoid picking a vendor that looks cheapest on paper but fails when demand fluctuates?

B0559 Preventing lowest-bid trap — For India corporate EMS vendor governance, how do scenario models help Procurement avoid being blamed for “choosing the wrong vendor” when demand volatility makes the lowest-cost bid look artificially attractive on paper?

Scenario models help Procurement test vendor performance under realistic demand volatility instead of relying only on static, “average case” unit rates. They prevent “wrong vendor” selection by showing how low bids behave when shifts spike, attendance swings, and routing complexity increases, so Procurement can document why a resilient vendor beats the cheapest one in real operating conditions.

Scenario modelling replaces single-point comparison with a structured set of “what-if” runs that mimic hybrid work, peak seasons, and city-specific constraints. Each scenario can apply the same assumptions on OTP targets, seat-fill, dead mileage caps, escort rules, and SLA penalties to all bidders. Procurement can then compare not just rate cards, but cost per employee trip, SLA breach exposure, and fleet utilization across scenarios.

A common failure mode is evaluating vendors only on base CPK or CET without modelling late-night windows, low-demand tails, or event spikes. In practice, the lowest-cost bid often deteriorates fastest when driver retention, fatigue management, and buffer capacity are stressed. Scenario models surface these fragilities before award, so Procurement is not surprised later by hidden dead mileage, surge add-ons, or chronic OTP failures.

For India EMS programs, robust models usually include at least three dimensions.

- Hybrid attendance patterns across weeks, including WFO/WFH shifts and no-show rates.

- City and corridor differences in traffic, monsoon disruption, and night-shift women-safety routing.

- EV vs ICE fleet mix where relevant, including range, charging windows, and uptime parity.

Scenario outputs also create a defensible audit trail. Procurement can attach the model, input assumptions, and comparative outcomes to the RFP file, proving that vendor choice was based on lifecycle economics and SLA risk, not headline tariffs. This documentation is what shields the category manager when leadership later asks why the absolute lowest bidder was not selected, or why a chosen vendor remains the right call despite short-term cost noise.

In an RFP, what what-if questions should we ask to uncover hidden assumptions like peak buffers, dead-mile caps, and roster change delays?

B0565 RFP prompts for hidden assumptions — For India corporate EMS procurement, what scenario-model questions should be asked during RFPs to surface hidden assumptions around peak vs non-peak buffers, dead-mile caps, and roster change latency that vendors often gloss over in proposals?

For corporate EMS RFPs in India, buyers should use concrete “day-in-the-life” scenarios to force vendors to expose how they size buffers, control dead mileage, and handle roster changes under real constraints. Scenario questions work best when they ask for numbers, SOPs, and data, not generic assurances.

Below are concise, scenario-based prompts that surface hidden assumptions.

-

Peak vs non-peak capacity buffers

Ask vendors to respond with exact vehicle counts, lead times, and SLA commitments. -

“For a site with 1,000 daily commuters and three main shift windows (07:00–16:00, 14:00–23:00, 22:00–07:00), what percentage capacity buffer do you plan for each window on: a) a normal weekday, b) a month-end payroll day, c) a festival week with road closures?”

- “In the above scenario, how many standby vehicles per 100 active vehicles will be: a) physically on-site, b) within 30 minutes’ reach, c) purely ‘on paper’ via your wider network?”

-

“If OTP falls below 98% for the 22:00–07:00 window for three consecutive days due to high demand, what is your escalation and buffer-adjustment SOP, and what are the concrete timeframes for adding more fleet?”

-

Dead-mile caps and routing economics

Force vendors to show how they cap dead mileage and who pays when caps are breached. -

“For a campus located 25 km from the city center with dispersed employee residences, what dead-mile cap (as % of total km) do you design for: a) first trips of the day, b) last return-to-garage trips, c) inter-shift repositioning?”

- “If actual dead mileage exceeds your proposed cap by 5%, 10%, and 15% in any month: a) how is this detected, b) how is responsibility apportioned (client policy vs routing engine vs vendor network), c) who absorbs the additional cost under your commercial model?”

-

“Share an anonymized example (before/after) where you reduced dead mileage by ≥10% through route re-design. What design assumptions changed?”

-

Roster change latency and hybrid attendance

Make them quantify how fast they can safely react to late changes. -

“Assume 20% of employees in a shift change their status (WFH vs WFO, different pick-up location) inside the last 90 minutes before shift start.

a) What is your cut-off time for guaranteed routing with SLA-grade OTP?

b) What % of late changes will you accept with full SLA commitment, and what % will be treated as ‘best effort’?” - “Describe, step-by-step, what happens in your system if 10% of riders cancel and 5% new riders are added 45 minutes before shift start.

a) What is the maximum routing recalculation time you design for?

b) What is the maximum additional travel time you allow for existing riders when re-clustering routes?” -

“For each of these latency bands (T‑120, T‑60, T‑30, T‑15 minutes to shift start), specify: a) what types of changes are allowed,

b) what SLA you commit to,

c) how OTP is calculated and reported so Finance and HR can audit the impact.” -

Night-shift and adverse-condition stress tests

Tie buffers, dead miles, and latency to realistic Indian constraints. -

“On a heavy-rain evening with 30% traffic slow-down and 10% driver no-shows in a metro, what are your pre-defined rules for: a) increasing buffer vehicles,

b) temporarily relaxing or tightening dead-mile caps,

c) freezing further roster changes?” -

“In a night-shift scenario with women-first routing and escort compliance, what is the maximum additional buffer you add to fleet and time compared to day shifts, and how is this costed?”

-

Data, governance, and transparency around these assumptions

Ensure the assumptions are visible and auditable, not hidden in the black box. -

“Which specific dashboards or reports will show: a) daily peak vs non-peak buffer utilization,

b) dead-mile % by site and shift window,

c) average and 95th-percentile roster change latency from request to confirmed route?” - “How often can buffer levels, dead-mile caps, and change cut-off times be renegotiated under the contract, and what data triggers those reviews?”

These scenario questions push vendors to expose operational guardrails, not just headline SLAs. They also give Facility/Transport Heads concrete levers to manage OTP, cost per km, and daily firefighting once operations go live.

What’s the minimum scenario model we should run before approving a big policy change or a new site launch—without getting stuck in over-analysis?

B0569 Minimum viable scenario model — For India corporate EMS leadership, what’s the simplest “minimum viable” scenario model to run before approving a major policy change or site launch, so the organization avoids analysis paralysis but still de-risks the big unknowns?

The simplest “minimum viable” scenario model for India EMS leaders is a three-scenario stress test built around one route archetype, one shift band, and one bad-night event, using a small, fixed KPI set. This model trades perfect accuracy for fast learning, so leadership can see reliability, cost, and safety impact before any major policy change or new site launch.

The starting point is a single “reference corridor” per site. This reference corridor is a representative cluster of employees and routes that includes one high-risk band such as a 21:00–06:00 shift with women employees. EMS leadership defines a baseline using existing data for this corridor that includes OTP%, trip fill ratio, cost per employee trip, incident and escalation rate, and no-show rate. This becomes the control to compare against policy or design changes. Policy examples include seat-fill thresholds, escort rules, EV share, or aggregation rules during hybrid workdays.

The minimum viable scenario set then tests three cases on that corridor. The first case is a “business-as-usual” baseline under the current policy. The second case is the “new-policy steady state,” such as higher pooling or more EVs, simulated against the same shift windows and headcount. The third case is a “bad-night shock” where one constraint fails, such as a 20% vehicle shortfall, app downtime for 30 minutes, or a sudden storm, and leaders measure how fast operations recover and what buffers are required.

To keep the model practical, the calculation can stay spreadsheet-based instead of using a full routing engine. Route lengths can be approximated by historical averages per zone and time band. Fleet capacity can be represented by simple ratios like vehicles per 100 employees per shift window, with dead mileage caps or target seat-fill percentages applied. The focus is on relative deltas between scenarios rather than precision forecasting of every trip.

EMS leadership can then set simple go/no-go criteria tied to this corridor-level model. A typical guardrail is that the new policy must not push OTP% below a defined threshold, increase escalations per 1000 trips beyond a tolerance, or increase cost per trip beyond an agreed band unless there is a clear safety or ESG justification. If the bad-night scenario still stays within business continuity thresholds for backup vehicles, manual rostering, and command center load, the policy or site design can move from pilot to scale with confidence.

This minimum model also helps avoid analysis paralysis by deliberately excluding lower-impact variables from the first pass. Instead of modeling every micro-variant, the EMS team can freeze assumptions like driver mix, vendor mix, or small-time-band variations. Only high-leverage levers such as fleet mix, pooling rule, escort or women-safety rule, and EV penetration level are changed between scenarios. This keeps the question focused on whether the system holds under realistic stress, not whether the forecast is perfectly precise.

How do we test if a vendor’s AI routing actually works when we simulate attendance spikes, route blocks, or tighter pickup windows?

B0571 Stress-testing AI routing claims — For India corporate EMS, how can a buyer test whether a vendor’s “AI routing” claims hold up under scenario-model stress tests like sudden attendance spikes, blocked routes, or tightened pickup windows?

For India corporate EMS, a buyer can validate a vendor’s “AI routing” by running controlled, data-backed simulations against known stress scenarios and then checking hard KPIs like OTP%, seat-fill, dead mileage, and exception-closure time before signing scale contracts. The goal is to move from demo narratives to measurable, repeatable outcomes under hybrid-work volatility, safety constraints, and real traffic conditions.

A robust test starts with synthetic but realistic rosters that reflect shift windowing, hybrid attendance, women-first policies, and escort rules. Transport or HR teams should provide historical trip data and define clear KPI baselines such as On-Time Performance, Trip Fill Ratio, and dead mileage. The vendor’s routing engine should then run multiple “what-if” scenarios on this same data, particularly sudden attendance spikes, blocked routes, and tightened pickup windows. AI routing holds up when it consistently maintains OTP and fleet utilization while keeping exception counts and manual overrides low.

Scenario-model stress tests work best when the command center and NOC teams are involved. Operations should track how quickly the routing engine recalibrates under constraints like last-minute bookings, no-shows, and temporary geo-fencing blocks. A common failure mode is that algorithms look efficient on paper but rely on manual dispatch when GPS, driver apps, or telematics streams become unreliable, which exposes weak observability and routing logic. Strong vendors can show audit trails and explain route changes, while weak ones cannot connect their “smart routing” to verifiable service-level metrics.

How can Finance use scenario models to design outcome-linked pricing without creating incentives for the vendor to game closures or avoid tough routes when demand changes?

B0572 Avoiding perverse incentives in contracts — In India corporate EMS contracting, how can Finance use scenario models to set outcome-linked commercials that don’t accidentally incentivize vendors to game the system when demand volatility changes (e.g., manipulating closures or avoiding hard routes)?

Finance teams in India EMS contracts can use scenario-based modeling to stress-test outcome-linked commercials against demand volatility and explicitly cap where vendors can benefit from “gaming” behavior. The core principle is to simulate multiple demand and risk scenarios in advance, and then design KPIs, thresholds, and payout curves so that vendors earn more only when reliability, safety, and cost efficiency improve together, not when ticket closures are delayed or hard routes are avoided.

Scenario models work best when they use real EMS demand patterns, including hybrid attendance, shift windowing, peak vs non-peak capacity, and route difficulty clusters. Finance can combine trip logs, seat-fill data, OTP%, no-show rates, and dead mileage to build a baseline picture of costs per employee trip and per km under “normal,” “peak,” and “disrupted” conditions. These baselines allow Finance to model how different SLA-linked payout formulas behave when volumes spike, drop, or shift geographically.

To reduce gaming risk, outcome-linked commercials should avoid single-metric dependence. OTP-based payouts can be paired with route adherence audits, Trip Adherence Rate, and exception detection-to-closure time so vendors cannot simply cancel or reclassify difficult trips. Seat-fill incentives can be bounded by dead mileage caps so vendors do not over-consolidate at the expense of reliability. Finance can run sensitivity analyses to see how vendor revenue changes if demand swings between different occupancy levels, or if more trips fall into “hard route” buckets.

Three design moves are particularly useful for Finance teams setting these contracts:

- Define scenario bands and guardrails. Finance can predefine demand bands (e.g., low, medium, high attendance or route-mix profiles) and simulate vendor earnings under each band using historical EMS data. Guardrails can cap incentive payouts as a percentage of base revenue per band. This prevents windfall gains in unusual weeks and discourages behavior such as routing away from known congestion corridors purely to protect OTP, because payout upside is constrained unless overall reliability and coverage stay within agreed thresholds.

- Use composite indices instead of raw KPIs. A Service Level Compliance Index that blends OTP%, Trip Adherence Rate, exception closure time, safety incident rate, and seat-fill can dilute the effect of manipulating any single KPI. Finance can scenario-test how the index moves if, for example, OTP is protected by cancelling trips on difficult routes, versus actually improving routing through dynamic dispatch. In such a model, cancelling or avoiding hard routes would hurt Trip Adherence and coverage components, lowering the overall index and thus payouts.

- Encode “hard route” and disruption logic upfront. Finance and Transport can jointly tag routes and timebands by risk and difficulty, based on geo-analytics, monsoon impact, night-shift safety requirements, and congestion history. Scenario models can then test separate payout curves for normal vs hard routes, ensuring vendors are not penalized for taking on difficult corridors. Contracts can mandate minimum service levels per difficulty tier and use Random Route Audits to verify that vendors are not steering away from tagged hard clusters when demand becomes volatile.

When building these models, a common failure mode is ignoring vendor cost structures under low-utilization or high-variance scenarios. Finance should therefore test unit economics from both sides, modeling how fleets, duty cycles, and driver fatigue indices behave when volumes oscillate. If scenario modeling shows that certain outcome-linked penalties push vendors below viable margins in high-volatility bands, Finance can introduce buffers, such as peak-capacity retainers or banded penalties, to preserve vendor stability while still protecting On-Time Performance and safety outcomes.

By treating outcome-linked commercials as algorithms to be stressed under multiple operational scenarios, rather than static rate cards, Finance can systematically identify and patch incentive loopholes before contracts go live. This approach aligns with centralized command-center observability, real-time SLA tracking, and data-driven vendor governance, and it supports a shift toward outcome-based procurement without increasing the risk of hidden gaming or degraded service on hard routes.

What operating rhythm makes scenario models usable—who approves assumptions, how often do we refresh them, and what events trigger re-running them?

B0579 Operational governance for scenario models — For India corporate EMS, what governance rhythm makes scenario models actually get used—who signs off assumptions, how often they’re refreshed, and what triggers a re-run (site launch, vendor transition, policy change, or EV scale-up)?

Scenario models in Indian corporate employee mobility are used consistently only when they are embedded into a formal governance rhythm with clear owners, fixed cadences, and defined triggers for re-runs.

Transport or Facility Heads usually own the operational model and first-cut assumptions because they control rosters, routes, and vendor behavior. CHROs and HR Ops typically sign off people-side assumptions such as shift patterns, work-from-office policies, and women-safety routing norms. CFOs or Finance Controllers validate cost baselines, tariff structures, and savings hypotheses so that model outcomes can be defended in audits. ESG or Sustainability Leads sign off any EV or carbon assumptions before those numbers enter ESG or CSR reports.

Most mature organizations review and refresh scenario assumptions at least quarterly so that shift-windowing, seat-fill targets, and cost-per-trip benchmarks stay aligned with hybrid-work patterns. Some enterprises also include a lighter monthly check-in at a transport governance or command center review to catch obvious drifts in OTP, fleet utilization, or cost per kilometer trends. IT and Security teams usually join only when routing logic, data flows, or safety controls change, but their approval is required for any new data sources or telemetry.

Scenario models are typically re-run on specific triggers. Site launches or closures require fresh routing, fleet-mix, and vendor-allocation models. Vendor transitions or tier changes trigger re-modelling of capacity, SLAs, and standby buffers. Policy changes such as new hybrid-attendance rules, women-first night routing, or revised escort norms force updates to demand patterns and safety constraints. EV scale-up initiatives demand new assumptions on range, charging topology, uptime, and emissions so that EV utilization ratio and CO₂ abatement indices remain credible.

In practice, these models are discussed in scheduled transport governance forums such as monthly service-delivery reviews and quarterly business reviews. These meetings map model outputs directly to KPIs like on-time performance, trip adherence rate, vehicle utilization index, cost per employee trip, and incident rates so that scenario work translates into routes, SLAs, and commercial decisions instead of remaining a one-time planning exercise.

How can we use what-if models to set budget guardrails for demand spikes and expansions so we don’t get surprise invoices mid-quarter?

B0581 Budget guardrails for volatility — For a Finance Controller in India corporate EMS, how can scenario models be used to set a downside-risk cap (budget guardrails) for demand spikes and site expansions so Finance doesn’t face surprise invoices and ad-hoc approvals mid-quarter?

Scenario models allow Finance Controllers in Indian EMS programs to pre‑price “what if” demand patterns and site changes into explicit budget guardrails. Scenario models convert uncertain spikes and expansions into bounded, pre-approved ranges for volume, cost per trip, and vendor commercials so finance teams do not face surprise invoices or frequent mid‑quarter approvals.

Scenario modelling works best when it is built on the same KPIs and constructs used in EMS operations. Finance can collaborate with transport and HR to define baseline parameters such as cost per kilometer, cost per employee trip, trip fill ratio, fleet mix, and on‑time performance expectations. These baselines then anchor multiple demand and footprint scenarios, for example adding new shifts, opening a new site, or raising work‑from‑office targets at an existing location.

Finance Controllers can use three families of scenarios to set downside‑risk caps. The first family models volume variance at existing sites, such as +10% or +25% trips in a quarter, with seat‑fill and dead‑mileage assumptions held constant or tightened. The second family models network changes such as new sites or new cities, where route length, fleet mix, and SLA assumptions are recalibrated for each geography. The third family models strategic shifts such as EV adoption in long‑term rental or EMS, where unit‑economics and uptime assumptions are validated using live EV operations data and carbon abatement metrics.

In practice, guardrails emerge when Finance links each scenario to a specific budget envelope and commercial construct. Controllers can set a base budget for the “most likely” case and a clearly defined ceiling for “stress” cases like rapid headcount growth or mandatory full‑office weeks. Contracts can then codify per‑km, per‑trip, or per‑seat pricing bands, escalation rules, and outcome‑based incentives that are valid up to those scenario thresholds.

To keep scenarios auditable and actionable, Finance can insist on three design rules. Scenario inputs should be traceable to HRMS, ERP, and EMS operational data rather than vendor spreadsheets. Scenario outputs should map to the same reporting structure used for monthly reconciliations and cost reviews. Commercial clauses and SLAs should reference explicit scenario bands, so that any movement beyond the guardrail automatically triggers a review rather than silent cost drift.

This approach reduces ad‑hoc approvals and reactive explanations during audits. It also aligns EMS planning with hybrid‑work elasticity, multi‑vendor aggregation, and EV transition strategies without compromising financial discipline.

As CFO, how do I validate the what-if models for RTO and demand changes so our EMS cost-per-trip forecasts don’t fall apart at month-end?

B0583 CFO sanity-check demand scenarios — In India corporate Employee Mobility Services (EMS), how should a CFO sanity-check scenario models for demand volatility (RTO swings, seasonal hiring, ramp-downs) so the forecasted cost-per-trip and dead-mileage reduction won’t blow up during monthly close?

In India EMS, a CFO should sanity-check demand-volatility models by stress-testing them against real attendance patterns, clearly separating fixed vs variable cost blocks, and validating that routing assumptions match on-ground constraints before locking cost-per-trip targets into budgets. Forecasts that do not reconcile back to HRMS rosters, shift windows, and vendor SLAs usually understate dead mileage and inflate savings.

A robust check starts with data provenance. The CFO should insist that all demand and seat-fill assumptions are derived from HRMS or approved roster data, not from app sign-ups or “average utilization” claims. Monthly forecast volumes should map explicitly to known RTO policies, seasonal hiring plans, and planned ramp-downs. A common failure mode is using a single annual utilization number that ignores high-variance weeks such as quarter-ends or festive seasons.

Cost sanity checks work best when the model is decomposed. The CFO should ask to see cost-per-kilometer and cost-per-trip calculations split into fixed components such as minimum guarantees or base fleet buffers and variable components such as per-km charges or per-seat pricing. If the dead-mileage reduction claim is central to the ROI, then the routing engine’s assumptions about shift windowing, pooling rules, and no-show rates must be made explicit and benchmarked against historic Vehicle Utilization Index and Trip Fill Ratio.

To prevent surprises at monthly close, the CFO should request parallel runs where the EMS vendor’s projected billing is reconciled against current billing for at least one or two pilot sites. Any promised savings from dynamic routing, hybrid fleet mixes, or EV adoption should be validated through these pilots before being annualized. Outcome-linked commercials tied to OTP%, seat-fill, and dead mileage can then be layered on, but only after the CFO confirms that the underlying trip and cost data can be exported, audited, and re-calculated independently.

In our RFP, how can Procurement test the what-if models so vendors aren’t burying risk in utilization and dead-km assumptions?

B0585 RFP-proofing scenario assumptions — In India corporate ground transportation, how do procurement leaders pressure-test scenario models used in an RFP so vendors can’t hide commercial risk in assumptions about utilization, detention time, and dead-kilometers?

Procurement leaders in India corporate ground transportation pressure-test vendor scenario models by standardizing assumptions, forcing vendors onto comparable baselines, and then stress-testing commercial outcomes under multiple utilization and delay conditions before award.

Most organizations reduce hidden commercial risk by explicitly defining baseline inputs such as expected seat-fill, duty hours, dead mileage caps, and typical detention time instead of letting each vendor choose its own assumptions. Procurement teams insist that every bidder price against the same Employee Mobility Services or Corporate Car Rental Services service catalog and shift window definitions. This approach is especially important where hybrid work patterns and variable attendance can otherwise mask real Cost per Kilometer or Cost per Employee Trip exposure.

Scenario models are then stress-tested across low, medium, and high utilization bands to see how commercials behave as Trip Fill Ratio, dead kilometers, and wait times change. A common pattern is to run at least one “bad day” case that includes extended congestion, higher no-show rates, and route recalibration to expose how vendors treat detention and extra kilometers. Outcome-linked procurement language ties payouts to On-Time Performance, route adherence, and seat-fill so commercial models are anchored in observable KPIs instead of optimistic volume projections.

Procurement also uses outcome-based vendor governance concepts such as SLA breach rates and exception-closure SLAs to test whether proposed pricing remains viable when operations are managed through a centralized command center with real-time monitoring. Vendors that resist transparent scenario modeling, or that cannot reconcile their pricing to route design and fleet-mix assumptions, are flagged as higher risk even if headline rates appear lower.

How can leadership use a few clear what-if stress scenarios—like site launch, demand spike, vendor failure—to show we’re ready and stop this coming up in every board review?

B0594 Executive stress-case readiness — In India corporate ground transportation, how can a CEO or COO use scenario models to stop recurring board-level questions about “the transport problem” by defining a small number of stress cases (site launch, demand spike, vendor failure) and proving readiness against them?

A CEO or COO can stop recurring board-level questions about “the transport problem” by hard-coding a few recurring stress scenarios into the operating model and then proving, with data and SOPs, that employee mobility will stay reliable, safe, and cost-controlled under those exact conditions. The most practical stress cases in India corporate ground transportation are a new site launch, a sudden demand spike, and a partial vendor or fleet failure, each modeled with clear triggers, playbooks, and measurable KPIs.

For a new site launch, leadership can insist on a standard “go-live mobility pack” that includes a pre-transition plan, a Project Planner-style timeline, clear infrastructural requirements per branch, and an indicative transition plan that runs from pre-transition to fleet deployment. The model should define upfront buffer fleet, command-center coverage, and compliance checks so that launch readiness can be shown as a checklist instead of a promise.

For a demand spike, the CEO or COO can define what constitutes a spike in employee mobility services or corporate rentals, specify temporary capacity buffers, and pre-approve rapid EV or ICE redeployment using project commute or event commute constructs. Fleet utilization, on-time performance, and exception-closure times become the board-facing metrics that prove resilience.

For vendor or fleet failure, a modelled business continuity plan is essential. This includes explicit coverage for cab shortages, political strikes, and technology failures, combined with a dual command-center structure and escalation matrix that shows who acts, within what SLA, when a vendor or system drops. Readiness is proven through documented BCPs, central command-center workflows, and technology-based observability that links incident detection to closure.

A short set of scenario dashboards can then frame board conversations around stress-tested outcomes instead of anecdotes. The dashboards should expose OTP%, exception latency, EV utilization ratio, and cost per employee trip under each modeled scenario, aligned with business continuity, safety, and ESG targets. This shifts the narrative from “will transport fail again?” to “we know what happens in our top three stress cases, and here is the evidence that we remain within agreed service and risk thresholds.”

For CRD, how can Finance model flight delays, airport waiting, and cancellations so we don’t get billing surprises that become an audit headache?

B0598 CRD delay and billing exposure — For India corporate Corporate Car Rental (CRD) services, how should a Finance Controller use scenario models to estimate exposure from flight delays, airport wait time, and cancellation policies so billing surprises don’t become an audit issue?

For Corporate Car Rental airport use in India, a Finance Controller should build simple scenario models that convert flight delays, free‑wait limits, and cancellation rules into expected monthly cost ranges under different behaviours and seasons. These models must mirror vendor SLAs and billing logic so that “extra” airport charges are predicted, provisioned, and fully reconcilable during audits rather than appearing as unexplained leakage.

The starting point is to codify every airport‑related commercial term from current CRD contracts. Each vendor’s free‑wait window, slabs for extra hours or kilometers, night‑time charges, and cancellation/no‑show rules should be translated into clear formulae for “cost per minute of delay,” “cost per missed pickup,” and “cost per re‑dispatch.” These formulae should be linked directly to the contract text so Internal Audit can trace each scenario back to an agreed term.

Scenario inputs should then be layered on top of this tariff logic. Typical dimensions include historical flight delay patterns by route and time band, planned booking volumes by segment (domestic vs international, peak vs off‑peak), cancellation rates from travel desk history, and night‑shift percentages. Finance can then define three standard scenarios per route cluster. One scenario assumes normal operations with historical average delays. A stress scenario assumes seasonal disruption such as monsoon congestion. A control scenario assumes strict policy compliance with tighter booking cut‑offs and fewer last‑minute changes.

For each scenario, the Finance Controller should estimate the share of trips that fall into free‑wait versus billed‑wait buckets and the share that trigger cancellation or no‑show fees. This produces an expected “airport overhead factor” such as “airport trips typically carry plus 8–12% over base fare during monsoon windows due to wait‑time billing.” That factor can then be embedded into monthly budgets and accrual models. Finance can pre‑define thresholds at which variance against these scenario ranges automatically triggers an exception review with the transport desk.

A common failure mode is to ignore behavioural levers. Scenario models should explicitly test the impact of simple policy shifts on exposure. Examples include enforcing minimum booking lead times for high‑risk routes, aligning free‑wait windows to realistic arrival patterns, or routing more senior executive trips to vendors with more generous free‑wait terms but slightly higher base fares. These what‑if comparisons help Finance justify trade‑offs such as “marginally higher contracted base rate reduces unplanned extras and reconciliation effort.”

To avoid audit friction, Finance should ensure the scenario logic and actual invoices share a single source of truth. Trip‑level data from the CRD platform or vendor MIS should export billed base fare, wait‑time minutes, and cancellation flags in a structured format. That feed can be reconciled monthly against the scenario model so that over‑ or under‑spend is explained by documented changes in flight behaviour, volumes, or compliance. When audit teams ask why airport extras increased, Finance can point to the scenario assumptions, variance analysis, and linked operational drivers instead of relying on ad‑hoc explanations.

In practice, a robust approach for a Finance Controller is to maintain a short, recurring checklist for airport exposure governance.

Key items include:

• Updating delay and cancellation assumptions quarterly based on vendor and airline data.

• Reviewing a sample of invoices where billed wait‑time exceeds a defined threshold.

• Publishing a one‑page “airport terms and cost driver” summary for HR and the travel desk so upstream behaviour aligns with the cost model.

How can Procurement write the RFP so all vendors disclose scenario assumptions—traffic, seat-fill, dead-mile—so we can compare fairly and avoid black boxes?

B0600 Comparable scenario disclosures in RFP — In India corporate employee transport, how can a category manager structure an RFP requirement so vendors must disclose scenario model assumptions (traffic, seat-fill targets, dead-mile caps) in a comparable way rather than using proprietary black-box outputs?

In India corporate employee transport RFPs, a category manager can force comparable, non–black‑box proposals by prescribing a standard “assumptions template” and making its completion a scored, non‑negotiable requirement. Vendors then submit model outputs only alongside declared inputs on traffic profiles, seat‑fill targets, and dead‑mile caps in that common format.

A structured RFP usually works best when the category manager fixes the comparison frame in advance. The RFP can define standard shift windows, sample rosters, and geography clusters, and require every bidder to run scenarios on exactly those inputs instead of their own preferred samples. The RFP can also ask for explicit declarations of targeted Trip Fill Ratio, allowed dead mileage per route, and assumed On‑Time Performance percentage for each scenario, so that procurement can line up vendors on the same KPI axes.

Strong RFPs also separate “what you assume” from “what you promise.” The document can ask for a dedicated “Routing & Capacity Assumptions” annex with parameters such as traffic timebands, seat‑fill targets by shift, fleet mix policies, buffers for peak‑hour variability, and routing recalibration frequency. Scenario outputs like cost per employee trip and fleet size are then evaluated only in light of those disclosed parameters.

To keep vendors from hiding critical logic, the RFP can make disclosure of model constraints and guardrails a compliance item. This may include maximum duty hours, rules on routing women at night, and hard caps on dead mileage. Vendors that do not map these assumptions cleanly to required KPIs like Vehicle Utilization Index and Trip Adherence Rate can be marked down or treated as non‑compliant.

A practical RFP structure often includes:

- A standardized input pack describing rosters, sites, and shift windowing.

- A mandatory assumptions sheet listing traffic bands, seat‑fill goals, dead‑mile caps, and peak buffers.

- A KPI mapping table that links each assumption to outputs such as cost per km, OTP%, and Trip Fill Ratio.

How do we link OTP/closure/seat-fill commercials to agreed scenario baselines so we don’t end up fighting every month about what was assumed?

B0601 Commercials anchored to baselines — For India corporate mobility contracts, what is a practical way to tie outcome-linked commercials (OTP, closure SLAs, seat-fill) to scenario-model baselines so Finance doesn’t get trapped in endless disputes about ‘what was assumed’?

For India corporate mobility contracts, the most practical way to tie outcome-linked commercials to scenario-model baselines is to freeze a jointly validated “design baseline pack” before go-live and then pay incentives/penalties only on clearly defined deltas from that pack. Finance needs the baseline to be version-controlled, data-backed, and auditable so that every later dispute can be answered with “this is exactly what we all signed off.”

First, organizations should separate assumptions from outcomes. The baseline pack should capture shift windows, expected attendance patterns, target seat-fill, agreed dead-mile caps, and committed fleet mix for EMS, CRD, ECS, or LTR. This baseline should sit in a single repository linked to roster data, HRMS, and historic trip logs where available. Any subsequent routing or capacity optimization should be traced back to these frozen inputs.

Second, outcome metrics such as OTP%, complaint closure SLA, Trip Fill Ratio, and exception-closure time should be defined as contract KPIs, not as informal expectations. Each KPI needs a clear formula, data source (command center logs, GPS, ticketing), and minimum sample size per month. This reduces arguments about whether a reported OTP value is “real” or selectively sampled.

Third, the commercial logic should be expressed as simple, laddered bands anchored to the baseline. For example, a banded incentive or penalty around OTP linked to the agreed shift structure, or a per-seat saving share once seat-fill exceeds a baseline threshold without breaching safety or compliance limits. Finance can then reconcile payouts against a deterministic rule-set rather than debating assumptions.

To keep disputes low, organizations should mandate three operational disciplines:

- Monthly joint “assumption variance” reviews that distinguish demand-side changes (e.g., new shift timings, hybrid policy changes) from vendor performance.

- Immutable audit trails from the command center that tie trip events, incident logs, and closure SLAs to specific invoices.

- Explicit change-control for any structural assumption shifts, so a new baseline version is created instead of retrofitting commercials to old scenarios.

When baselines are frozen in an agreed scenario model and every change passes through a simple versioned change-control, Finance gains predictable, defendable numbers instead of recurring arguments about “what was assumed.”

What red flags show the scenarios are just sales theater, and what quick checks can ops run before trusting them for a site launch or vendor switch?

B0602 Spotting scenario model theater — In India corporate mobility platform implementations, what are the warning signs that scenario modeling is being used as ‘sales theater’—and what simple reality checks should a Transport Head run before trusting the numbers for a site launch or vendor transition?

In corporate mobility platform pitches, scenario modeling becomes “sales theater” when it hides operational risk, ignores local constraints, or cannot be traced back to real-world execution patterns. A Transport Head should treat any model as unproven until it passes a few simple, shift-level reality checks using operational metrics and on-ground scenarios.

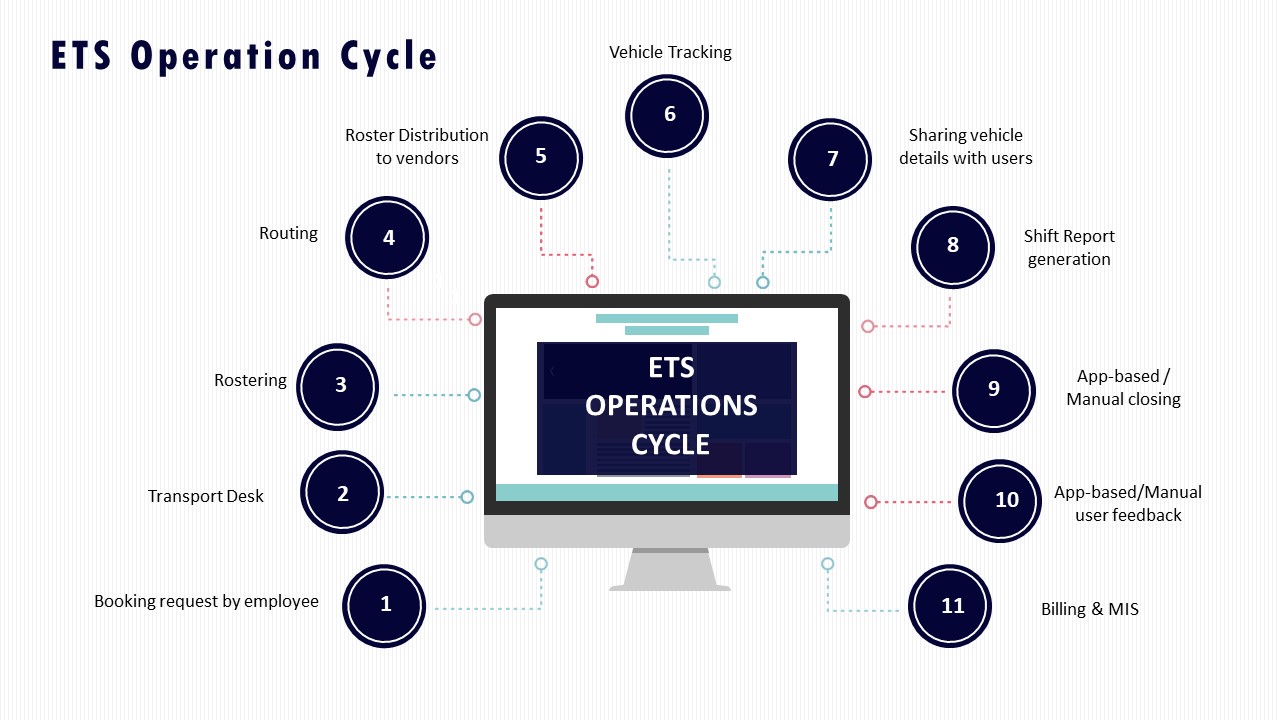

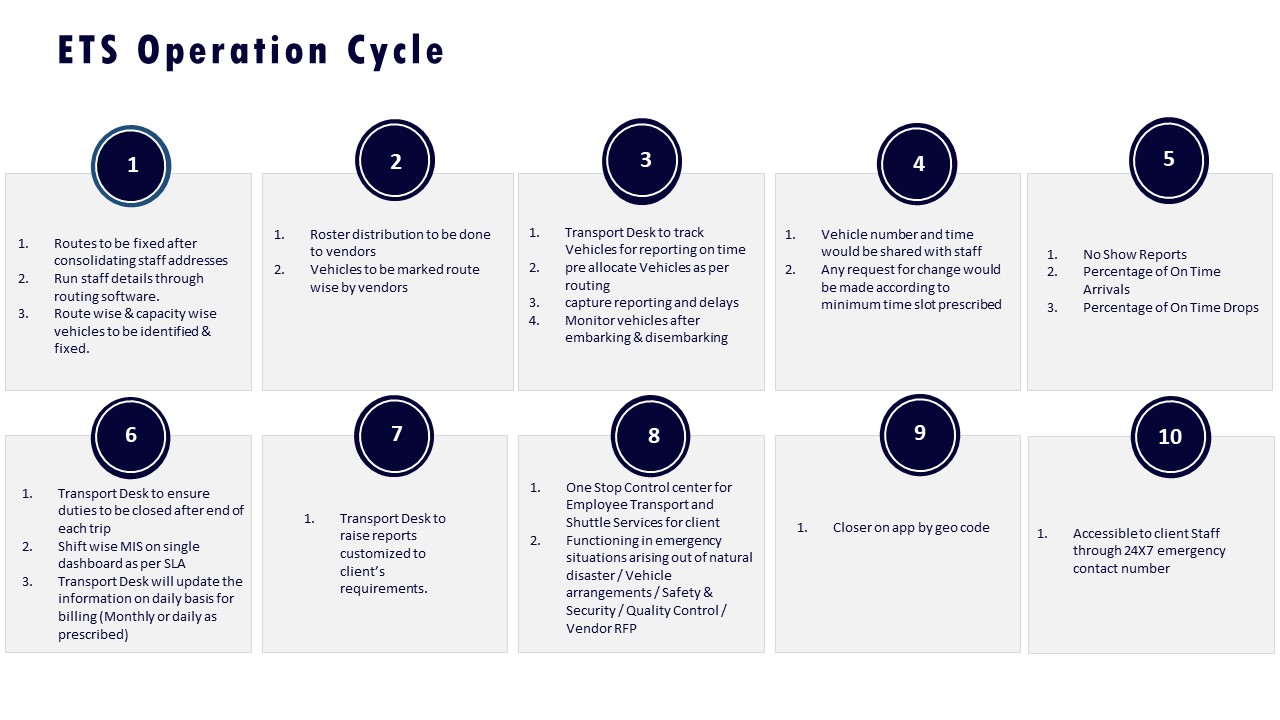

Warning signs that modeling is sales theater include models that assume perfect OTP and fleet uptime with no buffers, ignore predictable disruptions like monsoon traffic or political strikes, and promise double‑digit cost reductions without explaining dead mileage control or seat‑fill assumptions. Another warning sign is when EV scenarios show diesel‑parity uptime and cost per km without disclosing charging topology, night‑shift feasibility, or fleet mix rules between EV and ICE vehicles. Scenario outputs that quote aggregate KPIs such as “10–15% idle time reduction” or “25% fuel savings” but cannot be mapped to a detailed ETS Operation Cycle, routing logic, or Business Continuity Plan are usually not implementation-ready.

A Transport Head should run basic reality checks before trusting any modeled numbers for a new site launch or vendor transition. The first check is to demand route‑wise and shift‑wise OTP, Trip Adherence Rate, and fleet uptime assumptions, and then stress‑test them against known peak traffic windows, hybrid‑work attendance volatility, and night‑shift escort rules. The second check is to ask for a concrete routing and capacity plan that shows seat‑fill targets, dead‑mile caps, and buffers for driver shortages or vehicle breakdowns, aligned to an ETS Operation Cycle and Command Center escalation matrix. The third check is to verify that modeled EV performance matches proven case studies with six‑month trajectories for emissions, cost per km, and uptime, rather than relying on one‑time snapshots.

A Transport Head can also insist on a limited pilot where modeled KPIs like OTP%, Trip Fill Ratio, and Cost per Employee Trip are compared against live data under typical disruption conditions such as heavy rain or partial tech downtime. Platform claims about AI routing or data‑driven insights should be validated by checking whether the NOC or Transport Command Centre can demonstrate live triage, alerts, and closure workflows instead of static dashboards. Any scenario that cannot survive these simple reality checks should be treated as sales theater rather than a basis for launch decisions.

How can Finance use scenarios to set downside guardrails—like demand spikes or vendor shortfalls—so overruns are capped and board-explainable?

B0605 Downside guardrails for budgets — In India corporate mobility budgeting, how should a CFO use scenario models to set guardrails for downside risk (worst-case demand spike, vendor capacity shortfall) so cost overruns are capped and explainable to the board?

In Indian corporate mobility, a CFO should build 2–3 explicit “stress-test” scenarios around demand spikes and vendor shortfalls, assign each a clear cost ceiling, and then lock these ceilings into contracts, SOPs, and board narratives. Scenario models are most useful when they convert messy operational volatility into pre-agreed ranges for cost per km (CPK), cost per employee trip (CET), and total monthly mobility spend.

A practical starting point is to define a “base case” tied to current roster patterns, seat-fill, and on-time performance, and then layer two downside cases. One downside case should model a worst‑week demand spike from hybrid-work changes or events. Another downside case should assume vendor capacity failure that forces use of higher-cost backup supply. Each scenario should quantify dead mileage, extra fleet buffers, and emergency ECS or CRD usage, and translate these into incremental CPK and CET bands.

Guardrails are most credible when scenario outputs are wired into outcome-linked contracts. A CFO can cap exposure by defining per‑trip and per‑km bands, specifying when surge ECS or spot CRD can be triggered, and tying payouts to OTP%, Trip Adherence Rate, and seat-fill, rather than pure volume. Procurement can then embed surge rules, buffers, and substitution playbooks into the Vendor Governance Framework so that operations cannot exceed the pre-modelled spend without a defined approval path.

To make these guardrails explainable to a board, Finance should maintain a simple bridge: base budget, modeled downside allowances for spikes and vendor failure, and the controls that keep real CPK and CET inside those modeled bands. This links EV transition costs, hybrid-work elasticity, and emergency ECS usage back to a single, defensible narrative of predictable, governed risk.

How should Finance push back on ‘guaranteed savings’ scenarios by demanding sensitivity ranges—traffic, attrition, absenteeism, fuel/energy—instead of one best-case number?

B0609 Savings sensitivities not single number — In India corporate mobility, how should a Finance Controller challenge scenario models that promise EBITDA-impacting savings by asking for sensitivity ranges (traffic, attrition, absenteeism, fuel/energy price) rather than a single ‘best-case’ number?

A Finance Controller in Indian corporate mobility should challenge EBITDA-saving claims by forcing vendors to express outcomes as sensitivity ranges across key drivers like traffic, attendance, driver attrition, and fuel/energy prices instead of a single best-case number. Scenario models become decision-useful only when they show how cost per km, cost per employee trip, and EBITDA move under realistic variability in these parameters.

The Finance Controller should first anchor the conversation in current baselines for cost per kilometer, cost per employee trip, dead mileage, and on-time performance. Scenario outputs should then be requested in low, medium, and high bands for each driver, rather than a single average. Finance should insist that route-optimization, EV transition, and utilization gains are modeled under different traffic congestion and hybrid-attendance patterns that reflect actual shift windowing and peak-load behavior.

A common failure mode is accepting route-cost reduction claims that assume perfect attendance and stable driver supply. Another failure mode is ignoring EV-related exposure to energy tariffs and charging availability when projecting TCO and EBITDA uplift. The Finance Controller should ask vendors to show how EBITDA and CET behave when driver attrition rises, or when Trip Fill Ratio and Vehicle Utilization Index fall below targets.

Key practical asks include: - A sensitivity table that links each 5–10% change in OTP, Trip Fill Ratio, and dead mileage to EBITDA impact. - Separate lines for diesel and EV fleets that stress-test fuel and power price bands. - Explicit linkage between SLA breach rates and expected credit notes or penalties in outcome-based contracts.

This approach reduces the risk of over-optimistic business cases and supports cleaner audit narratives about mobility transformation economics.

For our employee commute program, how can our CFO sanity-check scenario models for attendance swings so we don’t get surprise cost spikes later?

B0612 CFO stress-test demand volatility — In India corporate Employee Mobility Services (shift-based employee transport), how should a CFO pressure-test what-if scenario models for demand volatility so the savings story doesn’t collapse when attendance patterns swing and the monthly mobility bill spikes?

In corporate employee mobility in India, a CFO should pressure-test what‑if models by stress‑running them against real attendance volatility, linking every scenario back to unit economics like cost per employee trip and cost per kilometer, and validating that SLAs and billing logic still hold when rosters and shift-fill swing sharply. A robust model must show how OTP, fleet mix, and dead mileage behave across low, medium, and surge demand, and it must keep invoices reconcilable to trip data even when patterns change week to week.

The first failure point is usually optimistic, “average” attendance assumptions. CFOs should insist on scenarios built from historical roster variability, hybrid-work patterns, and peak events in Employee Mobility Services. A common weakness is models that show savings at 85–90% seat-fill but never simulate what happens to CET when fill drops or when extra cabs are rushed in to protect on-time performance.

A second failure mode is commercials that are not outcome-linked. CFOs should test whether per‑km or per‑trip contracts include guardrails such as dead‑mile caps, seat‑fill thresholds, and OTP‑linked incentives and penalties. Models should explicitly show cost impact when attendance falls but minimum guarantees or long‑term rental style commitments continue.

CFOs also need to test data integrity and reconciliation under stress. Scenario models should be validated against how the EMS platform actually records trips, exceptions, and no‑shows, and whether monthly invoices still tie cleanly back to SLA metrics and trip logs when operations are in firefighting mode. Without that, a “savings” story can vanish into disputes and manual reconciliations once volatility hits.

What are the typical unrealistic assumptions in scenario models—seat-fill, dead miles, driver availability—and how do we catch them before signing?

B0617 Spot unrealistic model assumptions — In India corporate Employee Mobility Services, what are the most common ‘too-good-to-be-true’ assumptions in scenario models (seat-fill, dead mileage, driver availability, traffic, roster stability), and how can Operations expose them before a contract is signed?

Most “too-good-to-be-true” mobility models in India assume away variability in people, vehicles, and traffic. Operations teams need to stress-test these assumptions with real rosters, real-route data, and worst-case day simulations before any contract or SLA is finalized.

Common failure-prone assumptions in EMS scenario models relate to seat-fill, dead mileage, driver availability, traffic, and roster stability. These assumptions usually hold in spreadsheets but break under hybrid attendance, night-shift safety rules, and city-specific congestion patterns. When they fail, on-time performance drops, driver fatigue rises, and escalation load on the transport head increases.

Operations leaders can expose weak assumptions by insisting on a data-backed baseline. This baseline should come from recent trip logs, route adherence audits, and on-ground observations for each major shift window. It should reflect actual Trip Fill Ratios, dead-mileage patterns, and no-show rates rather than vendor-declared norms. This protects the Facility / Transport Head from inheriting an unworkable model that only functions on paper.

Practical guardrails that Operations can use before signing a contract include:

• Running pilot routes using the proposed routing logic and shift windowing.

• Comparing promised OTP%, seat-fill, and Cost per Employee Trip against pilot outcomes.

• Stress-testing driver and fleet availability under night-shift and weekend peaks.

• Incorporating buffer capacity and contingency playbooks into the scope and SLAs.

By challenging optimistic inputs early, Operations can convert scenario models into realistic playbooks. This reduces daily firefighting, preserves driver morale, and keeps leadership escalations under control.

How do we connect the scenario model outputs to billing rules so the ‘expected savings’ don’t become monthly invoice fights?

B0618 Link scenarios to invoice rules — In India corporate Employee Mobility Services finance governance, how can Finance tie what-if scenario outputs (volume bands, route changes, shift elasticity) to invoice rules so ‘model savings’ don’t turn into recurring billing disputes?

In India EMS finance governance, Finance can prevent “model savings” from turning into billing disputes by converting every what‑if scenario assumption into an explicit, machine-readable invoicing rule with a matching audit trail. Finance teams need contracts, billing logic, and reports to use the same definitions for volume bands, route changes, and shift elasticity that the optimization models use.

Finance governance usually fails when commercial constructs drift from operational reality. Modelled seat-fill, dead mileage caps, or volume bands sit in a slide deck, while invoices still follow legacy per‑km or per‑cab practices. A common failure mode is unpaid or disputed invoices because Procurement, Transport, and the vendor interpret “band thresholds,” “minimum billing units,” or “cancellation windows” differently.

To keep scenarios and invoices aligned, Finance can enforce a few concrete disciplines:

- Define canonical units and thresholds. Volume bands, CET/CPK baselines, and shift windows should be parameterized in the contract. The same parameters must drive route optimization and the billing engine.

- Bind SLAs and penalties to measurable EMS KPIs. OTP%, Trip Fill Ratio, dead mileage, and no‑show rules should feed directly into credit notes or penalty lines instead of ad‑hoc negotiations.

- Require a single “trip ledger” as source of truth. HRMS-linked rosters, GPS trip logs, and command-center exceptions should reconcile into one data set that both parties use for billing and dispute resolution.

Operationally, this ties into centralized command-center observability, data-driven insights, and indicative management reports that already track utilization, safety, and exceptions. When those same dashboards also compute billable units per band and show how each optimization scenario changes CET/CPK, Finance gains a defendable bridge from scenario modelling to monthly EMS invoices.

How can our leadership tell if scenario modeling will genuinely reduce late pickups and escalations, instead of just giving us prettier dashboards?

B0619 Prove models change outcomes — In India corporate Employee Mobility Services, how should an executive sponsor decide whether scenario modeling is actually ‘solving the recurring board problem’ versus just producing nicer dashboards that don’t change late pickups or escalations?

An executive sponsor should treat scenario modeling as “solving the board problem” only when it changes operational KPIs like OTP%, exception-closure time, and incident rate, not just how dashboards look. Scenario modeling is valuable when it drives different routing, fleet-mix, and contract decisions that reduce late pickups, driver fatigue, and escalations in measurable, repeatable ways.

Scenario modeling helps when it is wired into the real Employee Mobility Services operating model. Scenario outputs must feed roster design, shift windowing, seat-fill targets, dead-mile caps, EV vs ICE allocation, and buffer policies for peaks or monsoon traffic. Modeling is impactful when the Command Center and routing engine use these scenarios to pre-position vehicles, adjust routes by timeband, and change standby logic before disruptions hit.

A common failure mode is treating modeling as a one-time consulting artifact. In that pattern, teams get attractive visualizations and “what-if” slides, but daily rostering, vendor allocation, and escalation SOPs remain manual and reactive. Another failure mode is focusing on long-range carbon or cost projections without linking them to daily SLA governance, exception workflows, and on-ground playbooks.

To distinguish real value from cosmetic analytics, an executive sponsor can insist on three checks:

- Every scenario must specify which knobs change in operations, commercials, or vendor governance.

- Each knob must have a target delta on OTP%, Trip Adherence Rate, no-show rate, or safety incidents.

- The Command Center must have clear SOPs and authority to act on those scenario-driven thresholds.

If scenario modeling does not alter routing rules, fleet buffers, EV adoption sequencing, or escalation triggers, then it is usually just nicer dashboards and not a solution to recurring board-level questions about late pickups and safety escalations.

How do we use scenarios to test if the pricing still works in a worst month—low seat-fill, more reroutes—so Finance doesn’t get shocked at quarter-end?

B0627 Test commercials under worst month — In India corporate ground transportation vendor selection for Employee Mobility Services, how can Finance use scenario models to test whether the vendor’s commercials still hold under ‘worst month’ conditions (higher cancellations, reroutes, lower seat-fill) so the CFO isn’t surprised at the quarter-end?

Finance can pressure-test Employee Mobility Services commercials by building simple scenario models that stress the vendor’s assumptions on demand, routing, and utilization, then pushing those models to a “worst month” envelope before signing. Scenario models should explicitly vary seat-fill, dead mileage, cancellation rates, and reroute frequency to see how Cost per Employee Trip and Cost per Kilometer behave when OTP and SLA adherence are under strain.

A robust model starts from the vendor’s base case. Finance should capture assumed Trip Fill Ratio, vehicle utilization, dead-mile caps, cancellation norms, and the split between peak and non-peak hours. Finance then builds 2–3 stress scenarios that increase no-show and cancellation rates, add unplanned trips and reroutes, and reduce seat-fill on critical shift windows. The objective is to quantify how quickly commercials drift once routing is no longer perfectly optimized.

A common failure mode is accepting per-km or per-seat commercials that look attractive at ideal utilization but break down when hybrid attendance patterns, weather disruptions, or vendor-side driver shortages hit. A well-constructed scenario model forces the vendor to disclose thresholds where extra charges, minimum guarantees, or surge fleets kick in. Finance can then negotiate outcome-linked clauses, dead-mileage controls, and clear caps on special charges. This reduces quarter-end surprises and aligns payouts with OTP, safety, and utilization KPIs rather than optimistic base assumptions.

Operational resilience playbooks

Turn scenario outputs into concrete NOC playbooks, escalation paths, buffer rules, and recovery procedures. Ensure you can execute within 5 minutes during a disruption.

For airport and intercity bookings, what what-if models help us anticipate flight delays, surges, and driver shortages so exec trips don’t get messed up?

B0567 CRD airport/intercity failure modeling — For India corporate CRD (corporate car rental) airport and intercity operations, what scenario models help a travel desk predict failure modes like flight delays, surge demand, and driver availability so executives don’t experience repeated service misses?

For Indian corporate car rental (CRD) airport and intercity operations, travel desks get the most control by running a small set of repeatable “scenario models” around flight behavior, demand spikes, and fleet/driver risk, and wiring those into SLAs, routing rules, and dispatch SOPs. These models do not need to be complex, but they must be explicit, data-backed, and tied to clear decision actions so executives stop seeing repeated misses.

The first scenario model most organizations need is a flight‑linked risk model for airport trips. Travel desks should maintain airline and route-level patterns for early/late arrivals, average turnaround at the airport, and immigration/baggage delay windows, then translate these into buffer rules for vehicle reporting time and free‑waiting time. When live flight status crosses certain thresholds, the model should drive automatic actions like reassigning the car, switching to a standby vehicle, or extending the waiting window within pre‑defined cost caps. This converts random flight delays into predictable triggers for dispatch and escalation instead of one‑off judgments by agents.

The second critical scenario model focuses on surge demand and overlapping trip windows. Travel desks should forecast demand bands by day of week, time‑of‑day, city pair, and event calendar, and then simulate fleet coverage against these peaks. In practice this means modeling how many airport arrivals or intercity departures can overlap within a 60–90‑minute window before SLA risk rises, and pre‑allocating standby vehicles or multi‑vendor capacity for those bands. This approach reduces denial of service, last‑minute outsourcing at high cost, and cascading delays when one overrun trip blocks the next.

The third model should address driver and vehicle availability and fatigue risk. A basic duty‑cycle and rest‑window model tied to intercity trip lengths, night driving, and expected return times can predict when a driver will be legally or operationally unavailable for the next assignment. If the platform tracks actual duty hours, no‑show patterns, and breakdown history at vehicle level, it can flag high‑risk combinations in advance and push the travel desk to reshuffle drivers, split long intercity legs, or move a trip to a backup vendor. This directly reduces last‑minute cancellations and unsafe over‑utilization that often spill over into VIP service misses.

To make these scenario models operational rather than theoretical, travel desks need simple, codified rules tied to their CRD platform or dispatch process. Typical actions include automatic rerouting of the nearest available car for high‑priority executives when a flight ETA changes, dynamic allocation of a buffer pool of vehicles for known surge bands, and pre‑defined escalation matrices when service level risks exceed agreed thresholds. Over time, historical trip, delay, and incident data can refine thresholds and improve predictions, but even basic models, if consistently applied, materially cut recurring airport and intercity failures.

What should we ask to make sure the what-if model includes real downtime coverage—spares, substitutions, and maintenance—instead of assuming perfect uptime?

B0570 Modeling downtime and continuity — In India corporate ground transportation (EMS/LTR), what should a buyer ask to ensure scenario models include realistic downtime coverage—spare vehicle rules, substitution playbooks, and preventive maintenance windows—rather than assuming perfect uptime?

Buyers of employee mobility services and long-term rentals in India should ask explicit, operational questions about downtime scenarios, replacement rules, and maintenance windows, because most failures occur when vendors implicitly assume 100% uptime and do not codify substitution logic in the operating model or contract.

They should probe how the vendor’s target operating model handles cab shortages, natural disruptions, and technology failures. They should also verify that the business continuity plan, fleet governance, and command-center monitoring are tied to concrete uptime and substitution KPIs, not just high-level assurances.

Key questions to ask in RFPs, reviews, and QBRs include:

- Spare vehicles and buffer logic

“What minimum buffer fleet do you commit per site or per X active vehicles for EMS and LTR?”

“Is the buffer defined by shift window, route type, or volume bands, and how is it recalibrated when demand changes?”

“What is your SLA for replacing a down vehicle mid-shift, and how is this measured in your command center?”

- Substitution playbooks and vendor aggregation

“Show the written substitution playbook you use when there is a cab shortage, driver no‑show, or sudden volume spike.”

“How many secondary or tertiary vendors are tagged per location, and what are the rules for activating them?”

“What is the escalation matrix when the primary fleet cannot meet committed OTP or seat-fill during peaks?”

- Preventive maintenance windows and scheduling

“How do you schedule preventive maintenance for LTR and EMS vehicles so that it does not undermine shift adherence?”

“What percentage of fleet can be simultaneously in maintenance, and how is this capped in the planning model?”

“Show an example month where maintenance, fitness, and compliance renewals are visible in your dashboards and roster plans.”

- Business continuity and disruption scenarios

“Walk through your business continuity playbooks for strikes, natural disasters, and fuel or EV charging disruptions.”

“How do you alter shift times, reroute, or re-cluster employees during city-wide disruptions while protecting safety and OTP?”

“Which specific contingency measures from your BCP have you actually executed for existing clients in the last 12–24 months?”

- Technology and command-center handling of downtime

“What alerts does your NOC or command center raise for vehicle breakdowns, GPS loss, or app downtime, and what are the SLA timers for closure?”

“If the routing or mobility app fails during a shift, what is the manual SOP for continuing operations and reconciling trips later?”

“Can we see your alert supervision system screens that track over-speeding, geofence violations, and breakdowns, and how those link to replacement dispatch?”

- Contractualization of uptime and downtime

“Which KPIs in the contract explicitly measure fleet uptime, vehicle utilization, dead mileage, and exception closure times?”

“How are penalties or earn-backs tied to missed OTP due to preventable downtime versus force-majeure events?”

“Do you provide a performance or bank guarantee that covers chronic under-availability or repeated fleet shortfalls?”