How to build an evidence-driven, crisis-ready EMS vendor playbook that actually calms operations

Facility heads live the problem every shift—driver shortages, late pickups, and weather or traffic disruptions. This framework turns vendor proof into a practical, on-ground playbook that reduces firefighting and keeps the operation in control. You’ll see concrete, SOP-level steps and escalation plans designed for peak shifts and off-hours, so the team can act quickly without guessing or overengineering.

Is your operation showing these patterns?

- Escalations stall with no single owner, dashboard confusion

- Driver no-shows spike during night shifts

- GPS/app outages aren’t clearly reflected in reports

- Vendor-runbooks look perfect on paper but fail in practice during disruptions

- Site-level SLAs diverge and leadership questions data credibility

- Audit packs require heavy manual work under audit pressure

Operational Framework & FAQ

evidence foundations and verification

Defines minimum proof, audit artifacts, and data integrity checks that ground vendor evaluation and ongoing governance; guards against cherry-picking and bad data.

For our employee transport program, what proof should we expect from a vendor—data samples, audit reports, KPI improvements, and references—and what’s the minimum we should ask for before we pitch it to HR and Finance?

B0468 Minimum evidence set required — In India corporate employee mobility services (EMS), what does “proof and references” practically include during vendor evaluation—anonymized trip data packs, third-party audit reports, before/after OTP deltas, and customer references by city and timeband—and what’s a reasonable minimum evidence set before I take this to my CHRO and CFO?

In India EMS vendor evaluation, “proof and references” typically means concrete, auditable artifacts.

A reasonable minimum evidence set combines real operations data, independent validation, and like-for-like customer references across your cities and timebands.

Most organizations expect an anonymized trip-data pack with basic KPIs. This usually includes on-time performance percentages, trip adherence rates, no-show rates, and incident logs over at least 3–6 recent months. The data is typically sliced by city, shift window, and service vertical to mirror actual operating conditions for employee mobility services. This allows transport and HR teams to verify reliability claims against real-world peak and night-shift behavior.

Third-party or internal audit reports provide separate assurance on safety and compliance. These reports commonly cover driver KYC and PSV credential controls, vehicle compliance checks, women-safety protocol adherence, and GPS or route-audit integrity. Security or EHS teams use these documents to confirm that escort rules, incident response SOPs, and audit trails exist beyond marketing slides.

Before–after impact metrics are used as proof of improvement capability. Examples include OTP deltas after vendor transition, cost-per-trip trends, customer satisfaction scores, and case studies on difficult scenarios such as monsoon routing or night-shift operations. These metrics show whether the vendor can move a program from firefighting to stable performance.

Customer references by city and timeband are critical for comfort. Teams usually ask for at least one reference in the same city, one reference for late-evening or night operations, and one reference with comparable scale. Transport heads and CHROs often speak directly with these peers to validate escalation response, command-center behavior, and driver management in real conditions.

- For CHRO sign-off, the minimum set usually includes: a 3–6 month KPI pack with OTP and safety incident data, a women-safety or night-shift case study, and at least one HR or security reference call.

- For CFO sign-off, the minimum set usually includes: reconciled cost and utilization trends, billing or process documentation, and at least one finance or procurement reference confirming billing accuracy and dispute handling.

For night-shift and women-safety, what concrete reports or logs should we ask for (SOS response, escort compliance, incidents, RCAs) so Security isn’t caught short after an incident?

B0470 Safety audit artifacts checklist — In India employee mobility services (EMS) with women-safety and night-shift obligations, what specific artifacts should an EHS/Security Lead request as “audit-ready proof” (incident logs, SOS response timelines, escort adherence, geofence breaches, RCA trail) to feel confident they won’t be exposed during a serious incident review?

In India EMS programs with women-safety and night-shift obligations, an EHS/Security Lead needs audit-ready artifacts that reconstruct the full trip lifecycle and prove that policy, law, and SOPs were followed in real time. These artifacts must be time-stamped, tamper-evident, and easily retrievable for any serious incident window.

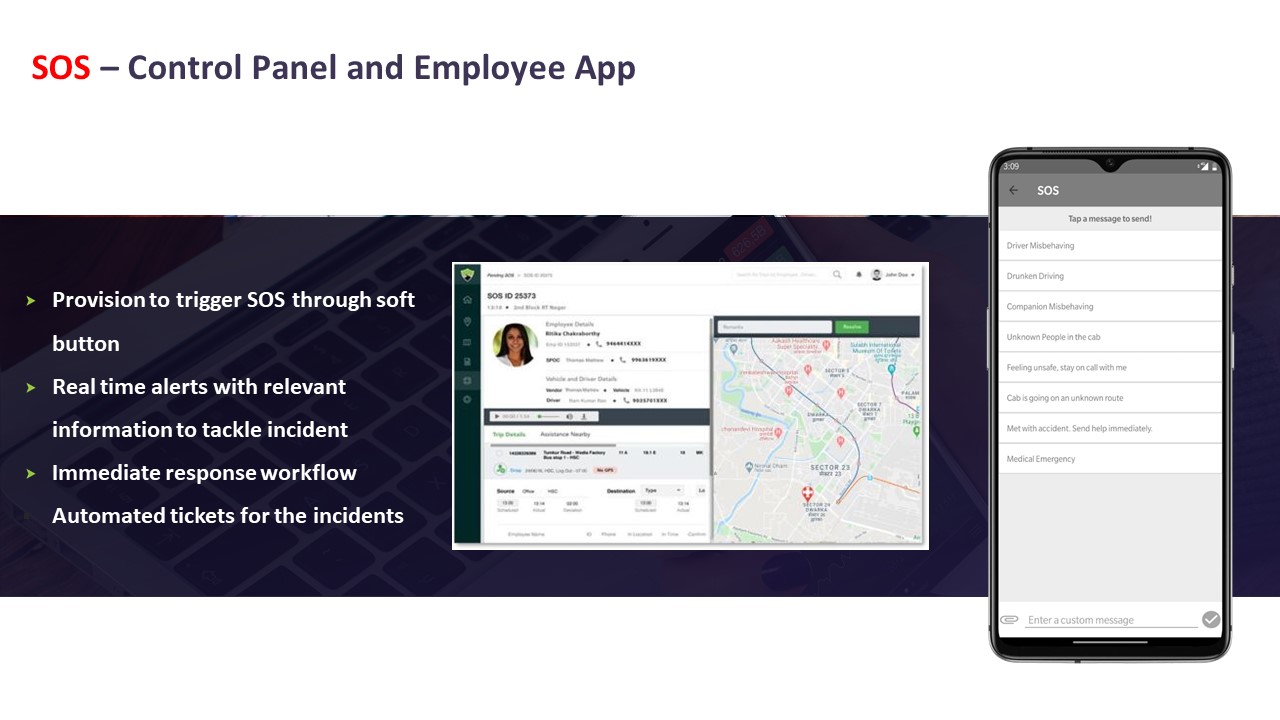

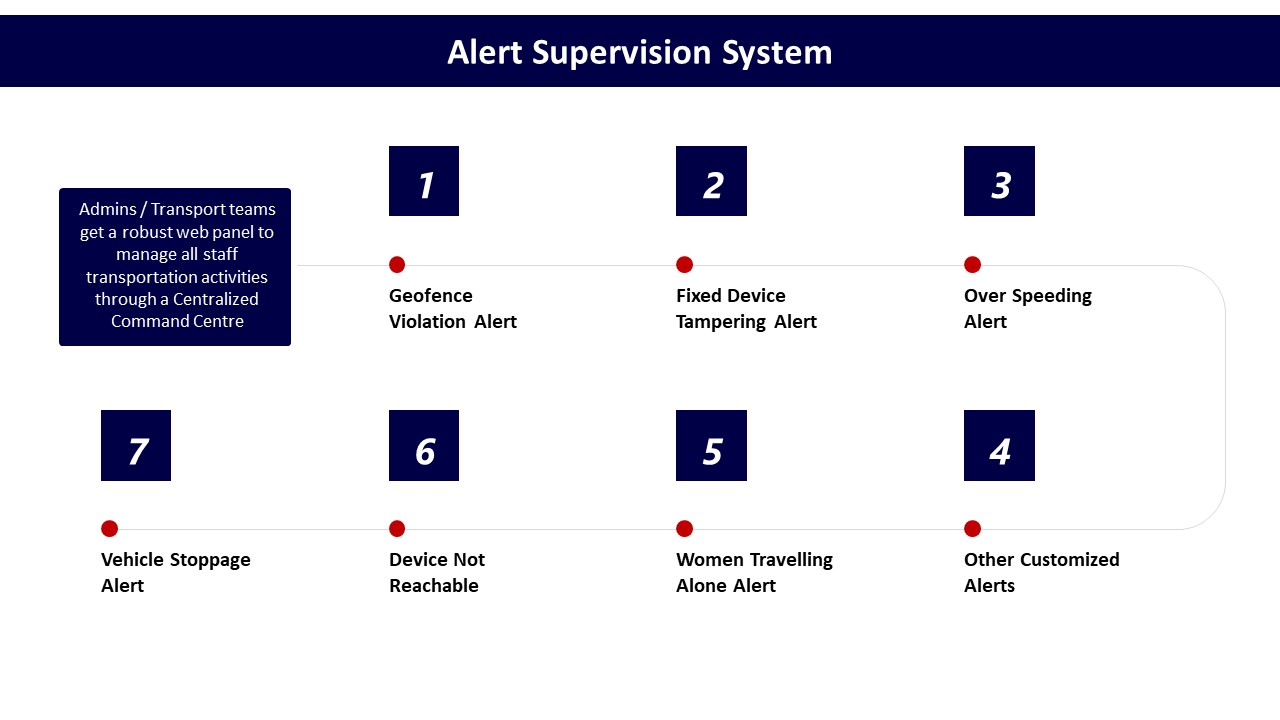

For incident management, an EHS/Security Lead should insist on a centralized incident log with unique IDs, time-stamped entries, and a complete escalation trail. The incident record should show the trigger source, the exact time of SOS activation from the employee app or driver device, acknowledgement time at the command center, intervention actions with precise timestamps, and closure notes that include whether the employee was safely handed over. This log should align with the SOS control panel evidence and call-center records that demonstrate 24/7 monitoring as described in the Alert Supervision System and SOS – Control Panel and Employee App materials.

For women-safety and night-shift compliance, the Lead should request trip manifests that prove female-first routing and escort rules, along with driver compliance records that include background checks, POSH training, and periodic refresher training. GPS trip logs must evidence route adherence, geofence configurations, and any geofence breach alerts generated by systems similar to the Transport Command Centre and Safety & Security dashboards. Each alert should map to a documented response action and, where relevant, a root-cause analysis that points to corrective and preventive measures.

For systemic defensibility, an EHS/Security Lead should also demand integrated safety and compliance dashboards showing on-time performance, incident rates, credential currency, and audit outcomes over time. These dashboards should be backed by raw trip, driver, and vehicle-level data that can be exported for independent verification, reinforcing the Safety and Compliances and Centralized Compliance Management approaches shown in the collateral.

If a vendor claims big OTP and safety improvements, how do we check it’s real and not because they changed definitions or excluded the hard routes/time slots?

B0471 Validate KPI delta credibility — In India corporate employee transport (EMS), how should a CHRO pressure-test a vendor’s claimed “before/after KPI deltas” (OTP/OTD, incident rate, seat-fill, closure SLA) to ensure the improvement isn’t just from changing definitions, excluding tough routes, or shifting timebands?

The CHRO should insist that any “before/after” KPI delta is calculated on a like-for-like basis using frozen definitions, identical route and timeband coverage, and auditable raw trip logs that can be re-run independently.

The first pressure test is KPI definition stability. The CHRO should demand written definitions for OTP/OTD, incident rate, seat-fill, and closure SLA that specify grace windows, what counts as a valid trip, and how cancellations and no-shows are treated. The CHRO should then require proof that these definitions were identical in the “before” and “after” periods, and that there was no silent change in grace minutes, exclusion rules, or thresholds during the reported improvement.

The second pressure test is scope continuity. The CHRO should verify that difficult routes, night shifts, women-first policies, and peak-load windows remain in-scope, and that the vendor has not quietly removed high-risk timebands, remote geographies, or escort-mandated routes from the denominator. Evidence should include route-level and shift-level OTP and incident rate, not only an averaged global number, so that critical corridors and night shifts can be checked separately.

The third pressure test is data lineage and auditability. The CHRO should ask for anonymized trip ledgers, GPS logs, and incident tickets for a sample period and confirm that every SLA metric can be reconstructed from those raw records. A common failure mode is when vendors present dashboards without consistent trip IDs, closure timestamps, or linkages between alerts and resolution actions.

The fourth pressure test is population comparability. The CHRO should confirm that seat-fill and OTP are being compared across similar attendance patterns, hybrid-work baselines, and fleet mixes, rather than before/after periods with radically different demand or EV penetration. The CHRO should also ensure that temporary buffers or standby vehicles used during pilot phases are disclosed so that structural vs tactical improvements can be separated.

The fifth pressure test is exclusion transparency. The CHRO should require a clear list of excluded trips and incidents, such as force majeure events or incomplete logs, and insist that exclusion criteria are symmetric across both baseline and improved periods. This prevents vendors from discarding politically sensitive incidents or “bad” days only in the after period while retaining them in the baseline.

- The CHRO can mandate a short joint audit with HR, Transport, and Security teams using live EMS data.

- The CHRO can ask to see KPIs broken down by route cluster, timeband, and gender mix rather than only as global averages.

- The CHRO can require that KPI formulas and raw data access are written into the contract as part of the SLA governance model.

When we review a vendor’s sample trip data, what red flags (missing fields, odd timestamps, too-good-to-be-true exception rates) should IT watch for?

B0473 Data pack red flags — In India corporate ground transportation (EMS/CRD), what are reasonable red flags in a vendor’s anonymized data pack (missing fields, inconsistent timestamps, suspiciously low exception rates, non-reconcilable trip IDs) that should make a CIO question data integrity and auditability?

In corporate ground transport, any pattern that weakens traceability, reconciliation, or replay of trips is a red flag for data integrity and auditability. A CIO should distrust anonymized data packs where trips cannot be tied to a consistent lifecycle, SLAs, or finance baselines, or where the “story” in the data looks cleaner than real-world EMS/CRD operations ever are.

Key warning signals usually cluster in four areas.

1. Identity, keys, and structural consistency

A serious red flag is non-reconcilable or recycled trip IDs. Each trip should have a unique, stable identifier across rider app, driver app, NOC tools, and billing exports. Missing primary keys, or IDs that change between files, prevent reliable Trip Lifecycle Management and weaken any future dispute resolution.

Datasets that omit basic trip lifecycle fields are also problematic. For EMS/CRD this includes missing booking timestamp, allocation timestamp, actual start/end times, and cancellation flags. If these fields are absent, the vendor cannot credibly support SLA claims on on-time performance, response times, or exception closure.

2. Time, location, and SLA plausibility

Inconsistent or impossible timestamps should trigger immediate concern. Examples include trips where end time is before start time, durations that are unrealistically short for known city pairs, or a high volume of rides with identical or rounded durations. These patterns suggest post-facto data massaging rather than streaming telematics feeding a mobility data lake and observability stack.

Red flags also include an absence of failed or partial trips in high-traffic or monsoon-affected cities, despite industry evidence that peak congestion, hybrid shift patterns, and weather routinely create delays and re-routing. Completely clean On-Time Performance across all timebands and regions is rarely credible in EMS.

3. Exceptions, incidents, and safety noise

Suspiciously low exception rates are a classic warning sign. In real EMS/CRD operations, geo-fence breaks, no-shows, last-minute roster changes, and SOS or incident flags do occur. A data pack that shows near-zero safety incidents, no route deviations, and perfect Trip Adherence Rate across months suggests that exceptions are either unlogged or filtered out, which undermines any “safety by design” posture and future EHS or DPDP-era investigations.

Similarly, if there is no trace of random route audits, driver fatigue indicators, or cancelled/aborted trips, the vendor likely lacks continuous assurance practices, despite industry movement away from episodic audits toward automated governance and audit trail integrity.

4. Commercial and cross-system reconciliation gaps

Data that cannot be reconciled to basic finance or HR reference points is a material red flag. For example, total trips and kilometers that do not align with representative Cost per Kilometer or Cost per Employee Trip benchmarks from billing summaries indicate weak integration with ERP or HRMS. If seat-fill or Trip Fill Ratio cannot be inferred at all, it is difficult to validate claims of route optimization or dead mileage reduction.

A CIO should also worry if raw data structures are opaque or proprietary with no clear schema, API-first integration pattern, or documentation for how SLA metrics are computed from events. Lack of clarity here tends to correlate with vendor lock‑in risks and fragile data governance later.

- Missing or unstable trip IDs undermine Trip Lifecycle Management and dispute handling.

- Impossibly “clean” timestamps and SLA metrics signal post‑hoc editing rather than streaming telematics.

- Near-zero exceptions or incidents in EMS/CRD contradict normal hybrid-work and urban-traffic realities.

- Inability to reconcile trips, km, and utilization with finance or HR baselines weakens auditability.

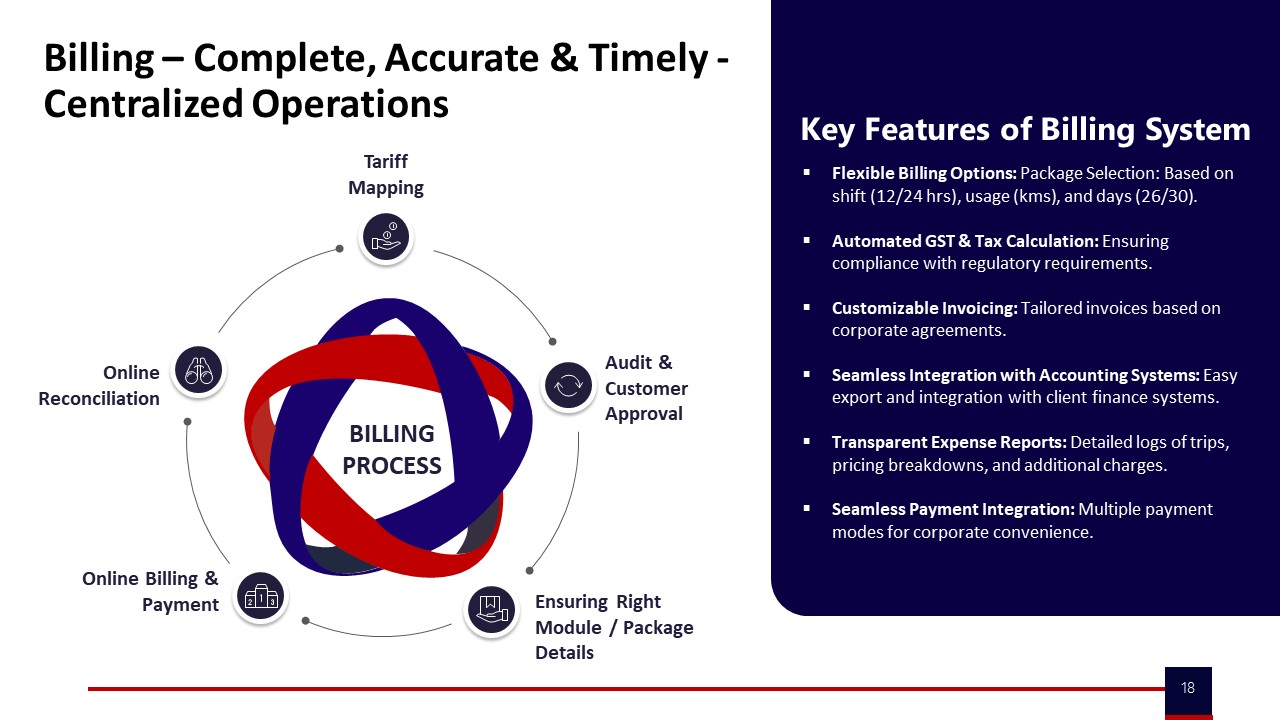

How do we ensure the SLA numbers in the dashboard actually match the invoice—penalties, incentives, dead mileage—so Finance doesn’t end up reconciling manually each month?

B0474 SLA-to-invoice traceability — In India employee mobility services (EMS), how can a Finance Controller verify that SLA metrics shown in the vendor’s dashboards can be tied back to invoice line items (penalties/bonuses, dead mileage, no-shows) without manual reconciliation every billing cycle?

In Indian employee mobility services, a Finance Controller can verify that SLA metrics tie cleanly to invoices by insisting on a single, governed data backbone where trip logs, SLA computation, and billing logic all come from the same auditable trip ledger rather than from separate, manually curated reports. The Finance Controller needs invoice line items to be machine-derivable from raw GPS and trip data, with transparent formulas linking SLA outcomes to penalties, bonuses, dead mileage, and no-shows.

A common failure mode is when vendors treat dashboards, MIS, and billing as separate products. This breaks traceability because SLA numbers cannot be reconciled to trip-level evidence. Finance leaders avoid this by requiring trip-level identifiers, timestamps, and route data to be stored in a mobility data lake with audit trail integrity, then mapped into billing models like per km, trip based, or pay-per-usage without re-keying. SLA penalties for OTP%, no-shows, or route adherence then become deterministic functions of these same records instead of judgment calls.

The Finance Controller should formalize this in contracts and RFPs by defining invoice readiness as an outcome of the platform’s trip lifecycle management. Clear rules for dead mileage calculation, no-show handling, and incentive ladders can be encoded upfront. This approach reduces manual reconciliation because exceptions, adjustments, and disputes are resolved in the platform’s governance layer before invoices are generated, rather than during audit or month-end firefighting.

Which third-party audits actually matter for employee transport—safety, compliance, security—and how do we verify the audit scope and how recent it is?

B0481 Meaningful third-party audits — In India employee mobility services (EMS), what kinds of third-party audits (safety/compliance, data security, process adherence) are meaningful versus “checkbox” audits, and how should a Risk or Internal Audit team validate the scope and recency?

In Indian employee mobility services, meaningful third‑party audits are those that test live operational reality, produce traceable evidence, and align with how EMS is actually delivered day and night. Checkbox audits stay at policy and paper level and avoid touching real trips, drivers, vehicles, apps, and data flows.

Meaningful safety and compliance audits validate current driver KYC/PSV, escort and women‑safety protocols, vehicle fitness, and route adherence with auditable trip logs. Checkbox audits only sample static documents without checking whether credentials are current, whether night‑shift escort rules are followed on actual routes, or whether geo‑fencing and SOS controls generate and close alerts through a command center or Transport Command Centre.

Robust data‑security audits map where commute data is stored and processed, test role‑based access and log retention, and align with India’s emerging data and privacy expectations. Superficial reviews only note that a mobility vendor claims app encryption or has generic ISO certificates, without tracing how trip, GPS, and employee identifiers move between EMS platforms, HRMS, and command‑center dashboards.

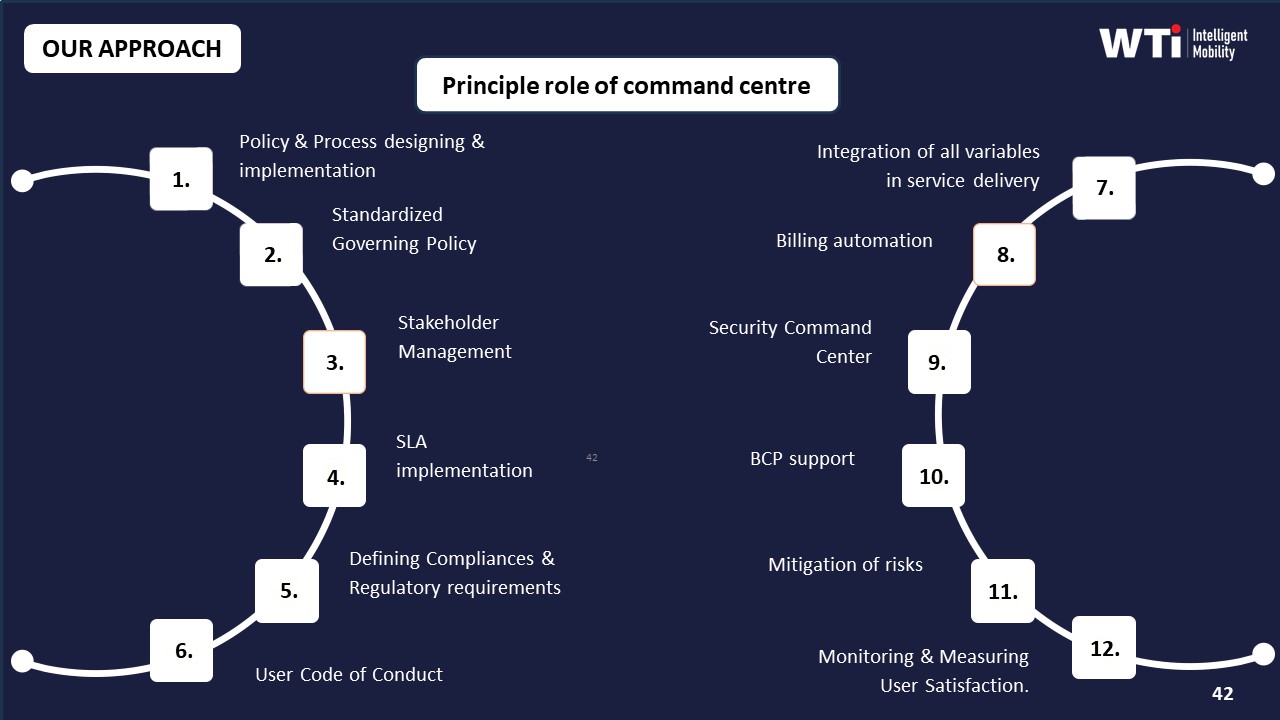

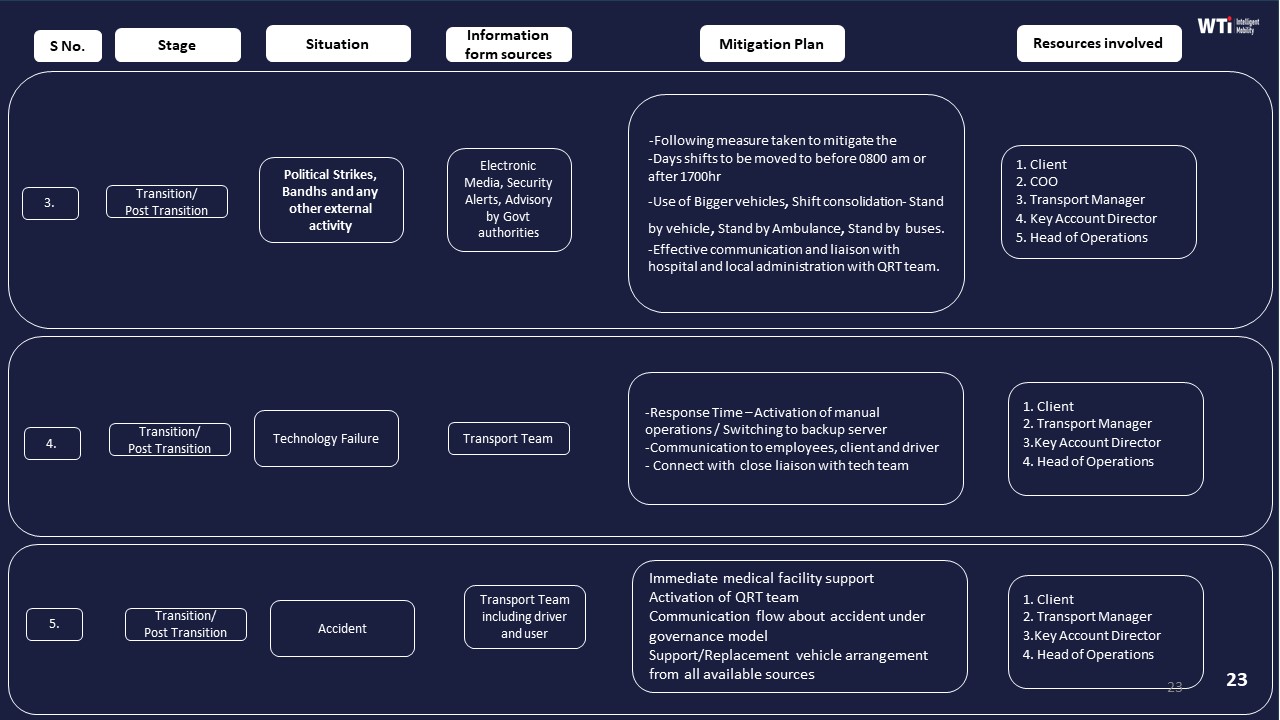

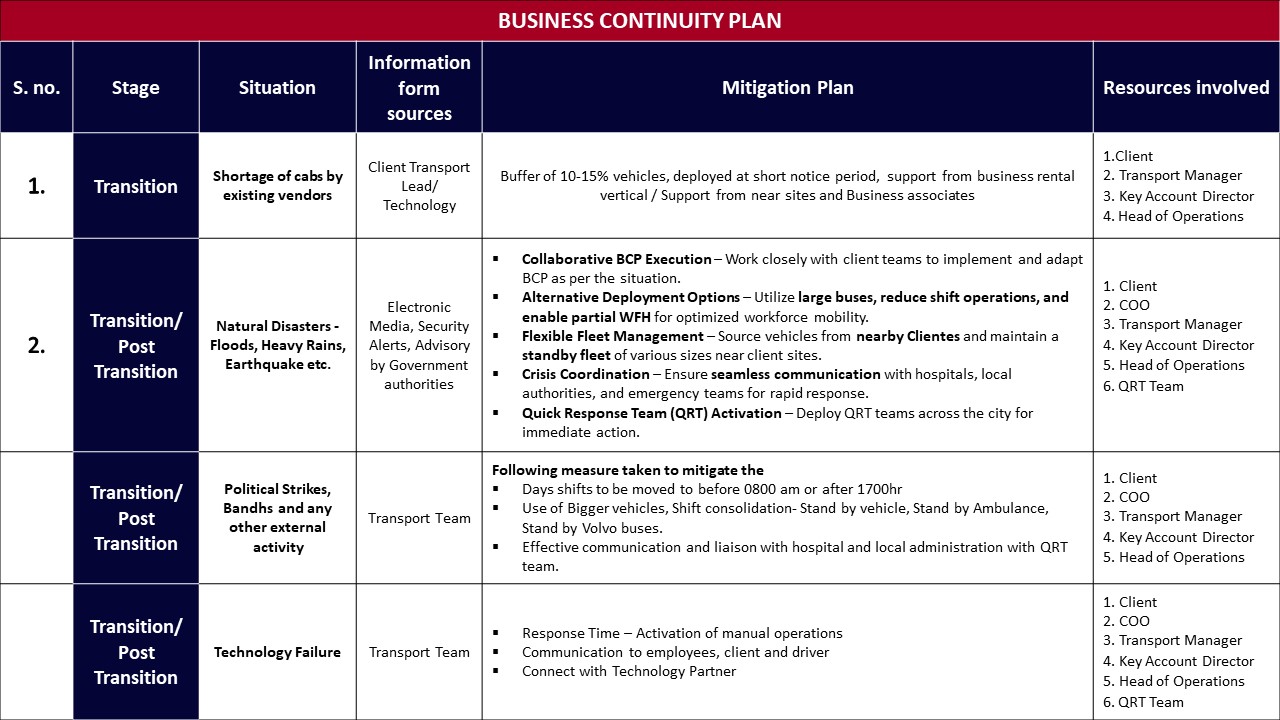

Process‑adherence audits that matter replay the full ETS Operation Cycle and Command Center operations. These audits follow bookings from employee app or desk, through rostering, routing, vendor allocation, live monitoring, incident handling, billing, and MIS, and then reconcile SLAs and exceptions with management reports and dashboards. Weak audits only verify that SOPs, Business Continuity Plans, and escalation matrices exist, without testing whether they are invoked during real disruptions such as cab shortages, monsoon traffic, political strikes, or technology failures.

Risk or Internal Audit teams should validate scope by insisting that audits explicitly cover end‑to‑end EMS flow, including driver and fleet compliance management, safety and women‑centric protocols, centralized compliance dashboards, and measurable performance indicators like OTP%, incident rates, and audit‑trail integrity. They should also validate recency by checking audit dates, sampling post‑audit trip logs and safety events, and confirming that non‑conformities led to documented corrective actions and updated SOPs rather than remaining as static findings.

How do we confirm the sample data pack is the real production schema (not cleaned up) so we don’t discover HRMS/attendance integration issues after go-live?

B0485 Representative sample data validation — In India multi-site employee mobility services (EMS), how can an IT lead validate that anonymized data packs shared for evaluation are still representative (same schema as production, no manual ‘cleaning’) and won’t mask integration pain later with HRMS and attendance systems?

In India multi-site employee mobility services, an IT lead can validate anonymized evaluation data packs by insisting on using the same pipelines, schemas, and APIs as production and then technically verifying that structure and behaviour match HRMS and attendance integration needs. The goal is to test the real data model and integration friction, not a manually curated demo extract.

First, the IT lead should require that the vendor’s anonymization is pipeline-based, not Excel-based. The IT lead should ask for documented anonymization rules applied in the mobility data lake or ETL pipeline, such as deterministic tokenization of employee IDs, masking of phone numbers, and hashing of personally identifiable information. The IT lead should then confirm that these rules are applied on top of the production trip ledger schema, and not on a separate “demo” schema.

Next, the IT lead should demand a full schema export and compare it against what will be used in production integrations. The IT lead can request database DDL or JSON schema definitions for core entities such as trips, rosters, vehicles, drivers, and employees. The IT lead should verify that field names, data types, allowed values, and primary key and foreign key relationships in the evaluation pack match the stated production schema. Any missing fields that are required for HRMS mapping, such as employee code, cost center, and shift window identifiers, must be explicitly called out and justified.

The IT lead should also enforce API-first testing instead of offline file drops. The IT lead can insist on using the same REST or webhook interfaces that will be connected to HRMS, ERP, and attendance systems later. The IT lead should run test calls against the vendor’s staging API with anonymized data and confirm that pagination, filtering, and timestamp semantics are consistent with production documentation. This approach exposes potential performance and latency issues and reveals any undocumented transformations applied between storage and API responses.

To avoid hidden manual “cleaning,” the IT lead should request an end-to-end sample covering noisy edge cases. The IT lead should ask the vendor for data that includes cancelled trips, no-shows, partial shifts, GPS gaps, and manual overrides, because these situations are common in real EMS operations. If the evaluation data shows only ideal trips with perfect GPS and complete manifests, the IT lead should treat that as a signal of manual curation and request a raw slice from an actual multi-site operation, with PII still masked.

Finally, the IT lead should run a small, time-boxed integration spike with the enterprise HRMS and attendance systems. The IT lead can map anonymized employee identifiers to synthetic HRMS records and then test the full reconciliation logic from trip events to attendance outcomes. Any mapping gaps, inconsistent shift windowing, or timestamp mismatches that appear in this spike will likely reappear in production, so resolving them early prevents integration pain later.

How can HR use exception and closure data to figure out if our daily escalations are mainly a vendor execution issue or something in our own roster/policy setup?

B0487 Diagnose vendor vs internal causes — In India employee mobility services (EMS), how can HR quantify whether recurring escalations are a ‘vendor execution problem’ versus an internal policy/roster problem, using evidence like exception latency, closure SLAs, and timeband-specific patterns from the vendor’s proof pack?

In India employee mobility services, HR can distinguish vendor execution failures from internal policy or roster issues by correlating each escalation with time-stamped operational evidence such as exception latency, closure SLA adherence, and timeband-specific patterns from the vendor’s proof pack. HR should treat every recurring complaint as a data point in a structured incident ledger that compares “what should have happened per SOP” versus “what actually happened per trip log and command-center evidence.”

HR can use exception latency as a primary discriminator. If GPS, driver app, or command center alerts show that delays or route deviations were detected early but not acted on within agreed SLAs, the issue is likely vendor execution. If there was no upstream exception at all because rosters were confirmed too late, pickup windows were unrealistic, or seat-fill policies forced impractical routing, the root cause sits with internal policies or roster design.

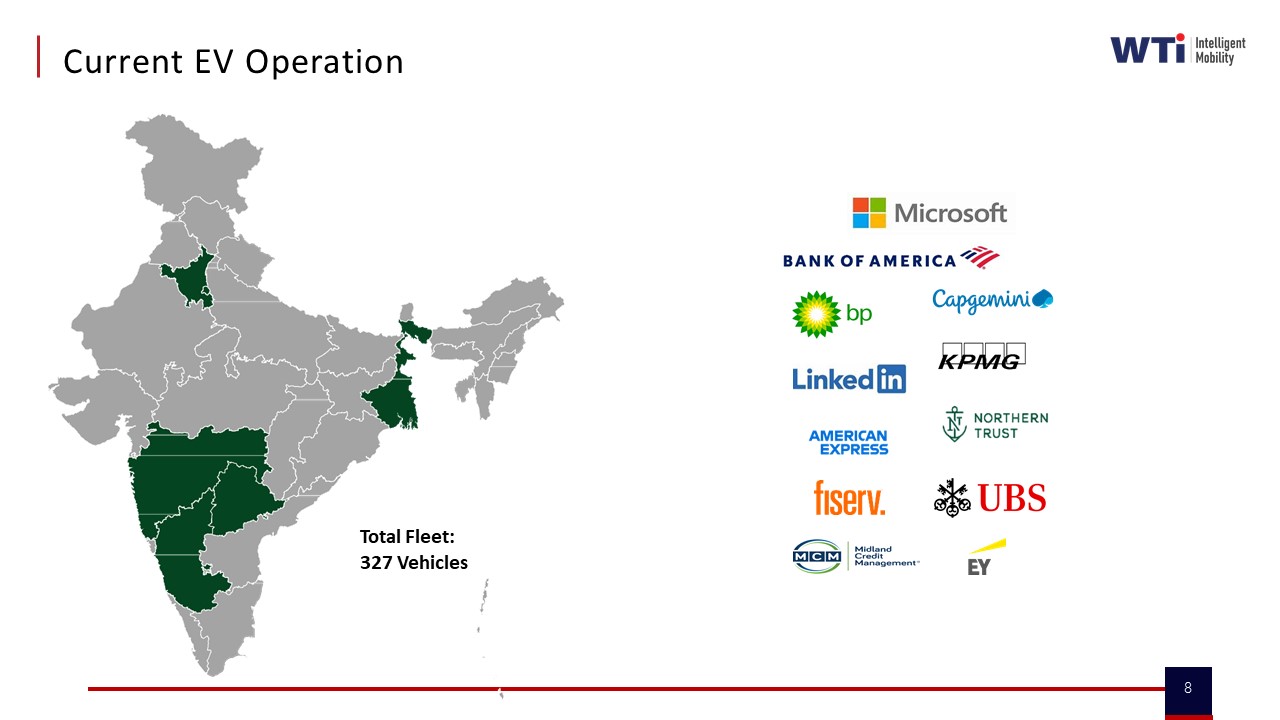

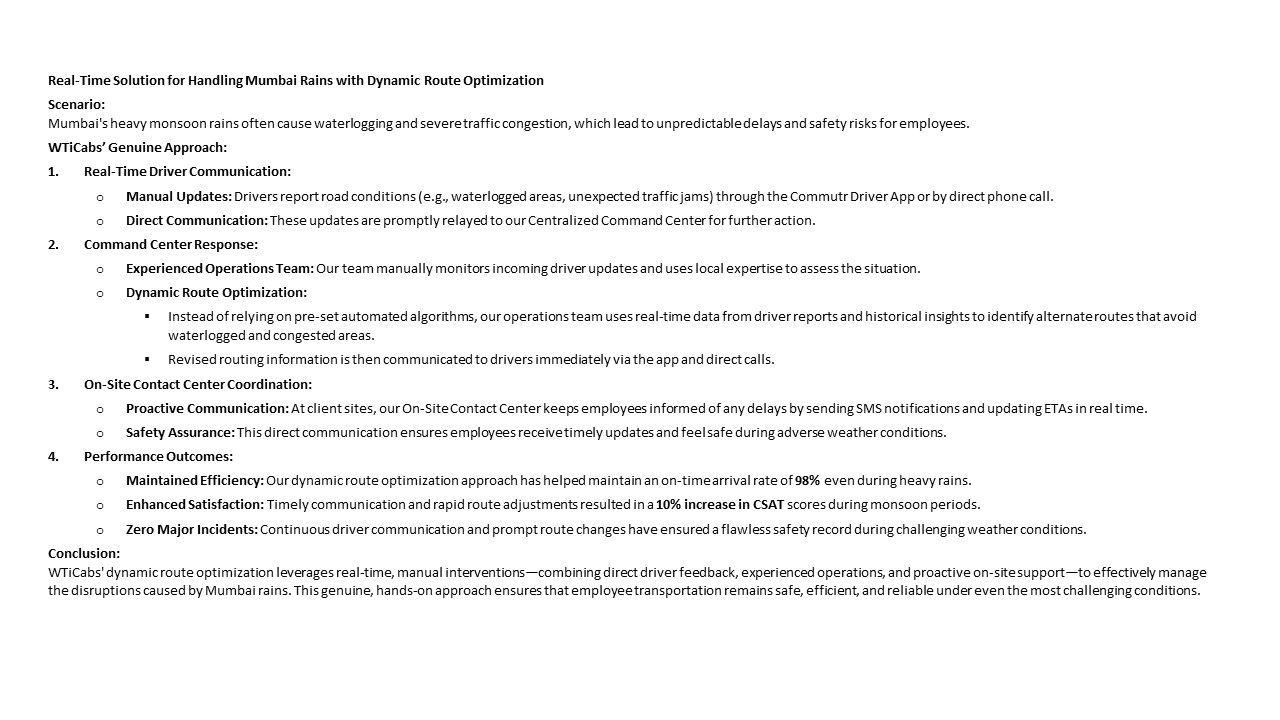

Timeband analysis helps reveal structural versus execution problems. If late pickups cluster in specific bands like heavy-traffic monsoon evenings or post-midnight windows, and vendor logs still show high fleet uptime and proactive rerouting (as in WTicabs’ monsoon case study with 98% on-time arrival), then escalation volume may indicate misaligned shift timing or buffer rules. If the same bands show frequent “no vehicle assigned,” last-minute cancellations, or repeated driver no-shows despite stable demand, vendor capacity planning or driver management is the likely gap.

HR should also compare closure SLAs and incident workflows. A consistent pattern where the command center opens tickets promptly, communicates ETAs, and closes incidents within agreed timelines points away from pure vendor negligence, especially if employees still escalate mainly about policy entitlements or rigid cutoff rules. Repeated breaches of closure SLAs, silent tickets, or inconsistent communication indicate vendor-side operational gaps regardless of policy quality.

Practically, HR can set up a recurring joint review with the transport head and vendor using a simple evidence-based split:

- “Supply-side” KPIs for vendor accountability such as OTP%, exception detection-to-action time, fleet uptime, driver compliance, and route adherence.

- “Demand and design-side” indicators for internal accountability such as late roster finalization, frequent last-minute additions, unrealistic reporting times, and chronic over- or under-subscription on certain routes or timebands.

When proof packs include CO₂ dashboards, EV utilization ratios, or business continuity logs, HR can further check whether sustainability or BCP decisions are constraining operations or whether the vendor is using these as excuses despite clear evidence of adequate infrastructure and buffers.

By insisting that every escalation is matched to auditable trip data, command center logs, and timeband trends, HR can move conversations from blame to design. The result is a defensible split of responsibility where vendor penalties focus on SLA and execution breaches, and internal improvements target roster logic, shift policies, and entitlement rules.

How should we document reference checks for employee transport so it’s audit-defensible later—who we spoke to, what we asked, and what they shared?

B0491 Audit-defensible reference documentation — In India employee mobility services (EMS), what’s the best way for Procurement to capture reference feedback so it’s audit-defensible later (who was contacted, what questions were asked, what evidence was provided) rather than informal notes that can be challenged?

Procurement teams in India EMS make reference checks audit-defensible when they treat them as a governed process with standard templates, logged channels, and preserved artefacts instead of informal calls and notes.

The core is a simple SOP. Procurement defines when references are required, which reference personas must be contacted for EMS (for example, HR, Transport Head, Security, or Finance at the client), and which minimum topics must be covered such as on-time performance, safety incidents, billing integrity, escalation responsiveness, and command-center behavior. This SOP is then tied to the mobility vendor governance framework so that no award can be issued without completed, filed reference packs.

Most organizations improve defensibility when they standardize a reference questionnaire. Each question is written down in a template and mapped to specific EMS concerns like women-safety protocols, night-shift reliability, BCP performance during disruptions, and billing or SLA dispute handling. The same question set is then used for all vendors. This reduces bias risk and makes later comparisons easier to defend in an audit.

Procurement usually needs a clear evidence trail. A practical pattern is to use only traceable channels such as official email IDs or recorded virtual meetings with consent. Teams log each interaction in a central repository, capturing reference contact identity, organization, role, and date along with the filled questionnaire and any supporting documents such as sample MIS reports, safety dashboards, or BCP playbooks that the reference client is willing to share.

To keep the process resilient, Procurement can define a short checklist of minimum reference artefacts that must be retained for each vendor. This often includes the completed questionnaire, a summary of call minutes, a simple scoring matrix across reliability, safety, cost discipline, and responsiveness, plus any documentary samples mentioned by the reference. This package can then be attached to the RFP evaluation file so that, if challenged later, the organization can demonstrate that reference feedback was collected systematically and used as one input to the final decision.

After rollout, what ongoing proof should we ask for—monthly scorecards by city/shift, RCAs, audit logs—so service doesn’t slip once the deal is done?

B0492 Prevent post-contract performance drift — In India corporate employee transport (EMS), after go-live, what proof should an operations head continuously demand from the vendor (monthly city/timeband scorecards, incident RCAs, audit trails) to prevent performance drift once the contract is signed?

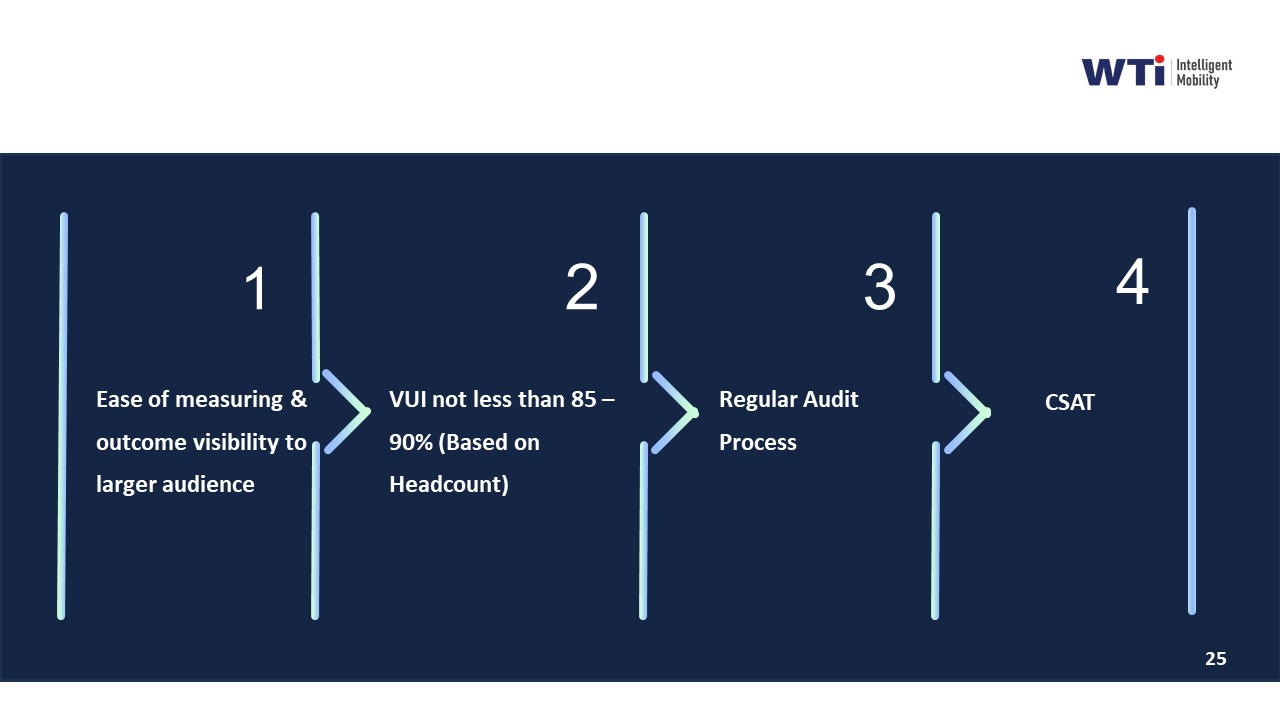

In India EMS after go‑live, an operations head should insist on a fixed “evidence pack” every month that proves reliability, safety, compliance, and cost control in a way that can withstand internal and external audits. The goal is to convert a signed contract into a monitored, SLA-governed operation with no silent performance drift.

A core proof set is city– and timeband–wise performance scorecards. These should show on‑time performance, trip adherence, vehicle utilization, dead mileage, no‑show rates, and exception closure times for each location and shift window. Operations leaders can then catch pattern drift in specific cities, night bands, or vendor pools before it becomes a crisis.

Safety and compliance need their own continuous evidence. This includes driver KYC and PSV validity status, vehicle fitness and permit logs, women‑safety escort compliance, geo‑fence and over‑speeding alerts, and random route audit summaries. Incident logs with full root‑cause analyses and timestamped closure actions are essential for every safety event, even minor ones.

Data integrity is a separate proof stream. Vendors should provide GPS trip ledgers, roster vs actual run variance reports, and reconciled SLA dashboards that tie directly to billing. This reduces disputes with Finance and keeps Procurement and HR confident that service quality and cost per employee trip are not quietly degrading.

A practical monthly evidence pack usually includes:

- City/shift scorecards with OTP%, Trip Adherence Rate, utilization, and exception trends.

- Safety and compliance dashboards plus sample audit trails and incident RCAs.

- Billing‑linked MIS that reconciles trips, kilometers, and SLAs to invoices.

For our employee commute program, what hard proof should a vendor share (data packs, KPI improvement before/after, third-party audits) so HR can confidently show safety and reliability actually improved—especially for night shifts?

B0497 Proof pack for HR safety — In India corporate employee mobility services (EMS), what specific proof artifacts should a vendor provide—anonymized trip data packs, before/after on-time performance deltas, and third-party audit reports—so a CHRO can defend “women’s night-shift safety and reliability improved” without relying on vendor promises?

In India EMS programs, a CHRO can credibly defend “women’s night‑shift safety and reliability improved” only when vendors provide auditable artifacts that tie night‑shift trips to safety controls, outcomes, and closure quality. These artifacts must be time‑bound, anonymized, and reconstructable for any incident or board query.

The strongest base is a structured trip and incident data pack for women’s night shifts. This should include anonymized trip‑level logs with timestamps, origins/destinations, escort flags, SOS activations, GPS traces, and OTP outcomes segmented by gender and time band. Vendors should align this pack with internal HRMS data only through IDs or hashed keys so that CHROs can show incident rates, OTP%, and no‑show patterns for women in defined night windows.

CHROs also need clear before/after reliability and safety deltas specifically for women’s night shifts. Vendors should provide pre‑implementation and post‑implementation OTP% for night bands, safety incident counts per 10,000 trips, escort compliance rates, and women‑specific complaint volumes and closure SLAs. This lets a CHRO say that OTP improved and reported safety deviations fell after SOP and technology changes, supported by six‑month trend charts.

Independent assurance is essential for credibility beyond vendor claims. Third‑party safety and compliance audit reports should cover driver background‑check processes, route and escort compliance sampling, GPS tamper checks, and SOS response drills. These can be complemented by business continuity and risk‑mitigation playbooks that show how political strikes, technology failures, and cab shortages are handled without exposing women to added risk.

Finally, CHROs benefit from command‑center and alert‑system evidence. Dashboards from centralized command centers, alert supervision systems, and women‑centric safety protocols should show real‑time monitoring, escalation matrices, and closure timestamps for night‑shift alerts. Case studies that document measurable improvements in women’s late‑night safety and satisfaction, combined with internal commute‑NPS surveys for women employees, complete an evidence set that is defensible to leadership, auditors, and ESG stakeholders.

How do we check that the vendor’s KPI improvements aren’t cherry-picked, and what should we see in the anonymized data to trust the numbers?

B0501 Auditability of KPI deltas — In India corporate employee mobility services (EMS), how can Internal Audit validate that a vendor’s “before/after KPI deltas” for on-time pickup and incident reduction are not cherry-picked, and what minimum sampling or traceability should be visible in the supporting anonymized data pack?

Internal Audit can validate a mobility vendor’s “before/after” KPI deltas only when each trip and incident is traceable back to an auditable trip log, with a clearly defined universe, stable definitions, and sampling that covers whole timebands and high‑risk cohorts, not hand‑selected routes. Internal Audit should insist that the anonymized data pack allows reconstruction of OTP and incident rates from raw trip rows, with enough metadata to test completeness, consistency of definitions, and exclusion rules.

Internal Audit should first anchor on definitions and universes for EMS KPIs. On‑time pickup must have a fixed time window and rule for early/late arrivals, and incident reduction must be tied to a stable incident taxonomy over the comparison periods. The “before” and “after” datasets must cover the same sites, shift windows, and employee categories, or clearly disclose scope changes.

The anonymized data pack should expose row‑level trip data with unique trip IDs, date–time stamps, route or site tags, OTP flag based on a documented rule, incident flags, and a field that shows whether a trip was included or excluded in KPI calculations with a coded reason. Internal Audit can then recompute OTP% and incident rates, test that exclusions are rule‑based rather than outcome‑based, and look for breaks in continuity.

Sampling should prioritize risk rather than convenience. Internal Audit should draw full‑period samples for at least one full month pre‑change and one full month post‑change, and within those periods test 100% of trips for one or two critical shifts such as night and early‑morning windows. Additional stratified samples should cover different locations, vendors, and days of week, with particular focus on night‑shift women‑safety cohorts where duty of care is highest.

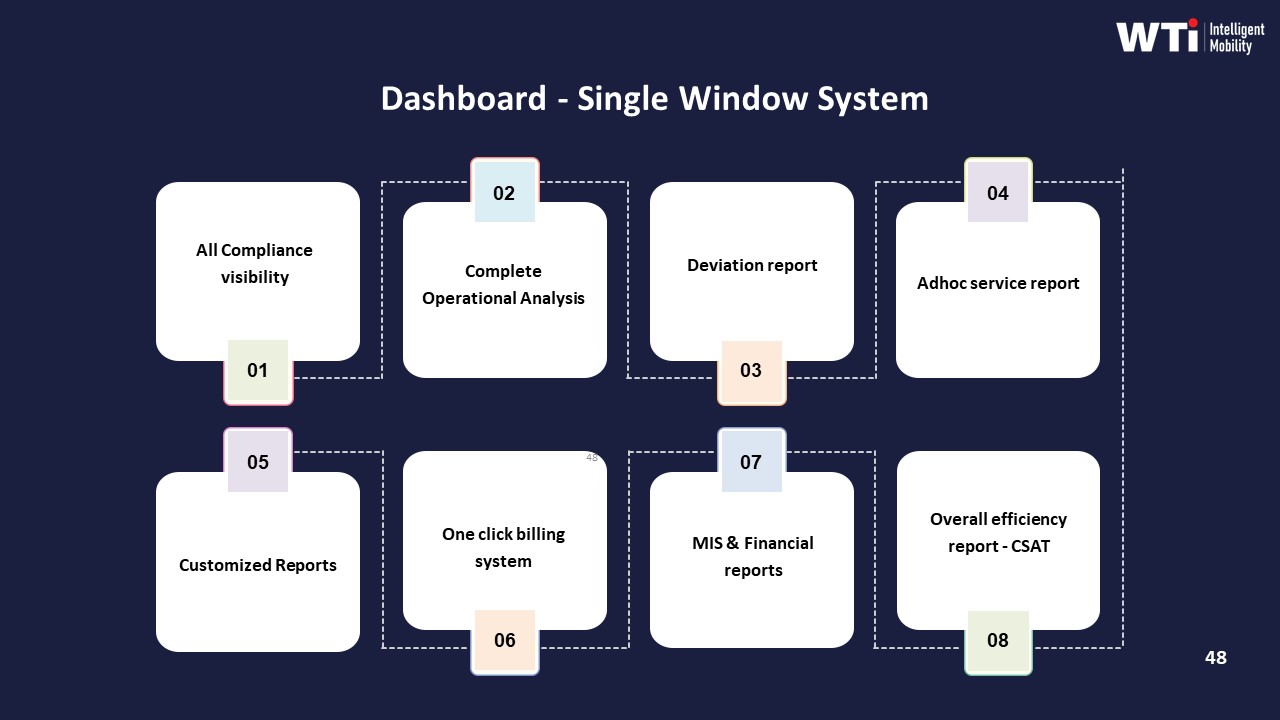

Traceability expectations should include the ability to tie sampled trips back to immutable trip logs, GPS or telematics traces, and any associated SOS or safety events as described in the command center and alert supervision collateral. Audit should see that OTP and incident metrics reconcile to what is shown on mobility dashboards and in management reports such as the indicative management report and single‑window dashboards, and that no alternative “shadow” dataset was used to generate marketing figures.

Red flags for cherry‑picking include missing high‑risk timebands, unexplained drops in trip counts between raw and reported numbers, period boundaries chosen to avoid known disruption events, and KPI improvements confined to narrow, low‑risk cohorts rather than overall EMS operations. A defensible vendor will be able to show consistent KPI computation across EMS, CRD, and project shuttle services, and will align its evidence pack with the same SLA and observability structures that support billing, safety compliance, and business continuity documentation.

If an auditor asks tomorrow, what should the vendor be able to produce instantly—trip logs, escort compliance proof, incident RCA—and how do we test that before signing?

B0505 One-click audit pack readiness — In India corporate employee mobility services (EMS), what does a “panic button” compliance pack look like for auditors—such as immutable trip logs, escort compliance evidence, and incident RCA artifacts—and how should a buyer test that the vendor can generate it quickly under audit pressure?

A panic button compliance pack in Indian employee mobility services is a complete, audit-ready evidence bundle for any SOS-triggered trip. It must reconstruct who travelled, when and where the panic was raised, what the system did in real time, and how the incident was closed and learned from. Auditors look for traceable trip logs, escort compliance, and structured RCA artefacts that stand up to legal and internal scrutiny.

A robust pack usually includes the following categories of evidence, each tied to a unique trip/incident ID.

-

Trip and routing evidence

• Immutable trip ledger with timestamps. There should be a system-generated trip record showing booking creation, roster allocation, vehicle assignment, start/stop times, route, and status changes.

• GPS trace and geo-fence logs. The vendor should provide a time‑stamped route trace, with any deviations and geo-fence violation alerts clearly marked.

• OTP/boarding proof. Logs of employee check-in (OTP, QR, app check-in) to prove who was in the vehicle at each point.

• Command center view. Screenshots or exports from the command-center dashboard showing the trip status before, during, and after the panic event. -

Panic/SOS activation trail

• SOS trigger details. Time, location, trigger channel (employee app SOS, IVMS, hardware button), and the user/vehicle ID that raised it.

• Alert fan-out. Evidence that the alert reached the transport command center and any escalation contacts (e.g., security, HR, vendor supervisor) with exact timestamps.

• Response SLAs. System logs showing when the call-back was initiated, when the driver was contacted, when security or local authorities were informed, and when the incident was marked “under control.”

• In-ride safeguards. If available, IVMS or dashcam event markers, over-speeding or harsh-brake alerts, and any accompanying notes by the command center. -

Escort and women-safety compliance

• Policy linkage. The relevant women‑safety or night‑shift policy showing when escort is mandatory and the defined routing/stop rules.

• Escort assignment proof. Roster or manifest showing escort name, ID, and duty times for the trip.

• Presence evidence. Check‑in/check‑out records for the escort (e.g., app attendance, RFID, manual duty slip scanned into the system).

• Route approval records. Pre-approved route details and any route change approvals stored in the system.

• Special protocols. Evidence of women‑centric safety controls such as “last‑drop female” rules, call masking, and safe‑reach‑home confirmation logs where applicable. -

Driver, vehicle, and compliance state at time of incident

• Driver profile snapshot. Current KYC/PSV validity, background verification status, and completion of POSH/safety training modules.

• Duty and fatigue metrics. Driver duty hours for that day/week to demonstrate adherence to rest‑period norms.

• Vehicle compliance status. Fitness, permit, insurance, and other statutory documents marked “valid” as on the incident date, ideally from a centralized compliance dashboard.

• Pre‑trip checks. Evidence from any digital or logged pre-trip safety inspection for that specific duty (e.g., “Safety Inspection Checklist for Vehicle”). -

Incident handling and RCA artefacts

• Incident ticket history. A ticket ID with full timeline of updates, attached notes, and role-based actions (who did what, when).

• Communication records. Call logs, SMS/app notifications, and email alerts related to the incident, redacted only for privacy but preserving metadata.

• RCA document. A structured root cause analysis describing the trigger, contributing factors, immediate containment, and preventive actions.

• Corrective and preventive actions (CAPA). Evidence that specific measures were implemented, such as driver re‑training, route changes, geo-fence tuning, or policy updates.

• Closure confirmation. Records showing employee acknowledgement (where appropriate), HR/Security sign‑off, and final closure status with date. -

Data integrity and chain-of-custody

• Audit trail integrity. System logs proving that core trip and incident records cannot be edited without trace, and that any changes are versioned with user IDs and timestamps.

• Access logs. Evidence of who accessed the incident data and when, to satisfy internal controls and data‑protection expectations.

• Retention policy. Documentation of how long panic/incident data is stored and how it aligns with corporate policy and emerging DPDP requirements.

To test whether a vendor can actually deliver this under audit pressure, buyers should move beyond presentations and run practical drills.

Key buyer tests and checks

• Live incident drill. Ask the vendor to pull the complete evidence pack for a real, recent SOS event (with identifiers anonymized). Set a strict time limit, such as 2–4 hours, and observe how many teams they need to involve.

• Simulated audit request. During evaluation, provide a random past date and trip ID and request: trip logs, GPS trace, panic logs, driver/vehicle compliance snapshot, and the RCA document. Measure completeness, response time, and consistency of timestamps across systems.

• Walk-through at the command center. Visit the centralized command center or NOC and ask operators to show, on their live system, how an SOS alert appears, how they acknowledge it, and where the resulting incident record is stored.

• Evidence export demo. Ask them to export a “panic button compliance pack” as a single bundle (e.g., zipped PDF set or structured report) while you watch, rather than stitching it manually in slides.

• Cross-check against policies. Share your own night-shift and women-safety policies. Ask the vendor to map, in writing, exactly which logs and artefacts they will provide to prove compliance for each clause.

• Data-integrity probing. Request to see audit logs and version histories for one sample incident to confirm that edits and deletions are traceable.

• Multi-stakeholder review. Have HR, Security/EHS, IT, and Internal Audit jointly review the sample pack and confirm whether it answers their standard questions about “what happened, were we compliant, and what changed after.”

Common failure signals during testing

• Reliance on manual collation using emails, WhatsApp, and Excel rather than a unified system of record.

• Gaps between trip logs and GPS data, such as inconsistent timestamps or missing segments.

• Inability to show escort presence beyond a static roster.

• No formal RCA template or CAPA tracking for closed incidents.

• Delayed response (e.g., taking days instead of hours) when asked for a complete evidence bundle.

In practice, a panic button compliance pack is credible when it is system-generated, time‑aligned across trip, device, and command-center logs, and directly tied to policies on escort and safety. Buyers should validate this by forcing the vendor to reproduce a full incident story on demand, under time pressure, before awarding any contract.

How do we write the contract so the vendor’s KPI claims must be backed by verifiable evidence and not just slides—especially around OTP, incident rates, and closure SLAs?

B0506 Contracting for claims substantiation — In India corporate ground transportation contracting for employee mobility services (EMS), how should Legal and Procurement structure reference verification and claims substantiation so a vendor’s promised KPI outcomes (OTP, closure SLA, incident rates) are tied to verifiable evidence rather than marketing statements?

In India EMS contracting, Legal and Procurement should convert vendor KPI claims into auditable obligations by hard-wiring definitions, data sources, and evidence packs into the contract and RFP, rather than accepting narrative promises or marketing dashboards.

Most organizations treat KPIs like OTP, incident rates, or closure SLAs as numeric targets but do not define how trips are counted, which system is the “source of truth,” or what evidence must be produced during disputes. This allows vendors to showcase selective data or unverifiable reports. Reference verification fails when buyer and vendor use different baselines, when telematics logs are not preserved, or when HRMS and transport data do not reconcile.

Legal and Procurement can reduce this risk by locking three elements into EMS contracts. First, they should define KPI semantics in operational language. For example, OTP should be defined per trip against rostered shift time, based only on trips with valid manifests, and measured across all locations, not pilot clusters. Second, they should mandate system-of-record and auditability. The contract should specify that GPS or NOC trip logs, panic/SOS records, and closure timestamps will be retained as an immutable audit trail for a defined period under Indian audit norms, and that HRMS or access-control data can be used to cross-check attendance-linked metrics.

Third, they should make claims substantiation a precondition to award and renewal. Reference checks should require concrete proof such as prior client OTP%, incident rates, seat-fill, and CO₂ reductions that align with the same KPI definitions, not just testimonials. Vendors should be required to share sample anonymized trip ledgers, command-center escalation logs, and SLA compliance reports that reflect central command-center operations, safety and compliance controls, and business continuity playbooks.

Effective structures usually include a short set of enforceable mechanisms. These mechanisms include RFP annexures that define KPI formulas and data fields, contract clauses that require full data access and export in case of audit or exit, and quarterly joint reviews where HR, Finance, and Transport teams reconcile vendor-reported KPIs with internal HRMS and finance data. They also include penalty or earnback ladders linked to KPIs that can only be triggered when supported by trip-ledger evidence, incident reports, and closure SLAs. These approaches make EMS performance review a data-governed process instead of a debate about marketing claims.

How do we validate ‘zero-incident’ claims in employee transport—what incident definitions, near-miss reporting, and RCA proof should we ask for in data packs and reference calls?

B0514 Validating zero-incident claims — In India corporate employee mobility services (EMS), how can an EHS/Security lead verify that “zero-incident” claims are meaningful—what incident taxonomy, near-miss reporting, and RCA evidence should appear in anonymized data packs and reference customer discussions?

In corporate employee mobility services in India, “zero-incident” claims are only meaningful when they are backed by a clear incident taxonomy, consistent near-miss capture, and traceable RCA evidence across time and routes. An EHS/Security lead should treat the vendor’s anonymized data packs and reference discussions as an audit artefact, not a marketing narrative.

First, the incident taxonomy must be explicit and consistently applied. It should separate at minimum: security incidents during trips, safety incidents or accidents, women-safety specific events, compliance breaches, and technology failures that impact safety (e.g., GPS outage during night-shift EMS). Each category should have sub‑severities defined and mapped to closure SLAs and escalation matrices. A common failure mode is a vendor reporting “zero incidents” only because they classify everything below a high severity threshold as “service deviation” rather than “safety incident”.

Second, meaningful performance evidence requires near-miss reporting as a separate, high-volume layer. An EHS/Security lead should expect anonymized counts and trends for near‑miss events such as geofence violations, overspeeding alerts, fixed device tampering, escort-rule drops, SOS activations without injury, route deviations, and repeated night‑shift routing through red‑flag zones. A vendor that shows flat “zero incidents” and near-zero “near‑miss” numbers usually has weak telemetry or poor reporting culture rather than an exceptionally safe operation.

Third, RCA evidence needs to demonstrate a complete chain of custody from detection to closure. For a sample period, an EHS/Security lead should request redacted RCAs that show the original alert or incident log, time‑stamped data from GPS/IVMS and apps, escalation path through the command center, corrective actions taken (driver retraining, route change, escort policy enforcement), and how these fed back into SOPs. The strongest vendors also align RCA outputs to their continuous assurance loop, business continuity plans, and HSSE culture reinforcement programs.

In the RFP itself, what’s the minimum proof we should demand upfront—documents and sample data—so we don’t evaluate vendors who can’t be audit-ready later?

B0518 Minimum evidence threshold in RFP — In India corporate employee mobility services (EMS) RFPs, what is a practical minimum “evidence threshold” (documents and sample datasets) to require at bid stage so Procurement doesn’t waste cycles on vendors who can’t produce audit-ready proof later?

A practical minimum evidence threshold in Indian EMS RFPs is a compact “proof pack” that demonstrates real operations, audit readiness, and data discipline before shortlisting. Procurement should ask for a standard set of documents plus at least one anonymized data extract that can be inspected like an audit sample.

At bid stage, most organizations can filter vendors effectively using four evidence buckets. The first bucket is compliance and safety governance. Typical asks include sample driver KYC and PSV documentation, a snapshot of centralized compliance dashboards, and written SOPs for women safety, night-shift escort rules, incident response, and Business Continuity Planning for cab shortages, strikes, tech failures, and disasters. Vendors who cannot show current, structured compliance artifacts usually fail later during audits.

The second bucket is command-center and observability proof. Procurement can require screenshots or redacted reports from a live 24x7 command center, including alert supervision (geofence violation, device tampering, overspeeding), escalation matrices, and sample shift-level OTP and incident reports. This connects directly to the Facility / Transport Head’s need for early alerts instead of firefighting.

The third bucket is sample operational data and billing traceability. A minimal dataset should include a one-week anonymized trip ledger with trip IDs, route details, timestamps, GPS-derived OTP%, no-show flags, and closure times, plus a linked sample invoice and MIS summary for the same period. This lets Finance and Procurement check whether SLAs, trips, and billing line items reconcile without manual patchwork.

The fourth bucket is ESG and EV-readiness evidence where relevant. For EMS programs that carry sustainability targets, vendors should provide a sample emissions dashboard, methodology for commute CO₂ calculations, and an example of EV utilization reporting. This supports ESG leads who must defend emission numbers with traceable data.

A simple way to operationalize this threshold is to make submission of these artefacts mandatory for technical evaluation. Procurement can specify that bids lacking: 1) formal safety and compliance SOPs, 2) live command-center reporting samples, 3) an auditable trip-to-invoice dataset, and 4) where applicable, an emissions sample, will not proceed to commercial opening. This reduces wasted cycles on vendors who look impressive in presentations but have no audit-ready backbone behind their claims.

How do we check that the vendor’s third-party audit is actually about their EMS operations (NOC, driver KYC, trip logs) and not just a generic certificate?

B0519 Relevance check for third-party audits — In India corporate employee mobility services (EMS), how should a buyer validate that a third-party audit report is relevant to the actual operating model—NOC processes, driver KYC cadence, and trip log integrity—instead of being a generic certification that doesn’t reduce real risk?

In India EMS, a buyer should only treat a third-party audit report as meaningful if it maps directly to the live operating model for NOC processes, driver KYC cadence, and trip log integrity, rather than just naming high-level certifications. The audit must test how daily shift operations, command-center workflows, and compliance checks actually run in production across cities and timebands.

A practical first filter is scope matching. The report should explicitly cover employee mobility services, not just generic fleet or ISO-style system audits. It should describe sampled routes, shifts, and locations, and reference elements like centralized or site-based NOC monitoring, escort rules for night shifts, and SLA governance for OTP and safety incidents.

The next check is operational traceability. A useful audit describes how driver KYC and PSV verification are sampled over time, how often re-checks are done, and how non-compliance is handled. It should test driver onboarding processes such as background verification, license validation, and periodic revalidation, rather than just listing there is a “policy.” Evidence such as sampling from AVD checks, driver compliance dashboards, and Driver Fatigue Index controls is a strong signal of depth.

For NOC and command-center processes, the report should document how real-time monitoring is performed, how alerts are triaged, and what escalation matrices exist. It should reference actual NOC tools like geo-fencing, SOS handling, and command center operations, and show sample incident tickets with timestamps and closure SLAs. If the report cannot trace a sample incident from detection to closure, it is likely too generic to reduce real risk.

Trip log integrity must be verifiable. A robust audit traces trips from booking and routing to trip completion and billing, and checks for GPS tampering controls, route adherence audits, and audit trail integrity. The report should confirm that trip ledgers, GPS logs, and billing entries reconcile, and that exceptions such as no-shows or diversions are captured and closed with a reason code.

Buyers can further validate relevance by asking the auditor or vendor to walk through a few real, de-identified cases end-to-end. For example, they can request a sample of night-shift trips for women employees, including manifests, driver credentials, route approval, SOS readiness, and closure of any deviations. If the vendor cannot produce this chain-of-custody on demand, the audit is unlikely to reflect the live EMS operating model.

Finally, the buyer should check how often audits are repeated and how findings are tracked. An audit that feeds into a continuous assurance loop, with corrective actions and re-testing, provides more real risk reduction than a one-time certification. Reports that tie findings to specific KPIs like OTP%, incident rates, and SLA breaches show tighter coupling between audit and daily EMS performance.

Before a large rollout, what proof should we ask for that grievance tickets actually get resolved—ticket aging, closure SLAs by shift, and what reference customers say about escalations?

B0520 Proof of grievance closure performance — In India corporate employee mobility services (EMS), what proof should HR and Facilities ask for to confirm grievance closure works in practice—like ticket aging distributions, closure SLA adherence by timeband, and reference customer feedback on escalations—before rolling out to thousands of employees?

In Indian employee mobility services, HR and Facility heads should demand hard, operations-grade evidence that grievance closure is real, repeatable, and auditable before scaling to thousands of employees. They should focus on data that proves how fast issues are detected, how reliably they are closed across timebands, and how employees rate the outcome after escalations.

They should first ask for a live or redacted view of the vendor’s command-center tooling and alert supervision layer. This includes evidence of real-time alerts for geofence violations, device tampering, overspeeding, SOS triggers, and no-show patterns, along with associated escalation workflows and closure tracking. They should verify that the Transport Command Centre or equivalent has 24/7 coverage, clear escalation matrices, and structured dashboards that show open tickets, pending actions, and closure SLAs.

They should evaluate historical ticket and escalation data across at least one or two reference accounts. This should include ticket aging distribution by severity, adherence to closure SLAs by timeband (day vs late-night), and exception-to-closure timelines for safety-related incidents. HR and Facilities should insist on audit-ready reports that map grievances to root-cause analysis, corrective actions, and recurrence trends.

They should also ask for reference customer feedback specifically on escalations and incident handling. This includes client testimonials and satisfaction surveys that highlight responsiveness, reliability during crises, and improvements in on-time performance and employee satisfaction after implementing the grievance and command-center model. Evidence of improved OTP, reduced complaints during adverse conditions, and high CSAT or user satisfaction scores are strong signals that grievance closure works in practice.

Finally, they should verify that the grievance and closure process is embedded into a broader governance and BCP framework. That means there are documented business continuity plans, safety and security protocols, women-centric safety measures, and periodic audits. These should all feed into dashboards and management reports that HR and Facility heads can use to monitor performance without relying solely on vendor assurances.

After go-live, what proof cadence should we expect—monthly KPI packs, quarterly audits, periodic checks—so we catch performance drops early and stay audit-ready?

B0524 Post-go-live proof cadence — In India corporate employee mobility services (EMS) post-purchase governance, what ongoing evidence cadence—monthly KPI delta packs, quarterly audits, and periodic reference check-ins—helps HR and Finance stay audit-ready and avoid being blindsided when performance quietly degrades after the first 90 days?

In corporate employee mobility services in India, HR and Finance stay audit-ready by enforcing a fixed evidence cadence that combines monthly KPI delta packs, quarterly deep-dive audits, and semi-annual reference or market check-ins. This cadence keeps reliability, safety, cost, and ESG trends visible before complaints or audits surface, and it creates a defensible paper trail when performance drifts after the first 90 days.

A monthly rhythm works best when vendors deliver standardized KPI “delta packs” rather than raw dumps. These packs typically include On‑Time Performance, exception latency, incident and safety logs, Trip Fill Ratio, dead mileage, Cost per Employee Trip, and basic EV/ESG metrics if relevant. HR uses this to link commute reliability to attendance and complaints. Finance uses it to reconcile billed trips with system data and to flag leakage or unexplained cost movement.

Quarterly, organizations benefit from a heavier governance cycle with structured route adherence and safety audits, sample billing and tariff verification, and compliance checks on driver and vehicle documentation. This audit layer catches slow degradation in routing quality, fatigue management, or women-safety protocol adherence that monthly snapshots may not reveal. It also gives Procurement and Internal Audit defensible evidence if contracts need penalty triggers or course-corrections.

On a slower cadence, HR and Finance should also run periodic external reference or benchmark check-ins. These can include independent customer satisfaction baselines, cross‑site comparisons, and limited external benchmarking against similar enterprises using end‑to‑end services. This protects against a single-vendor view, surfaces silent dissatisfaction, and gives CFOs a narrative if rebids or EV-transition changes are proposed.

During a pilot, how can Ops quickly check if the vendor’s ‘proof’ actually reduces day-to-day firefighting—like fewer escalations and manual follow-ups—without building heavy analytics?

B0525 Pilot measurement of operational drag — In India corporate employee mobility services (EMS), how can a Facilities/Transport manager measure whether “proof and references” translate into less operational drag—fewer escalations, lower manual follow-ups—during a pilot, without needing a full analytics rebuild?

In Indian corporate employee mobility, a Facilities or Transport manager can test whether a vendor’s “proof and references” actually reduce operational drag by running a tight pilot that tracks a few manual, operations-facing indicators before and after, without building a new analytics stack.

The most reliable signal set comes from the control-room workload and escalation noise. Operational drag reduces when night-shift calls drop, WhatsApp/SMS chasing reduces, and exception tickets close faster with less manual intervention. These patterns can be captured using simple logs, spreadsheets, and existing email or ticket trails rather than new dashboards.

A practical approach is to define a short pilot window, lock 4–6 low-friction metrics, and compare them against a baseline from a similar shift pattern. Facilities or Transport teams can count vendor references and case studies as “claims” but treat these pilot numbers as the real validation of those claims.

Key metrics the manager can track with minimal tooling are: - Number of transport-related calls to the control room per 100 trips. - Number of escalations to HR, Security, or senior leadership per week. - Manual roster or route changes per shift due to vendor-side failures. - Average time to close incidents or exceptions using existing ticket or email trails. - No-show or missed-pickup incidents per 100 trips, especially in night shifts. - GPS or app-failure workarounds where manual tracking or calling was required.

If the vendor’s “proof” is real, the pilot should show a visible drop in calls, escalations, and manual patchwork, even if core analytics or HRMS integrations remain unchanged during the test.

For our employee transport program, what proof should we ask for besides a presentation—like anonymized trip data and OTP improvements—so HR isn’t left defending the decision after a night-shift issue?

B0527 HR-ready proof beyond demos — In India corporate Employee Mobility Services (EMS) for shift-based employee transport, what specific proof artifacts should a CHRO require beyond vendor slideware—such as anonymized trip data packs, incident logs, and before/after on-time pickup (OTP) deltas—to feel confident the program will hold up during night-shift escalations and leadership scrutiny?

A CHRO who wants EMS decisions to withstand night-shift incidents and leadership scrutiny should insist on concrete proof packs across five areas. Each artifact must be exportable, auditable, and tied to shift-based operations, not just marketing narratives.

First, CHROs should require operational reliability evidence. This includes anonymized, time-banded OTP and Trip Adherence Rate reports by site, shift window, and gender split, with before/after deltas for any “optimization” claims. It should include dead mileage and Trip Fill Ratio data for pooled routes, plus exception latency reports showing how fast missed/late pickups were detected and closed. Case-study style summaries of challenging conditions, such as monsoon routing with quantified OTP and customer satisfaction uplift, strengthen this pack.

Second, CHROs should demand safety and compliance artifacts. These include redacted incident and near-miss logs covering SOS triggers, route deviations, and escort or women-first protocol breaches, with time-stamped escalation chains and closure notes. They should also see driver and fleet compliance packs, including documented KYC/PSV verification, background check steps, medical fitness, and structured training calendars, plus vehicle compliance checklists and pre-induction audit records. A documented night-shift SOP bundle that covers routing rules, escort logic, and rest-hour observance is critical.

Third, CHROs should ask for command-center and governance proof. This means sample dashboards or screenshots from the 24x7 command center showing live route tracking, geo-fencing alerts, and SLA breach views, together with escalation matrices and business continuity playbooks that cover cab shortages, technology failures, political strikes, and extreme weather. Evidence of periodic route adherence audits and management reports, such as indicative MIS or single-window dashboards, reinforces governance maturity.

Fourth, CHROs should require employee-experience and grievance closure evidence. This includes commute satisfaction or NPS survey outputs and methodology, complaint and ticket logs with age, severity, and closure SLA performance, and examples of women-centric safety protocols in action, such as dedicated fleets, safety cells, and POSH-linked driver training. Redacted employee app screenshots showing SOS capabilities, live tracking, and feedback loops can demonstrate that EX is embedded in the trip lifecycle.

Fifth, CHROs should insist on data integrity and auditability proof. This includes descriptions of how trip data, GPS logs, and incident records are stored, for how long, and in what format for audits, as well as confirmation of role-based access and tamper-evident audit trails. Demonstrable integration with HRMS or attendance systems, and the ability to produce exportable, investigator-ready bundles for a single route, date, and employee cohort, will increase confidence that any future investigation or leadership review can be handled with evidence instead of anecdote.

For executive/airport trips, how do we ask for KPI improvements in a way that’s credible and not cherry-picked—punctuality, cancellations, and complaint closure?

B0529 Interpreting before/after KPI deltas — In India corporate Corporate Car Rental (CRD) for executive and airport travel, what is a credible way to request and interpret before/after KPI deltas (e.g., pickup punctuality, cancellation rate, complaint closure time) so a Travel Desk and Admin team can distinguish genuine service improvement from cherry-picked reporting?

In corporate car rental for executives and airport travel in India, the most credible way to request and interpret before/after KPI deltas is to standardize definitions and time windows upfront, demand raw trip-level extracts aligned to Finance and HR systems, and compare performance across the entire eligible population rather than only “managed” or “priority” trips. This approach reduces cherry-picking and forces vendors to prove that improvements in pickup punctuality, cancellation rate, and complaint closure time are consistent, auditable, and finance-reconcilable.

A common failure mode is accepting percentage improvements on a subset of routes or VIP users. Travel Desk and Admin teams should insist that KPI baselines and post-change numbers are computed for all CRD trips in scope, over the same day-of-week and timeband mix, and explicitly tagged with inclusions and exclusions. They should also align reported trip counts and spend with Finance billing data to ensure that no adverse trips are silently dropped from the analysis.

A practical guardrail is to treat each KPI as a contract-grade metric. For pickup punctuality and cancellation rate, teams should request trip-level logs with timestamps, SLA thresholds, and exception codes, then validate random samples against GPS data or duty slips. For complaint closure time, they should demand ticket-level data with open/close timestamps and categorize issues by severity so that fast closure of minor issues does not mask slow resolution of serious complaints.

To distinguish genuine improvement from noise, Travel Desk and Admin can compare pre-and post-implementation KPIs over at least one full quarter each, segmented by city, vendor, and customer persona. They can then examine whether gains in on-time pickup coincide with side effects such as higher no-show charges or increased dead mileage, which would shift cost or risk elsewhere rather than delivering true service enhancement.

How do we make sure the vendor’s data pack ties back to billing—trip IDs, exceptions, cancellations—so Finance isn’t doing manual reconciliations every month?

B0533 Data pack ties to invoicing — In India enterprise ground transportation outsourcing for Employee Mobility Services (EMS), how can a CFO validate that a vendor’s anonymized data pack is reconciliable to invoice logic (trip IDs, route IDs, exceptions, cancellations) so Finance isn’t trapped in monthly manual reconciliation and audit risk?

In enterprise EMS outsourcing, a CFO can validate that a vendor’s anonymized data pack is reconciliable to invoice logic only if every billed rupee can be traced to a unique trip record, with explicit handling of exceptions, cancellations, and manual overrides. Finance gains control when trip-level telemetry, EMS process flow, and billing models share the same IDs, timestamps, and business rules, and those rules are documented and testable in a repeatable SOP.

A practical starting point is to insist on a canonical trip ledger. Each ride in Employee Mobility Services needs a single trip ID that appears identically in the driver app, employee app, command center dashboard, and billing system. Route IDs, roster batches, and vendor-allocated vehicles should be stable keys that link operational logs to commercial models such as per-km, trip-based, or per-seat billing. An anonymized data pack remains auditable when sensitive employee fields are masked but all technical identifiers and timestamps are preserved.

Finance leaders should require a clearly documented mapping between the EMS operation cycle and the billing pipeline. That mapping should show how booking, routing, dispatch, completion, no-show, cancellation, and exception events flow into chargeable or non-chargeable line items. A common failure mode is when exceptions are tracked in the command center or alert supervision system but never flow through to automated tariff mapping or centralized billing features, forcing manual intervention. Another failure mode is when route optimization or re-routing during monsoon or disruption changes trip geometry but does not update the commercial basis, creating unreconciled dead mileage.

To reduce monthly manual reconciliation, CFOs can define a small set of regression checks as part of vendor onboarding. These checks include verifying that the count of completed trips in the EMS operation cycle matches the count of billed trips, that total billed distance matches the sum of GPS or odometer distance within an agreed tolerance, and that cancellations and no-shows appear both in the operational logs and in credit notes or zero-value invoice lines. Finance should also test whether outcome-linked metrics like on-time performance, seat-fill, and SLA breaches can be independently recomputed from the anonymized pack and tied to any incentives or penalties in the contract.

When we call references, how do we validate that low complaints and fast closures are real—and based on logs, not hidden escalations?

B0537 Validating complaint and closure claims — In India enterprise Employee Mobility Services (EMS), how should an HR Operations manager structure a reference call to validate whether employee experience claims (low complaint volume, fast grievance closure) are backed by traceable logs and not suppressed escalations?

An HR Operations manager should structure an EMS reference call to move from generic satisfaction talk to specific, verifiable evidence about complaints and closure logs.

The most reliable calls follow a simple structure. The structure begins with clarifying what “good employee experience” means in that organization in terms of complaints per 1,000 trips, typical issues by shift band, and expected closure SLAs. The structure then moves into how the reference client captures, tags, and reports complaints across channels, and closes with questions about failure modes, suppressed escalations, and how issues are surfaced to leadership.

A practical way to run the call is to chunk it into four sections.

- Context and scale. The HR Operations manager should ask the reference to quantify daily trips, city mix, shift mix, and tenure with the vendor. This anchors any “low complaint” claim against actual EMS volume.

- Complaint intake and logging. The HR Operations manager should ask which channels employees actually use to complain, such as app, call center, email, or security desk. The manager should ask if all these channels feed into a single ticketing or command-center system, and whether every incident receives a unique ID and time-stamped log.

- Closure and governance. The HR Operations manager should ask what formal closure SLA exists for transport complaints by severity, and whether the vendor publishes weekly or monthly reports showing open, in-progress, and closed grievances. The manager should ask who reviews these in the client organization, such as HR, Security, or Transport, and how often.

- Suppression and escalation culture. The HR Operations manager should ask if employees bypass the vendor and go directly to HR or leadership for commute issues. The manager should then ask whether those direct escalations are back-entered into the same log, and whether any pattern of “quiet” dissatisfaction was discovered only through surveys or floor connects.

To validate traceability, the HR Operations manager should request that the reference describe one or two real night-shift incidents end-to-end from first complaint to closure. The manager should listen for whether timestamps, channel transitions, and actions taken are clear and consistent with a centralized command-center or NOC model. The manager should also ask if HR has self-service access to historic complaint logs and not only curated MIS sent by the vendor.

The HR Operations manager should interpret red flags carefully. Red flags include a reference that cannot quote even approximate closure SLAs, that relies only on informal WhatsApp or phone resolutions, or that reports “no complaints” despite high trip volume or challenging geographies. Signals of maturity include alignment with centralized command-center operations, presence of a documented escalation matrix, and linkages between complaint reports, HRMS integration, and user satisfaction or NPS dashboards.

What’s a reasonable minimum bar for third-party audits in mobility, and how do we check the audit scope is current and meaningful?

B0539 Minimum bar for third-party audits — In India corporate ground transportation vendor evaluation (EMS/CRD), what is a reasonable minimum bar for third-party audits (safety, compliance, security) and how should a buyer verify the audit scope isn’t superficial or outdated?

A reasonable minimum bar for third‑party audits in Indian corporate ground transportation is that vendors undergo independent, recurring audits that cover driver and fleet compliance, safety processes (especially women’s safety and night shifts), statutory adherence, data/security practices, and business continuity readiness, with traceable documentation that can be tied to daily operations and command‑center workflows. Buyers should verify that the audit scope is recent, evidence‑backed, and aligned to actual EMS/CRD operations, not just a one‑time or generic certification exercise.

A robust baseline usually includes continuous driver and fleet compliance checks, not only onboarding checks. Vendors should be able to show structured frameworks like detailed driver verification flows, periodic medical fitness and training records, and vehicle compliance and induction processes that reference fitness, documentation, and mechanical condition over time. Buyers should look for centralized compliance management processes with automated reminders, maker–checker controls, and vehicle/driver document repositories rather than static PDFs.